- How Do Voice Agents Create New Enterprise Security Risks?

- Enterprise Threat Model for Voice AI Systems (End-to-End)

- Voice AI Security Architecture for Enterprises: A Layered Blueprint

- Zero-Trust Architecture for Voice AI Systems

- AI Voice Agent Compliance & Security: From Regulation to Implementation

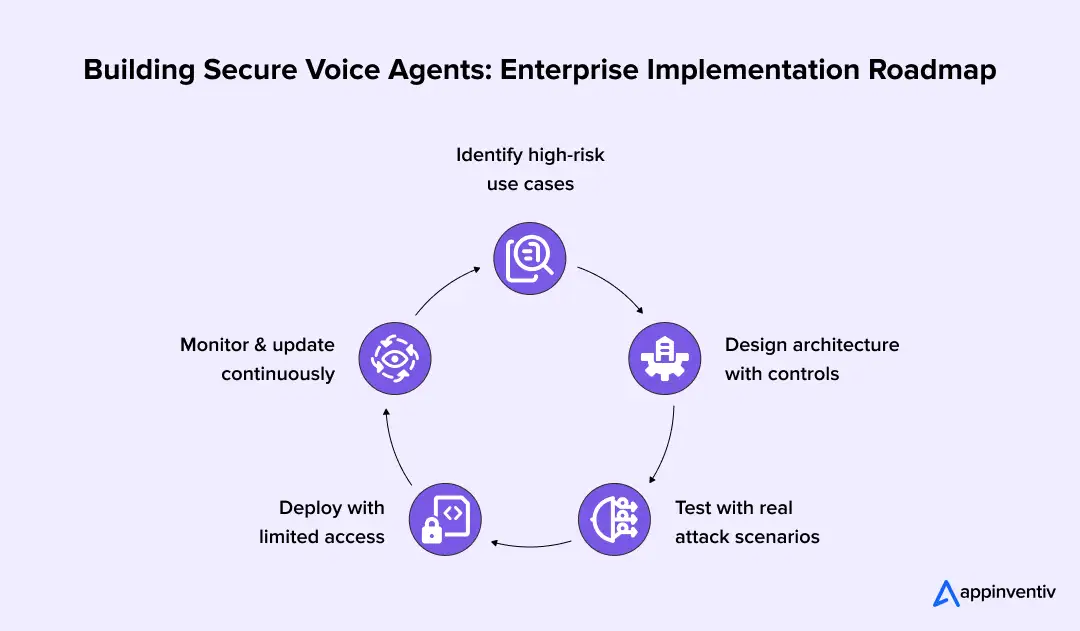

- Building Secure Voice Agents: Enterprise Implementation Roadmap

- Vendor Evaluation Checklist for Secure Voice AI Platforms

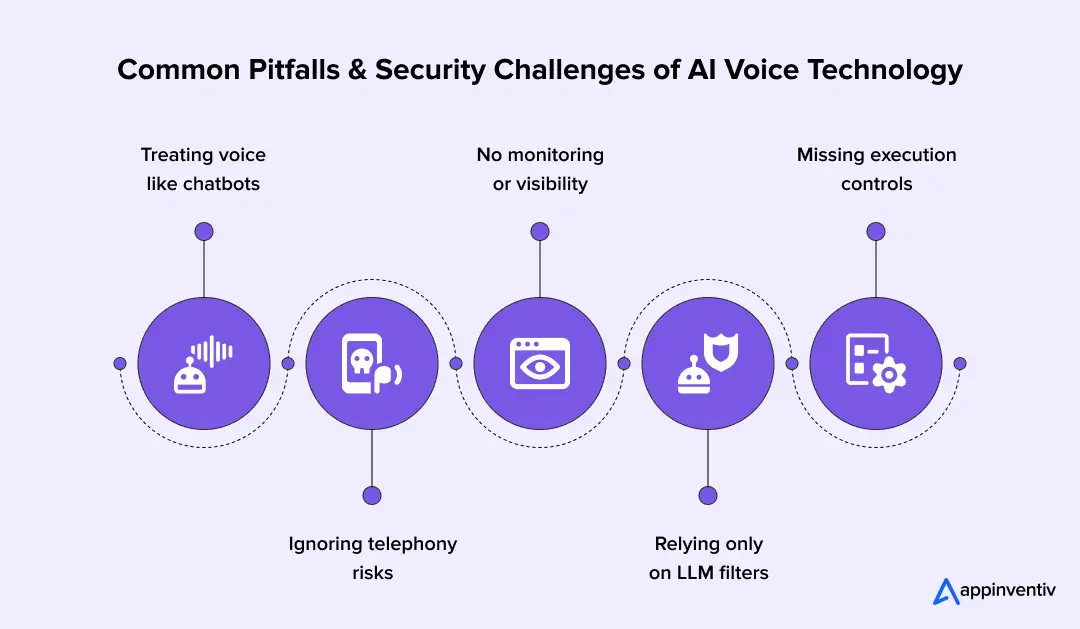

- Common Pitfalls & Security Challenges of AI Voice Technology

- Future of Voice Agent Security (2026–2030 Outlook)

- How Appinventiv Helps Enterprises Build Secure Voice AI Systems

- FAQs

Key takeaways:

- Voice agents now execute transactions and workflows, turning minor errors into direct financial, operational, and compliance risks.

- Security must exist across every layer, from audio input to execution, not as a final checkpoint.

- Enterprises need measurable benchmarks such as FAR, hallucination rate, and attack success rate to validate the actual security of their systems.

- Compliance is enforced through runtime controls, including PII masking, audit logs, and strict access governance.

- Secure voice AI requires architecture, monitoring, and governance working together, not isolated models or standalone guardrails.

Voice AI has moved past simple assistants. It now executes actions inside enterprise systems. A voice agent can approve a payment, update a patient record, or reset account credentials in real time.

Adoption is rising fast. Gartner reports that over 70 percent of enterprises are testing or deploying secure conversational AI systems. Data from Statista shows that voice-assisted eCommerce transactions have reached about $19.4 billion, marking a fourfold increase in just two years.

At the same time, synthetic voice fraud has grown sharply, with some reports showing deepfake and voice impersonation attacks rising by over 300 percent in recent years, and banks reporting measurable losses tied to these incidents.

This shift creates a new risk layer. A single misinterpreted command or manipulated audio input can trigger real business actions. In healthcare, that can affect clinical data. In finance, it can move money. In customer support, it can expose identity data.

Enterprises are deploying voice AI faster than they can secure it.

This blog breaks down how to close that gap. It covers AI voice agent security solutions, including layered architecture, measurable benchmarks, compliance controls, and a clear implementation model for production systems.

Most systems are already in production, but few have layered security controls in place.

How Do Voice Agents Create New Enterprise Security Risks?

Every voice agent security risk begins at the interaction layer. Voice agents do not just process language. They interpret intent and trigger actions across connected systems. This creates a direct path from spoken input to business execution.

From Chatbots to Action-Taking Agents

Traditional chatbots answered questions. They stayed within defined scripts and limited system access.

Unlike basic tools, AI agents in enterprise settings operate with deeper integration, connecting to backend systems like CRMs, EHRs, and payment gateways. They can read data, write updates, and trigger workflows in real time.

This shift moves risk from conversation errors to execution errors. If the system misinterprets a request, it does not just respond incorrectly. It performs the wrong action.

The 5-Layer Voice AI Attack Surface Model

Enterprise voice systems operate across multiple layers. Each layer introduces its own attack vector, which is why AI security for voice interfaces must be applied at every level.

- Audio Input Layer

Attackers can use cloned voices or crafted audio to bypass identity checks.

- Speech-to-Text Layer

ASR systems can be forced to misinterpret commands through noise injection or phonetic tricks.

- LLM Reasoning Layer

Spoken prompts can inject hidden instructions that alter system behavior or extract data.

- Text-to-Speech Layer

The system can generate unsafe or misleading responses if outputs are not validated.

- Telephony and API Layer

SIP-based systems and APIs can be exploited to intercept calls or trigger unauthorized actions.

Real Enterprise Risk Scenarios

These risks already appear in production systems across industries, making enterprise voice AI security a critical priority.

- Synthetic voices in banking have been used to approve fraudulent transfers, highlighting the need for AI voice fraud prevention solutions.

- In healthcare, transcription errors have altered patient records and treatment details.

- In contact centers, weak identity checks have exposed sensitive customer data, a risk that grows as AI agents in customer service take on more complex interactions.

Enterprise Threat Model for Voice AI Systems (End-to-End)

A voice agent processes input, interprets intent, and executes actions across systems. Each stage introduces a distinct risk. Effective voice AI risk management for the enterprise maps these risks across the full pipeline, from audio capture to system execution.

Key Attack Vectors

Voice systems face a mix of audio, model, and system-level threats.

- Voice cloning and impersonation: Attackers use synthetic speech to bypass voice-based authentication and gain access.

- Prompt injection through speech: Hidden instructions inside spoken input can alter system behavior or extract sensitive data.

- Adversarial audio inputs: Carefully crafted audio can distort transcription and trigger unintended commands.

- Data exfiltration through conversations: Attackers can extract sensitive information through repeated or structured queries.

- Model hallucination leading to incorrect execution: The system can generate incorrect outputs that result in incorrect actions or decisions. In complex queries, models can produce unsupported outputs in a noticeable percentage of cases if grounding is not enforced.

Mapping Threats Across the Voice Pipeline

Each layer in the voice pipeline introduces a specific threat that can impact system behavior and business outcomes.

| Layer | Threat | Impact | Example |
|---|---|---|---|
| Audio Input | Voice spoofing | Unauthorized access | Cloned voice passes authentication |
| Speech-to-Text | Misinterpretation | Incorrect command execution | “Transfer 15” read as “Transfer 50” |
| LLM Reasoning | Prompt injection | Data leakage or logic override | Hidden spoken instruction alters output |
| Decision Layer | Policy bypass | Unauthorized actions | System executes without validation |
| Output Layer | Unsafe response | Misinformation | Incorrect instructions to the user |
| Telephony/API | Call interception | System compromise | SIP exploit redirects call flow |

Risk Severity Classification Framework

Each voice agent security risk must be classified based on business impact.

| Risk | Impact |
|---|---|
| High Risk | |
| Medium Risk | |
| Low Risk | |

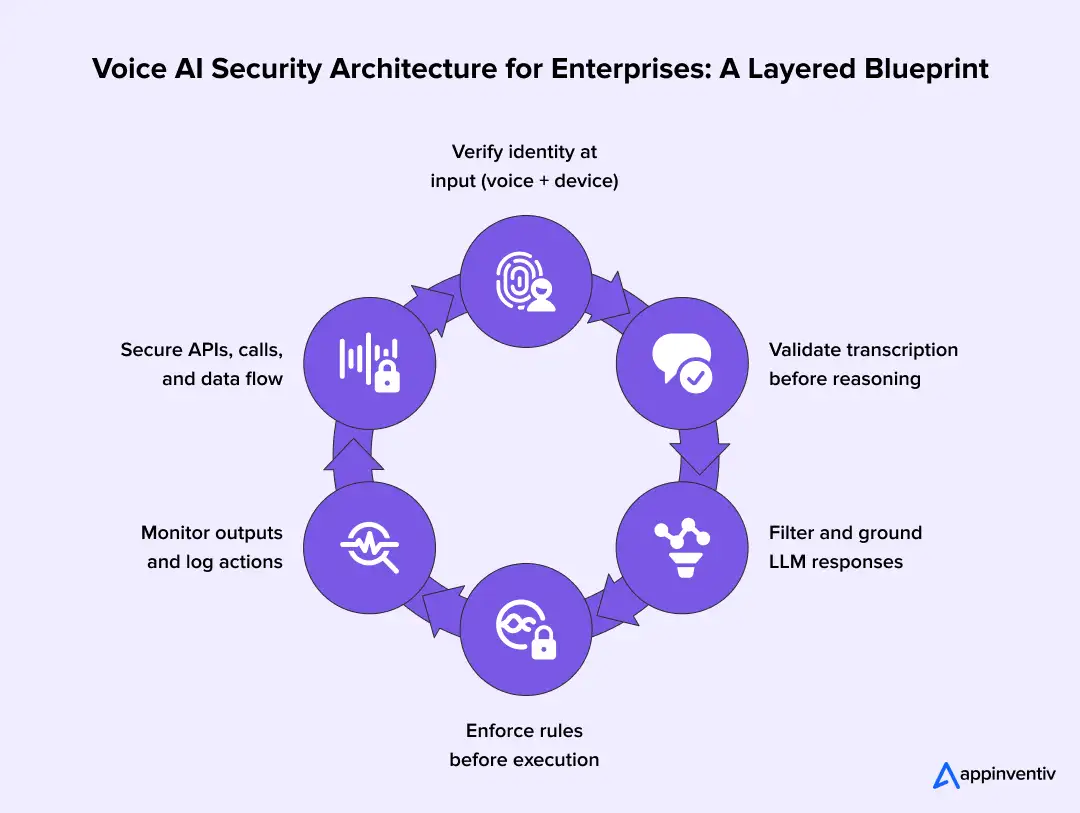

Voice AI Security Architecture for Enterprises: A Layered Blueprint

A voice agent does not fail in one place. It fails in steps. Audio enters, gets converted to text, then moves through reasoning, and ends in an action. Each step can be altered, misread, or exploited.

A sound voice AI security architecture needs control at every step. Not one gate at the start. Not one filter at the end. Each layer checks what it receives and decides whether to move forward.

Architecture Layers

Each layer handles a specific job. Each one blocks a different type of risk. Together, they form a controlled path from input to execution.

1. Input Security Layer

This is where the system first hears the user.

- Biometric voice authentication security compares speech patterns with stored voice profiles.

- Anti-spoofing models detect cloned or replayed audio

- Signal checks look for distortion, injected noise, or abnormal frequency shifts

- Device signals and session tokens are tied to the voice input for identity binding

If this layer fails, the system may accept a false identity.

2. Speech Processing Layer (ASR Security)

This layer turns speech into text.

- Confidence scores flag uncertain words or phrases

- A second ASR pass can confirm or challenge the first result

- Phoneme alignment helps catch mismatched sounds and words

A small error here can change the meaning. “Fifteen” can become “fifty.” In noisy or uncontrolled environments, error rates can increase significantly without proper validation. That is enough to trigger the wrong action.
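
The confidence and second-pass checks above can be sketched roughly as follows. This is a minimal illustration under stated assumptions: `AsrResult`, `needs_confirmation`, and the 0.85 threshold are hypothetical names and values, and the two results stand in for the output of whichever ASR engines a team actually runs.

```python
from dataclasses import dataclass


@dataclass
class AsrResult:
    """Hypothetical container for one ASR pass: words plus per-word confidence."""
    words: list[str]
    confidences: list[float]


def needs_confirmation(primary: AsrResult, secondary: AsrResult,
                       min_confidence: float = 0.85) -> bool:
    """Return True when the agent should ask the caller to confirm.

    Confirmation is requested if the two passes disagree on any word,
    or if any word in the primary pass falls below the assumed threshold.
    """
    if primary.words != secondary.words:
        return True
    return any(c < min_confidence for c in primary.confidences)


# "fifteen" vs "fifty": the passes disagree, so the agent confirms before acting.
first = AsrResult(["transfer", "fifteen"], [0.97, 0.91])
second = AsrResult(["transfer", "fifty"], [0.96, 0.88])
print(needs_confirmation(first, second))
```

The design choice here is to fail toward asking: any disagreement or low-confidence word pauses the flow instead of letting the system guess.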

3. LLM Security Layer

This layer interprets the request. How this layer is built matters as much as how it is secured, which is why understanding enterprise LLM development is key for teams designing voice pipelines.

- Input filters scan for hidden or misleading instructions

- Guardrails limit what the model can generate or trigger

- Retrieved data is checked against trusted sources before use

- Output grounding checks match responses with source data before execution

- Session and tenant isolation prevent cross-user or cross-system data leakage

This is where intent is formed. If this layer is weak, the system can be steered.
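
One narrow form of output grounding can be sketched as below: every number the model mentions must appear in trusted source data before anything is executed. The function name `ungrounded_numbers` and the sample values are assumptions for illustration, and a real grounding check would cover far more than numeric tokens.

```python
import re


def ungrounded_numbers(response: str, source_values: set[str]) -> list[str]:
    """Return numeric tokens in the response that are absent from trusted source data."""
    numbers = re.findall(r"\d+(?:\.\d+)?", response)
    return [n for n in numbers if n not in source_values]


# Hypothetical values pulled from a system of record for this session.
trusted = {"1250.00", "4417"}
reply = "Your balance is 1250.00 and I will transfer 500 now."

unsupported = ungrounded_numbers(reply, trusted)
if unsupported:
    # The amount 500 never came from verified data, so the action is held.
    print("hold for validation:", unsupported)
```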

4. Decision and Action Layer

This is where the system connects to real operations.

- Voice agent authentication and authorization follow role-based and attribute-based controls.

- High-risk actions require step-up verification, such as OTP or secondary approval

- Transaction rules validate amount, type, and destination before execution

- Manual approval is triggered for sensitive actions

- Access is enforced using multi-factor authentication, SSO, and session-level identity validation

If something goes wrong here, the impact is immediate and real.
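
The controls listed for this layer could be combined into a single decision function along the following lines. This is a sketch, not a reference design: the roles, permission sets, and the 500 and 10,000 limits are hypothetical, and a production system would load them from a policy store.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    STEP_UP = "step_up"              # e.g. OTP or secondary approval
    MANUAL_REVIEW = "manual_review"
    DENY = "deny"


@dataclass(frozen=True)
class ActionRequest:
    role: str
    action: str
    amount: float
    destination_known: bool


# Hypothetical policy values for illustration only.
ROLE_PERMISSIONS = {"customer": {"transfer", "balance"}, "guest": {"balance"}}
STEP_UP_LIMIT = 500.0
MANUAL_REVIEW_LIMIT = 10_000.0


def decide(req: ActionRequest) -> Decision:
    """Apply the role check first, then transaction rules, strictest outcome first."""
    if req.action not in ROLE_PERMISSIONS.get(req.role, set()):
        return Decision.DENY
    if req.action != "transfer":
        return Decision.ALLOW
    if req.amount >= MANUAL_REVIEW_LIMIT:
        return Decision.MANUAL_REVIEW
    if req.amount >= STEP_UP_LIMIT or not req.destination_known:
        return Decision.STEP_UP
    return Decision.ALLOW


print(decide(ActionRequest("customer", "transfer", 750.0, True)))
```

Keeping this logic outside the model means a steered or mistaken intent still has to pass explicit rules before it becomes an action.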

5. Output Security Layer (TTS)

This layer converts responses back into speech.

- Output filters block unsafe or misleading content

- Responses are checked against expected formats and allowed data

- End-to-end logs capture input, decision path, and final response for audit review

This step controls what the user hears and what gets recorded.
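
One way to make such logs tamper-evident is to chain each entry to the previous one with a hash. The sketch below assumes an in-memory list and hypothetical helper names (`append_entry`, `verify_chain`); it shows the chaining idea only and is not a substitute for write-once storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed starting value for an empty log


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with a canonical form of the event."""
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> dict:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {"event": event, "prev_hash": prev_hash, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"stage": "input", "transcript": "transfer fifteen dollars"})
append_entry(audit_log, {"stage": "decision", "outcome": "step_up"})
append_entry(audit_log, {"stage": "output", "response": "Please confirm with your code."})
print(verify_chain(audit_log))
```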

6. Infrastructure and Telephony Layer

This layer connects everything.

- SIP traffic is secured using authentication and encrypted protocols such as SRTP

- APIs use secure tokens and strict access rules

- End-to-end encryption across the voice AI pipeline covers data during transfer and storage

- Rate limits and call throttling prevent repeated abuse attempts

- Call flows are monitored for unusual routing or injection patterns

- Voice data is protected using encrypted transport (TLS, SRTP) and secure storage standards such as AES-256

Attacks at this layer do not change the model. They change how the system is reached or controlled.
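
Rate limiting and call throttling can be illustrated with a small sliding-window limiter. The class name, the per-caller key, and the limits used here are assumptions for the example; timestamps are passed in explicitly so the behavior is easy to test.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_calls per caller within any window_seconds span."""

    def __init__(self, max_calls: int, window_seconds: float) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._calls: dict[str, deque[float]] = defaultdict(deque)

    def allow(self, caller_id: str, now: float) -> bool:
        calls = self._calls[caller_id]
        # Drop timestamps that have left the window.
        while calls and now - calls[0] >= self.window_seconds:
            calls.popleft()
        if len(calls) >= self.max_calls:
            return False
        calls.append(now)
        return True


# Hypothetical limit: three call attempts per caller per 60 seconds.
limiter = SlidingWindowLimiter(max_calls=3, window_seconds=60.0)
attempts = [limiter.allow("caller-1", t) for t in (0.0, 1.0, 2.0, 3.0)]
print(attempts)
```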

Each layer reduces risk on its own. Together, they prevent errors from becoming actions.

Zero-Trust Architecture for Voice AI Systems

Voice agents don’t just answer questions anymore. They take action. A spoken request can trigger a payment, update a record, or expose sensitive data. That’s why traditional “authenticate once and trust” models fall apart here.

Zero-trust in voice AI works differently. Nothing is trusted by default. Every step in the pipeline must continuously verify three things before moving forward:

- Who is speaking?

- What are they asking?

- Is that action allowed right now?

These checks repeat across the system, from audio input to final execution. If something looks off at any stage, the flow stops or gets revalidated.

What Zero-Trust Means in Practice

In voice systems, risk doesn’t sit in one place. It moves with the interaction. A user might sound genuine but issue an unusual request. Or the request might be valid, but the transcription is slightly wrong.

Zero trust handles this by replacing one-time validation with continuous verification.

- Identity is checked more than once

- Intent is validated before any action

- Permissions are enforced in real time

The system doesn’t assume anything. It keeps checking as the interaction progresses.

Core Principles Behind It

A few rules define how zero-trust voice systems behave:

- Continuous identity checks: Voice, device, and session signals are verified throughout, especially before sensitive actions.

- Intent before execution: The system pauses on unclear or risky requests instead of acting immediately.

- Policy-led decisions: Every action is matched against rules based on role, risk level, and context.

- Separation of roles: The model interprets. A control layer decides. Execution is handled separately.

- Context awareness: Decisions consider behavior, session history, and device signals, not just the command.

How It’s Implemented Across the Pipeline

Zero trust is enforced step by step, not as a single control.

- Identity layer: Voice biometrics, anti-spoofing checks, and device signals work together to confirm the speaker. High-risk actions trigger additional verification.

- Speech layer (ASR validation): Confidence scores and multi-pass checks reduce transcription errors. If something is unclear, the system asks instead of guessing.

- Reasoning layer (LLM controls): Inputs are filtered for hidden instructions. Outputs are grounded in verified data. The model cannot directly trigger actions.

- Decision layer: Access rules (RBAC/ABAC), transaction limits, and step-up authentication decide whether a request should proceed.

- Execution layer: APIs are gated, actions are validated, and suspicious behavior can pause or stop execution.

- Monitoring layer: Everything is logged and tracked. Anomalies, drift, and unusual patterns are flagged in real time.
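
The step-by-step enforcement described above can be sketched as an ordered chain of checks that halts on the first failure. The check functions, context keys, and thresholds (0.9 and 0.85) are hypothetical placeholders for real biometric, ASR, and policy services.

```python
from typing import Callable, NamedTuple


class CheckResult(NamedTuple):
    passed: bool
    stage: str


Check = Callable[[dict], CheckResult]


def identity_check(ctx: dict) -> CheckResult:
    ok = ctx.get("voice_match", 0.0) >= 0.9 and ctx.get("device_trusted", False)
    return CheckResult(ok, "identity")


def intent_check(ctx: dict) -> CheckResult:
    return CheckResult(ctx.get("asr_confidence", 0.0) >= 0.85, "intent")


def permission_check(ctx: dict) -> CheckResult:
    return CheckResult(ctx.get("action") in ctx.get("allowed_actions", ()), "permission")


def run_pipeline(ctx: dict, checks: list[Check]) -> tuple[bool, str]:
    """Run checks in order and stop at the first failure, so nothing executes on partial trust."""
    for check in checks:
        result = check(ctx)
        if not result.passed:
            return False, f"halted at {result.stage}"
    return True, "all checks passed"


CHECKS = [identity_check, intent_check, permission_check]
session = {
    "voice_match": 0.95,
    "device_trusted": True,
    "asr_confidence": 0.92,
    "action": "transfer",
    "allowed_actions": {"balance"},
}
print(run_pipeline(session, CHECKS))
```

In this example the speaker and transcript both pass, but the requested action is outside the session's permissions, so the flow stops before execution.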

Zero Trust vs Traditional Voice Security

Zero-trust voice security continuously verifies identity, intent, and permissions at every step, unlike traditional models that rely on one-time authentication and implicit trust.

| Approach | Where It Fails | Zero-Trust Fix |
|---|---|---|
| One-time authentication | A spoofed voice can pass | Identity checked continuously |
| Model-only guardrails | No control over actions | Validation before execution |
| Perimeter security | Assumes internal trust | No implicit trust anywhere |
| Static rules | Break under new threats | Context-aware decisions |

Why This Matters

Voice systems operate in messy, real-world conditions. Background noise, accents, phrasing changes, even synthetic audio, all of it affects how the system behaves.

Without zero trust:

- A cloned voice can pass as real

- A small transcription error can trigger the wrong action

- A crafted prompt can override system logic

With zero trust:

- Every step is checked before impact

- Actions are controlled, not assumed

- The system stays reliable even under pressure

For enterprise voice AI, this isn’t an upgrade. It’s the baseline required to safely let systems act, not just respond.

Security Benchmarks for Voice Agents: What Enterprises Should Measure

Most teams say their voice system is ‘secure.’ Few can prove it. AI security for voice assistants shows up in numbers, not claims. If you cannot measure failure, you cannot control it.

A production system should track identity errors, model behavior, and execution risk. These signals tell you where the system breaks under pressure.

Core Security KPIs

These metrics focus on what actually fails in real deployments.

False Acceptance Rate (FAR)

How often does a system let the wrong speaker pass voice authentication? In banking flows, even a small increase can expose accounts.

False Rejection Rate (FRR)

How often does a valid user get blocked? High FRR leads to fallback flows, like OTP or human escalation.

Attack Success Rate

How many test attacks get through? This includes replay attacks, synthetic voice inputs, and injected commands.

Hallucination Rate

How often does the model produce an answer that is not backed by source data? In action systems, this can trigger wrong API calls.

Response Integrity Score

Checks if the final output matches verified data before execution. This is often tied to grounding checks in RAG pipelines.
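
Most of these KPIs reduce to simple ratios over a labelled evaluation run. The sketch below assumes hypothetical tallies (`EvalCounts`) and made-up numbers; the response integrity score is left out because the article does not define how it is scored.

```python
from dataclasses import dataclass


@dataclass
class EvalCounts:
    """Hypothetical tallies from a labelled evaluation run."""
    impostor_attempts: int
    impostor_accepted: int
    genuine_attempts: int
    genuine_rejected: int
    attacks_attempted: int
    attacks_succeeded: int
    responses_checked: int
    responses_unsupported: int


def _ratio(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when nothing was measured."""
    return numerator / denominator if denominator else 0.0


def security_kpis(c: EvalCounts) -> dict[str, float]:
    return {
        "far": _ratio(c.impostor_accepted, c.impostor_attempts),
        "frr": _ratio(c.genuine_rejected, c.genuine_attempts),
        "attack_success_rate": _ratio(c.attacks_succeeded, c.attacks_attempted),
        "hallucination_rate": _ratio(c.responses_unsupported, c.responses_checked),
    }


counts = EvalCounts(
    impostor_attempts=1000, impostor_accepted=3,
    genuine_attempts=500, genuine_rejected=20,
    attacks_attempted=200, attacks_succeeded=4,
    responses_checked=400, responses_unsupported=10,
)
print(security_kpis(counts))
```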

Operational Benchmarks

These show how the system behaves in live traffic.

Latency Versus Validation Depth

Every check adds a delay. Voice systems usually target sub-300-millisecond response windows, even with validation in place. High-performing systems also keep false acceptance rates below 1 percent and rejection rates within a controlled range to balance security and usability.

Threat Detection Window

Time taken to detect and flag abnormal patterns, such as repeated failed voice matches or unusual command sequences.

Drift Thresholds

Changes in ASR accuracy or model responses over time. Teams track word error rate and intent deviation across sessions.
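
Word error rate is conventionally the word-level edit distance between a reference transcript and the ASR output, divided by the number of reference words. A minimal sketch follows; the drift tolerance of 0.05 and the function name `drift_exceeded` are assumptions, not recommended values.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance (substitutions, deletions, insertions) over reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the reference prefix so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


def drift_exceeded(baseline_wer: float, current_wer: float, tolerance: float = 0.05) -> bool:
    """Flag drift when WER has risen above the baseline by more than the tolerance."""
    return current_wer - baseline_wer > tolerance


wer = word_error_rate("transfer fifteen dollars", "transfer fifty dollars")
print(round(wer, 3), drift_exceeded(baseline_wer=0.08, current_wer=wer))
```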

AI Red Teaming and Testing Frameworks

Voice systems need continuous testing, not one-time checks.

- Adversarial audio testing: Injects noise, hidden frequencies, or crafted phonemes to test ASR stability.

- Voice prompt fuzzing: Feeds varied spoken inputs to expose how the model handles edge cases and hidden instructions.

- Continuous evaluation pipelines: Automated tests run against live systems. These pipelines track regression in accuracy, security, and response behavior.

Voice AI Security Maturity Levels

This helps teams understand where they stand, much like a formal AI maturity assessment that maps capability against readiness.

- Level 1: Basic controls

Voice authentication, simple filters, and API access checks.

- Level 2: Monitored systems

Logs, alerts, and basic anomaly detection tied to usage patterns.

- Level 3: Adaptive controls

Real-time detection with dynamic response, like blocking suspicious sessions.

- Level 4: Autonomous defense

Systems adjust thresholds, block threats, and retrain detection models based on live signals.

Features do not define a secure system. Its performance under stress defines it.

AI Voice Agent Compliance & Security: From Regulation to Implementation

Most voice AI projects fail audits for one reason: the system works, but the controls are not mapped to the regulation. The cost of that failure is high, with data breaches in regulated industries often running into millions per incident. Compliance is not a document. It is how the system handles data at every step.

Voice systems process biometric, personal, and transactional data in a single flow. Each is subject to different regulatory requirements. The system must enforce these requirements during capture, processing, storage, and retrieval.

Key Global Regulations Impacting Voice AI

Enterprises must align voice systems with multiple regulatory frameworks. Each one targets a different risk.

- GDPR

Covers personal data in the EU. Voice recordings, transcripts, and derived data all fall under its scope.

- HIPAA

Applies to healthcare data in the US. Voice interactions that include patient information must follow strict access and audit controls.

- SOC 2

Focuses on system controls. It requires logging, monitoring, and secure handling of data across services.

- ISO 27001

Defines how information security systems are managed. It applies to infrastructure, access control, and risk management.

- PCI-DSS

Covers payment data. Voice systems handling card details must mask, tokenize, or avoid storing sensitive fields.

Compliance Mapping to Technical Controls

Regulations define requirements. Systems must translate them into enforceable controls.

| Regulation | Requirement | Technical Implementation |
|---|---|---|
| GDPR | Data minimization | Real-time PII redaction during transcription |
| GDPR | Right to erasure | Configurable data deletion workflows |
| HIPAA | Data security | End-to-end encryption and access logging |
| SOC 2 | Auditability | Immutable logs for all interactions |
| ISO 27001 | Access control | Role-based and attribute-based permissions |
| PCI-DSS | Payment protection | Tokenization and audio masking for card data |

This mapping ensures that compliance is enforced during runtime, not just documented.
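
As one example of a runtime control, card numbers can be masked in a transcript before it is logged. The sketch below is illustrative only: the regular expression, the Luhn filter, and the `[CARD REDACTED]` marker are assumptions, and it will not catch numbers that the ASR transcribes as words.

```python
import re

# 13 to 19 digits, optionally separated by single spaces or hyphens (assumed format).
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Standard Luhn checksum, used here to reduce false positives."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def redact_cards(transcript: str) -> str:
    """Replace digit runs that pass the Luhn check with a fixed marker."""
    def _mask(match: re.Match) -> str:
        digits = re.sub(r"\D", "", match.group())
        return "[CARD REDACTED]" if luhn_valid(digits) else match.group()

    return CARD_PATTERN.sub(_mask, transcript)


# 4111 1111 1111 1111 is a widely used test number, not a real card.
print(redact_cards("my card is 4111 1111 1111 1111 thanks"))
```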

Data Governance in Voice Systems

Data privacy fundamentals for voice agent deployments depend on how data moves through the system.

- Data lineage tracking

Tracks how voice data flows from input to storage. Each transformation must be recorded.

- Consent management

Users must approve recording and processing. Consent must be stored and linked to each session.

- Retention policies

Voice data should not be stored longer than required. Systems must support automated deletion in accordance with policy.

- Sensitive data masking and redaction controls

Sensitive personal data, such as financial details or biometric signals, is masked or redacted before storage or reuse.

A compliant system does not rely on policy documents. It enforces rules inside the pipeline, which is what purpose-built compliance management software is designed to support.
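
Retention and consent rules of this kind could be enforced by a scheduled job along these lines. The retention windows, record fields, and the choice to treat records without linked consent as due for deletion are all assumptions made for the example; actual values come from legal and compliance policy.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per data type.
RETENTION = {
    "audio": timedelta(days=30),
    "transcript": timedelta(days=90),
}


def records_to_delete(records: list[dict], now: datetime) -> list[str]:
    """Return ids of records that are past retention or lack a linked consent."""
    due = []
    for record in records:
        age = now - record["created_at"]
        if record.get("consent_id") is None or age > RETENTION[record["kind"]]:
            due.append(record["id"])
    return due


now = datetime(2026, 3, 1, tzinfo=timezone.utc)
records = [
    {"id": "a1", "kind": "audio", "created_at": now - timedelta(days=45), "consent_id": "c-9"},
    {"id": "t1", "kind": "transcript", "created_at": now - timedelta(days=45), "consent_id": "c-9"},
    {"id": "a2", "kind": "audio", "created_at": now - timedelta(days=2), "consent_id": None},
]
print(records_to_delete(records, now))
```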

Building Secure Voice Agents: Enterprise Implementation Roadmap

Most failures happen after deployment, not before. As more enterprises adopt AI-driven systems, the gap between deployment speed and security readiness continues to widen. Teams build a working system, then try to secure it later. That approach creates gaps. Security must be built into each stage, from the first use case to live operations.

Phase 1: Risk Assessment and Use Case Prioritization

Start with where the system can cause real impact.

- Identify workflows that trigger financial actions, data access, or system changes

- Map which systems the voice agent will connect to, such as CRM, EHR, or payment gateways

- Classify each voice agent security risk by use case before development begins.

High-risk flows need stricter controls from day one.

Phase 2: Architecture Design and Security Integration

Design a safety framework for AI voice agents with security controls built into each layer.

- Define identity checks across voice, device, and session

- Apply role-based and attribute-based access rules for all actions

- Plan validation steps before execution, not after

- Set boundaries for what the system can and cannot trigger

This stage decides how much control the system will have in production. Teams that invest in professional AI agent development services get these boundaries defined before a single line runs in production.

Phase 3: Testing and Validation

Test the system under failure conditions, not just normal usage.

- Run red team exercises with spoofed audio and injected commands

- Simulate edge cases such as unclear speech, background noise, and overlapping inputs

- Validate system behavior across different accents, speeds, and environments

The goal is to find where the system breaks before users do.

Phase 4: Deployment with Guardrails

Do not move from testing to full scale in one step.

- Start with a limited rollout and restricted actions

- Monitor identity failures, transcription errors, and execution logs

- Add rate limits and fallback paths, such as human escalation

Early deployment should focus on control, not scale.

Phase 5: Continuous Governance

Security does not stop after release. The system will change over time.

- Track drift in transcription accuracy and model decisions

- Review audit logs for unusual patterns or repeated failures

- Run periodic compliance checks against active workflows

- Update policies as new risks appear

A secure voice system is continuously observed and adjusted.

Without continuous validation and monitoring, issues surface only when the system is already in use.

Vendor Evaluation Checklist for Secure Voice AI Platforms

A demo can sound convincing. Real AI voice agent security solutions show how they behave under pressure. The difference appears in logs, controls, and how the platform handles failure.

Critical Questions to Ask Vendors

Ask how the system behaves step by step, not what features it claims to have.

- Walk me through a single request. From audio input to final action, where do you validate identity, intent, and permission?

- What if the transcription is uncertain or partially wrong? Does the system pause, confirm, or proceed?

- If a spoken prompt carries hidden instructions, where is it detected and stopped?

- Before an API call is made, what checks run? Is there a rule engine between the model and execution?

- How do you tie a voice session to a real user? Is voice used alone, or combined with device, session, or OTP checks?

- Can you show a full trace for one interaction, including audio, transcript, model output, and action taken?

- If someone makes repeated requests quickly or changes patterns, how does the system respond?

- How is sensitive data handled during a live call? What gets masked, logged, or stored?

The answers should describe actual system behavior, not general design claims.

Must-Have Capabilities

A production system should make its decisions visible and traceable.

- Clear decision paths that show why an action was taken

- Live dashboards that track identity checks, errors, and actions

- Built-in controls for masking data and restricting access

- Access rules tied to user role and context

- Full trace from input to execution for every session

Teams that work with Appinventiv often expect these controls to be part of the system design, not added after deployment.

Build vs Buy vs Hybrid Decision Framework

The right model depends on control needs, internal capability, and time to deployment, all of which are covered in depth in this build vs buy guide.

| Approach | Control Level | Speed | When It Fits |
|---|---|---|---|
| Build | Full control over models, data, and infrastructure | Slower | Regulated environments with strict data control |
| Buy | Limited control, vendor-managed systems | Faster | Standard use cases with lower risk exposure |
| Hybrid | Shared control between internal systems and vendor platforms | Balanced | Enterprises that need customization with faster rollout |

Many enterprises choose a hybrid model. It allows control over sensitive workflows while using proven components for speech and model layers.

This is where teams often engage partners, such as Appinventiv, to design and implement secure architectures that align with internal systems, compliance requirements, and long-term scalability.

Choosing the right approach is only part of the equation. What matters more is how these systems perform in real-world environments where decisions are executed, not just suggested.

AI Agents Driving Real-Time Decisions

Appinventiv built an AI-powered business consultant platform for MyExec that moved from static analysis to real-time decision support.

- Reduced manual analysis effort by automating business data interpretation

- Enabled faster decision-making through real-time AI-driven insights

- Built a multi-agent system to process documents and generate structured recommendations

- Replaced traditional consulting dependency with a scalable AI-driven model

This shows how AI agents can operate as decision systems with control, traceability, and enterprise-grade reliability.

Common Pitfalls & Security Challenges of AI Voice Technology

Most issues in voice AI do not come from the model alone. Building effective AI voice agent security solutions starts with the system’s design and operation. The same mistakes appear across industries, and each has a clear fix.

Treating Voice AI Like Chatbots

Teams often reuse chatbot logic for voice systems, especially when expanding AI agents in retail or other high-volume consumer environments. That approach fails because voice agents trigger actions, not just responses. Design for execution from the start. Add validation steps before any action. Separate response generation from action approval so the system does not act on raw intent.

Ignoring Telephony Layer Security

Many deployments focus on models and ignore the call layer. Attackers target SIP routing, call forwarding, and session hijacking. Secure call flows with authentication and encryption. Monitor call patterns for unusual routing or repeated attempts. Apply rate limits to block automated abuse.

Lack Of Monitoring

Some systems go live without visibility into their behavior. In many cases, issues are only detected after repeated failures or unusual usage patterns appear in logs. Errors and misuse go unnoticed until they cause damage. Track every step of the interaction. Log audio input, transcription, model output, and final action. Set alerts for failed identity checks, unusual patterns, and high-risk actions.

Over-Reliance On LLM Guardrails Alone

Guardrails help, but they do not control execution. A filtered response does not stop a bad action if the system bypasses checks. Place a control layer between the model and execution, an approach that sits at the heart of AI governance guardrails for enterprise deployments. Validate intent, enforce access rules, and require approval for sensitive actions. Treat the model as one component, not the final authority.

Each of these issues appears simple. In production, they lead to real loss or exposure if left unaddressed.

Weak validation layers and missing controls create direct execution risks in production systems.

Future of Voice Agent Security (2026–2030 Outlook)

Voice systems are moving deeper into direct execution. They are no longer limited to answering queries. They approve actions, update records, and trigger transactions. This shift will push security closer to the point where decisions are made.

AI-Driven Threat Detection

Systems will monitor voice patterns, command flows, and session behavior in real time. Detection will rely less on static rules and more on models that learn normal behavior and flag deviations during the interaction.

Synthetic Identity Detection

Voice alone will not be enough to verify a user. Systems will combine voice signals with device data, session history, and usage patterns. Identity will depend on multiple signals working together.

Autonomous Security Systems

Manual review will not keep up with real-time interactions. Systems will block, pause, or escalate actions as they happen, opening new ground for AI agent business ideas built around autonomous security and real-time decision making. High-risk actions will trigger additional checks without delay.

Regulatory Tightening

Voice data will be subject to stricter controls, especially when biometric data is involved. Systems will need clear consent tracking, detailed audit logs, and defined data retention rules.

With over a decade of experience building enterprise-grade digital systems, Appinventiv sees these shifts already taking shape in live deployments. Teams are moving toward systems that can detect, decide, and act on security signals in real time, without slowing down the user experience.

Voice AI’s next phase will not be defined by capability alone. It will be defined by how safely those capabilities are executed.

How Appinventiv Helps Enterprises Build Secure Voice AI Systems

Voice AI can trigger real actions. That makes security a design requirement, not a later fix. As a specialized AI development company, Appinventiv builds voice systems with control, traceability, and compliance built into each layer.

How Appinventiv approaches secure voice AI

- Governance-first design with clear separation between intent, validation, and execution

- Control layers are placed before every action, not after

- Identity checks that combine voice, device, and session context

- Built-in compliance controls such as PII masking, audit logs, and access rules

- End-to-end traceability from audio input to final system action

Execution backed by experience

- 100+ autonomous AI agents deployed across enterprise environments

- 200+ data scientists and AI engineers working on production systems

- 150+ custom AI models trained and deployed

- Experience across 35+ industries with varied regulatory requirements

Measured business impact

- Up to 50 percent reduction in manual processes

- Over 90 percent task accuracy in controlled workflows

- Systems designed to scale up to 2x without losing control or visibility

Appinventiv builds systems that act with control, combining production experience with measurable outcomes across enterprise deployments. Because voice agents act directly on enterprise systems, security must be built into every operation from the start.

FAQs

Q. Can attackers extract private data from voice AI models?

A. Yes, if controls are weak. Attackers repeat questions, change phrasing, or hide instructions in speech. If the system does not check intent or limit access, data can leak. Strong access rules, output checks, and session limits reduce this risk.

Q. How can casual conversation lead to sensitive data leaks in voice AI?

A. A user may ask seemingly harmless questions. Over a few turns, those questions can reveal patterns or partial data. If responses are not filtered, small pieces can add up. Masking sensitive fields and limiting what each session can access helps prevent this.
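The two controls named in this answer can be sketched as follows. The regular expressions cover only two illustrative patterns (a US-style Social Security number and a 13 to 16 digit card number), and `mask_sensitive` and `SessionGuard` are hypothetical names. Production systems typically rely on a dedicated PII detection service, not a pair of hand-written patterns.

```python
import re

# Illustrative patterns only; real deployments need far broader coverage.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def mask_sensitive(text: str) -> str:
    """Replace matched sensitive values with placeholder tokens."""
    text = SSN_RE.sub("[SSN]", text)
    text = CARD_RE.sub("[CARD]", text)
    return text


class SessionGuard:
    """Cap how many distinct sensitive fields one session may read."""

    def __init__(self, max_sensitive_fields: int = 2):
        self.max_sensitive_fields = max_sensitive_fields
        self.seen = set()

    def allow(self, field_name: str) -> bool:
        if field_name in self.seen:
            return True    # already disclosed in this session
        if len(self.seen) >= self.max_sensitive_fields:
            return False   # further fields would let small pieces add up
        self.seen.add(field_name)
        return True
```

Masking handles what a single response exposes, while the session guard handles accumulation across turns.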

Q. How do compliance requirements affect voice AI development?

A. They shape how the system handles data at every step. Teams must control who can access data, how long it is stored, and how it is logged. This affects design choices early, not just final deployment.

Q. How should I handle voice data retention for compliance purposes?

A. Keep only what is needed. Set clear rules for how long recordings and transcripts stay in the system. Delete or anonymize data once it is no longer required. Always keep audit logs separate from raw voice data.
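A retention rule like this can be sketched in a few lines. The 30-day window, the `VoiceRecord` fields, and the `apply_retention` function are assumptions for illustration; the actual retention period depends on the regulations that apply to the deployment.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta, timezone
from typing import List, Optional, Tuple

RETENTION = timedelta(days=30)  # hypothetical retention window


@dataclass(frozen=True)
class VoiceRecord:
    record_id: str
    caller_id: Optional[str]
    transcript: Optional[str]
    created_at: datetime


def apply_retention(
    records: List[VoiceRecord],
    now: datetime,
    retention: timedelta = RETENTION,
) -> Tuple[List[VoiceRecord], List[dict]]:
    """Anonymize expired records and return audit entries as a separate list."""
    kept: List[VoiceRecord] = []
    audit: List[dict] = []
    for record in records:
        if now - record.created_at > retention:
            # Strip identifying content but keep the record shell.
            kept.append(replace(record, caller_id=None, transcript=None))
            audit.append({
                "record_id": record.record_id,
                "event": "anonymized",
                "at": now.isoformat(),
            })
        else:
            kept.append(record)
    return kept, audit
```

The audit entries are returned separately from the records so they can be written to a different store, in line with the advice to keep audit logs apart from raw voice data.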

Q. What compliance frameworks should a voice agent handling healthcare data meet?

A. In the US, HIPAA is the primary regulation. It requires strict control over patient data, access tracking, and secure storage. Other regions may add their own rules, so systems often need to handle multiple frameworks.

Q. How are ethical concerns like bias, consent, and misuse handled in voice AI systems?

A. Voice systems must control how data is collected, processed, and used. Bias and discrimination are reduced through diverse training data and regular bias audits. Consent is captured before recording or analysis. Systems must limit profiling based on demographic or psychological traits and restrict the misuse of biometric or emotional signals. Transparency, fallback to human agents, and strict handling of sensitive customer data help maintain fairness and trust.

Q. How does Appinventiv ensure voice agent security in its deployments?

A. Appinventiv builds control into the system from the start. Each step, from input to action, is checked and logged. Access is limited based on role and context. The system is designed to meet requirements such as HIPAA, GDPR, and SOC 2 without slowing execution.
