- Why HIPAA Compliance Is Critical in Medical Voice Assistants

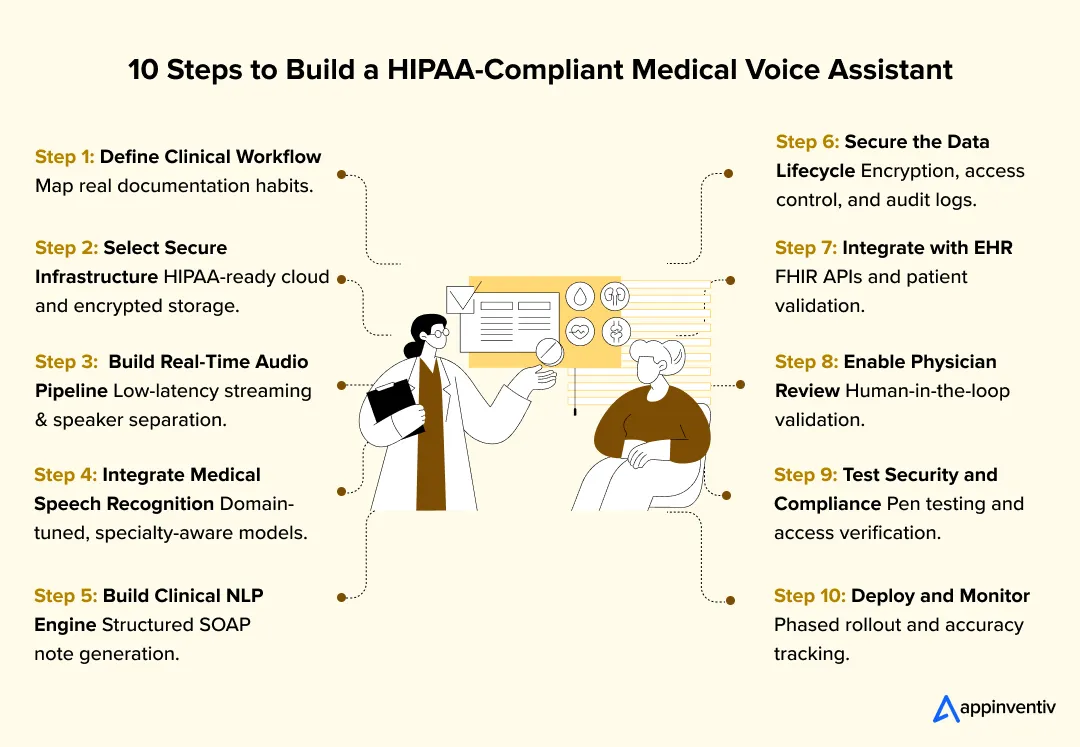

- Step-by-Step Guide to Building a HIPAA-Compliant Medical Voice Assistant

- How Do You Design for HIPAA Compliance from Day One?

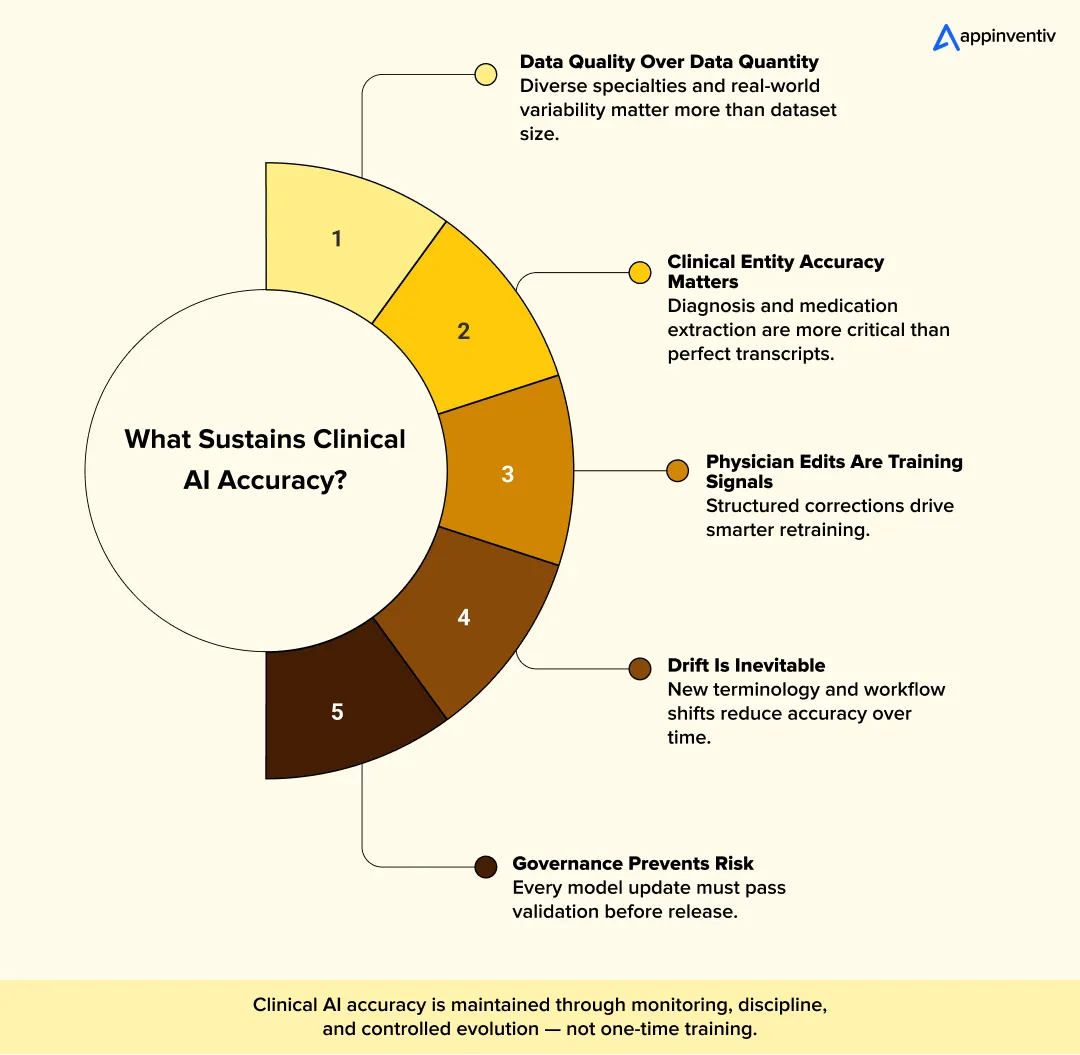

- How Do You Train AI Models for Clinical Accuracy Over Time?

- How Do You Integrate Medical Voice Assistants with EHR Systems at Scale?

- What Are the Most Common Security Risks in Medical Voice Assistants and How Can They Be Controlled?

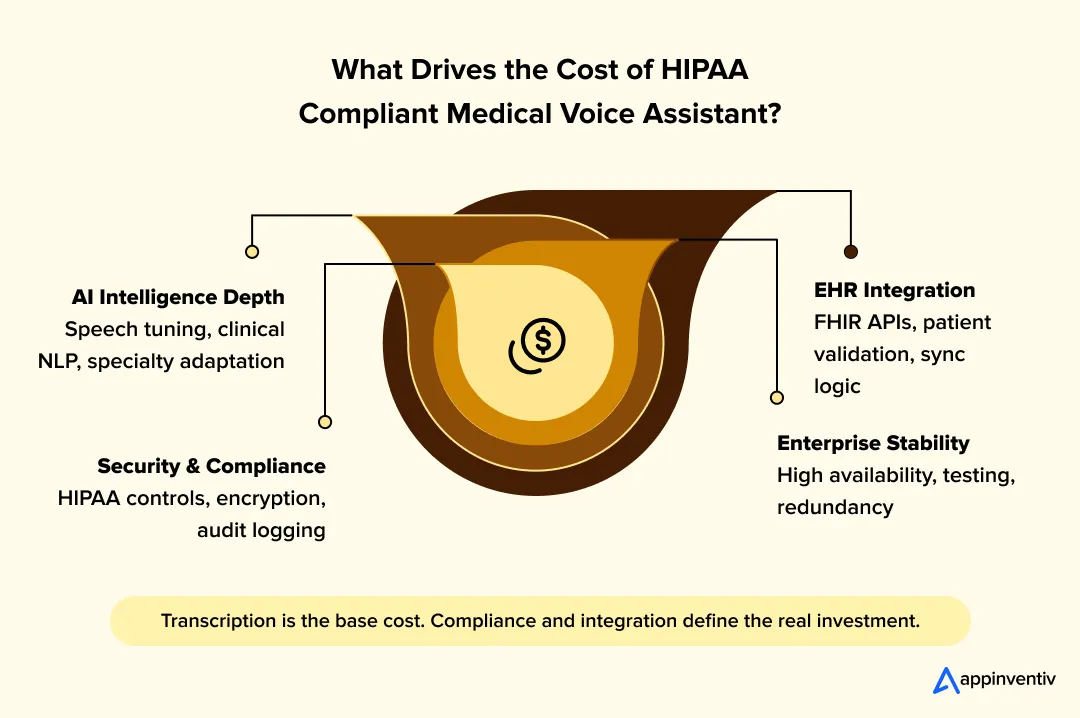

- What Is the Cost to Build a Medical Voice Assistant?

- Cost Range by System Complexity

- What Makes Medical Voice Assistants Difficult to Build and How Can Teams Address It?

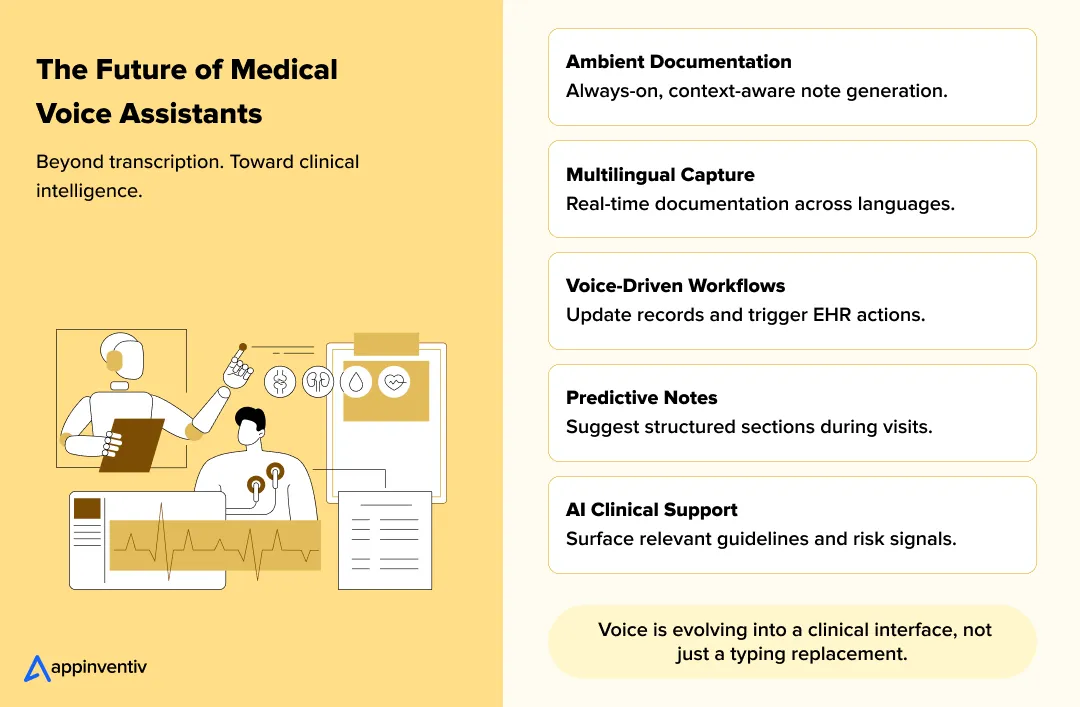

- What’s The Future of Medical Voice Assistants?

- How Appinventiv Helps Healthcare Organizations Build HIPAA-Compliant Medical Voice Assistants

- FAQs

Key takeaways:

- HIPAA compliance must be embedded into architecture, not added later.

- Clinical accuracy depends on domain-tuned models and continuous retraining.

- Real-time AI clinical documentation requires low-latency streaming pipelines.

- EHR integration complexity often drives more cost than transcription itself.

- Security governance and audit controls are essential for enterprise deployment.

- Medical voice assistants are evolving into workflow and decision support systems.

If you want to build a HIPAA-compliant medical voice assistant that actually survives in a hospital environment, it has to be engineered for security, latency, and clinical accuracy from the start. Speech-to-text alone is not enough.

Doctors spend hours every week finishing notes after clinic hours. Health systems are responding by deploying AI-powered voice agents that capture conversations in real time and turn them into structured documentation. The opportunity is real. So is the risk. Once a system listens inside an exam room, it handles protected health information every second. That changes how you design infrastructure, access control, storage, and integration.

This guide explains how to build a medical voice AI that supports real-time doctor-patient transcription without compromising HIPAA requirements. We will walk through architecture decisions, secure data handling, model training, EHR integration, and the cost to build a medical voice assistant at different levels of complexity.

Why HIPAA Compliance Is Critical in Medical Voice Assistants

Healthcare continues to be one of the most targeted industries for cyberattacks. Recent data shows that the average cost of a healthcare data breach has exceeded $9.8 million per incident in 2025, far higher than in most other sectors. That figure underlines the financial and regulatory stakes of handling clinical data securely.

Healthcare organizations are also actively expanding AI use into clinical operations, with automation and workflow-heavy applications such as ambient scribing and documentation among the highest-value opportunities.

In voice AI development for healthcare, regulatory controls shape architecture decisions more than feature design.

If you plan to build a HIPAA-compliant medical voice assistant, compliance decisions come first. Once a solution starts listening inside an exam room, it is handling protected health information in real time. That changes how you design infrastructure, processing pipelines, and storage.

Understanding PHI Risks in Voice Systems

Voice data contains more than conversation. It captures diagnoses, medication plans, insurance details, and sometimes background identifiers spoken casually during a visit. Once transcribed, that content becomes part of the medical record.

Risk is not limited to final storage. It appears in:

- Live audio streaming channels

- Temporary processing buffers

- Model inference environments

- API calls into electronic health records

- System monitoring and backup logs

This expanded processing chain means compliance must extend beyond storage encryption. It must cover the entire lifecycle of the audio and transcript.

Core HIPAA Requirements That Apply to Voice Systems

In healthcare voice assistant architecture, HIPAA requirements translate into specific engineering controls.

| Compliance Area | Practical Meaning in Voice Systems | Technical Focus |
|---|---|---|
| Encryption | Protect voice and transcript data during transmission and storage | TLS for streaming audio, AES-256 at rest, managed key rotation |
| Access Control | Limit who can access raw audio and structured notes | Role-based access control, least privilege enforcement |
| Secure Authentication | Prevent unauthorized entry to APIs and admin panels | Multi-factor authentication, short-lived tokens |
| Audit Logging | Track who accessed or modified PHI | Immutable logging, cross-service traceability |
| Business Associate Agreements | Ensure cloud and infrastructure vendors meet HIPAA standards | Executed BAAs with all data-handling providers |
| Data Retention | Define how long audio and transcripts are stored | Policy-driven retention schedules and secure deletion workflows |

The central principle is simple: voice data must be treated as PHI from the moment it is captured to the moment it is deleted. That includes raw audio, temporary transcripts, structured documentation, and even system logs. Compliance is not a feature layered on top of AI medical voice assistant development. It is a structural requirement that governs how the system is built and operated.

Step-by-Step Guide to Building a HIPAA-Compliant Medical Voice Assistant

Building medical AI assistant systems requires sequencing workflow alignment, infrastructure security, and model accuracy deliberately.

If you want to build a HIPAA-compliant medical voice assistant, the following steps usually determine whether the solution becomes operational or stays experimental.

Step 1: Define Clinical Workflow Requirements

When you build your AI voice agent, start by understanding how documentation happens today. Not how it should happen, but how it actually happens.

In some clinics, physicians type during the visit. In others, they dictate later. Some specialties rely heavily on structured templates, others use free-form notes. Map the real interaction between doctor and patient. Identify when recording should begin, how interruptions are handled, and when the note is considered complete.

Clarify early:

- Which departments are included in the rollout

- Expected output format such as structured SOAP sections

- Where review and edits occur

- Acceptable delay during live transcription

If workflow alignment is weak, even accurate transcription will feel intrusive.

Outcome: A documented clinical workflow aligned with daily practice.

Step 2: Select HIPAA-Compliant Infrastructure

Once workflows are clear, infrastructure decisions follow. This is where many teams underestimate complexity.

Choose cloud environments that formally support HIPAA-regulated workloads. Separate development data from production data. Restrict administrative privileges. Encrypt storage by default and control key access centrally.

Core priorities include:

- Network segmentation between services

- Encrypted storage for audio and transcripts

- Role-based access management

- Centralized identity management

The goal is simple. The system must handle patient healthcare data securely before processing a single real encounter.

Outcome: Infrastructure prepared for regulated healthcare operations.

Step 3: Build the Real-Time Audio Streaming Pipeline

Audio quality and timing shape the clinician’s experience. Stable streaming architecture is the backbone of real-time AI clinical documentation in live consultations.

Capture input reliably, stream it securely, and avoid noticeable delay. Physicians will not tolerate lag during live documentation. Even small buffering issues can break trust.

Engineering considerations include:

- Secure streaming over encrypted channels

- Noise reduction suited for exam rooms

- Speaker separation to distinguish clinician from patient

- Continuous streaming inference rather than batch uploads

Real-time behavior is not optional. It is expected.
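
As a rough illustration of continuous streaming rather than batch uploads, the sketch below re-slices arbitrarily sized network chunks into fixed-duration frames that a streaming recognizer could consume one at a time. The sample rate, sample width, and 20 ms frame length are assumptions chosen for the example, not values from any particular speech engine.

```python
from typing import Iterable, Iterator

SAMPLE_RATE_HZ = 16_000   # assumed capture rate
BYTES_PER_SAMPLE = 2      # assumed 16-bit mono PCM
FRAME_MS = 20             # assumed frame duration

# 16,000 samples/s * 2 bytes * 0.020 s = 640 bytes per frame
FRAME_BYTES = SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * FRAME_MS // 1000


def frames(chunks: Iterable[bytes], frame_bytes: int = FRAME_BYTES) -> Iterator[bytes]:
    """Yield fixed-size frames as soon as enough audio has arrived."""
    buffer = bytearray()
    for chunk in chunks:
        buffer.extend(chunk)
        while len(buffer) >= frame_bytes:
            yield bytes(buffer[:frame_bytes])
            del buffer[:frame_bytes]
    if buffer:
        # Flush the final partial frame so trailing speech is not dropped.
        yield bytes(buffer)
```

Because the generator yields as soon as one frame is available, downstream inference never waits for the full recording. The in-memory buffer still holds PHI and should be treated accordingly.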

Outcome: Stable and responsive audio ingestion.

Step 4: Integrate Medical Speech Recognition

Deploying HIPAA-compliant speech-to-text AI requires domain tuning and secure inference environments. Standard speech engines are not designed for medical conversations. They often misinterpret drug names and procedural terms.

Medical speech recognition software development usually involves expanding vocabulary sets, tuning pronunciation handling, and testing against real consultation recordings. Incremental transcript updates are preferred over delayed outputs.

Speech recognition for healthcare should focus on:

- Specialty-specific terminology

- Accurate recognition of abbreviations

- Accent and background noise handling

- Incremental real-time updates

Errors at this layer propagate into clinical documentation.

Outcome: Reliable medical speech-to-text conversion.

Step 5: Build the Clinical NLP and Documentation Engine

Transcripts alone are not enough. Clinicians need structured notes they can review quickly.

This is the stage where a team moves from a simple transcription tool to a true AI clinical documentation assistant.

The NLP layer should identify symptoms, diagnoses, medications, and treatment plans. It should organize content into familiar sections and avoid altering clinical meaning during summarization.

Key capabilities include:

- Clinical entity extraction

- Structured note formatting

- Abbreviation normalization

- Alignment with electronic health record fields

At this point, the system transitions from transcription engine to AI scribe for doctors operating inside clinical workflows. When done correctly, the assistant reduces after-hours charting instead of creating extra editing work.
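
Abbreviation normalization from the list above can be sketched with a small lookup table. The mapping here is hypothetical and deliberately tiny. A real deployment would maintain it per specialty and leave ambiguous abbreviations untouched for physician review, so that clinical meaning is not altered.

```python
import re

# Hypothetical mapping for illustration only.
ABBREVIATIONS = {
    "bid": "twice daily",
    "prn": "as needed",
    "htn": "hypertension",
}

# Whole-word match so "bid" inside "forbid" is not rewritten.
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, ABBREVIATIONS)) + r")\b",
    re.IGNORECASE,
)


def normalize(text: str) -> str:
    """Expand known abbreviations; unknown tokens pass through unchanged."""
    return _PATTERN.sub(lambda m: ABBREVIATIONS[m.group(1).lower()], text)
```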

Outcome: Structured documentation ready for physician validation.

Step 6: Implement Secure Data Handling

By this stage, the system can listen, transcribe, and structure notes. Now the question becomes simple: how is that data controlled?

Every component that touches audio or transcripts must follow the same security posture. That includes streaming services, inference layers, storage systems, backups, and logs. Protected health information cannot be treated differently at different stages of processing.

This layer generally implements:

- AES-256 encrypted storage

- Role-based access enforcement

- Immutable access logging

- Defined archival and deletion policies

Temporary data is still PHI. Intermediate transcripts are still PHI. Logs that reference patient identifiers are still PHI. The system must reflect that consistently.
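
One way to make archival and deletion policies enforceable is to express them as data and check every stored artifact against them. The sketch below assumes three artifact kinds and placeholder retention windows. The actual periods depend on organizational policy and applicable record-retention law, so none of these numbers should be read as a recommendation.

```python
from datetime import datetime, timedelta, timezone

# Placeholder retention windows (assumptions, not legal guidance).
RETENTION = {
    "raw_audio": timedelta(days=30),
    "interim_transcript": timedelta(days=1),
    "final_note": timedelta(days=365 * 7),
}


def is_due_for_deletion(kind: str, created_at: datetime, now: datetime) -> bool:
    """True once an artifact has outlived its retention window.

    Unknown kinds raise KeyError instead of being silently kept forever.
    """
    return now - created_at >= RETENTION[kind]
```

A scheduled job could call this for each stored object and route matches to a secure deletion workflow, logging the deletion itself as an auditable event.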

Outcome: A controlled and auditable PHI lifecycle.

Step 7: Integrate with EHR Systems

Transcription only creates value when it reaches the electronic health record accurately.

Integration should use supported healthcare standards such as FHIR where possible. Structured notes must be mapped to the correct patient, encounter, and documentation template. Even small mismatches in identifiers can create clinical risk.

Focus on:

- Accurate patient and visit matching

- Mapping structured output to defined EHR fields

- Confirmation of successful data writes

- Retry logic for failed transactions

Integration failures are one of the most common points of friction during deployment. This layer must be treated as critical infrastructure.
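
To make the patient and encounter mapping concrete, here is a minimal sketch that builds a FHIR R4 `DocumentReference` payload for a draft note. It assumes R4 field layout, a plain-text attachment, and the LOINC progress-note code. The function name and the choice of `DocumentReference` (rather than another resource the target EHR may prefer) are illustrative assumptions.

```python
import base64


def build_document_reference(patient_id: str, encounter_id: str, note_text: str) -> dict:
    """Build a FHIR R4 DocumentReference dict; refuses to build without context."""
    if not patient_id or not encounter_id:
        raise ValueError("patient and encounter context are required")
    encoded = base64.b64encode(note_text.encode("utf-8")).decode("ascii")
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        "docStatus": "preliminary",  # draft until a physician signs off
        "type": {
            "coding": [
                {"system": "http://loinc.org", "code": "11506-3", "display": "Progress note"}
            ]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "context": {"encounter": [{"reference": f"Encounter/{encounter_id}"}]},
        "content": [{"attachment": {"contentType": "text/plain", "data": encoded}}],
    }
```

Failing fast when either identifier is missing keeps a note from being written without the context needed to match it to the right chart.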

Outcome: Reliable synchronization with the EHR.

Step 8: Implement Human-in-the-Loop Validation

Even strong AI models require oversight. Physicians must review and approve documentation before it becomes part of the medical record.

Provide a clear review interface where edits can be made quickly. Track changes transparently. Capture recurring corrections to inform future model adjustments.

Key considerations:

- Separation between draft and finalized documentation

- Visible edit history

- Feedback loops tied to model improvement

Adoption increases when clinicians understand that they retain control over the final note.
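
The separation between draft and finalized documentation, together with a visible edit history, can be modeled as a small state machine. This is a sketch under assumed names (`ClinicalNote`, `edit`, `finalize`). A production system would persist the history and handle amendments through the EHR's own process.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class ClinicalNote:
    text: str
    status: str = "draft"
    signed_by: Optional[str] = None
    # Each entry is (editor, text_before, text_after).
    history: List[Tuple[str, str, str]] = field(default_factory=list)

    def edit(self, editor: str, new_text: str) -> None:
        if self.status != "draft":
            raise PermissionError("finalized notes cannot be edited in place")
        self.history.append((editor, self.text, new_text))
        self.text = new_text

    def finalize(self, physician: str) -> None:
        if self.status != "draft":
            raise PermissionError("note is already finalized")
        self.status = "final"
        self.signed_by = physician
```

The recorded before/after pairs double as correction data for the feedback loop described above.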

Outcome: Higher documentation accuracy and stronger clinician trust.

Step 9: Conduct Security and Compliance Testing

Before expanding access, validate the system under real conditions.

Test APIs, streaming endpoints, and authentication flows. Attempt role escalation and access boundary violations. Review logs to ensure traceability works as expected. Confirm that encryption settings are enforced consistently.

Testing should include:

- Penetration testing against exposed services

- Vulnerability scanning of infrastructure components

- Access control verification

- Log integrity validation

Compliance should be demonstrated through evidence, not assumption.

Outcome: Confirmed security posture prior to broader rollout.

Step 10: Deploy and Monitor the System

Deployment should be phased rather than system-wide from day one. Begin with a controlled group of clinicians. Monitor performance closely.

Establish dashboards to track latency, transcription accuracy, API success rates, and system uptime. Review access logs regularly. Watch for drift in model accuracy over time.

Operational priorities include:

- Monitoring response time and reliability

- Tracking documentation accuracy trends

- Reviewing security alerts

- Scheduling periodic model updates

Long-term success depends on consistent oversight. Stability and predictability matter more than feature expansion.

Outcome: A monitored, compliant medical voice assistant operating reliably in production.

How Do You Design for HIPAA Compliance from Day One?

HIPAA compliant AI transcription development requires engineering controls at every stage of the data lifecycle. If compliance is discussed after the system is built, it usually means something will need to be rebuilt.

In regulated healthcare environments, architecture and compliance move together. When a medical voice assistant captures live patient conversations, every layer touching that data must reflect HIPAA safeguards.

Below is how experienced teams approach compliance during system design.

Encryption Across the Entire Data Path

Voice systems handle PHI in motion and at rest. This includes live audio streams, temporary transcripts, structured notes, and archived records. That is why HIPAA-compliant speech-to-text AI must enforce encryption from audio capture to transcript storage.

For data in transit, enforce TLS across all client-to-server and service-to-service communication. Internal traffic should not be assumed safe simply because it stays within a private network.
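
For service-to-service calls, enforcing TLS can be as simple as refusing to build a client without a strict context. The sketch below uses Python's standard `ssl` module. The TLS 1.2 floor is an assumption for the example, and an organization's own policy may require a higher minimum.

```python
import ssl


def strict_client_context() -> ssl.SSLContext:
    """Client-side TLS context with certificate and hostname verification."""
    # create_default_context() enables certificate and hostname checks.
    ctx = ssl.create_default_context()
    # Assumed policy floor: reject anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

The same context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=strict_client_context())`, so internal traffic gets the same verification as external traffic.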

For data at rest:

- Encrypt stored audio and transcripts using strong encryption standards such as AES-256

- Use centralized key management services instead of application-managed keys

- Restrict key access through role-based controls

- Define scheduled key rotation policies

Temporary buffers and intermediate files should follow the same rules as permanent storage. PHI does not become less sensitive because it is short-lived.

Identity and Access Management

Access control is often where compliance gaps appear.

Every user and service account should have clearly defined permissions. Administrative privileges must be separated from operational roles. Multi-factor authentication should be mandatory for elevated access.

In practice, this means:

- Role-based access control aligned to job function

- Least privilege enforcement

- Short-lived API tokens

- Periodic access reviews

Service-to-service authentication should be explicit. Hard-coded credentials or shared keys increase long-term risk.
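
Least privilege is easiest to reason about when permissions are denied by default. The following sketch uses a hypothetical role table. The role and permission names are invented for illustration, and the point is only that an unknown role or unlisted permission never grants access.

```python
# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "physician": frozenset({"note:read", "note:write", "audio:read"}),
    "scribe_reviewer": frozenset({"note:read"}),
    # Administrative role manages configuration but has no PHI access.
    "platform_admin": frozenset({"config:write"}),
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions return False."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())
```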

Audit Logging and Traceability

Compliance requires traceability. You should be able to reconstruct who accessed patient data and when.

Log events across:

- Audio ingestion services

- Speech recognition and NLP layers

- Storage reads and writes

- EHR integration calls

Logs should be centralized and protected against modification. Time synchronization across services helps maintain reliable event sequencing. Automated monitoring can flag unusual access patterns before they escalate.

Audit readiness is not about storing logs. It is about being able to interpret them.
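
One common way to protect logs against modification is to chain each entry to the hash of the previous one, so any later change breaks verification. The sketch below shows the idea with SHA-256. It makes tampering detectable rather than impossible, and it assumes events carry identifiers and actions, not PHI content.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always hashes the same way.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> None:
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry fails the check."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```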

Secure API and Service Communication

Medical voice systems rely heavily on APIs, particularly for EHR integration.

Secure API design should include:

- Strong authentication with token expiration

- Strict input validation

- Rate limiting to prevent misuse

- Segmented internal and external endpoints

Internal services should communicate within controlled network boundaries. Public exposure should be limited to defined gateways with monitored traffic.
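
Token expiration can be illustrated with a minimal HMAC-signed token that carries its own expiry. This is a teaching sketch with assumed function names. Production systems would normally rely on an established standard and a vetted library rather than a hand-rolled format.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def issue_token(secret: bytes, subject: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    issued = time.time() if now is None else now
    claims = {"sub": subject, "exp": issued + ttl_seconds}
    body = base64.urlsafe_b64encode(json.dumps(claims).encode("utf-8")).decode("ascii")
    signature = hmac.new(secret, body.encode("ascii"), hashlib.sha256).hexdigest()
    return f"{body}.{signature}"


def verify_token(secret: bytes, token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the subject if the signature is valid and the token is unexpired."""
    current = time.time() if now is None else now
    body, _, signature = token.rpartition(".")
    expected = hmac.new(secret, body.encode("utf-8"), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature prefixes.
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return None
    claims = json.loads(base64.urlsafe_b64decode(body.encode("ascii")))
    return claims["sub"] if current < claims["exp"] else None
```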

Compliance at this level is not a theoretical exercise. It is reflected in how predictable and controlled the system behaves under real operating conditions.

How Do You Train AI Models for Clinical Accuracy Over Time?

Model performance does not stay fixed after deployment. Clinical language evolves, new medications enter the market, and documentation habits vary across departments. If training stops after launch, accuracy slowly declines. Long-term reliability depends on disciplined model lifecycle management.

This is where many AI medical voice assistant development initiatives either mature or stall.

Preparing Clinical Datasets

High accuracy begins with representative data.

Training data should reflect real doctor-patient conversations, not scripted examples. Audio should include natural interruptions, overlapping speech, and exam room background noise. Transcripts must be annotated beyond word-level correction to capture structured clinical elements such as symptoms, diagnoses, medications, and plans.

Strong dataset preparation usually includes:

- Specialty-specific datasets, for example cardiology, pediatrics, or orthopedics

- Annotated entity tagging aligned with clinical documentation standards

- Variations in accents, speech pace, and tone

- Controlled inclusion of environmental noise

Data quality directly influences downstream entity extraction and structured note generation.

Model Optimization and Fine-Tuning

Initial training provides a foundation. Fine-tuning aligns the system with real-world practice.

Domain adaptation adjusts acoustic and language models to better recognize medical terminology. Vocabulary injection allows the model to prioritize drug names, abbreviations, and specialty terms. Evaluation should measure not only word error rate but also clinical entity accuracy.

Common optimization practices include:

- Specialty-level fine-tuning of language models

- Custom vocabulary weighting for rare but critical terms

- Incremental retraining using validated correction data

- Benchmark testing against controlled evaluation datasets

Accuracy in healthcare voice recognition software is measured by clinical correctness, not just transcript readability.
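
The gap between word error rate and clinical correctness is easy to show with a small calculation. The sketch below computes WER with a standard word-level edit distance and, separately, entity recall over sets of extracted terms. The example sentences and entity sets are made up for illustration.

```python
def edit_distance(ref: list, hyp: list) -> int:
    """Word-level Levenshtein distance (substitutions, insertions, deletions)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (r != h)))
        prev = cur
    return prev[-1]


def word_error_rate(reference: str, hypothesis: str) -> float:
    ref = reference.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    return edit_distance(ref, hypothesis.lower().split()) / len(ref)


def entity_recall(expected: set, extracted: set) -> float:
    """Share of expected clinical entities that were actually extracted."""
    return len(expected & extracted) / len(expected) if expected else 1.0
```

For the reference "start metformin 500 mg daily" and the hypothesis "start metoprolol 500 mg daily", WER is only 0.2, yet recall for the medication entity is 0.0. A transcript can look readable while carrying the wrong drug.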

Continuous Model Improvement

Performance drift is gradual and often unnoticed until clinicians begin flagging errors. New terminology, changes in documentation style, and demographic shifts can reduce recognition quality.

Enterprise-grade systems monitor accuracy continuously. This typically involves:

- Capturing structured physician edits from review workflows

- Periodic retraining using curated correction datasets

- Drift detection across departments and specialties

- Scheduled evaluation cycles with standardized benchmarks

Model retraining should follow defined governance controls. Updated models must pass validation tests before being deployed into production.
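
A validation gate of this kind can be reduced to a simple rule: a candidate model is promoted only if no tracked metric regresses beyond an agreed tolerance. The metric names and the 0.01 tolerance below are assumptions for the sketch, and all metrics are treated as higher-is-better.

```python
def passes_release_gate(baseline: dict, candidate: dict, max_regression: float = 0.01) -> bool:
    """True if every baseline metric is matched within the allowed regression.

    A metric missing from the candidate counts as 0.0, which fails the gate.
    """
    return all(
        candidate.get(name, 0.0) >= value - max_regression
        for name, value in baseline.items()
    )
```

In practice the gate would run against a fixed benchmark set per specialty, so an improvement in one department cannot mask a regression in another.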

AI systems in clinical decision making require structured feedback loops and controlled release processes. Sustained accuracy depends on monitoring, retraining, and disciplined evaluation rather than one-time model optimization.

How Do You Integrate Medical Voice Assistants with EHR Systems at Scale?

At enterprise scale, integration is not just about sending notes into an electronic health record. It requires standards compliance, workflow coordination across systems, and controlled recovery mechanisms when failures occur.

Below is a structured view of what large-scale AI-EHR integration typically involves.

| Integration Area | Enterprise Focus | Technical Considerations |
|---|---|---|
| Healthcare Integration Standards | Ensure interoperability with major EHR platforms | HL7 message structures for legacy systems, FHIR resources for structured data exchange, SMART on FHIR for secure application-level access |
| Multi-System Synchronization | Keep voice assistant output aligned with scheduling, billing, and clinical systems | Event-driven updates, real-time encounter linking, API orchestration across services |
| Patient Identity Matching | Prevent documentation mismatches across records | Master patient index validation, encounter ID verification, deterministic and probabilistic matching safeguards |
| Metadata Alignment | Preserve clinical context during data transfer | Mapping structured notes to correct EHR templates, visit timestamps, provider identifiers, department codes |
| Sync Retry Mechanisms | Maintain reliability during network or API failures | Idempotent API calls, exponential backoff retry logic, transaction logging |
| Conflict Resolution Controls | Prevent duplicate or overwritten documentation | Version control checks, write validation rules, concurrency handling |
| Data Reconciliation Processes | Detect and correct integration inconsistencies | Periodic cross-system validation, automated discrepancy reporting, audit-based verification |

Enterprise integration is less about connectivity and more about controlled data movement. Systems must exchange structured information reliably, maintain patient context, and recover gracefully when transactions fail.
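
The retry row in the table combines two ideas: exponential backoff and idempotent calls. The sketch below shows both, assuming a caller-supplied `send(payload, idempotency_key)` function and a hypothetical `TransientError` for retryable failures. How the receiving system honors the key is outside this example.

```python
import random
import time
import uuid


class TransientError(Exception):
    """Hypothetical marker for retryable failures such as timeouts."""


def write_with_retry(send, payload, max_attempts=5, base_delay=0.5, sleep=time.sleep):
    # One key for all attempts, so the receiver can discard duplicates.
    idempotency_key = str(uuid.uuid4())
    for attempt in range(max_attempts):
        try:
            return send(payload, idempotency_key)
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            delay = base_delay * 2 ** attempt
            # Jitter spreads out retries from many clients.
            sleep(delay + random.uniform(0, delay / 2))
```

Non-transient errors, such as a patient mismatch, are intentionally not retried and propagate to the caller immediately.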

What Are the Most Common Security Risks in Medical Voice Assistants and How Can They Be Controlled?

When a medical voice assistant goes live, security risk becomes practical, not theoretical. The system is now capturing real conversations, writing into clinical records, and interacting with hospital infrastructure. Weaknesses tend to show up in access control, data handling, or integration points.

Below are the risks that appear most often in operational environments and how experienced teams address them.

Unauthorized Access Risks

Access problems usually start with identity mismanagement rather than advanced attacks. Over-permissioned accounts, shared credentials, or forgotten test users can create unnecessary exposure.

Typical scenarios include:

- Administrative access not limited by role

- API tokens that do not expire quickly

- Internal dashboards accessible without multi-factor authentication

Mitigation requires discipline:

- Enforce multi-factor authentication for privileged users

- Apply least privilege principles to every account

- Use short-lived service tokens instead of static credentials

- Conduct periodic access reviews

The goal is simple. No user or service should have more access than required.

Data Leakage Risks

Leakage often happens quietly. A misconfigured storage bucket, a debugging log containing patient identifiers, or a backup system without encryption can expose PHI.

Common risk points include:

- Logs that capture structured patient data

- Non-production environments using real encounter data

- Unencrypted backup storage

Control measures should be consistent across environments:

- Encrypt storage by default

- Restrict log visibility to authorized roles

- Separate production and testing datasets

- Monitor unusual outbound traffic patterns

PHI should be treated the same way in development as in production.
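
For the logging risk in particular, a redaction filter can act as a safety net in front of every log handler. The patterns below are hypothetical identifier formats chosen for the example. Pattern matching will miss things, so it complements, rather than replaces, the practice of not logging PHI in the first place.

```python
import logging
import re

# Hypothetical identifier formats; real systems need their own patterns.
PATTERNS = [
    re.compile(r"\bMRN[:\s]*\d+\b", re.IGNORECASE),
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US SSN-like number
]


class RedactPHI(logging.Filter):
    """Mask known identifier patterns before a record reaches any handler."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # formats msg with its args first
        for pattern in PATTERNS:
            message = pattern.sub("[REDACTED]", message)
        record.msg, record.args = message, None
        return True  # keep the record, now redacted
```

Attaching it is one line per handler, for example `handler.addFilter(RedactPHI())`.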

Voice Spoofing Risks

Voice-based systems introduce a different category of risk. In telehealth or remote documentation scenarios, synthetic or replayed audio could be injected to influence documentation.

While this is less common in in-person clinic environments, it becomes relevant as remote care expands.

Mitigation strategies include:

- Verifying clinician identity at session start

- Applying speaker verification models

- Monitoring abnormal speech patterns

- Restricting who can initiate transcription sessions

Voice input should not be assumed trustworthy without validation.

Model Exploitation Risks

AI systems can be influenced by unexpected or malicious input. In clinical transcription systems, poorly validated input may distort structured notes or trigger unintended behavior.

Risks typically surface through:

- Unvalidated API requests

- Malformed input passed directly to processing services

- Excessive request volume targeting inference endpoints

Mitigation focuses on resilience:

- Strict input validation at service boundaries

- Rate limiting on external APIs

- Monitoring unusual model outputs

- Isolating services to prevent cascade failures
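
Rate limiting on inference endpoints is often implemented as a token bucket. The following is a minimal single-process sketch with an injectable clock. The rate and capacity values are up to the deployment, and a multi-instance service would need shared state that this example does not cover.

```python
import time


class TokenBucket:
    def __init__(self, rate_per_sec: float, capacity: int, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)  # start full to allow a short burst
        self.updated = clock()

    def allow(self) -> bool:
        """Consume one token if available; otherwise reject the request."""
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```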

Security in medical AI systems is ongoing. It requires active monitoring and periodic reassessment as usage patterns evolve.

What Is the Cost to Build a Medical Voice Assistant?

The short answer: it depends on scope, compliance depth, and integration complexity. For most healthcare organizations, the cost to build a medical voice assistant falls between $40,000 and $400,000. The lower end typically covers focused transcription capabilities. The higher end reflects enterprise-grade systems with structured documentation, EHR integration, and compliance controls.

Below is how that range usually breaks down.

1. Core Development Costs

This includes the engineering work required to develop AI-powered medical transcription and structured documentation logic.

Typical cost drivers:

- Medical speech recognition configuration

- Clinical NLP development

- Real-time streaming setup

- Frontend clinician interface

- Testing across devices and environments

Estimated Range: $25K–$150K

Complexity increases when supporting multiple specialties or structured documentation templates. The largest cost variable in AI medical transcription software development is the depth of clinical NLP and specialty-level tuning required.

2. Infrastructure and Hosting Costs

Real-time systems require stable, secure infrastructure.

Cost components often include:

- HIPAA-eligible cloud hosting

- Encrypted storage

- Streaming services

- Load balancing and scaling controls

- Monitoring and logging tools

Estimated Range (initial setup + first year): $10K–$80K

Costs vary depending on traffic volume and model inference load.

3. Compliance and Security Implementation

Building HIPAA controls into the system requires additional engineering and validation effort.

This may include:

- Encryption implementation across services

- Identity and access management setup

- Audit logging systems

- Security testing and risk assessments

Estimated Range: $5K–$50K

Projects involving HIPAA compliant AI transcription development typically allocate additional budget to audit validation and infrastructure hardening. For enterprise deployments, this portion tends to expand due to formal security reviews.

4. EHR Integration and Interoperability

At enterprise scale, voice AI for electronic health records requires standards compliance and structured synchronization logic, and it often becomes one of the largest cost variables.

Factors influencing cost:

- FHIR-based API development

- Custom mapping to EHR templates

- Testing across multiple departments

- Sync and reconciliation workflows

Estimated Range: $10K–$120K

Multi-system hospital environments push integration costs toward the higher end.

Cost Range by System Complexity

Enterprise-grade AI scribe solutions for doctors require higher investment due to structured integration demands.

Below is a simplified view of how the cost to build a medical voice assistant typically scales.

| System Scope | Typical Capabilities | Estimated Cost |
|---|---|---|
| Basic Transcription Tool | Real-time speech-to-text with minimal NLP | $40K–$80K |
| Mid-Level Clinical Assistant | Structured notes + limited EHR integration | $80K–$200K |
| Enterprise-Grade Voice Assistant | Full NLP, multi-department integration, compliance architecture | $200K–$400K |

Reliable voice AI for electronic health records depends on strict identity validation and transaction integrity controls.

What Increases the Cost?

Several factors move the project toward the upper end of the range:

- Multi-specialty model tuning

- Advanced AI clinical documentation tools

- Complex healthcare voice assistant architecture

- High availability and redundancy requirements

- Extensive security testing and audit preparation

Organizations planning to build enterprise AI virtual health assistant systems should budget for ongoing model updates and infrastructure scaling as adoption grows.

What Makes Medical Voice Assistants Difficult to Build and How Can Teams Address It?

Organizations attempting to build AI clinical documentation assistant platforms often underestimate documentation variability across specialties.

Most early demos of medical voice assistants look promising. The system transcribes clearly. Notes appear structured. Integration works in a sandbox. The real friction starts when the tool meets real clinicians, real patients, and real hospital systems.

Below are the challenges that tend to surface in production and the practical responses that work.

1. Medical Vocabulary Complexity → Use Domain-Trained Models

Clinical language is messy. Physicians switch between formal terminology and shorthand mid-sentence. Drug names are long and often sound alike. The same abbreviation may mean different things in different departments.

A generic speech engine will struggle.

The solution is targeted domain adaptation. That means training and fine-tuning models on specialty-specific conversations, expanding vocabulary sets with real encounter data, and validating accuracy against structured clinical outputs. Word accuracy alone is not enough. Entity-level accuracy matters more.

2. Real-Time Latency Constraints → Optimize Streaming Architecture

Physicians will not tolerate delay during live documentation. If transcripts lag behind speech, the system quickly becomes a distraction.

Latency issues usually stem from buffering strategies or overloaded inference services. Batch-style processing does not work in live consultations.

The practical approach is to use streaming pipelines with short rolling buffers and continuous partial transcript updates. Latency thresholds should be monitored in production, not just during testing. If response times cross acceptable limits, alerts should trigger investigation immediately.
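
Monitoring a latency threshold in production can be sketched as a rolling window with a percentile check. The window size, the choice of the 95th percentile, and the nearest-rank method are assumptions for this example. The acceptable threshold itself comes from the workflow requirements agreed with clinicians.

```python
from collections import deque


class LatencyMonitor:
    def __init__(self, threshold_ms: float, window: int = 200):
        self.threshold_ms = threshold_ms
        self.samples = deque(maxlen=window)  # keeps only the newest samples

    def record(self, latency_ms: float) -> None:
        self.samples.append(latency_ms)

    def p95(self) -> float:
        """Nearest-rank 95th percentile; requires at least one sample."""
        ordered = sorted(self.samples)
        rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
        return ordered[rank - 1]

    def breached(self) -> bool:
        """True when the rolling p95 exceeds the threshold."""
        return bool(self.samples) and self.p95() > self.threshold_ms
```

A monitoring loop could call `breached()` after each partial transcript is delivered and raise an alert when it returns true.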

3. Integration Complexity → Use Standards-Based APIs

Electronic health record systems are rarely uniform across large organizations. Templates vary. Department workflows differ. Even patient identifier formats may not be consistent.

Integration mistakes create operational risk.

Teams that succeed treat integration as core infrastructure. Standards such as FHIR simplify structured data exchange. Strict validation of patient and encounter context reduces mismatches. Idempotent update logic prevents duplicate notes during retries.

Integration is not a finishing step. It is a structural dependency.

4. Compliance Overhead → Design with Security Controls Built In

Security reviews can slow projects if compliance is treated as a separate phase. Retrofitting encryption, audit logging, or identity controls often forces architectural changes.

The better approach is to embed security controls from the start. Encrypt every data path. Define role-based access policies early. Centralize logging and monitoring before go-live.
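A minimal sketch of what defining role-based access policies early can look like in practice is shown below. The roles, action names, and the `AccessGate` class are assumptions made for illustration; the point is the default-deny shape and the fact that every decision is recorded.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    PHYSICIAN = "physician"
    SCRIBE_REVIEWER = "scribe_reviewer"
    SUPPORT_ENGINEER = "support_engineer"


# Hypothetical policy table. Anything not listed here is denied.
POLICY: dict[Role, frozenset[str]] = {
    Role.PHYSICIAN: frozenset({"transcript:read", "note:edit", "note:sign"}),
    Role.SCRIBE_REVIEWER: frozenset({"transcript:read", "note:edit"}),
    Role.SUPPORT_ENGINEER: frozenset({"metrics:read"}),
}


@dataclass
class AccessGate:
    """Checks an action against the policy and records every decision."""

    decisions: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def check(self, user_id: str, role: Role, action: str) -> bool:
        allowed = action in POLICY.get(role, frozenset())
        # Denials are logged as well as grants, so reviews can see attempts.
        self.decisions.append((user_id, role.value, action, allowed))
        return allowed


gate = AccessGate()
can_sign = gate.check("dr-lee", Role.PHYSICIAN, "note:sign")
can_read_phi = gate.check("eng-07", Role.SUPPORT_ENGINEER, "transcript:read")
```

Keeping the policy in one table makes it easier to review with compliance staff before go-live than permission checks scattered through application code.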

When compliance is integrated into system design, scaling does not introduce new regulatory exposure.

5. Clinical Adoption Resistance → Align With Existing Workflows

Even well-built systems fail if clinicians feel forced to change how they work.

Some physicians prefer structured templates. Others rely on narrative notes. A rigid documentation style can increase editing time rather than reduce it.

Adoption improves when the assistant supports existing workflows instead of replacing them. Provide clear review and edit controls. Pilot with a small group first. Incorporate feedback before broader deployment.

Building medical AI assistants demands operational realism more than experimental speed. Clinical trust builds gradually. Technical capability alone does not guarantee it.

What’s The Future of Medical Voice Assistants?

Medical voice assistants are moving past simple transcription. The first generation focused on reducing typing. The next phase of voice AI development for healthcare centers on workflow intelligence, automation, and deeper clinical integration.

Ambient Clinical Intelligence

Future systems are designed to work quietly in the background using technologies like ambient listening in healthcare. Physicians do not need to manually start or manage documentation. The assistant listens during the consultation and builds structured notes automatically.

The real challenge here is maintaining context. Conversations move quickly. Patients interrupt. Clinicians shift topics. The system must follow that flow without distorting medical meaning. When implemented correctly, documentation feels natural instead of forced.

Multilingual Transcription

Healthcare environments are multilingual. Patients often switch languages mid-visit. Advanced systems now detect language automatically and generate standardized clinical documentation regardless of the spoken language.

This requires robust speech models trained across accents, dialects, and real-world audio conditions. As health systems expand globally, multilingual capability becomes operationally necessary.

Voice-Driven Clinical Workflows

Voice is starting to function as an interface layer, not just a documentation tool.

Instead of only creating notes, systems can retrieve patient history, update medication lists, or initiate documentation fields directly within the EHR. This shift represents early-stage AI clinical workflow automation built directly on voice interaction.

This expands the assistant’s role from passive listener to workflow participant. Strong integration controls and permission management are essential to keep these actions safe.

Predictive Documentation

Predictive models are increasingly layered into real-time AI clinical documentation workflows. Because documentation patterns are often repetitive within specialties, emerging systems analyze historical encounters and suggest structured sections or likely assessments during the visit.

These suggestions are meant to support clinicians, not replace their judgment. When carefully governed, predictive analytics in healthcare reduces documentation time without reducing clinical control.

AI-Assisted Diagnostic Support

In more advanced environments, structured transcripts combined with patient data can surface relevant guidelines or highlight possible risk indicators during documentation.

With agentic AI in healthcare, systems function as decision support tools. They provide context, not conclusions. Auditability and validation remain critical before such features are widely adopted.

The overall direction is clear. Over time, AI clinical workflow automation will extend beyond documentation into broader care coordination systems.

Voice assistance is becoming a working interface across documentation, system interaction, and clinical support rather than a simple replacement for typing.


How Appinventiv Helps Healthcare Organizations Build HIPAA-Compliant Medical Voice Assistants

Building a HIPAA-compliant medical voice assistant requires more than AI capability. It demands regulatory alignment, production-grade infrastructure, and deep healthcare system integration. With 500+ digital health platforms delivered, 450+ healthcare clients served, and 300+ AI-powered solutions deployed, Appinventiv’s AI development services bring structured healthcare delivery experience into every engagement.

We partner with healthcare organizations that develop AI medical assistant platforms requiring secure, production-grade deployment.

We design systems with a compliance-first architecture, shaped by real-world healthcare deployments such as the YouCOMM health app, where secure real-time communication, encrypted data handling, and controlled access were foundational. Encryption across all data states, strict role-based access controls, audit logging, and secure API layers are embedded from the start.

Real-time transcription pipelines are engineered for low-latency performance, domain-tuned medical speech recognition, and structured clinical documentation aligned with physician workflows.

Our team starts with AI consulting, then integrates voice systems directly into enterprise healthcare ecosystems using standards-based APIs. Patient identity validation, encounter synchronization, and retry-safe data writes ensure reliable EHR connectivity at scale. Integration is treated as critical infrastructure, not an afterthought.

Before production rollout, every system passes clinical-grade testing frameworks covering security validation, performance benchmarking, and structured accuracy evaluation across specialties.

Connect with our experts and build your HIPAA-compliant medical voice assistant for regulated environments.

FAQs

Q. How do you build a HIPAA compliant voice assistant for healthcare?

A. To build a HIPAA compliant voice assistant, the process must begin with compliance architecture, not model selection. Audio capture, streaming pipelines, speech recognition, structured NLP, and EHR integration must all operate within encrypted and access-controlled environments.

A production-ready system includes:

- TLS-secured real-time audio streaming

- Encrypted storage with managed key rotation

- Role-based identity and access controls

- Immutable audit logging

- Standards-based EHR integration

Building medical AI assistant systems for healthcare requires treating voice data as protected health information from capture to deletion. Compliance is not a layer added later. It defines the system design from the beginning.
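One common way to approximate the immutable audit logging item above is a hash-chained log, where each entry commits to the one before it. The sketch below is an assumption-laden illustration: `AuditLog` and its field names are hypothetical, timestamps are omitted for brevity, and a hash chain only makes tampering detectable. A production system would typically pair it with write-once storage and restricted write access.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditLog:
    """Append-only log in which each entry includes the previous entry's hash."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def append(self, actor: str, action: str, resource: str) -> dict:
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {
            "actor": actor,
            "action": action,
            "resource": resource,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check that the chain links are intact."""
        expected_prev = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != expected_prev or _digest(body) != entry["hash"]:
                return False
            expected_prev = entry["hash"]
        return True


log = AuditLog()
log.append("dr-lee", "transcript:read", "encounter/enc-1")
log.append("dr-lee", "note:sign", "encounter/enc-1")
intact = log.verify()
```

If any stored entry is later edited, its recomputed hash no longer matches and `verify()` returns `False`, which gives auditors a simple integrity check to run on a schedule.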

Q. How does AI medical transcription work for doctor-patient conversations?

A. AI medical transcription typically combines real-time speech recognition with clinical NLP processing.

The system captures live audio during a consultation, streams it securely to an inference engine, and converts speech into text. That transcript is then processed by a clinical language model that extracts medical entities such as symptoms, diagnoses, medications, and treatment plans. The output becomes structured documentation aligned with electronic health record formats.

In advanced deployments, the system functions as an AI scribe for doctors, updating notes incrementally during the visit and allowing physician review before finalization.

Q. What technologies are used to build healthcare voice assistants?

A. Voice AI development for healthcare involves multiple layers of technology working together:

- Real-time speech recognition engines trained on medical vocabulary

- Clinical NLP models for entity extraction and structured note generation

- Secure streaming and buffering architectures

- Encryption and identity management systems

- Standards-based interfaces such as HL7 FHIR and HL7 v2 messaging for integration

When organizations develop AI medical assistant platforms, they must combine speech modeling, secure infrastructure, and interoperability standards within a single healthcare voice assistant architecture.

Q. How can AI improve clinical documentation workflows?

A. AI can reduce administrative burden by enabling real-time AI clinical documentation during patient encounters. Instead of typing notes after clinic hours, physicians can review structured documentation generated during the visit.

AI clinical workflow automation can also assist with:

- Organizing notes into SOAP format

- Suggesting structured documentation sections

- Triggering documentation-related EHR updates

- Reducing repetitive manual entry

When properly implemented, these systems support clinician productivity without replacing clinical judgment.

Q. What are the HIPAA requirements for medical voice AI systems?

A. HIPAA compliant AI transcription development requires strict safeguards across the entire data lifecycle.

Key requirements include:

- Encryption in transit and at rest

- Controlled access using role-based policies

- Secure authentication for APIs and dashboards

- Audit logging and traceability

- Business Associate Agreements with infrastructure vendors

- Defined retention and secure deletion policies

HIPAA compliant speech to text AI systems must enforce these controls consistently across audio streaming, transcript processing, storage, and EHR integration.
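The retention and deletion requirement can be expressed as data rather than left to ad hoc scripts. The sketch below is illustrative only: the artifact kinds, the retention windows, and the `due_for_deletion` helper are assumptions, and actual windows should come from the organization's legal and records policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per artifact kind (placeholders, not guidance).
RETENTION: dict[str, timedelta] = {
    "raw_audio": timedelta(days=7),
    "transcript": timedelta(days=90),
}


@dataclass(frozen=True)
class Artifact:
    artifact_id: str
    kind: str
    created_at: datetime


def due_for_deletion(artifacts: list[Artifact], now: datetime) -> list[Artifact]:
    """Return artifacts whose retention window has elapsed."""
    due = []
    for artifact in artifacts:
        window = RETENTION.get(artifact.kind)
        if window is None:
            # Fail loudly: data with no defined policy should never be
            # kept or deleted by accident.
            raise ValueError(f"no retention policy for kind {artifact.kind!r}")
        if now - artifact.created_at >= window:
            due.append(artifact)
    return due


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
artifacts = [
    Artifact("a1", "raw_audio", datetime(2025, 1, 1, tzinfo=timezone.utc)),
    Artifact("t1", "transcript", datetime(2025, 1, 1, tzinfo=timezone.utc)),
    Artifact("a2", "raw_audio", datetime(2025, 1, 28, tzinfo=timezone.utc)),
]
expired_ids = [a.artifact_id for a in due_for_deletion(artifacts, now)]
```

The selected artifacts would then be handed to a deletion job that removes the data and records the deletion in the audit trail.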

Q. How does Appinventiv build HIPAA-compliant healthcare AI solutions?

A. Appinventiv approaches voice AI development for healthcare with a compliance-first framework. Systems are designed with encrypted pipelines, identity governance, structured integration, and clinical validation built in from the start.

With experience delivering hundreds of digital health platforms and AI-powered solutions, the team supports organizations that want to build AI clinical documentation assistant systems aligned with real clinical workflows.

Every deployment includes architecture validation, security testing, integration verification, and controlled rollout to ensure stable, compliant production environments.

Q. Can medical voice assistants be customized using open source technologies?

A. Yes, medical voice assistants can be built using open source voice assistant platforms and modular components, depending on compliance and operational requirements.

Organizations often choose API-based custom solutions that let them bring their own speech-to-text (STT), LLM, or text-to-speech (TTS) models for greater control over accuracy and performance. Frameworks such as LiveKit, Pipecat, or Vocode can support real-time streaming and conversational orchestration, while tools like the Porcupine wake word Python SDK enable secure on-device activation.

Customization also includes:

- Custom vocabulary implementation for specialty terminology

- Domain-trained clinical NLP models

- Integration with proprietary EHR workflows

- Controlled data processing pipelines

However, open source flexibility must still align with HIPAA controls, security hardening, and audit logging requirements before production deployment.

Q. Should healthcare organizations choose self-hosting or ready-made voice assistant software?

A. The decision between self-hosting and ready-made software depends on compliance depth, data residency requirements, and integration complexity.

Ready-made software options may reduce initial development time but often limit customization, specialty tuning, and EHR integration flexibility. They may also restrict control over data storage and infrastructure configuration.

Self-hosting or hybrid deployment models provide:

- Full control over PHI handling

- Data residency alignment with regulatory mandates

- Custom healthcare voice assistant architecture

- Infrastructure-level security governance

For enterprise healthcare environments, deployment strategy should be determined by compliance obligations, long-term scalability needs, and integration depth rather than short-term cost savings.

Q. How should conversational design be handled in medical voice assistants?

A. Conversational design in healthcare must feel natural while enforcing strict compliance. Systems should support natural conversation flows based on real clinical interactions, accommodate authentic accent patterns, and require minimal training for physicians.

At the same time, safeguards such as consent capture language, redaction of sensitive fields, configurable retention windows, and role-based access control must be embedded into the experience. Access to EHR and scheduling APIs should follow least-privilege principles.
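As one small illustration of redacting sensitive fields, the sketch below masks two identifier formats in a transcript before it is shown or logged. The patterns and labels are assumptions covering only US-style Social Security and phone numbers. Pattern matching alone will miss many identifiers, so it is best treated as one layer alongside access controls, not as complete de-identification.

```python
import re

# Hypothetical patterns for two common identifier formats.
REDACTION_PATTERNS: dict[str, re.Pattern[str]] = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed label."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


masked = redact("SSN 123-45-6789, call 555-123-4567")
```

Here `masked` becomes `SSN [SSN], call [PHONE]`, while surrounding clinical text is left untouched.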

In clinical environments, good conversational design balances usability, privacy, and workflow safety.
