- How to Select the Right AI Cybersecurity Consultant for High-Risk AI Deployments

- The Role of an AI Cybersecurity Consultant in Securing AI Systems

- Key Skills to Look for in an AI Cybersecurity Consultant

- Questions to Ask Before Hiring an AI Cybersecurity Consultant

- The Importance of AI Red-Team Testing and Adversarial Simulations

- AI Governance, Compliance, and Regulatory Requirements

- Common Mistakes Companies Make When Hiring an AI Security Consultant

- Why Partner With Appinventiv for Secure AI Deployments

- High-risk deployments require specialized security expertise. Many organizations hire AI cybersecurity consultants to identify vulnerabilities before systems go live.

- A qualified AI cybersecurity expert should understand model security, data pipelines, infrastructure protection, and adversarial testing.

- Enterprises should evaluate experience with high-risk systems, threat modeling capabilities, and monitoring strategies before hiring a consultant.

- Continuous monitoring, red-team testing, and strong governance practices help protect automated systems from evolving cyber threats.

- Partnering with experienced firms that offer AI and cybersecurity consulting helps organizations deploy secure, reliable AI systems.

You usually notice this right before launch. Everything looks stable, data is flowing, and then the real question comes up. Is this actually secure?

We see this often while auditing high-risk AI systems. In one recent financial engagement, the platform had already cleared standard checks, yet inference-time gaps still allowed prompt manipulation to influence outputs.

That’s where teams pause. Once these systems go live across finance, healthcare, or logistics, even a small gap can expose sensitive data or disrupt operations.

The risk is growing fast. The 2026 CrowdStrike Global Threat Report highlights a 89% year-over-year rise in AI-driven cyber activity. Attacks are becoming faster, more adaptive, and harder to detect.

Over time, we have seen threats shift from simple intrusions to AI-powered patterns that learn and bypass defenses at scale. What once needed manual effort can now run continuously in the background.

This is why many organizations bring in AI cybersecurity consultants before launch. Whether you extend your internal team or work with specialists, early intervention helps close gaps before they turn into incidents.

In this blog, we will guide you through multiple ways and the hooks and crooks involved in the whole process. Let’s have a look!

The 2026 CrowdStrike report shows AI-driven cyber activity increased by 89%. Protect your systems before attackers test them.

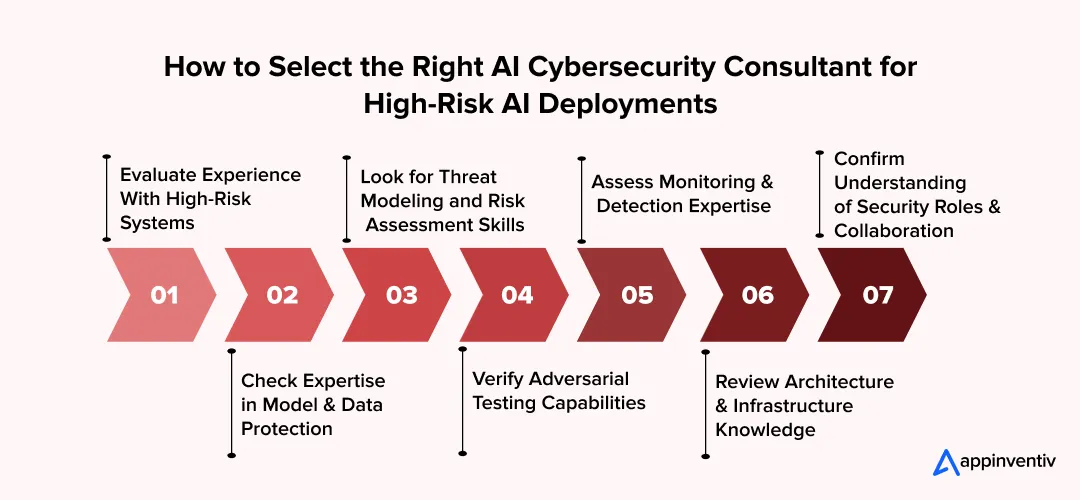

How to Select the Right AI Cybersecurity Consultant for High-Risk AI Deployments

In a high-risk deployment, “good enough” security becomes a liability. One prompt injection or model leak can damage a brand overnight. A system that handles financial records, healthcare data, or customer transactions carries a serious risk. Many organizations look for AI cybersecurity consultants only after problems arise. A better decision is to bring the right specialist into the project early.

A qualified consultant reviews system structure, data handling, and access controls before deployment. Many enterprises seek AI cybersecurity expert advisors with experience in protecting complex platforms. The right professional helps teams close security gaps before attackers discover them. In many cases, companies compare specialists who provide advanced AI-powered cybersecurity solutions alongside expertise in machine learning security.

Below are the key factors companies should review before making a decision.

1. Evaluate Experience With High-Risk Systems

Experience matters when systems operate in sensitive environments. Security work on small applications differs from protecting large enterprise platforms.

Organizations often look for AI cybersecurity consultants for hire who have worked on real production systems. These professionals understand how security failures affect business operations.

Look for experience in areas such as:

- Financial platforms and payment systems

- Healthcare data environments

- Enterprise intelligent automation

- Large customer data platforms

Consultants who have worked in these environments identify vulnerabilities more quickly and provide practical guidance.

2. Check Expertise in Model and Data Protection

Modern systems rely heavily on trained models and structured data pipelines. Weak protection around these components exposes the entire system.

Enterprises integrating RAG (Retrieval-Augmented Generation) systems must also protect vector databases. These databases store embeddings that represent proprietary documents, financial data, or internal knowledge bases. Without strict access control, attackers can query the vector store and extract sensitive context that the model uses.

Companies often hire AI system security experts to examine how these components function after deployment.

A proper review often includes:

- Protection of training datasets

- Access controls for model environments

- Secure storage for system data

- API security and external integrations

This step prevents unauthorized access and data misuse.

3. Look for Threat Modeling and Risk Assessment Skills

Security professionals must study how attackers might target the system. This process identifies weaknesses before they turn into incidents.

Enterprises frequently seek AI threat-modeling specialists to analyze potential attack paths.

The evaluation usually focuses on:

- User access controls

- Data handling practices

- External system connections

- Third-party service exposure

Threat modeling helps teams correct weaknesses during development rather than after deployment.

4. Verify Adversarial Testing Capabilities

Security testing should replicate real attack attempts. Standard vulnerability scans rarely expose every weakness.

Organizations often hire ML security experts who simulate attempts to manipulate system outputs or corrupt training data.

Adversarial testing can reveal:

- Manipulated input attacks

- Data poisoning attempts

- Unauthorized system access

- Model behavior manipulation

These tests help security teams understand how the system reacts under pressure.

5. Assess Monitoring and Detection Expertise

Security continues after the system goes live. Teams must watch system activity and respond quickly to unusual behavior.

Many organizations hire AI threat-detection specialists who analyze alerts, logs, and behavior patterns. Large enterprises may support analysts with an AI cybersecurity assistant that helps review alerts and suspicious activity.

Common monitoring practices include:

- Continuous log monitoring across system services

- Detection of abnormal input or output patterns

- Tracking unusual access requests

- Alert escalation for possible intrusions

An experienced Internet Security Advisor often guides internal teams on building long-term monitoring procedures.

6. Review Architecture and Infrastructure Knowledge

System security depends on a strong architecture. Weak infrastructure design creates hidden vulnerabilities across applications and data environments.

Organizations often rely on AI security architecture experts to review system structure and recommend stronger protections.

Their analysis usually focuses on:

- Cloud infrastructure configuration

- Network segmentation and access control

- Secure API communication

- Data storage and encryption practices

Strong architecture reduces exposure across the entire system.

7. Confirm Understanding of Security Roles and Collaboration

Security work requires coordination across teams. Developers, security analysts, and infrastructure engineers must work together to close vulnerabilities.

A qualified AI security specialist roles function across large organizations. This awareness improves communication during security reviews and remediation work.

Consultants who support collaboration often help with:

- Coordinating reviews between engineering and security teams

- Documenting vulnerabilities and remediation steps

- Supporting internal security audits

- Training teams on security best practices

Companies that look for AI cybersecurity consultants with strong collaboration skills strengthen both system protection and internal security processes.

Also Read: Hire AI Consultants: Find the Perfect Fit for Your Business

The Role of an AI Cybersecurity Consultant in Securing AI Systems

A new system often looks ready once the code runs and the dashboard lights up. Security teams look closer. Data flows through many services. Models connect to APIs. Users interact with automated responses. Each connection creates a new point of risk. Many companies look for AI cybersecurity consultants at this stage to review the entire system before launch.

A skilled consultant studies how the system handles data, access controls, and external integrations. The work is practical. Find weak points early and close them before attackers discover them. Large enterprises often hire AI cybersecurity expert advisors when platforms support financial services, healthcare records, or large customer databases.

Core responsibilities include:

- Security architecture review: AI security experts examine the system’s structure, APIs, and cloud configurations. The goal is to locate exposed entry points.

- Threat modeling and risk analysis: AI threat modeling specialists map how attackers might attempt to access or manipulate system outputs.

- Security assessments and audits: Many organizations hire AI risk and security consultants or specialists to test defenses before deployment.

- Adversarial testing: Adversarial AI security experts simulate attacks that attempt to alter outputs or corrupt training data.

- Monitoring and threat detection: AI threat-detection specialists track unusual activity and alert teams when something appears suspicious.

You can also hire machine learning security experts to secure training environments and model updates.

The work of an AI security expert protects systems that businesses rely on every day. A thorough security review reduces risk before problems arise.

Mature security reviews now focus on inference-time vulnerabilities as much as training pipelines. Consultants evaluate prompt-injection exposure, vector-database security in RAG architectures, and model-weight protection through encryption or secure enclaves. Without these controls, attackers can manipulate outputs, extract confidential training data, or reverse-engineer proprietary models.

Also Read: Top Cybersecurity Measures for Businesses

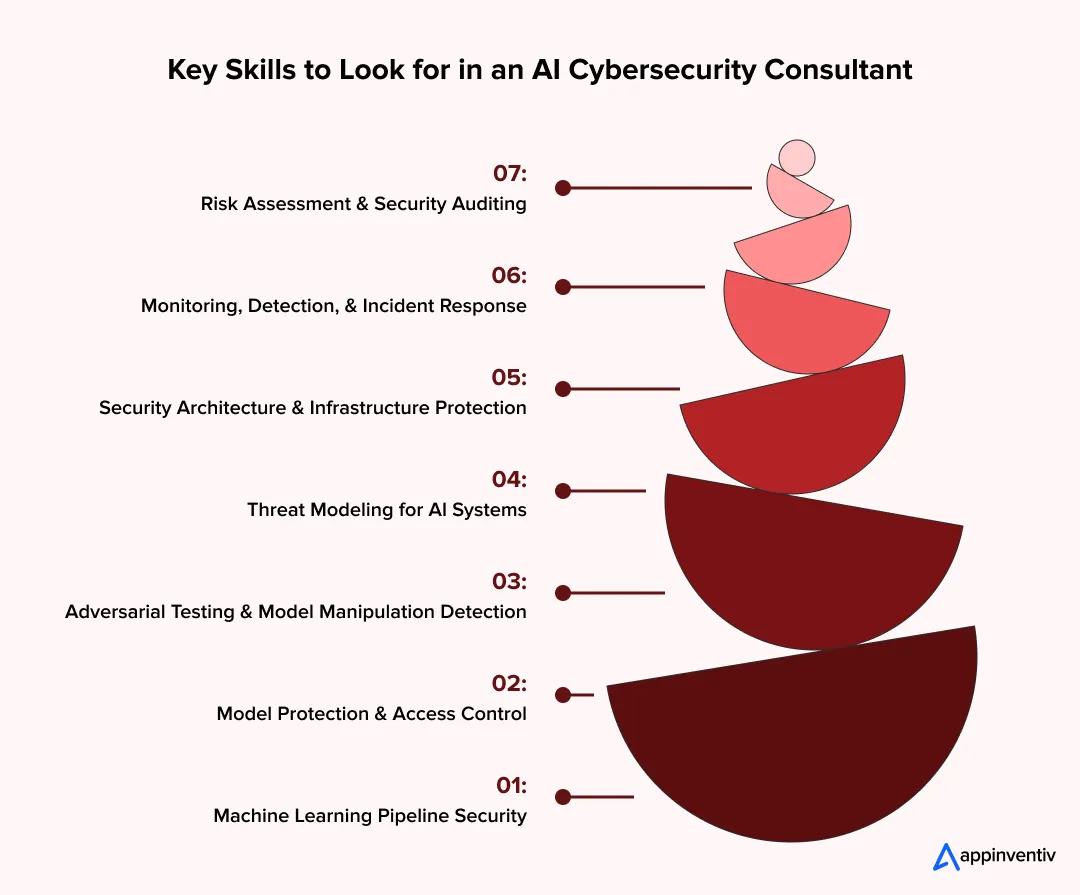

Key Skills to Look for in an AI Cybersecurity Consultant

Many organizations decide to look for AI cybersecurity consultants once systems move close to production. A strong consultant does more than run vulnerability scans. The role requires deep technical knowledge across machine learning pipelines, infrastructure security, and threat analysis.

One of the biggest challenges that the SOCs face today is detecting and responding to future attacks proactively.

The quote above defines a core challenge in the industry, raising a demand for intelligence-led security, where experts anticipate attacker behavior rather than just respond to alerts. A qualified professional must understand how models operate in real environments. Data flows through pipelines, and APIs connect services. Outputs interact with applications and users. Each layer creates potential security exposure. Companies that hire AI cybersecurity expert advisors expect them to examine these layers in detail and identify weaknesses early.

Below are the technical skills that separate experienced consultants from general security professionals.

1. Machine Learning Pipeline Security

Modern systems depend on structured pipelines that collect, process, and train data. A consultant must understand how these pipelines operate across development and production environments.

Security teams must also protect the RLHF (Reinforcement Learning from Human Feedback) pipeline. Attackers can manipulate feedback loops or inject malicious training signals that influence model behavior over time. Strong controls around feedback datasets and moderation pipelines reduce this risk.

Companies often hire ML security experts who analyze risks across the full training lifecycle. This includes data ingestion, preprocessing, model training, evaluation, and deployment.

A strong consultant reviews:

- Data ingestion pipelines and external data sources

- Data validation controls to detect manipulated inputs

- Storage environments that hold training datasets

- Permissions that control access to training resources

Weak controls at this stage can allow attackers to insert malicious data into training sets.

2. Model Protection and Access Control

Once deployed, models often run behind APIs or internal services. Poor access controls create opportunities for unauthorized use or data extraction.

Many organizations hire AI model security consultants who evaluate how models are exposed through applications.

A detailed review usually includes:

- API authentication and authorization policies

- Rate limiting to prevent large-scale extraction attempts

- Encryption technology for model endpoints and request traffic

- Monitoring systems that detect abnormal query patterns

These protections reduce the risk of model theft or misuse.

3. Adversarial Testing and Model Manipulation Detection

Traditional penetration testing does not cover many model-specific attacks. Attackers often manipulate inputs or attempt to influence outputs through crafted prompts or data patterns.

Enterprises often seek adversarial AI security experts who specialize in testing how models respond under adversarial conditions.

Adversarial testing may involve:

- Input manipulation that attempts to alter predictions

- Data poisoning attempts inside training pipelines

- Model extraction through repeated queries

- Prompt manipulation that attempts to bypass restrictions

This testing reveals weaknesses that normal security audits miss.

4. Threat Modeling for AI Systems

Security planning requires a structured threat model. Consultants must map how attackers might interact with the system and where vulnerabilities exist.

Many organizations hire AI threat modeling specialists who build detailed threat maps across infrastructure and applications.

A typical threat model examines:

- Access paths between applications and models

- Data exposure across system services

- Authentication controls for internal tools

- Attack surfaces created by external APIs

This process helps teams understand where to strengthen defenses.

5. Security Architecture and Infrastructure Protection

Large systems run across multiple services, cloud platforms, and databases. Infrastructure misconfiguration often creates hidden vulnerabilities.

Enterprises frequently rely on AI security architecture experts who evaluate system design and infrastructure security.

Their work often includes:

- Cloud identity and access management policies

- Network segmentation between services

- Container security and runtime monitoring

- Secure configuration of compute and storage resources

Infrastructure security forms the foundation for protecting complex systems.

6. Monitoring, Detection, and Incident Response

Security work continues after deployment. Teams must detect abnormal behavior quickly and respond before damage spreads.

Organizations often hire AI threat-detection specialists to build monitoring systems that analyze logs, model outputs, and traffic patterns.

Monitoring practices usually include:

- Behavior analysis across system inputs and outputs

- Detection of repeated suspicious requests

- Alert systems connected to security teams

- Incident response procedures for rapid containment

Large organizations may support analysts with an AI cybersecurity assistant that helps process alerts and flag unusual activity.

7. Risk Assessment and Security Auditing

Consultants must evaluate overall system risk before deployment. This process involves reviewing infrastructure, data handling practices, and operational security policies.

Many companies hire AI security assessment specialists to perform detailed security audits.

These assessments often include:

- Evaluation of system exposure across networks

- Review of data protection controls

- Security testing of external integrations

- Documentation of vulnerabilities and remediation steps

A skilled AI security expert combines technical analysis with clear reporting. This helps leadership understand risks and act quickly.

Organizations that look for AI cybersecurity consultants with these capabilities gain stronger protection across complex systems and long-term security stability.

Protect your systems with experts who understand model security, data pipelines, and the risks of enterprise infrastructure.

Questions to Ask Before Hiring an AI Cybersecurity Consultant

A company may review several candidates before deciding who to trust with system security. Resumes and certifications provide some information, yet the real test comes from direct questions. The answers reveal how a consultant studies systems, tests defenses, and reports vulnerabilities.

Organizations seeking AI cybersecurity consultants often conduct technical interviews with both engineering and security leaders. These conversations help determine whether the consultant has practical experience with complex systems. Companies that hire AI cybersecurity expert advisors typically seek clear explanations, concrete examples, and a structured review process.

The questions below help teams identify qualified professionals.

1. Ask About Experience Securing Real Production Systems

Theory alone does not prepare a consultant for complex enterprise environments. Systems that process customer data or financial transactions pose a greater security risk.

Organizations should ask candidates to describe real projects in which they helped protect large-scale systems. Many companies look for AI cybersecurity consultants for hire who have already worked on production deployments.

Key questions include:

- What types of enterprise systems have you secured?

- What security issues did you discover during those reviews?

- What steps did you recommend to fix the problems?

- What tools did you use during the assessment?

Experienced consultants explain both the vulnerability and the remediation process.

2. Ask How They Conduct Model and Data Security Reviews

Models rely on large datasets and multiple services. Weak controls around these components create serious risks.

A strong security consultant for AI systems should explain how they examine model access, data storage, and training pipelines.

Useful questions include:

- How do you secure training data pipelines?

- How do you detect manipulated data inputs?

- What controls prevent unauthorized model access?

- How do you protect model endpoints exposed through APIs?

These questions reveal how well the consultant understands system architecture.

3. Ask About Threat Modeling and Risk Assessment Methods

Security planning requires structured threat analysis. Consultants must map how attackers might exploit system components.

Companies often hire AI risk and security consultants who follow a detailed evaluation process.

Questions to ask include:

- How do you identify attack paths across the system?

- What framework do you use for threat modeling?

- How do you evaluate risks from external integrations?

- How do you prioritize vulnerabilities for remediation?

A strong answer should include a clear methodology rather than vague explanations.

4. Ask About Adversarial Testing Techniques

Attackers frequently attempt to manipulate systems using crafted inputs or repeated queries. Security teams must test these scenarios before deployment.

Organizations often hire ML security experts who conduct these tests.

Key questions include:

- How do you simulate model manipulation attempts?

- How do you test for data poisoning risks?

- How do you detect model extraction attempts?

- What tools do you use for adversarial testing?

Consultants with hands-on experience describe detailed testing procedures.

5. Ask About Monitoring and Detection Strategies

Security review does not end after deployment. Systems require continuous monitoring and rapid response when unusual activity appears.

Many companies hire AI threat detection specialists to build monitoring strategies that track system activity. Some teams also deploy an AI cybersecurity assistant to support log analysis and alert investigation.

Useful questions include:

- What monitoring tools do you recommend after deployment?

- How should teams detect abnormal system behavior?

- What logs should security teams collect and analyze?

- How should teams respond to detected threats?

An experienced Internet Security Advisor often explains how monitoring integrates with existing security operations.

6. Ask About Collaboration With Internal Teams

Security improvements require cooperation between consultants, developers, and internal security staff.

Important questions include:

- How do you communicate vulnerabilities to engineering teams?

- How do you document remediation steps?

- How do you support internal security audits?

- How do you help teams maintain security after the assessment?

Organizations that look for AI cybersecurity consultants who communicate clearly often implement security improvements faster and reduce operational risk.

Also read: How to Hire an AI Agent Development Company

The Importance of AI Red-Team Testing and Adversarial Simulations

Security testing must reflect real attack behavior. Standard vulnerability scans rarely reveal how a system reacts under targeted manipulation. This gap explains why many organizations hire AI cybersecurity consultants who specialize in adversarial testing.

Adversarial testing recreates attackers’ tactics. The consultant attempts to manipulate inputs, extract information, or alter system responses. Companies often hire AI Cybersecurity Expert advisors to run these controlled attack simulations before systems enter production.

Modern red-team testing follows threat models outlined in the OWASP Top 10 for LLM Applications. These include vulnerabilities such as prompt injection, insecure output handling, data leakage through system prompts, and training data poisoning. Testing against these scenarios reveals weaknesses that traditional penetration testing often misses.

These tests focus on areas where systems face the highest risk.

Common red-team testing activities include:

- Attempting input manipulation to change model outputs

- Simulating data poisoning within training pipelines

- Testing for model extraction through repeated queries

- Probing APIs for unauthorized access or response leaks

Enterprises often rely on adversarial AI security experts who understand how attackers exploit automated systems. Their work helps security teams detect weaknesses that normal testing methods overlook.

AI Governance, Compliance, and Regulatory Requirements

Strong security protects systems from attackers. Governance protects the business from legal trouble, operational failure, and reputational damage. Many companies hire AI cybersecurity consultants to review both technical controls and governance practices before launching critical systems.

Large organizations handle customer records, financial transactions, and internal operational data. A single data handling mistake can trigger regulatory penalties or customer trust issues.

This risk pushes companies to hire AI cybersecurity experts for enterprises that understand security, compliance rules, and operational risk.

A governance review usually examines several core areas.

1. Data Control and Training Data Management

Many systems rely on large datasets that contain sensitive information. Poor data control creates serious exposure.

Organizations often look for AI security assessment specialists to examine how datasets are collected, stored, and accessed.

A proper review checks:

- Who can access training datasets

- Where datasets are stored and how they are protected

- Whether incoming data passes validation checks

- Whether data changes are tracked and recorded

Clear data control prevents unauthorized changes and protects sensitive information.

2. Decision Tracking and System Accountability

Enterprises must document how automated systems reach decisions. This requirement matters in sectors such as finance, healthcare, and insurance.

Companies often hire AI model security consultants who examine how systems log activity and track updates.

Security reviews often verify:

- Logs that record system activity and decisions

- Version history for models and datasets

- Documentation for model updates and changes

- Access records for system administrators

Clear records help security teams investigate incidents and demonstrate accountability.

3. Regulatory Alignment

Government oversight of automated systems continues to expand. Enterprises must prepare for regulatory reviews before launching new platforms.

Many organizations work with an experienced Internet Security Advisor and internal AI security specialist teams to review compliance requirements.

Regulatory reviews often examine alignment with:

- NIST AI Risk Management Framework in the United States

- EU AI Act rules for high-risk systems

- Data protection laws such as GDPR

These reviews confirm that the system design and data practices comply with regulatory expectations.

4. Risk Management and Operational Oversight

Security leaders must understand how system failures affect operations. A system error or data corruption event can interrupt services or produce incorrect results.

Enterprises often hire AI threat modeling specialists to evaluate operational risks across the system.

Many enterprises now map adversarial threats using the MITRE ATLAS framework, which catalogues attack techniques used against machine learning systems. This framework helps security teams simulate attacks, including model evasion, data poisoning, and model extraction.

Risk reviews usually examine:

- Failure scenarios and recovery plans

- Data integrity monitoring processes

- Access control policies across system services

- Internal approval procedures for system changes

This analysis helps leadership prepare for operational incidents.

Also Read: AI in Risk Management: Key Use Cases

5. Continuous Monitoring and Oversight

Governance requires ongoing monitoring after deployment. Systems evolve as new data enters and models receive updates.

Many companies hire AI threat detection experts who monitor logs, traffic patterns, and unusual system activity. Organizations that hire consultants with governance experience build stronger oversight around critical systems and reduce long-term risk.

Also read: How to Hire AI Governance Consultants in 2026?

Common Mistakes Companies Make When Hiring an AI Security Consultant

Many companies rush to hire AI cybersecurity consultants as a project nears deployment. The pressure to launch often leads to quick decisions. Some teams choose a consultant based only on reputation or cost. That shortcut creates risk.

A security review works best when the consultant understands both system architecture and modern attack methods. Companies that hire AI cybersecurity expert advisors without checking their technical depth often miss serious vulnerabilities.

Below are common mistakes that weaken security assessments.

1. Hiring General Cybersecurity Professionals Without AI Experience

Traditional cybersecurity knowledge does not always cover automated systems. Model behavior, data pipelines, and inference services introduce new attack surfaces.

Some organizations hire a general consultant who lacks experience with these systems. Enterprises seeking machine learning or AI system security experts receive more precise assessments.

These specialists understand how attackers attempt to manipulate model behavior or extract sensitive data.

Also read: How to Hire Machine Learning Engineers to Scale AI From Prototype to Full Production

2. Ignoring Model and Data Pipeline Security

Many security reviews focus only on infrastructure. The model itself and its training data often receive less attention.

In several real incidents, attackers used prompt injection through RAG document retrieval to force systems to reveal hidden system prompts or internal policies.

Organizations should hire AI model security consultants who examine how AI models interact with datasets and external inputs. Common weaknesses appear in:

- Data ingestion pipelines

- Dataset validation processes

- Model update mechanisms

- External API integrations

Ignoring these areas allows attackers to manipulate system behavior.

3. Treating Security as a One-Time Audit

A single assessment does not protect systems in the long term. Data changes, model updates, and new attack methods appear regularly.

Companies often seek AI risk and security consultants for an initial review, but fail to plan for continuous monitoring. Strong security programs include ongoing reviews by AI threat-detection experts who track unusual activity.

Some organizations support analysts with an AI cybersecurity assistant that helps process alerts and monitor logs.

4. Overlooking Adversarial Testing

Traditional penetration testing does not reveal many model-specific weaknesses. Attackers frequently attempt to manipulate inputs, make repeated queries, or poison datasets.

Organizations seeking AI threat-modeling specialists can test these scenarios before attackers do.

Adversarial testing reveals vulnerabilities that standard security scans rarely detect.

Also Read: Vulnerability Assessment and Penetration Testing Guide

5. Failing to Align Security With Governance Requirements

Security reviews must support compliance and operational policies. Companies that ignore governance risks face legal and regulatory consequences.

Enterprises often work with experienced Internet Security Advisors to align security controls with compliance requirements.

Get a full security review and strengthen system defenses before vulnerabilities affect your business.

Why Partner With Appinventiv for Secure AI Deployments

Organizations that hire AI cybersecurity consultants often look for a partner with strong engineering experience and real production exposure. Appinventiv supports enterprises seeking to build secure, reliable intelligent systems. The team works across system design, architecture review, and governance planning. Through their AI consulting services, businesses receive guidance from early strategy to deployment and long-term system support.

Appinventiv has delivered results across multiple industries. The company has delivered 300+ AI-powered solutions, trained and deployed 150+ custom AI models, and completed 75+ enterprise AI integrations. One example is the MyExec AI Business Consultant platform. The solution functions as a conversational advisor that analyzes business documents and translates complex data into clear recommendations for decision-makers. This platform helps leaders review information quickly and make informed business decisions.

Companies planning high-risk deployments need a partner that understands both system development and operational security. Appinventiv’s AI consulting services help businesses design secure platforms, review risks, and deploy production-ready systems with confidence.

If your organization plans to build intelligent systems, working with experienced consultants like Appienventiv can help you launch faster while protecting critical data and operations. Connect with us!

FAQs

Q. What is AI cybersecurity, and why is it important?

A. AI cybersecurity focuses on protecting automated systems, models, and data pipelines from misuse or attacks. Many companies hire AI cybersecurity consultants to review system security before launch. These specialists check data access, model exposure, and system integrations. Their work helps businesses protect sensitive data and avoid operational disruptions.

Q. What are the biggest security risks in AI deployments?

A. Automated systems introduce new security risks that traditional tools may not detect. Common threats include data poisoning, model extraction, manipulated inputs, and unauthorized API access. To reduce these risks, many organizations hire AI risk and security consultants who review system architecture and identify vulnerabilities.

Q. What skills should organizations look for in an AI cybersecurity expert?

A. Organizations usually hire AI security engineers with experience in system architecture, data protection, and threat analysis. A strong expert understands model behavior, infrastructure security, and attack detection. Many enterprises also rely on professionals who understand different AI security expert roles across engineering and security teams.

Q. How can organizations secure AI models in production environments?

A. Production systems need strong access controls and continuous monitoring. Many companies hire machine learning security experts to review model deployment environments. Security teams also rely on AI threat-detection specialists and AI security-architecture experts to monitor system behavior and prevent unauthorized access.

Q. How does Appinventiv support enterprises in securing AI deployments?

A. Appinventiv supports organizations that want secure and scalable intelligent systems. Through its AI consulting services and enterprise Cybersecurity services, the company helps businesses design secure architectures, build custom models, and deploy production-ready platforms. Their teams guide organizations through development, security reviews, and long-term system monitoring.

Q. Can’t our existing IT security team handle this?

A. Not completely. It’s not about skill, it’s about context. Most security teams are trained to protect data at rest and in transit. AI systems introduce a different challenge where the decision-making logic itself can be targeted.

Unless your team has experience in areas like adversarial machine learning or prompt-level attacks, some risks can go unnoticed. That’s why many organizations bring in AI cybersecurity specialists to work alongside internal teams and close these gaps.

- In just 2 mins you will get a response

- Your idea is 100% protected by our Non Disclosure Agreement.

Key takeaways: Start with research before development. Map compliance before choosing the architecture. Build the data foundation before training the model. Cost can range from $100K to $5M+, depending on scope. The biggest challenge is keeping the AI accurate, explainable, and compliant after launch. Building a real estate investment AI means planning for two industries:…

What UAE CDS 2027 Means for Your Platform: Integration, Compliance, Systems, and What to Build

Key takeaways: UAE CDS 2027 age verification compliance mandates real-time, auditable systems integrated into identity, access control, and enforcement layers across platforms. Effective compliance requires risk-based, multi-layered verification combining biometrics, Emirates ID checks, device intelligence, and behavioral signals. Legacy KYC systems fail CDS expectations; enterprises must implement continuous verification with dynamic risk scoring and re-validation…

Key takeaways: AI agents for cybersecurity are moving past triage assistance into autonomous decision-making across SOC, AppSec, and threat intelligence. Extensive AI use in security operations saves $1.9M per breach and cuts the breach lifecycle by 80 days (IBM, 2025). 97% of organizations hit by an AI-related security incident lacked proper AI access controls. The…