- How to Evaluate an AI Outsourcing Partner

- When to Outsource AI vs Build In-House

- AI Outsourcing Models Enterprises Actually Use

- The Real Cost of AI Outsourcing (What Most Teams Miss)

- Common Failure Signals in Outsourcing of AI

- How to Differentiate Between AI-First Partners vs Traditional Outsourcing Vendors

- Best Practices for AI Outsourcing Success

- How Appinventiv Helps Enterprises Build and Scale AI With Confidence

Key Takeaways

- Don’t judge by demos alone. Real value shows in production: how the system handles scale, failures, and messy data.

- Data matters more than the model. If the partner isn’t strong on pipelines, validation, and data flow, problems will show up later.

- MLOps is not optional. Without monitoring, versioning, and retraining, even good models won’t hold up over time.

- Integration is where most projects fail. Choose a partner who understands your systems, not just AI tools.

- Pay attention to how they think. The right partner asks tough questions early and challenges assumptions rather than simply agreeing.

You’ve likely seen this happen. A team pilots an AI use case, the model works fine in a controlled setup, and then things slow down when it has to fit into real systems. That’s usually when AI outsourcing comes up. Not as a cost-cutting move, but as a way to bring in the right expertise and keep things moving.

The pressure is real. According to Gartner, AI is expected to touch virtually all enterprise IT work by 2030, reshaping how systems are built, operated, and scaled. That shift is forcing faster decisions on what to build in-house and where external support makes more sense.

This is why AI outsourcing for enterprises is becoming a strategic choice. Some capabilities need in-house control, especially around data and IP. Others are better handled by partners who can accelerate delivery without adding long-term complexity.

The real question is not whether to outsource, but where it actually fits. Getting that right early often decides whether your AI investment scales or quietly stalls. This shift is also changing how enterprises approach AI in outsourcing, moving from cost-saving decisions to capability-driven partnerships.

In this blog, you will see how to decide what to outsource, how to choose the right partner, and how to avoid costly missteps.

If your pilot is stuck in testing, it’s time to make it work in real systems.

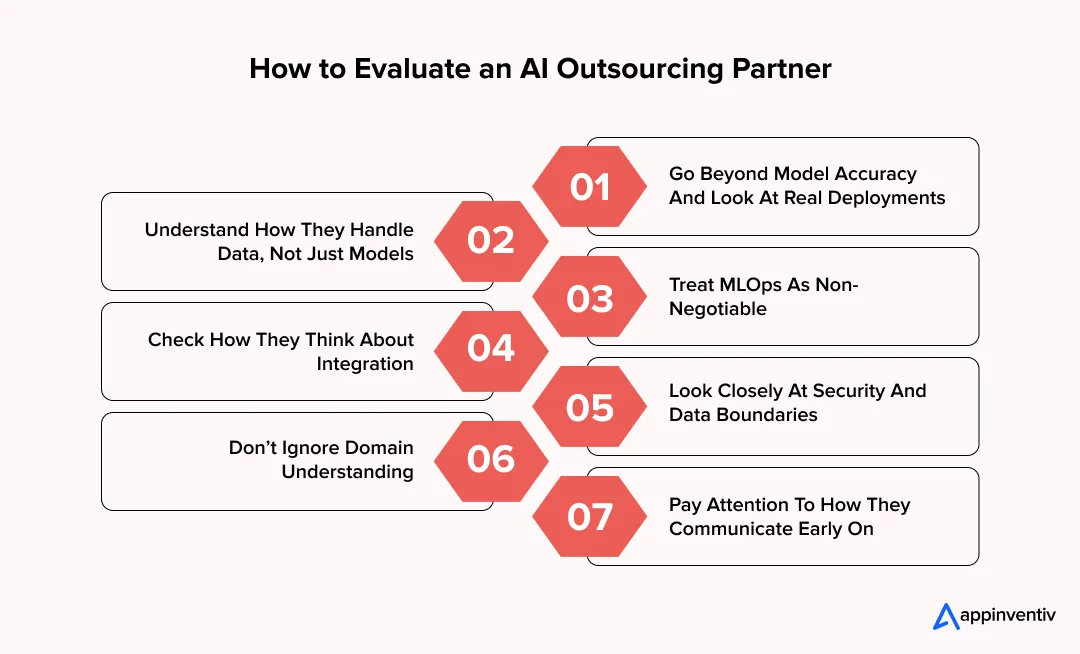

How to Evaluate an AI Outsourcing Partner

This is where the gap shows up. A demo works fine and vendors sound confident, but things change once real data and systems come into play. Evaluating a partner isn’t just about demos or stated capabilities. The real question is whether they can take ownership beyond the model and make it work in your environment over time. Think of this section as an AI outsourcing checklist for assessing whether a team can handle real-world systems, not just controlled environments.

The easiest way to assess this is to shift your focus. Look less at what they can build, and more at how they handle the parts that usually break.

1. Go Beyond Model Accuracy And Look At Real Deployments

A model performing well in testing doesn’t guarantee it will handle real-world conditions like scale, latency, and unpredictable data.

- Ask for examples where models are live and handling real workloads

- Check how they deal with latency, failures, and scale

- Look for experience with real-time inference or high-volume systems

- Ask what happens when performance drops after deployment

If the conversation stays around accuracy scores, you’re missing the bigger picture.

2. Understand How They Handle Data, Not Just Models

Many AI adoption failures trace back to poor data handling rather than weak algorithms, so a partner’s approach to data pipelines often matters more than their choice of model.

- Do they build end-to-end data pipelines, or expect clean inputs?

- How do they handle missing data, schema changes, or noisy inputs?

- Can they manage both batch and real-time data flows?

- Do they define clear data contracts between systems?

Strong teams treat data as part of the system, not a pre-step.
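To make the idea of a data contract concrete, here is a minimal sketch in Python. The `orders` feed, its field names, and the `FieldSpec` and `validate_record` helpers are all hypothetical, made up for illustration; a real engagement would more likely lean on an established schema or validation library. The point is only that missing fields, wrong types, and unexpected columns get caught at the system boundary rather than inside the model.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class FieldSpec:
    name: str
    type_: type
    required: bool = True


# Hypothetical contract for an "orders" feed; field names are illustrative only.
ORDER_CONTRACT = [
    FieldSpec("order_id", str),
    FieldSpec("amount", float),
    FieldSpec("coupon_code", str, required=False),
]


def validate_record(record: dict[str, Any], contract: list[FieldSpec]) -> list[str]:
    """Return a list of contract violations; an empty list means the record passes."""
    errors = []
    known = {spec.name for spec in contract}
    for spec in contract:
        value = record.get(spec.name)
        if value is None:
            # Missing optional fields are fine; missing required ones are not.
            if spec.required:
                errors.append(f"missing required field: {spec.name}")
            continue
        if not isinstance(value, spec.type_):
            errors.append(
                f"{spec.name}: expected {spec.type_.__name__}, got {type(value).__name__}"
            )
    # Fields the contract does not know about usually signal an upstream schema change.
    for key in sorted(record.keys() - known):
        errors.append(f"unexpected field: {key}")
    return errors
```

A useful question for a prospective partner is where checks like this would live in their pipeline and what happens to records that fail them.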

3. Treat MLOps As Non-Negotiable

MLOps ensures the system continues to perform reliably after deployment, not just at launch.

- Versioning for models, datasets, and features

- Monitoring for data drift and performance drops

- Clear triggers for retraining based on real signals

- Safe deployment with rollback options

If this part feels unclear, issues tend to show up later.
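One common way to turn "monitoring for data drift" into a concrete retraining signal is the Population Stability Index (PSI), which compares how a feature is distributed in the training baseline versus live traffic. The sketch below is an assumption about how a team might wire this up, not a prescribed method. The function names are hypothetical, and the 0.1 and 0.25 cut-offs are a widely used rule of thumb that should be tuned per feature.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp so live values outside the baseline range land in the edge buckets.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty buckets.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score, watch_at=0.1, retrain_at=0.25):
    """Map a PSI score to an action. Thresholds are rule-of-thumb assumptions."""
    if score < watch_at:
        return "stable"
    if score < retrain_at:
        return "watch"
    return "retrain"
```

Whatever metric a partner prefers, the thing to look for is the same: a named signal, a threshold, and a defined action when the threshold is crossed.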

4. Check How They Think About Integration

Integration determines whether the AI system actually works within your existing ecosystem.

- Have they worked with legacy systems or enterprise APIs before?

- Can they fit into your existing architecture without forcing changes?

- Do they plan for failures, retries, and async workflows?

- How do they maintain data consistency across systems?

A good partner brings this up early, not midway through the project.
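Planning for failures and retries can be as simple as wrapping calls to a downstream system in a retry policy. The following is a minimal sketch of retrying with exponential backoff and jitter. `TransientError` and `call_with_retries` are hypothetical names, and which failures count as transient is an assumption that depends on the system being called.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, throttling, and so on)."""


def call_with_retries(
    fn: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying on TransientError with exponential backoff plus jitter."""
    for attempt in range(attempts):
        try:
            return fn()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            delay = base_delay * (2 ** attempt)
            # Jitter spreads out retries so many clients do not retry in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
    raise ValueError("attempts must be at least 1")
```

The `sleep` parameter is injectable so the policy can be tested without real waiting. Non-transient errors pass straight through, which is usually what you want for bad requests or authorization failures.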

5. Look Closely At Security And Data Boundaries

Handling sensitive data requires strict controls, and gaps in these controls can create long-term risk.

- Experience with PII handling and access controls

- Familiarity with standards like SOC 2 or GDPR compliance

- Clear understanding of data ownership and storage

- Ability to work within secure environments like VPCs or on-prem setups

This is also where AI governance consulting services become relevant, especially when you need clear policies around model usage, auditability, and long-term compliance. Gaps here translate directly into long-term risk.

6. Don’t Ignore Domain Understanding

Without domain context, even strong technical solutions can miss real-world requirements.

- Do they understand how your industry workflows actually run?

- Can they anticipate edge cases based on real usage?

- Do they suggest improvements, or just follow instructions?

You’ll usually see this in the kind of questions they ask.

7. Pay Attention To How They Communicate Early On

Early communication reflects how the partnership will work once development starts.

- Do they ask about data readiness and system dependencies first?

- Are they comfortable saying something may not work as expected?

- Can they explain trade-offs without overpromising?

If everything sounds too simple, it’s worth digging deeper.

Choosing the right partner is not about who can build the most advanced model. It’s about who understands the full system and stays accountable when things get complex. That’s what choosing the right AI outsourcing partner looks like in practice.

If you’re still narrowing down options, it also helps to understand how to hire an AI developer before finalizing your outsourcing partner.

When to Outsource AI vs Build In-House

This is usually where teams pause for a minute. You’re looking at a use case, timelines are tight, and someone asks, “Do we build this ourselves or bring in an external team?” The answer depends less on preference and more on what you’re trying to protect versus how fast you need to move.

| Decision Factor | Build In-House | Outsource AI Development |
|---|---|---|
| Strategic Value | Directly tied to your product edge or IP. Think fraud models, pricing engines, core intelligence layers | Useful, but not your differentiator. Things like chat interfaces, document parsing, or support automation |
| Data Sensitivity | Strict control needed. Regulated data, internal pipelines, or proprietary datasets | Can work with masked, structured, or shared datasets under defined controls |
| Time Pressure | You can afford a longer setup time for hiring, experimentation, and iteration | You need something working sooner, with teams that have done it before |
| Internal Capability | You already have data scientists, ML engineers, and MLOps in place | Skills are limited or spread thin across teams |
| MLOps & Infra Readiness | Pipelines for training, deployment, monitoring, and retraining are already in place | No clear pipeline yet, or systems are still evolving |
| Integration Depth | Deep ties with legacy systems where internal context matters a lot | API-driven or modular integration that an external team can plug into |
| Cost View | Higher upfront investment, more control over time | Lower starting cost, but ongoing external dependency |
| Ownership & Iteration | You want full control over model updates, tuning, and long-term evolution | Comfortable with shared ownership or vendor-led iteration cycles |
| Risk Appetite | Prefer tighter control, even if it slows things down | Willing to move faster with some trade-offs in control |

If it shapes how your product competes, keep it close to you. If it’s slowing execution or has already been solved elsewhere, it’s often smarter to outsource AI development.


Appinventiv Insight

In most enterprise setups, it’s not a binary choice. Teams keep core intelligence in-house while accelerating execution through AI development outsourcing. The ones that get this right define that boundary early, which avoids rework later.

What this looks like across the market:

- 70%+ of enterprises follow a hybrid build + outsource AI approach

- 80% of AI failures are linked to data and integration issues

- 60%+ of organizations face internal AI skill gaps

That early clarity is usually what makes scaling much smoother.

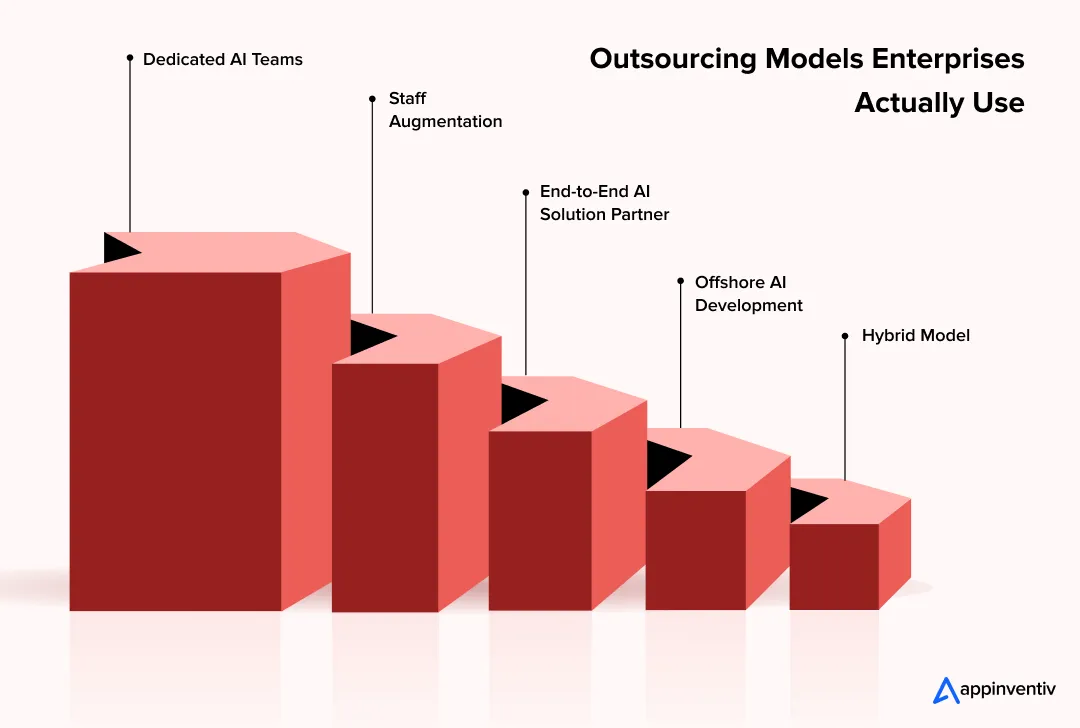

AI Outsourcing Models Enterprises Actually Use

You’ll notice this pretty quickly once you start evaluating vendors. Most discussions jump straight to pricing or team size, but the real question is how the engagement is structured. The model you choose directly affects speed, control, and the amount of ownership your team retains.

There isn’t a single model that fits every use case. Most enterprises mix these depending on the problem they’re solving and on the maturity of their internal setup.

1. Dedicated AI Teams (Extension Of Your Core Team)

This works when you already have a roadmap and some internal direction, but need consistent execution capacity.

- A cross-functional team with ML engineers, data engineers, and sometimes domain specialists works as an extension of your team

- They follow your architecture, tools, and sprint cycles

- Useful when you need to scale development without losing visibility

Where it fits: Ongoing AI initiatives, platform-level work, or when you’re building multiple models over time.

What to watch:

- Alignment on coding standards, data access, and deployment workflows

- Clear ownership between internal and external teams to avoid overlap

2. Staff Augmentation (Plugging Specific Skill Gaps)

This is more targeted. You bring in specific roles rather than a full team.

- Hire for roles like ML engineers, NLP specialists, or MLOps engineers

- They work inside your team and tools from day one

- Helps when your bottleneck is a specific capability, not overall execution

Where it fits: Short-term needs such as model optimization, pipeline setup, or infrastructure scaling.

What to watch:

- Dependency on individuals instead of systems

- Lack of long-term ownership if knowledge transfer isn’t planned

3. End-to-End AI Solution Partner (Outcome-Focused Model)

This is where you hand over responsibility for delivery, not just execution.

- Partner owns data pipelines, model development, deployment, and monitoring

- Typically includes solution architecture, infra setup, and integration

- Works well when internal AI maturity is still evolving

Where it fits: New AI initiatives, first-time implementations, or complex use cases involving multiple systems.

What to watch:

- Clarity on data ownership and model IP

- Transparency in how decisions are made during development

- Avoiding black-box solutions that are hard to maintain later

4. Offshore AI Development (Cost + Scale Play)

This is often used to optimize cost while maintaining delivery speed.

- Teams operate from offshore locations with lower cost structures

- Can be combined with any of the above models

- Useful for scaling data labeling, model training, or backend development

Where it fits: Large-scale data work, repetitive model training cycles, or cost-sensitive projects.

What to watch:

- Communication gaps across time zones

- Quality, consistency and governance

- Need for strong internal coordination


5. Hybrid Model (What Most Enterprises Actually End Up Using)

In reality, most enterprises combine models.

- Core architecture and sensitive components stay in-house

- External teams handle execution-heavy or specialized layers

- Mix of dedicated teams + targeted augmentation + offshore support

Where it fits: Mature setups where multiple AI initiatives are running in parallel.

What to watch:

- Clear boundaries between teams

- Shared visibility into pipelines, models, and deployment status

- Consistent governance across all contributors

The model you choose shapes how fast you move and how much control you keep. There’s no single right answer, but the wrong structure can slow everything down even if the team is strong.

Most enterprises don’t stick to one model. They evolve as their AI maturity grows, often starting with external support and gradually bringing more control in-house where it matters.

The engagement model you choose will either accelerate delivery or create months of rework.

The Real Cost of AI Outsourcing (What Most Teams Miss)

This is where things usually start to feel off midway through a project. The initial quote looks clean. Hourly rates, team size, timelines. Then the work begins, and you realize a large part of the cost sits outside that estimate.

In most enterprise scenarios, AI outsourcing typically ranges between $40,000 and $400,000, depending on system complexity, data readiness, and integration depth. But this is only one part of the overall AI development cost, which often extends beyond the initial scope.

AI Outsourcing Cost Range Breakdown:

| Cost Range | What You’re Building | What’s Typically Included |
|---|---|---|
| $40,000 – $80,000 | Simple AI use cases like chatbots or basic automation | Pre-trained models, light customization, basic APIs, limited scale |
| $80,000 – $200,000 | More functional systems like recommendations or analytics | Model tuning, some data engineering, integration with a few systems, basic MLOps |
| $200,000 – $400,000 | Complex, enterprise-grade AI systems | Custom models, heavy data pipelines, deep integrations, full MLOps, security layers |

What actually drives the cost is everything around the model.

- Data preparation & engineering: Cleaning, structuring, and setting up pipelines. This usually takes longer than expected, and if the data isn’t solid, everything else slows down.

- Model iteration & retraining: Multiple training cycles, tuning, and updates. The first version rarely works perfectly, so iteration adds time and cost.

- Integration & system fit: Connecting APIs, databases, and legacy systems. This is where most friction shows up and timelines start stretching.

- Infrastructure & compute: Cloud, GPUs, storage, and inference. Costs grow as usage scales; this is a recurring expense, not a one-time payment.

- MLOps & monitoring: Tracking performance, handling drift, versioning, and updates. Without this, systems degrade quietly over time.

- Security & compliance: Data protection, access control, and audits. Important for enterprise setups and can slow things down if missed early

- Knowledge transfer: Documentation and onboarding your team. If skipped, you stay dependent on the vendor longer than expected

- Delays & rework: Scope gaps, unclear requirements, early design misses. Small issues here tend to get expensive later

The cost of AI development outsourcing is not just what you agree on upfront. It’s everything required to make the system stable, usable, and scalable over time. Teams that account for this early tend to avoid the usual surprises halfway through the project.

Common Failure Signals in Outsourcing of AI

You usually don’t need to wait until delivery to see issues. They start showing up early, in how the work is planned and discussed.

| What You Notice | What’s Actually Happening | What To Do Instead |
|---|---|---|
| Too much focus on the model | Everyone’s talking accuracy, but not how it fits into real systems | Start with the full system. Map data flow, inputs, and how outputs will actually be used |
| No clarity on data ownership | Teams aren’t sure who handles data, pipelines, or access | Define ownership early. Set clear pipelines and data contracts |
| Timelines look too clean | No room for retraining or real-world testing | Plan for iteration. AI work always needs a few cycles to settle |
| No MLOps plan | No visibility into model performance after deployment | Set up monitoring, versioning, and rollback from the start |
| Integration comes in late | Things break when connecting to real systems | Treat integration as core work. Plan for APIs, retries, and failures early |
| Overpromising on results | Unrealistic timelines or guaranteed outcomes | Tie expectations to data quality and test with real scenarios |
| No pushback from the partner | Everything gets a “yes,” no questions asked | Work with teams that challenge assumptions and flag risks early |

Most of these issues don’t show up suddenly. They build up early. Catching them in time keeps the project on track and avoids costly fixes later.

How to Differentiate Between AI-First Partners vs Traditional Outsourcing Vendors

You’ll notice this difference early, often in the first few conversations. Some vendors talk in terms of features and delivery timelines. Others start with data, workflows, and how the system will behave after deployment. That gap usually comes from how the team is structured and how they approach AI work in business.

| Aspect | AI-First Partners | Traditional Outsourcing Vendors |
|---|---|---|
| Approach To Problem Solving | Start with data, use case clarity, and system behavior before picking models | Start with feature requirements and tech stack, AI added as a layer later |
| Team Structure | Built around ML engineers, data engineers, and MLOps specialists working together | Generalist dev teams with limited AI specialization |
| Data & Pipeline Focus | Strong focus on data pipelines, feature stores, and data quality checks from day one | Assume data is ready or handled separately, leading to gaps later |
| MLOps & Lifecycle | Clear setup for model versioning, monitoring, retraining, and drift handling | Often limited to deployment, with little focus on long-term model performance |
| Integration Thinking | Design for real-world system fit, including APIs, async flows, and failure handling early | Integration is usually addressed later, causing delays and rework |
| Speed Of Execution | Faster cycles due to reusable frameworks and prior AI deployment experience | Slower due to trial-and-error and lack of AI-specific workflows |
| Outcome Ownership | Focus on business impact and system reliability, not just delivery | Focus on feature completion and timelines |
| Scalability Readiness | Built to handle growing data, usage, and continuous model updates | Scaling often requires rework due to initial architecture gaps |

With artificial intelligence outsourcing, the difference is not just technical capability. It’s how early the team thinks about data, systems, and long-term behavior.

That’s usually what separates a partner who helps you scale from one who just helps you ship.

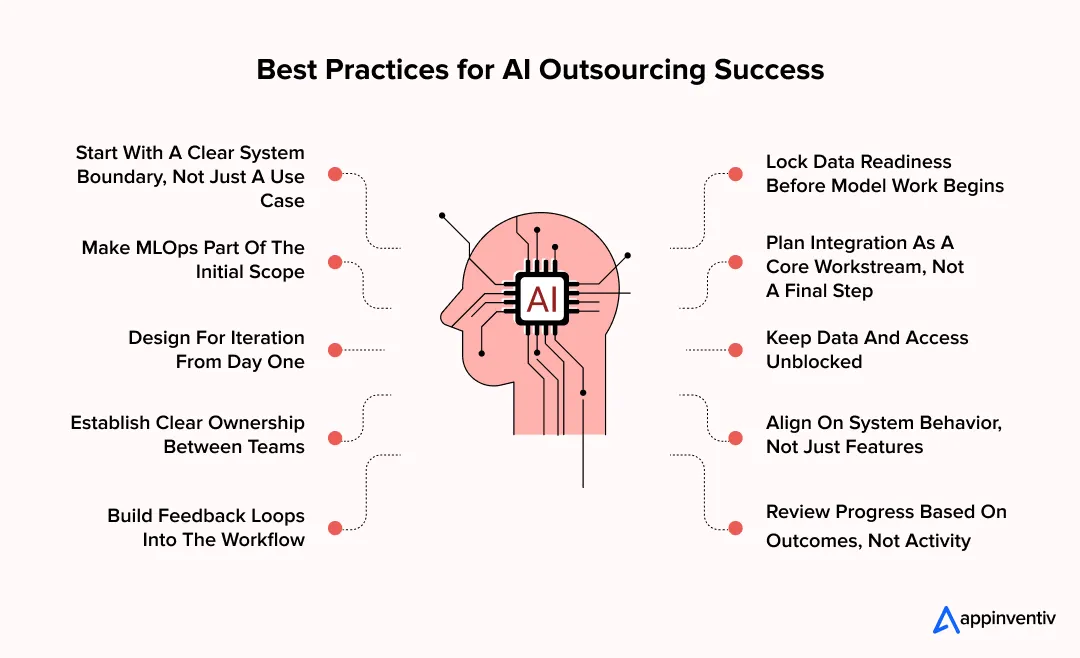

Best Practices for AI Outsourcing Success

Once the partner is chosen, the real work begins. This is where many teams assume things will just fall into place. In reality, how you run the engagement matters as much as who you hire. A few practical habits early on can make the difference between steady progress and constant course correction.

1. Start With A Clear System Boundary, Not Just A Use Case

A use case sounds simple on paper. “Build a recommendation engine” or “automate document processing.” The real complexity lies in how that system interacts with your data, APIs, and workflows.

- Define what sits inside vs outside the AI layer

- Map input sources, transformations, and output consumers

- Identify where human validation or overrides are needed

Without this, teams build in isolation and struggle when it’s time to connect everything.

Many teams start here, treating the first version as an MVP, and this guide on how to build an AI MVP can help you structure it effectively.

2. Lock Data Readiness Before Model Work Begins

This is where many timelines slip. Teams assume data will be usable, and only realize gaps once development starts.

- Audit data quality, completeness, and consistency early

- Define schemas, validation rules, and fallback handling

- Set up data contracts between systems to avoid silent breaks

In most cases, stabilizing data takes longer than building the model itself.

3. Make MLOps Part Of The Initial Scope

This is often pushed to later phases, which creates long-term instability.

- Set up model versioning, monitoring, and alerting early

- Define rollback strategies before deployment

- Track data drift and performance decay continuously

Without this, issues go unnoticed until they start affecting users.
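Versioning and rollback are easier to reason about with a small example. Below is a rough sketch of an in-memory model registry that records which version is live and can step back to the previous one. The class names, fields, and the example URI format are hypothetical; production teams typically use a dedicated registry service rather than anything like this.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class ModelVersion:
    version: str
    artifact_uri: str  # hypothetical location of the trained model artifact
    dataset_hash: str  # ties the model to the exact data it was trained on


@dataclass
class ModelRegistry:
    versions: dict = field(default_factory=dict)  # version string -> ModelVersion
    history: list = field(default_factory=list)   # promotion order, newest last

    def register(self, mv: ModelVersion) -> None:
        if mv.version in self.versions:
            raise ValueError(f"version {mv.version} already registered")
        self.versions[mv.version] = mv

    def promote(self, version: str) -> None:
        """Mark a registered version as the one serving traffic."""
        if version not in self.versions:
            raise KeyError(version)
        self.history.append(version)

    @property
    def live(self) -> Optional[ModelVersion]:
        return self.versions[self.history[-1]] if self.history else None

    def rollback(self) -> ModelVersion:
        """Drop the current live version and return to the one promoted before it."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.versions[self.history[-1]]
```

Storing a dataset hash next to each version is one design choice worth copying: when performance drops, it tells you whether the model or the data changed.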

4. Plan Integration As A Core Workstream, Not A Final Step

Integration is where most delays happen, especially in enterprise setups.

- Identify API dependencies and system constraints early

- Design for async processing, retries, and failure handling

- Test with real system inputs, not just controlled datasets

Systems that aren’t designed for real-world conditions tend to break under load.

5. Design For Iteration From Day One

AI systems don’t stay static. Data changes, patterns shift, and models need updates.

- Plan for retraining cycles based on real signals, not fixed timelines

- Keep feature pipelines flexible for updates

- Avoid tightly coupled systems that make changes difficult

If iteration isn’t built in early, every update becomes a heavy lift later.

6. Keep Data And Access Unblocked

Even strong teams slow down without access.

- Ensure timely access to datasets and systems

- Set up secure environments early

- Avoid manual data handoffs

Smooth data flow directly impacts delivery speed.

7. Establish Clear Ownership Between Teams

Shared responsibility without clarity slows everything down.

- Define who owns data pipelines, models, and deployment layers

- Align on who handles incidents, updates, and monitoring

- Avoid overlapping responsibilities that create confusion

Clear ownership reduces friction and speeds up decision-making.

8. Align On System Behavior, Not Just Features

Features are easy to define. System behavior is where complexity sits.

- Define how the system should behave under edge cases, failures, and scale

- Agree on latency expectations and fallback mechanisms

- Set expectations for how outputs are used by downstream systems

This avoids surprises when the system goes live.
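An agreed latency budget and fallback can be written down as code rather than left in a slide. Here is a minimal sketch, assuming a thread-based wrapper around a hypothetical `model_fn`; the function name, the pool size, and the returned source label are all illustrative choices, not a standard API.

```python
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FutureTimeout

# Shared worker pool; the size here is an arbitrary illustrative choice.
_pool = ThreadPoolExecutor(max_workers=4)


def predict_with_fallback(model_fn, features, timeout_s, fallback):
    """Return (value, source), where source is "model" or "fallback".

    The fallback is used when the model call exceeds the latency budget
    or raises an exception.
    """
    future = _pool.submit(model_fn, features)
    try:
        return future.result(timeout=timeout_s), "model"
    except FutureTimeout:
        # Best effort only: cancel() cannot interrupt a call that is already running.
        future.cancel()
        return fallback, "fallback"
    except Exception:
        return fallback, "fallback"
```

Returning the source alongside the value lets downstream systems and dashboards count how often the fallback path is taken, which is itself a useful health signal.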

9. Build Feedback Loops Into The Workflow

AI systems improve with usage, not just development.

- Capture real user feedback and system outputs early

- Track where predictions fail or need adjustment

- Use this input to guide retraining and feature updates

Without feedback, systems stagnate quickly.
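Tracking where predictions fail does not need heavy tooling to get started. As a rough sketch, the snippet below groups logged feedback by segment and flags the segments whose error rate crosses a threshold. The segment names, the tuple layout, and the default thresholds are assumptions chosen for illustration.

```python
from collections import defaultdict


def summarize(feedback):
    """Aggregate (segment, predicted, actual) tuples into segment -> (misses, total)."""
    stats = defaultdict(lambda: [0, 0])
    for segment, predicted, actual in feedback:
        stats[segment][1] += 1
        if predicted != actual:
            stats[segment][0] += 1
    return {seg: (misses, total) for seg, (misses, total) in stats.items()}


def segments_needing_review(feedback, max_error_rate=0.2, min_samples=3):
    """Flag segments with enough samples and an error rate above the threshold."""
    flagged = []
    for seg, (misses, total) in summarize(feedback).items():
        if total >= min_samples and misses / total > max_error_rate:
            flagged.append(seg)
    return sorted(flagged)


# Illustrative feedback log for a hypothetical document classifier.
log = [
    ("invoices", "paid", "paid"),
    ("invoices", "paid", "paid"),
    ("invoices", "due", "due"),
    ("receipts", "paid", "due"),
    ("receipts", "due", "due"),
    ("receipts", "paid", "due"),
    ("contracts", "due", "paid"),
]
print(segments_needing_review(log))
```

The `min_samples` guard keeps a single bad prediction in a rarely seen segment from triggering a review, so the flagged list stays focused on patterns rather than noise.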

10. Review Progress Based On Outcomes, Not Activity

It’s easy to track tasks. It’s harder to track impact.

- Measure progress using model performance in real scenarios

- Track business-level outcomes, not just delivery milestones

- Revisit priorities based on what’s actually working

This keeps the focus on results, not just output.

Successful AI outsourcing for enterprises is less about process and more about discipline. Teams that stay close to data, system behavior, and outcomes tend to move faster and avoid unnecessary rework.

With Appinventiv, set the right foundation early and avoid delays, rework, and hidden costs.

How Appinventiv Helps Enterprises Build and Scale AI With Confidence

In most enterprise teams, the challenge shows up a little later than expected. A model works, the initial results look promising, and then things slow down when it has to run with real data and real users. That’s where Appinventiv’s AI development services are designed to step in. The focus stays on getting the foundation right early: how data flows, how systems connect, and how the solution behaves once it’s live, not just in testing.

What tends to make the difference is how things are set up from the start. Instead of jumping straight into development, there’s alignment on data readiness, integration points, and ownership across teams. That way, you’re not revisiting core decisions halfway through the project. It also helps keep internal teams in control of what matters most, while still moving quickly on execution.

Here’s how that translates across real projects:

What this really shows is a pattern. When the foundation (data, architecture, and ownership) is set up right early on, AI systems are far more likely to hold up as they scale.

If you’re at that stage where decisions around AI feel high-stakes and timelines are tight, it helps to have a team that’s done this before. The right setup early can save months of rework later. Let’s connect!

FAQs

Q. How to outsource AI development for enterprises?

A. Start with clarity on the problem, your data, and how it connects to your systems. Then outsource AI development in phases, usually beginning with a small pilot before scaling.

Most teams treat this as part of a broader AI outsourcing strategy, keeping core pieces in-house while using external teams to move faster where needed.

Q. Why do enterprises choose to outsource AI development?

A. It’s mostly about speed and access to the right expertise. Building everything internally takes time, especially for hiring and setup.

That’s why many rely on AI consulting and outsourcing together. It helps validate ideas faster, and in some cases, offshore AI development also makes scaling more practical.

Q. How do you choose the right AI outsourcing partner?

A. This is where AI outsourcing partner selection matters. Look beyond portfolios and check if they’ve handled real deployments.

Strong enterprise AI outsourcing companies focus on data, integration, and long-term system behavior. That is what choosing the right outsourcing partner comes down to.

Q. What are the common risks in AI outsourcing?

A. The risks of outsourcing AI development usually come from unclear data ownership, weak integration planning, or missing MLOps.

Many AI implementation outsourcing challenges show up later when systems don’t scale or require rework because early decisions weren’t clear.

Q. How much does it cost to outsource AI development?

A. The AI outsourcing cost depends on complexity. It’s not just development; it also includes data prep, infrastructure, and ongoing updates.

The cost of AI development outsourcing is easier to manage when you work with the right AI development partner for enterprises and plan these layers early.
