- Why Are AI Agents Becoming the Backbone of Modern Cybersecurity?

- What Capabilities and Functions Define a Modern Cybersecurity AI Agent?

- How Should You Architect an AI Security Agent for Enterprise Use?

- What Are the Highest-Value AI Agent Cybersecurity Use Cases in Enterprises?

- How Do AI Agents Integrate Into SecOps and Application Security Workflows?

- How Do You Secure the AI Agents Themselves?

- What Are the Real Challenges and Limitations of AI Agents in Cybersecurity?

- How Do You Build Trust and Ensure Responsible AI Actions in Cybersecurity?

- How Do You Implement AI Agents in Cybersecurity Without Breaking Production?

- What's the Difference Between Traditional Cybersecurity Tools and AI Agents?

- What Does It Cost to Build AI Agents for Cybersecurity?

- How Can Appinventiv Help You Build, Integrate, and Scale AI Agents for Cybersecurity?

- FAQs

Key takeaways:

- AI agents for cybersecurity are moving past triage assistance into autonomous decision-making across SOC, AppSec, and threat intelligence.

- Extensive AI use in security operations saves $1.9M per breach and cuts the breach lifecycle by 80 days (IBM, 2025).

- 97% of organizations hit by an AI-related security incident lacked proper AI access controls.

- The hard part isn’t the model — it’s the architecture, governance, guardrails, and oversight around it.

- This guide reflects 10+ years of building secure, audit-ready systems for healthcare and fintech — what works in production, not in pitch decks.

AI agents make zero-trust workable at scale. Zero-trust sounds great until you realize it requires a validation decision on essentially every request, and no human team can keep up with that. Agents handle the volume (baselining network behavior, flagging deviations, correlating identity and endpoint signals), so your zero-trust architecture isn’t theoretical.

AI agents for threat detection and response typically work in two directions. Inbound: agents pull in unstructured threat feeds, extract IOCs, and turn them into detection rules and hunting hypotheses. Outbound: when you see weird activity in your environment, agents match it against known threat-actor TTPs to figure out who you’re likely dealing with and what they’ll probably try next. That saves your CTI team hours of manual correlation work.

Agents catch multi-step attacks by looking at sequences, not events. A signature-based tool sees one suspicious PowerShell execution and either alerts or doesn’t. An agent looks at that execution alongside the user’s recent login pattern, what process spawned it, what the host has been doing for the past 48 hours, and whether anything similar showed up on adjacent hosts. The individual signals can all look fine. The sequence is what gives the attack away.

Architecture first, code second. The teams that fail at this skip the governance design, pick a flashy use case, and end up with an agent that nobody trusts and the auditor won’t approve. Get the architecture, the policy boundaries, and the audit logging right before you build anything. Then start with one workflow, usually tier-1 triage, run it in shadow mode for a few weeks, and expand authority gradually. Boring works.

The global AI cybersecurity market was valued at $25.35 billion in 2024. By 2030, it’s projected to hit $93.75 billion; that’s a 24.4% CAGR. But the future of this spend isn’t going toward simple detection algorithms. It’s going toward AI agents for cybersecurity.

The goal has shifted. It’s no longer about identifying or predicting alerts faster. It’s about agents that can reason across telemetry, decompose investigations into multi-step plans, take containment actions inside governed boundaries, and close the loop on entire incident classes without a human in the chain.

If you’re a CISO, VP of security engineering, or product leader evaluating AI agent development services, you’re past the awareness stage. You know what agentic AI is. What you need is execution clarity: architectural decisions, integration patterns, governance frameworks, and the operational realities of running these systems in production environments that handle regulated data.

Let’s get into it.

Why Are AI Agents Becoming the Backbone of Modern Cybersecurity?

The math behind enterprise security has stopped working. Organizations face an average of 960 security alerts daily, and enterprises with over 20,000 employees see more than 3,000.

It takes an average of 70 minutes to fully investigate an alert, and 62% of alerts are simply ignored altogether. Meanwhile, ISC2 reports 4.8 million unfilled cyber roles worldwide, with no relief in sight.
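
To make the arithmetic concrete, here is a back-of-the-envelope calculation using only the two averages quoted above. The 8-hour shift is an assumption added for illustration, and the result ignores the fact that many alerts are never investigated at all.

```python
# Figures quoted in the text above.
ALERTS_PER_DAY = 960       # average daily alerts per organization
MINUTES_PER_ALERT = 70     # average time to fully investigate one alert

# Assumption for illustration: one analyst spends a full 8-hour shift investigating.
ANALYST_HOURS_PER_SHIFT = 8


def investigation_hours(alerts_per_day: int, minutes_per_alert: int) -> float:
    """Analyst-hours needed to fully investigate every alert raised in one day."""
    return alerts_per_day * minutes_per_alert / 60


hours_needed = investigation_hours(ALERTS_PER_DAY, MINUTES_PER_ALERT)
analysts_needed = hours_needed / ANALYST_HOURS_PER_SHIFT

print(f"{hours_needed:.0f} analyst-hours per day")      # 1120 analyst-hours per day
print(f"{analysts_needed:.0f} full-time analyst shifts")  # 140 full-time analyst shifts
```

Under these assumptions, investigating every alert would take roughly 140 analyst shifts a day, which is why so many alerts go untouched.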

Traditional rule-based detection cannot bridge this gap. Hiring won’t either. What can — and what we’re seeing actually deliver in our client engagements — is a deliberate shift to AI agents in cybersecurity that can reason over telemetry, execute investigation workflows, and take containment actions at machine speed.

This is the inflection point. The enterprises that are getting it right aren’t bolting AI onto legacy SIEM stacks — they’re rearchitecting around agentic workflows, identity-first security, and continuous validation. That’s the work we want to walk you through.

From our work with regulated enterprises: “AI agents can either make your defenses stronger or create dangerous weak spots. The future of security will depend on how well organizations secure their agents.”

— Chirag Bhardwaj, VP of Technology, Appinventiv

What Capabilities and Functions Define a Modern Cybersecurity AI Agent?

Before we get into architecture, let’s calibrate on what an AI agent actually does in a security context. We see teams conflate “AI agent” with “chatbot with security tools.” That confusion costs months of misaligned engineering. A real cybersecurity AI agent has six core capabilities:

- Risk identification and vulnerability scanning. Agents continuously scan code repositories, container images, IaC templates, and runtime workloads to surface vulnerabilities ranked by exploitability, asset criticality, and lateral movement potential — not just CVSS scores in isolation.

- Telemetry correlation and root cause reasoning. This is where agentic systems clearly outperform legacy SOAR. An agent ingests endpoint, network, identity, and cloud logs, then reasons across them to construct a coherent incident narrative: who did what, when, from where, and what they touched next.

- Dynamic application test execution. AI agents for cybersecurity penetration testing can run dynamic application security testing (DAST) scans, follow application logic, identify auth boundaries, and probe edge cases that signature-based scanners miss.

- Containment and playbook execution. When confidence thresholds are met, agents can execute endpoint actions — isolating hosts, revoking sessions, rotating credentials, blocking IPs — within governance-defined limits.

- Predictive suggestions and threat hunting support. Agents proactively hunt across telemetry for indicators of compromise that haven’t yet generated alerts, often by hypothesis-testing against known TTPs in the MITRE ATT&CK framework.

- Autonomous remediation and reporting. Increasingly, mature agents handle full vulnerability lifecycle work: detection, prioritization, patch validation, change-window scheduling, post-remediation testing, and stakeholder reporting.

The capability that separates production-grade cybersecurity AI agents from demos is the last one — closing the loop without human intervention for well-understood incident classes, while escalating cleanly when ambiguity is high.
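
A minimal sketch of that close-the-loop-or-escalate decision follows. The threshold values, the incident class names, and the `decide` function are all hypothetical; real thresholds would come from measured shadow-mode performance, not from constants like these.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only.
AUTO_REMEDIATE_THRESHOLD = 0.90
ESCALATE_THRESHOLD = 0.50

# Hypothetical set of incident classes considered well understood.
WELL_UNDERSTOOD = {"commodity_phishing", "known_malware"}


@dataclass
class Finding:
    incident_class: str
    confidence: float  # 0.0 to 1.0, as scored by the agent


def decide(finding: Finding) -> str:
    """Close the loop only for well-understood classes at high confidence."""
    if (finding.incident_class in WELL_UNDERSTOOD
            and finding.confidence >= AUTO_REMEDIATE_THRESHOLD):
        return "auto_remediate"
    if finding.confidence >= ESCALATE_THRESHOLD:
        # Ambiguous or novel: hand off to a human with the evidence attached.
        return "escalate_to_analyst"
    return "log_and_monitor"
```

Note that a high-confidence finding in an unfamiliar incident class still escalates: confidence alone never grants autonomy in this sketch.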

How Should You Architect an AI Security Agent for Enterprise Use?

Here’s where most implementations go sideways. Teams pick a model, wire it to a few tools, and call it an agent. Six months later, they’re dealing with prompt injection, runaway costs, audit failures, and a CISO asking why an LLM has admin credentials.

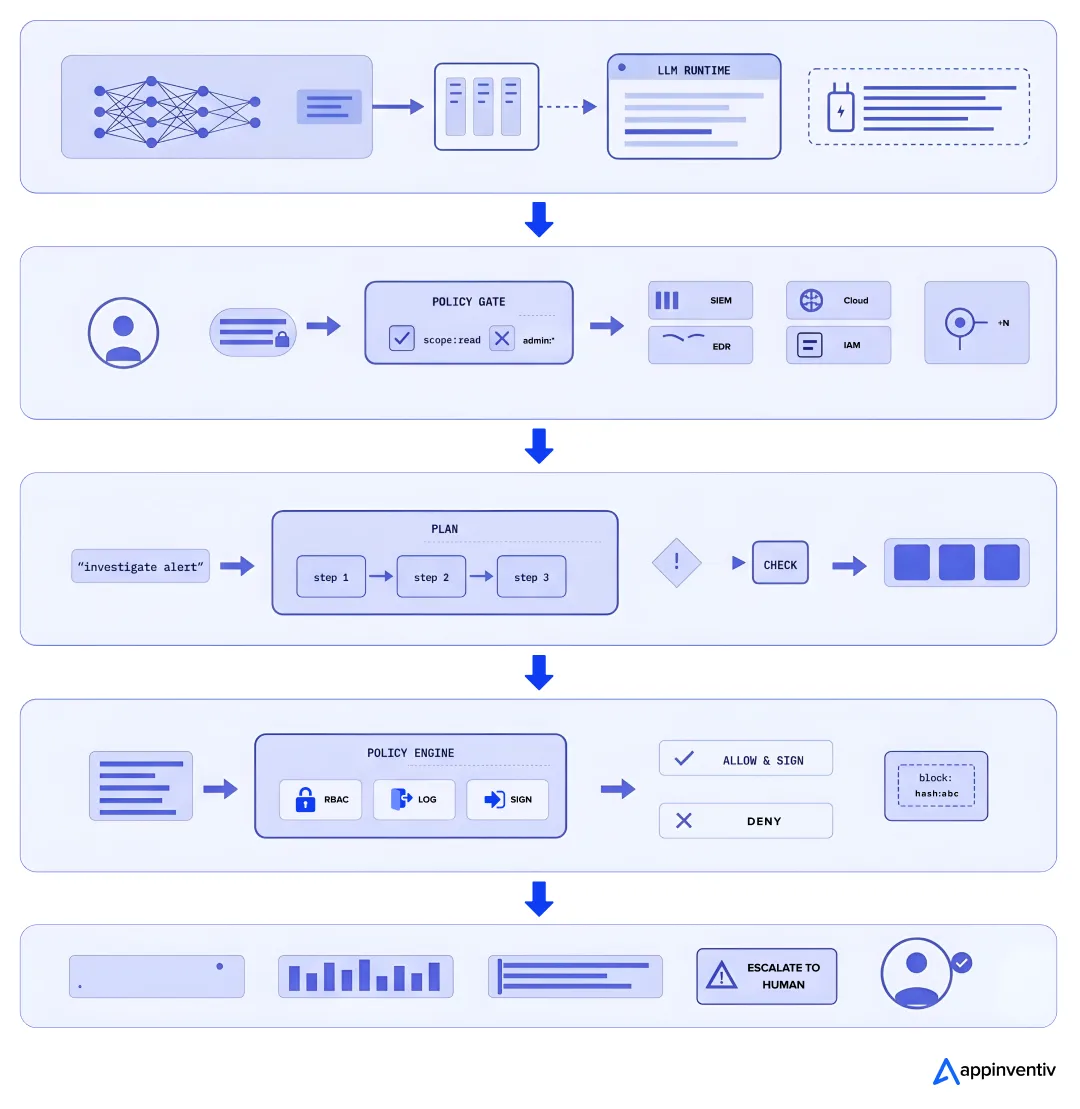

We design an AI security agent architecture around five layers, each with distinct security and governance requirements:

- Layer 1 — Inferencing stack. The model runtime, including any retrieval-augmented generation (RAG) infrastructure feeding context to the agent. This is where you make decisions about model choice, on-prem vs. hosted inference, confidential computing, and protected PCIe paths for sensitive workloads.

- Layer 2 — Tool and API integration layer. Every agent action is mediated through API tokenization and validation. We treat each tool the agent can invoke as a privileged identity with scoped permissions — never blanket admin access. API integration uses signed tokens with short TTLs and contextual binding.

- Layer 3 — Orchestration and reasoning. This is where multi-step planning happens. The orchestrator decomposes goals into tool calls, validates intermediate results, and applies runtime guardrails before any action with side effects executes.

- Layer 4 — Governance and policy. Zero-trust principles applied to the agent itself. The agent has no implicit trust — every action is policy-checked, logged with cryptographic integrity, and constrained by an on-silicon governance layer where supported.

- Layer 5 — Observability and human oversight. Operational monitoring captures every reasoning step, tool call, and decision. Manual intervention paths are first-class — the system is designed assuming humans will need to take over.

The non-negotiable design principle: the agent must always be auditable. Opaque decision-making is unacceptable in regulated environments. We use cryptographic certificate systems to verify the authenticity and integrity of AI components from build through runtime, so a compromised model or injected tool cannot impersonate a trusted agent.
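
As one illustration of the Layer 2 idea of signed tokens with short TTLs and contextual binding, here is a sketch using HMAC from the Python standard library. The token format, the `issue_token` and `verify_token` helpers, and the hard-coded key are assumptions for the example; a production system would more likely use an established token standard and a managed key.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Assumption: demo key only. In practice this would come from a secrets manager.
SECRET_KEY = b"demo-only-secret"


def _sign(payload: bytes) -> bytes:
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest().encode()


def issue_token(agent_id: str, tool: str, target: str,
                ttl_seconds: int = 60, now: Optional[float] = None) -> str:
    """Issue a token bound to one agent, one tool, and one target, with a short TTL."""
    issued_at = time.time() if now is None else now
    claims = {"agent": agent_id, "tool": tool, "target": target,
              "exp": issued_at + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    return f"{payload.decode()}.{_sign(payload).decode()}"


def verify_token(token: str, tool: str, target: str,
                 now: Optional[float] = None) -> bool:
    """Accept only an unexpired, untampered token used for its bound tool and target."""
    payload_b64, sep, signature = token.rpartition(".")
    if not sep or not hmac.compare_digest(signature.encode(), _sign(payload_b64.encode())):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload_b64))
    current = time.time() if now is None else now
    return (claims["exp"] > current
            and claims["tool"] == tool
            and claims["target"] == target)
```

The contextual binding is the important part: a token issued to isolate one workstation cannot be replayed against a different host or a different tool, even before it expires.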

Teams new to this often underestimate the security boundary. According to IBM, 97% of breached organizations that experienced an AI-related security incident say they lacked proper AI access controls. The architecture above is what closes that gap.

To fix this, expert teams providing AI integration services typically begin with an architecture audit before any code gets written — the cost of fixing a flawed agent architecture in production is roughly 10x the cost of getting it right upfront.

What Are the Highest-Value AI Agent Cybersecurity Use Cases in Enterprises?

After ten years of building secure systems for healthcare and fintech, we’ve learned that successful AI agent cybersecurity programs don’t try to do everything at once. They pick two or three workflows where agents clearly outperform existing tooling, prove value, then expand.

These are the agentic AI use cases in cybersecurity that consistently deliver measurable ROI for our enterprise clients:

| Use case | What the agent does | Benefits |
|---|---|---|
| Tier 1 SOC triage | Enriches alerts with identity, asset, and behavioral context. Auto-closes low-fidelity noise. | 60%+ fewer false positives. |
| Autonomous threat hunting | Hypothesizes against MITRE ATT&CK, queries telemetry and surfaces intrusions that didn’t trip a rule. | Catches slow-burning, multi-step attacks. |
| Vulnerability prioritization | Ranks CVEs by exploit availability, asset exposure, and business impact. Maps to SOC 2, HIPAA, PCI DSS and NIST CSF. | Audit-ready evidence as a byproduct. |
| Automated pentesting | Continuous adversary emulation against identity, config, and app logic. Validates detection coverage. | Replaces annual point-in-time tests. |
| Phishing and malware analysis | Sandbox detonation, threat-intel correlation, mailbox retraction and user awareness triggers. | Containment in minutes, not hours. |
| Runtime AppSec | App-API usage analysis. Detects anomalous queries. Adapts to legitimate drift. | No alert storms, no release blockers. |
| Cloud workload monitoring | Agentless AWS/Azure observation. Flags drift, privilege creep, lateral movement. | Multi-cloud visibility without instrumentation. |
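
To illustrate the vulnerability prioritization row, the sketch below ranks findings by exploit availability, asset exposure, and business impact. The additive weights and the `Vulnerability` fields are hypothetical, chosen only to show the shape of the ranking; the identifiers are placeholders, not real CVEs.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Vulnerability:
    vuln_id: str             # placeholder identifier, not a real CVE
    exploit_available: bool  # is a working exploit known to exist?
    internet_exposed: bool   # is the affected asset reachable from outside?
    business_impact: int     # 1 (low) to 5 (critical), set by the asset owner


def priority_score(v: Vulnerability) -> int:
    """Hypothetical additive score; the weights are illustrative assumptions."""
    score = v.business_impact
    if v.exploit_available:
        score += 3
    if v.internet_exposed:
        score += 2
    return score


def rank(vulns: List[Vulnerability]) -> List[Vulnerability]:
    """Highest-priority findings first."""
    return sorted(vulns, key=priority_score, reverse=True)
```

Even with toy weights, the effect is visible: a moderate-impact flaw that is both exploitable and exposed can outrank a high-impact flaw on an isolated asset.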

How Do AI Agents Integrate Into SecOps and Application Security Workflows?

Integration is where most pilots stall. The agent works in isolation but never gets wired into the actual security platform — so it produces insights nobody acts on. Here’s how we approach implementing AI in cybersecurity systems so they survive contact with production operations.

For SecOps integration, the agent becomes a participant in your existing SIEM/SOAR stack rather than a replacement. The integration points typically include:

- Alert ingestion from SIEM via streaming API

- Telemetry queries against the security data lake

- Action execution through SOAR playbooks (with the agent as one possible executor among many)

- Bidirectional case management with the ticketing system

- Cryptographically signed audit logging to a tamper-evident store
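
One common way to make an audit log tamper-evident is hash chaining, where each entry commits to the one before it. The sketch below shows only that chaining step, as an assumption about how such a store might work; it omits the signing mentioned in the last bullet, and the entry layout is hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event whose hash covers both the event and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any earlier event after the fact changes its hash, which no longer matches what later entries recorded, so `verify_chain` returns `False`.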

For incident response, the agent handles intelligent alert triage and enrichment, suggests containment actions, executes approved playbooks, and produces structured incident documentation.

Critical principle: the agent’s authority scope is explicit and bounded. Containment of a workstation? Yes, autonomously. Disabling a privileged user account? Human approval gate. Modifying a firewall rule? Multi-party approval.
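
The three examples above can be sketched as a small policy table. The action names, the `Approval` levels, and the rule that multi-party means at least two distinct approvers are assumptions for illustration; unknown actions default to the strictest gate.

```python
from enum import Enum


class Approval(Enum):
    AUTONOMOUS = 0    # agent may act alone
    HUMAN = 1         # one human approver required
    MULTI_PARTY = 2   # assumption: at least two distinct approvers


# Hypothetical action names mirroring the examples in the text.
POLICY = {
    "isolate_workstation": Approval.AUTONOMOUS,
    "disable_privileged_account": Approval.HUMAN,
    "modify_firewall_rule": Approval.MULTI_PARTY,
}


def required_approvals(action: str) -> int:
    """Actions missing from the policy fall back to the strictest gate."""
    return POLICY.get(action, Approval.MULTI_PARTY).value


def may_execute(action: str, approvers: set) -> bool:
    """True only if enough distinct humans have approved this action."""
    return len(approvers) >= required_approvals(action)
```

Keeping the authority scope in data rather than in prompt text means it can be reviewed, versioned, and audited like any other access-control policy.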

For AppSec integration, agents plug into the SDLC at multiple points:

- IDE-level static analysis and secure coding suggestions

- CI/CD pipeline DAST execution and SAST review

- Pre-deployment threat modeling and policy validation

- Runtime protections and application traffic anomaly detection

- Production incident investigation when AppSec issues are exploited

What makes this work in practice is treating the agent as a system that earns autonomy. New deployments operate in shadow mode for weeks, recommending actions while humans execute. As false-positive rates drop and decision quality is validated, autonomy expands within governed boundaries.

We don’t recommend giving any agent production authority on day one. If you’re in healthcare or fintech, you probably wouldn’t pass an audit if you did.

If you’re early in your enterprise AI cybersecurity implementation journey, the AI agent development services you sign up for should include this graduated rollout pattern as a core deliverable, with explicit go/no-go criteria at each autonomy expansion.


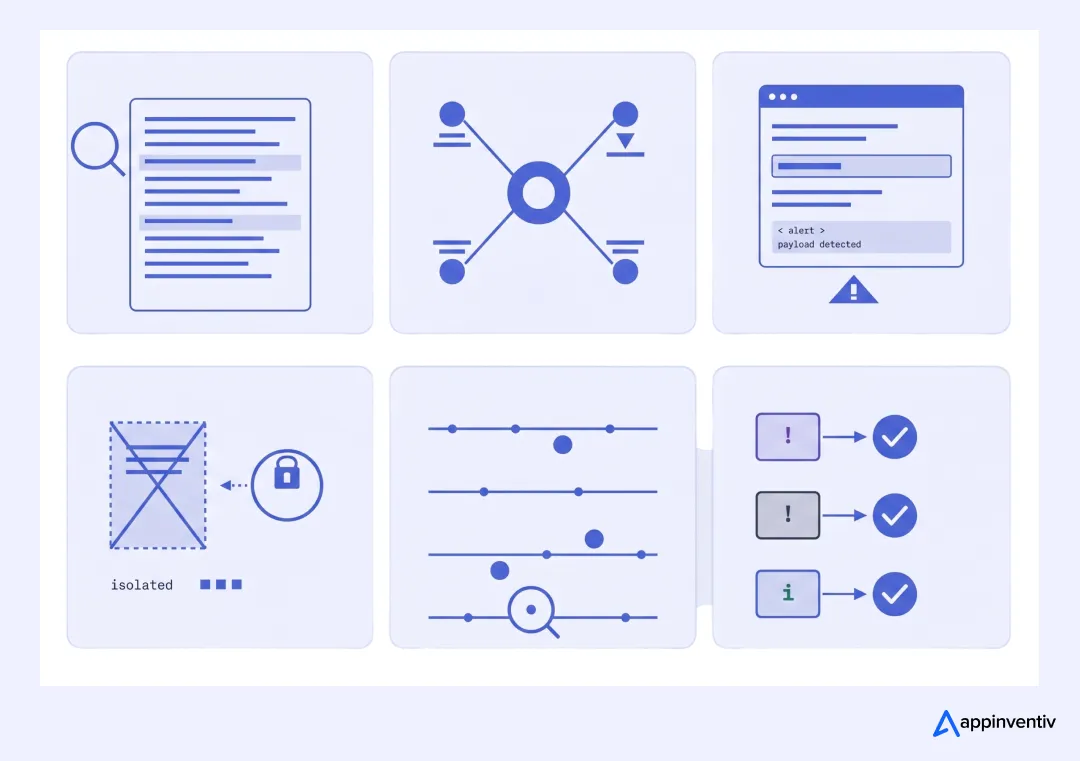

How Do You Secure the AI Agents Themselves?

This is the conversation that’s missing from most vendor pitches. AI agents for cybersecurity hold privileged access, ingest sensitive telemetry, and can take destructive actions. They are themselves a high-value target.

As agents gain access to SIEM, SOAR, EDR, IAM, and cloud telemetry, cybersecurity for AI becomes critical to prevent prompt abuse, unsafe tool calls, data leakage, and unauthorized containment actions.

“The data shows that a gap between AI adoption and oversight already exists, and threat actors are starting to exploit it. As AI becomes more deeply embedded across business operations, AI security must be treated as foundational.”

— Suja Viswesan, VP, Security and Runtime Products, IBM

Here’s the protection model we apply when building agentic systems for clients:

- Identity-first security for agents. Every agent and every sub-agent in a multi-agent system has a unique, attestable identity. Tool access is scoped to that identity with just-in-time elevation. You should never use shared service accounts.

- Runtime guardrails. Before any action with side effects executes, the orchestrator checks it against policy. Destructive actions outside known patterns trigger human approval. Unusual sequences (e.g., the agent suddenly enumerating all user accounts) trigger automatic isolation.

- Continuous monitoring of agent behavior. Just as we monitor users for anomalous activity, we monitor agents. Sudden changes in tool-call patterns, unusual data access, or reasoning chains that don’t match the agent’s typical patterns get flagged for review.

- Visibility into the inferencing stack. We instrument the model runtime itself — token usage, latency patterns, prompt content, output distributions. Many prompt injection attacks become visible at this layer.

- Confidential computing where the stakes are highest. For healthcare and financial workloads, we use NVIDIA Confidential Computing and protected PCIe paths so even infrastructure operators can’t read the data being processed.

- Isolation boundaries. Multi-tenant agent deployments need hard isolation — process, network, and storage. A compromised customer’s agent shouldn’t be able to touch any other customer’s data.

The blast radius of a compromised agent is much larger than that of a compromised user account. Architect accordingly.
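
As a rough illustration of monitoring agent behavior, the sketch below compares an agent's tool calls in one time window against per-tool ceilings. The tool names and baseline numbers are hypothetical, and a real deployment would learn baselines from observed behavior instead of hard-coding them.

```python
from collections import Counter
from typing import List

# Hypothetical per-window ceilings derived from the agent's normal behavior.
BASELINE_MAX_CALLS = {"query_siem": 200, "get_user": 20, "isolate_host": 3}


def anomalous_tools(tool_calls: List[str]) -> List[str]:
    """Return tools called more often than their baseline allows in this window.

    Tools with no baseline at all have a ceiling of zero, so any use is flagged.
    """
    counts = Counter(tool_calls)
    flagged = [tool for tool, n in counts.items()
               if n > BASELINE_MAX_CALLS.get(tool, 0)]
    return sorted(flagged)


def should_isolate_agent(tool_calls: List[str]) -> bool:
    """Any out-of-baseline tool use triggers isolation pending human review."""
    return bool(anomalous_tools(tool_calls))
```

An agent that suddenly issues hundreds of `get_user` calls, the account-enumeration pattern mentioned in the guardrails bullet, would be flagged here.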

What Are the Real Challenges and Limitations of AI Agents in Cybersecurity?

We’re going to be honest about what doesn’t work yet, because the vendor marketing won’t be. These are the constraints we run into across actual enterprise deployments:

| Challenge | What it actually looks like in production | How we mitigate |
|---|---|---|
| Lack of transparency | The agent recommends an action; the SOC manager can’t explain why to the auditor | Every reasoning step is logged with structured explanations of AI recommendations; we use chain-of-thought capture and tool-call provenance |
| Data quality concerns | Garbage telemetry produces garbage agent decisions. Old log schemas, inconsistent identifiers and missing fields | Pre-deployment data quality assessment; schema normalization layer; explicit confidence scoring tied to data completeness |
| False positives and false negatives | Agents miss novel attacks that don’t fit the training distribution; agents flag legitimate admin behavior as threats | Tiered confidence thresholds; human-in-the-loop for edge cases; continuous feedback loops to improve the model |
| Adaptability problems | Models trained on yesterday’s threats degrade against today’s. Attackers actively probe and adapt | Scheduled model updates; continuous adversary simulation to detect drift; ensemble approaches that don’t depend on a single model |
| Alert volume despite AI | Poorly tuned agents create new alert categories on top of existing ones | Alert taxonomy redesign before deployment; agent outputs replace, not supplement, lower-tier alerts |
| Implementation complexity | Six-month projects become two-year programs; integration debt accumulates | Phased rollout with clear value milestones; reuse of standardized integration patterns; preference for boring, audit-friendly tech choices |
| Need for human oversight | “Autonomous” agents require ongoing tuning, validation, and decision review | Design for partnership from the start; staff for AI ops, not just AI engineering |
| Continuous 24/7 monitoring | Off-hours coverage is when attacks happen, and analysts are thinnest | Agents handle off-hours triage with strict containment authority; humans are escalated only for confirmed incidents |
| Response actions outside guardrails | An agent takes the wrong containment action and disrupts business operations | Conservative default authority; expansion of autonomy only after measured validation periods; hard-coded blast-radius limits |
| Threat hunting gaps | Agents are good at known patterns but weak at novel attacker creativity | Hybrid model: agents handle scale; senior threat hunters work the long tail |

Anyone telling you these challenges don’t exist is selling you a demo, not a system. The teams that succeed plan for these realities upfront.

How Do You Build Trust and Ensure Responsible AI Actions in Cybersecurity?

Trust isn’t a marketing claim. It’s an architectural property you can verify. As AI agents take on more autonomous actions in security environments, the trust model has to be explicit and testable.

We design trust into agentic systems through five concrete mechanisms:

- Transparency by default. Every agent decision includes an explanation grounded in evidence — which logs were consulted, which tools were called, what reasoning chain led to the recommendation. Opaque decisions are treated as bugs.

- Verifiable component integrity. A cryptographic certificate system attests to the authenticity and integrity of AI components — the model weights, the prompt templates, the tool definitions and the orchestration logic. If any component is modified outside the change pipeline, the agent refuses to start.

- Zero-trust principles applied internally. The agent doesn’t trust its own tools by default. Every API token is validated. Every response is checked against expected schemas. A subverted tool can’t extend the agent’s authority.

- Human-in-the-loop for high-stakes decisions. We build manual intervention paths as primary, not fallback. Even fully autonomous agents have well-defined “phone home” criteria that bring humans into the loop.

- Operational monitoring and runtime guardrails. Continuous behavioral baselines for the agent itself. Drift triggers an investigation. Unusual reasoning chains trigger safe-mode operation.
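
The refuse-to-start behavior described under verifiable component integrity can be approximated with a digest manifest. This is a simplification under stated assumptions: it checks SHA-256 fingerprints only, and it leaves out the certificate-based signing of the manifest itself that a real system would need. All names are hypothetical.

```python
import hashlib
from typing import Dict, List


def fingerprint(content: bytes) -> str:
    return hashlib.sha256(content).hexdigest()


def build_manifest(components: Dict[str, bytes]) -> Dict[str, str]:
    """Record a digest per component; assumed to run inside the change pipeline."""
    return {name: fingerprint(data) for name, data in components.items()}


def unverified_components(components: Dict[str, bytes],
                          manifest: Dict[str, str]) -> List[str]:
    """Names that are missing, modified, or not listed in the manifest."""
    bad = [name for name, digest in manifest.items()
           if name not in components or fingerprint(components[name]) != digest]
    bad += [name for name in components if name not in manifest]
    return sorted(bad)


def start_agent(components: Dict[str, bytes], manifest: Dict[str, str]) -> str:
    """Refuse to start if any component fails verification."""
    bad = unverified_components(components, manifest)
    if bad:
        raise RuntimeError(f"refusing to start; unverified components: {bad}")
    return "started"
```

A prompt template edited outside the pipeline, or an extra tool definition injected at runtime, both show up as unverified and block startup.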

The on-silicon governance layer is becoming increasingly important for high-assurance environments — embedding policy enforcement at the hardware level so even a compromised orchestrator can’t bypass guardrails. This is where the AI security stack is heading, and it’s where the organizations that take this seriously are already investing.

How Do You Implement AI Agents in Cybersecurity Without Breaking Production?

Here’s the playbook we follow with enterprise clients. It’s intentionally conservative — we’ve seen too many “move fast” pilots create more risk than they prevent.

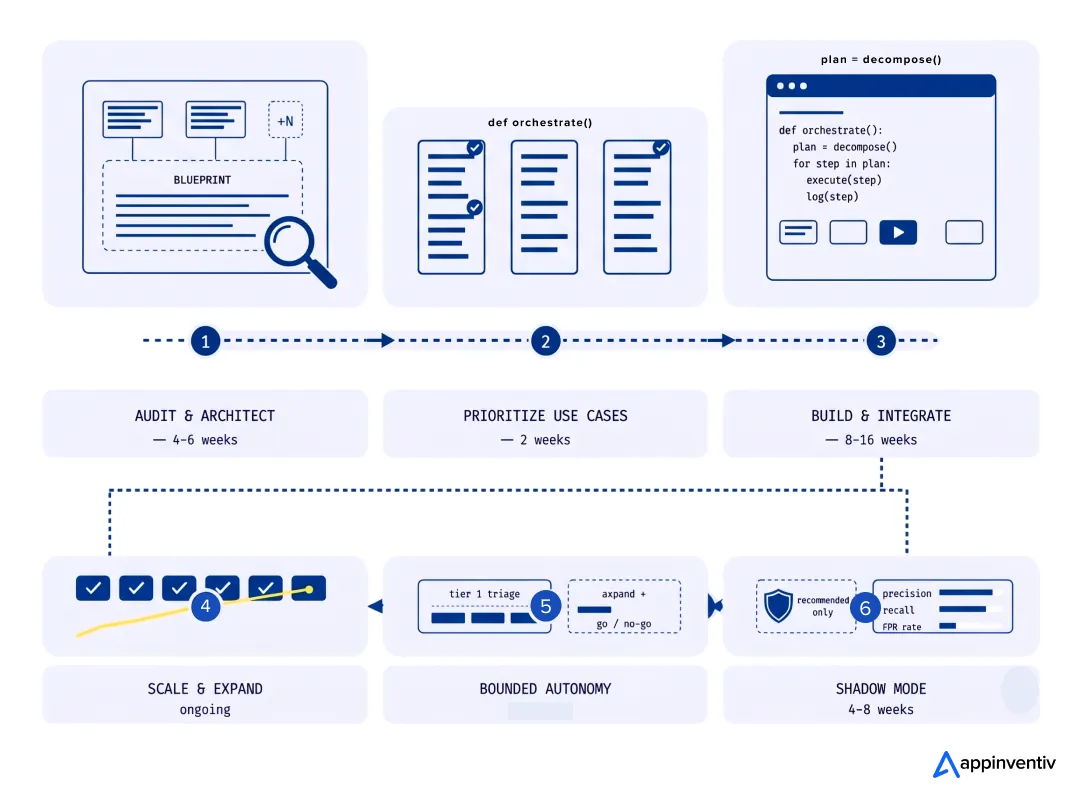

- Phase 1 — Audit and architecture design (4-6 weeks). Before any code, we map the existing security stack, data flows, identity model, and compliance obligations. We design the target architecture, identify integration points, and define the governance model. The output is a blueprint that a security architect can defend in front of an auditor.

- Phase 2 — Use case prioritization (2 weeks). We pick two or three high-value, low-risk workflows for the initial deployment — typically alert triage and enrichment, plus one application or threat hunting use case. The selection criteria are: clear ROI, well-defined success metrics, and limited blast radius if something goes wrong.

- Phase 3 — Build and integrate (8-16 weeks). Engineering work: agent orchestration, tool integrations, governance enforcement, observability, audit logging. We instrument heavily — you can’t tune what you can’t see.

- Phase 4 — Shadow mode (4-8 weeks). The agent runs alongside existing processes, making recommendations without taking action. Analysts compare agent decisions to their own. We measure precision, recall, false-positive rates, and edge-case handling.

- Phase 5 — Bounded autonomy (ongoing). The agent gets execution authority for the highest-confidence action classes. We expand the authority scope incrementally, gated by operational metrics. Each expansion has explicit go/no-go criteria.

- Phase 6 — Scale and expand. Once one workflow is proven, the team’s ready to add the next. By the third or fourth use case, the integration patterns are reusable, and rollout accelerates.
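
The shadow-mode measurements in Phase 4 can be computed from paired verdicts. The sketch below assumes the analyst's verdict is treated as ground truth, which is itself a simplification, and the function name and input shape are hypothetical.

```python
from typing import Dict, Iterable, Tuple


def shadow_mode_metrics(pairs: Iterable[Tuple[bool, bool]]) -> Dict[str, float]:
    """Compare agent verdicts with analyst verdicts on the same alerts.

    Each pair is (agent_flagged, analyst_flagged). The analyst verdict is
    treated as ground truth, which is an assumption of this sketch.
    """
    tp = fp = fn = tn = 0
    for agent_flagged, analyst_flagged in pairs:
        if agent_flagged and analyst_flagged:
            tp += 1
        elif agent_flagged:
            fp += 1
        elif analyst_flagged:
            fn += 1
        else:
            tn += 1
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }
```

Numbers like these are what the go/no-go criteria in Phase 5 would be written against, for example a minimum precision before the agent is allowed to act on an action class by itself.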

The single most important thing to get right: don’t deploy agents into broken processes. If your existing SOC has chronic data quality issues, undefined escalation paths, or unclear ownership, AI-powered SOC automation, especially in the form of an agent, will amplify those problems, not solve them. Fix the operational fundamentals first, then add automation.

What’s the Difference Between Traditional Cybersecurity Tools and AI Agents?

Decision-makers ask this constantly, so let’s make it concrete:

| Dimension | Traditional Tools (SIEM/SOAR/EDR) | AI Agents for Cybersecurity |
|---|---|---|
| Detection logic | Signature-based, rule-based | Behavioral, contextual, reasoning-based |
| Response model | Pre-defined playbooks | Goal-directed planning with dynamic execution |
| Scaling pattern | Linear with analyst headcount | Sub-linear; one agent handles thousands of alerts |
| Adaptability | Manual rule updates | Continuous learning from outcomes |
| Investigation depth | Surface-level alert details | Multi-step root cause reasoning |
| False positive handling | Tuning by humans | Self-correction with feedback loops |
| Threat hunting | Hypothesis-driven by analysts | Continuous, automated hypothesis testing |
| Decision explainability | High (deterministic rules) | Requires explicit design (we make it high) |
| Cost structure | License + analyst time | License + inference + ops engineering |
| Compliance posture | Established, well-understood | Emerging; needs explicit governance design |

The honest answer: AI agents don’t replace traditional tools — they replace the human work of interpreting and acting on those tools’ output. The SIEM still produces telemetry. The EDR still generates alerts. What changes is who decides what to do with them, and how fast.

What Does It Cost to Build AI Agents for Cybersecurity?

Pricing in this space is all over the map because scope variability is enormous. Here’s our framework for grounding cost discussions with clients:

- Discovery and architecture (typical range: $40K–$120K). Audit, design, governance framework, integration mapping. This is non-negotiable for regulated industries.

- MVP build for a single use case (typical range: $150K–$400K). One workflow — usually SOC triage — with full integration, governance, and observability. Three to four months of engineering.

- Production-grade enterprise platform (typical range: $500K–$2M+). Multiple use cases, multi-cloud, full compliance documentation, multi-agent orchestration, custom model fine-tuning, and on-prem inference where required. Six to fifteen months.

- Ongoing operations (typical range: $80K–$400K/year). Inference costs, model updates, tuning, expansion and ongoing security work on the agents themselves.

The biggest cost driver isn’t the model — it’s the integration surface. An organization with consolidated security tooling spends a fraction of what one with dozens of point solutions does. Plan accordingly.

What we’ve seen pay back fastest: SOC tier-1 augmentation, where the productivity gains materialize in months. Compliance automation comes second — not because it’s flashier, but because audit prep cost reductions are measurable line items.

How Can Appinventiv Help You Build, Integrate, and Scale AI Agents for Cybersecurity?


We’ve spent the last decade building secure software for clients in healthcare, fintech, and other compliance-heavy industries. With a team of 350+ fintech professionals and a track record of delivering more than 500 custom fintech solutions globally, we know what audit-ready software actually requires — because we’ve been building it.

Our approach to building AI agents for cybersecurity is shaped by that experience. We don’t build demos. We build systems that pass HIPAA, SOC 2, PCI DSS, and GDPR audits, run reliably in production, and survive contact with adversaries.

What clients work with us on:

- End-to-end AI agent development services — from architecture and model selection through production deployment and long-term operations.

- AI integration services — wiring agentic capabilities into existing SIEM, SOAR, EDR, IAM, and ticketing stacks without rip-and-replace.

- Governance and compliance frameworks — agent-specific policy design, audit logging, and regulatory mapping for U.S. and global frameworks.

- AppSec automation — agents integrated into CI/CD for continuous security testing, vulnerability prioritization, and runtime protection.

- Industry-specific deployments — purpose-built solutions for healthcare, fintech, and other regulated environments where generic AI tools fall short.

Real client outcomes: For KPMG, we built an AI-powered data query bot that converts plain-language questions into SQL, retrieves live data, and visualizes results in context — enabling teams to make decisions in seconds instead of hours.

The Economic Times has named us “The Leader in AI Product Engineering & Digital Transformation,” and we’ve earned consecutive Deloitte Tech Fast 50 awards in 2023 and 2024. But what matters more for security work is the depth of our compliance experience: we work with clients under HIPAA, GDPR, PCI DSS, SOC 2, SAMA, and VARA frameworks every day.

If you’re past evaluation and ready to build, our team can typically have a working architecture proposal in two to three weeks. The right place to start is a focused conversation about your current stack, your top use cases, and your compliance constraints — then we’ll tell you honestly whether agentic AI is the right next move, and what the realistic path looks like.

FAQs

Q. How are AI agents used in cybersecurity?

A. Mostly for the work nobody on the team wants to do at 2 a.m. — triaging alerts, enriching them with identity and asset context, hunting for indicators that didn’t trip a rule, prioritizing vulnerabilities, running the first 80% of an incident investigation, and handling the cleanup work after a phishing wave.

The agents that earn their keep are the ones doing high-volume, well-defined work and handing off cleanly when something’s ambiguous.

Q. What are the benefits of using AI agents for cybersecurity?

A. IBM’s 2025 numbers tell the financial story pretty bluntly: $1.9M saved per breach, breach lifecycle shortened by 80 days. But honestly, the bigger benefit our clients talk about is analyst retention.

When your tier-1 people stop spending eight hours a shift closing the same false positives, they stick around. They get to do actual security work. That’s harder to put in a slide, but it’s why these projects survive past the first leadership change.

Q. What is the difference between traditional cybersecurity tools and AI agents?

A. Your SIEM tells you what happened. Your SOAR runs a playbook if it matches one. An agent figures out what to do when neither of those is enough. Traditional tools are deterministic and scale with the number of analysts you have to interpret them.

Agents handle the interpretation and action layer, which is where the human bottleneck has always been. You still need the SIEM and the EDR — they generate the telemetry that the agent reasons over.

Q. How long does it take to build and deploy AI agents for cybersecurity?

A. For a single use case like SOC triage, figure three to four months to get to shadow mode, then another month or two of bounded autonomy before you’d call it production. A full enterprise rollout across multiple workflows is more like six to fifteen months.

The biggest variable is how messy your existing tool stack is. Clients with consolidated tooling move fast. Clients with 30+ point solutions and inconsistent log schemas spend the first three months just cleaning up data plumbing.
