- How Do You Hire Machine Learning Engineers for Production AI Systems?

- How Machine Learning Engineers Help Move AI Prototypes Into Production

- What Is the Difference Between Machine Learning Engineers, Data Scientists and AI Engineers?

- What Skills Should You Look for When Hiring Machine Learning Engineers?

- How Many Machine Learning Engineers Do You Need?

- Where Can Enterprises Hire Machine Learning Engineers?

- What Does It Cost to Hire Machine Learning Engineers?

- How Appinventiv Helps Enterprises Build Production AI Systems

- FAQs

Key Takeaways

- Most AI projects fail after the demo. The model works, but the system around it does not.

- Data scientists build the model. ML engineers make sure it survives real traffic.

- Scaling AI means fixing pipelines, monitoring drift, and automating retraining, not just improving accuracy.

- As AI matures, teams must expand. Production systems need dedicated ML and MLOps ownership.

- AI is not a one-time launch. It is a lifecycle that needs continuous oversight.

Most AI initiatives do not start as enterprise-scale programs. They begin with a focused experiment. A data scientist trains a model, validates it on historical data, and demonstrates measurable gains. Leadership sees potential, and the conversation quickly moves from “Does this work?” to “How do we deploy this?”

That transition is where complexity shows up. A model that performs well in a notebook does not automatically survive production traffic. Live data behaves differently. Security controls, integration layers, and scaling demands add pressure. What looked manageable in isolation often expands into a larger engineering effort involving pipelines, deployment systems, and lifecycle controls.

Industry research reflects this pattern. Gartner estimates that by 2026, nearly 60% of AI initiatives will fail to move beyond pilot stages, largely due to gaps in operational maturity, data readiness, and engineering depth. The issue is rarely model accuracy. It is production execution.

Once deployment becomes real, the skill requirements change. Building a model and running an AI system inside enterprise infrastructure are not the same task. This is typically when organizations begin planning to hire machine learning engineers or hire ML developers who understand both ML fundamentals and production systems.

At this stage, many companies start evaluating machine learning engineers for hire across internal, remote, and partner-led models. Selecting the right expertise becomes a strategic decision. It is no longer about experimentation. It is about building systems that keep working once AI becomes part of core operations.

McKinsey reports that companies that scale AI across functions see up to 3× more ROI than those stuck in experimentation.

How Do You Hire Machine Learning Engineers for Production AI Systems?

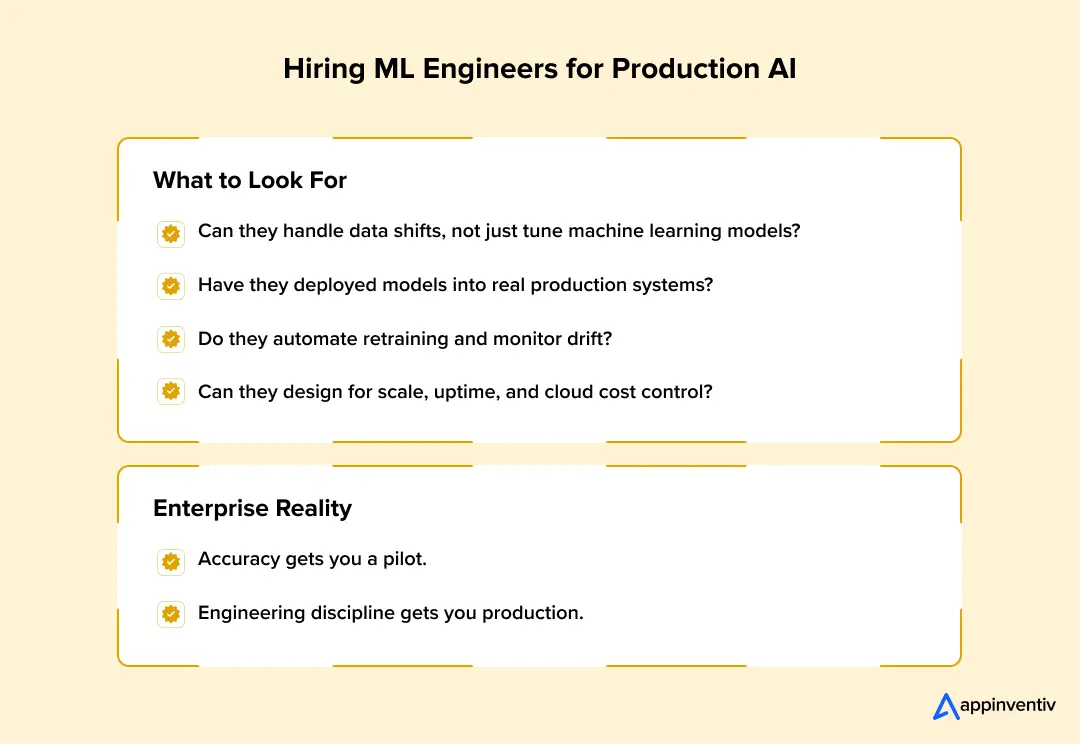

If the role supports live AI systems, the interview must test for live-system experience. Accuracy metrics are easy to discuss. Keeping a model stable under real traffic is not.

When enterprises hire machine learning engineers, the evaluation should mirror the environment the model will operate in. Production AI is part of a larger system. It interacts with APIs, databases, queues, cloud services, and monitoring tools. The engineer must understand that full picture.

Below is a practical way to structure the hiring process.

Step 1: Define the Production Reality

Before screening candidates, clarify your system constraints.

- Is inference real-time or batch-based?

- What are your latency targets?

- How often will retraining occur?

- Do you need audit logs for compliance?

Without this clarity, it becomes difficult to judge whether a candidate’s experience is relevant.

Step 2: Probe End-to-End Pipeline Experience

Ask candidates to walk through a real production workflow they have built.

You want to hear about:

- automated data ingestion pipelines

- orchestration tools used to schedule training jobs

- artifact storage and model versioning practices

- validation checks before deployment

If their experience stops at “we trained the model and handed it to DevOps,” that is a signal. Production ML requires ownership beyond experimentation.
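The "validation checks before deployment" item can be pictured as a promotion gate that compares a candidate model against the version already in production. The following is a minimal sketch under stated assumptions: the `EvalReport` schema, the metric names, and the thresholds are hypothetical and would come from your own SLAs.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EvalReport:
    """Metrics for one model version on a fixed holdout set (hypothetical schema)."""
    version: str
    auc: float
    p95_latency_ms: float


def promotion_blockers(candidate: EvalReport, production: EvalReport,
                       max_auc_drop: float = 0.005,
                       latency_budget_ms: float = 50.0) -> List[str]:
    """Return the reasons a candidate must not be deployed (empty list = pass)."""
    blockers = []
    if candidate.auc < production.auc - max_auc_drop:
        blockers.append(
            f"AUC {candidate.auc:.3f} is more than {max_auc_drop} below "
            f"production ({production.auc:.3f})")
    if candidate.p95_latency_ms > latency_budget_ms:
        blockers.append(
            f"p95 latency {candidate.p95_latency_ms:.0f} ms exceeds the "
            f"{latency_budget_ms:.0f} ms budget")
    return blockers


# Illustrative values only.
prod = EvalReport("v12", auc=0.871, p95_latency_ms=38.0)
good = EvalReport("v13", auc=0.874, p95_latency_ms=41.0)
slow = EvalReport("v14", auc=0.880, p95_latency_ms=72.0)

for report in (good, slow):
    problems = promotion_blockers(report, prod)
    print(report.version, "PASS" if not problems else problems)
```

A candidate who has built something like this can usually explain which checks ran automatically in their pipeline and what happened when one failed.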

Step 3: Assess Deployment Maturity

Production ML systems do not run directly from notebooks.

Strong candidates should be comfortable discussing:

- containerizing models using Docker

- deploying services through Kubernetes

- scaling inference endpoints based on load

- handling rolling updates and rollback scenarios

For example, in high-traffic environments, teams often use canary deployments to release new model versions gradually. Engineers who have managed this type of rollout usually understand production risk.

This is especially important when hiring ML engineers for production AI systems that support revenue-critical workflows.
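To make the canary idea concrete, here is a small sketch of the two decisions involved: which requests go to the new model, and whether to widen or roll back the rollout. The rollout schedule, the error-rate tolerance, and the function names are assumptions for illustration. In practice this logic usually lives in a service mesh or deployment tool rather than in application code.

```python
import hashlib


def routes_to_canary(request_id: str, canary_percent: int) -> bool:
    """Deterministically assign a request to the canary model.

    Hashing the ID (instead of random sampling) keeps a given user or
    request on the same model version for the whole rollout stage.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return bucket < canary_percent


def next_stage(canary_percent: int, canary_error_rate: float,
               baseline_error_rate: float, tolerance: float = 0.01) -> int:
    """Widen the canary if it is healthy, otherwise roll back to 0%."""
    if canary_error_rate > baseline_error_rate + tolerance:
        return 0  # rollback: all traffic returns to the stable model
    stages = [1, 5, 25, 50, 100]  # hypothetical rollout schedule
    for stage in stages:
        if stage > canary_percent:
            return stage
    return 100


share = sum(routes_to_canary(f"req-{i}", 10) for i in range(10_000)) / 10_000
print(f"share of traffic on canary at 10%: {share:.3f}")
print("healthy canary at 5% moves to:", next_stage(5, 0.020, 0.020))
print("failing canary at 5% moves to:", next_stage(5, 0.100, 0.020))
```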

Step 4: Validate Monitoring and Drift Awareness

Many AI systems degrade quietly.

Ask how they have monitored:

- prediction latency

- input feature distributions

- model accuracy over time

- data drift or concept drift

Look for answers that mention automated alerts and retraining triggers, not just dashboards. Production models require continuous oversight.
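One common way to watch input feature distributions is the Population Stability Index (PSI), which compares a live sample of a feature against its training-time distribution. The sketch below is a plain-Python illustration, not a production implementation. The bin count, the floor used to avoid dividing by zero, and the 0.25 alert level (a widely used rule of thumb) are assumptions you would tune per feature, and both samples are assumed to be non-empty.

```python
import math
from typing import List, Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               bins: int = 10) -> float:
    """PSI between a training-time sample and a live sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for a constant feature

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


training = [i / 100 for i in range(100)]            # roughly uniform on [0, 1)
live_same = list(training)                          # no drift
live_shifted = [0.5 + i / 200 for i in range(100)]  # mass moved to the upper half

ALERT_LEVEL = 0.25  # assumed threshold; a common rule of thumb
for name, sample in (("same", live_same), ("shifted", live_shifted)):
    psi = population_stability_index(training, sample)
    print(name, "ALERT" if psi > ALERT_LEVEL else "ok")
```

A strong candidate can explain what should happen after the alert fires: who is paged, whether retraining starts automatically, and how a false alarm is ruled out.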

Step 5: Evaluate Cost and Scalability Thinking

Cloud infrastructure introduces another layer of responsibility.

Experienced ML engineers understand:

- how to size instances appropriately

- when to use batch inference instead of real-time endpoints

- how autoscaling policies affect cost

- how GPU workloads differ from CPU workloads

Poor infrastructure decisions can inflate monthly cloud bills quickly. Engineers who have optimized workloads bring both technical and financial discipline.
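The batch versus real-time trade-off is easier to discuss with rough arithmetic. The sketch below compares an always-on endpoint with a scheduled batch job. Every figure in it, including the hourly rate, instance counts, and run length, is a hypothetical placeholder and not a quote from any cloud provider.

```python
HOURS_PER_MONTH = 730  # average hours in a month


def always_on_monthly_cost(instances: int, hourly_rate: float) -> float:
    """Cost of a real-time endpoint that keeps instances running all month."""
    return instances * hourly_rate * HOURS_PER_MONTH


def batch_monthly_cost(instances: int, hourly_rate: float,
                       hours_per_run: float, runs_per_month: int) -> float:
    """Cost of a scheduled batch job that only pays while it runs."""
    return instances * hourly_rate * hours_per_run * runs_per_month


# Hypothetical figures for illustration only; real prices vary by provider.
GPU_RATE = 1.20  # assumed USD per instance-hour
realtime = always_on_monthly_cost(instances=2, hourly_rate=GPU_RATE)
nightly = batch_monthly_cost(instances=4, hourly_rate=GPU_RATE,
                             hours_per_run=1.5, runs_per_month=30)
print(f"real-time: ${realtime:,.0f}/month, nightly batch: ${nightly:,.0f}/month")
```

The point of the exercise is not the exact numbers. It is whether the candidate asks if the use case actually needs predictions within milliseconds before committing to always-on capacity.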

At this stage, some enterprises also evaluate whether they need to hire machine learning operations engineers separately to ensure long-term system reliability beyond initial deployment.

Enterprise Hiring Evaluation Matrix (Interview Questions to Identify Strong ML Engineers)

| Evaluation Area | What to Ask the Candidate | What a Strong Production Answer Includes | Red Flag Indicators |
|---|---|---|---|
| End-to-End ML Pipeline | “Walk me through your full ML workflow from raw data to deployment.” | Automated data ingestion, preprocessing, orchestrated training, artifact storage, reproducibility | Only discusses model training in notebooks |
| Model Deployment | “How did you deploy and update models in production?” | Dockerized models, Kubernetes deployment, autoscaling, blue-green or canary rollout strategies | Manual deployment, no version control or rollback plan |
| Monitoring & Drift Detection | “How did you monitor model performance after deployment?” | Latency tracking, data drift detection, performance thresholds, alert-based retraining triggers | No monitoring beyond basic accuracy checks |
| MLOps & Versioning | “How do you manage model and dataset versions?” | MLflow or similar tracking, versioned artifacts, reproducible training runs | No structured experiment tracking |
| Scalability & Performance | “How did you handle scaling under load?” | Autoscaling policies, request batching, resource profiling, SLA alignment | No experience with traffic spikes or scaling constraints |
| Cloud & Infrastructure | “What cloud environments have you used for ML workloads?” | Managed ML platforms, distributed training, cost optimization strategies | Only local or single-instance deployments |
| Failure Handling & Reliability | “What happens if a model endpoint fails?” | Health checks, fallback logic, rollback procedures, logging and alerting systems | No contingency planning for failures |
| Compliance & Traceability | “How do you ensure audit readiness?” | Version-controlled datasets, metadata tracking, reproducible pipelines | No documentation or audit awareness |

Hiring for production AI is less about finding someone who understands algorithms deeply and more about finding someone who has managed real systems under pressure.

Enterprises that align interviews with architecture, lifecycle ownership, and operational maturity significantly reduce surprises after deployment.

How Machine Learning Engineers Help Move AI Prototypes Into Production

A surprising number of AI initiatives start strong and still stall before reaching production. Inside research environments, models often look impressive. Data scientists train them in notebooks, evaluate them on historical datasets, and demonstrate high accuracy during internal reviews. On paper, the system appears ready.

The reality changes once the model needs to operate inside real software systems.

Several practical issues usually surface at this stage:

- The model exists only in notebooks and cannot run inside production services

- No automated pipeline exists to move data from ingestion to training and deployment

- Data pipelines are unreliable or inconsistent across systems

- There is no mechanism to track model drift once data patterns change

- ML workflows depend on manual steps instead of automated MLOps pipelines

Most of the time when scaling an AI initiative, the model is not the real issue. The breakdown happens around it. Data does not flow the way it should. Deployment is improvised, and monitoring is missing. What looked solid in testing starts wobbling once real users are involved.

That is usually the moment leadership understands the gap. It is not about improving accuracy anymore. It is about bringing in people who know how to run machine learning inside production systems. Teams that decide to hire ML engineers for enterprise AI projects are often reacting to this shift.

In many cases, companies move to hire dedicated machine learning engineers who can take ownership of the operational side. These engineers set up repeatable training pipelines, containerize models, connect them to live data feeds, and put monitoring in place so performance does not quietly degrade.

Once that layer is built properly, the difference is noticeable. The prototype stops feeling like a lab experiment and starts behaving like real software that the business can rely on.

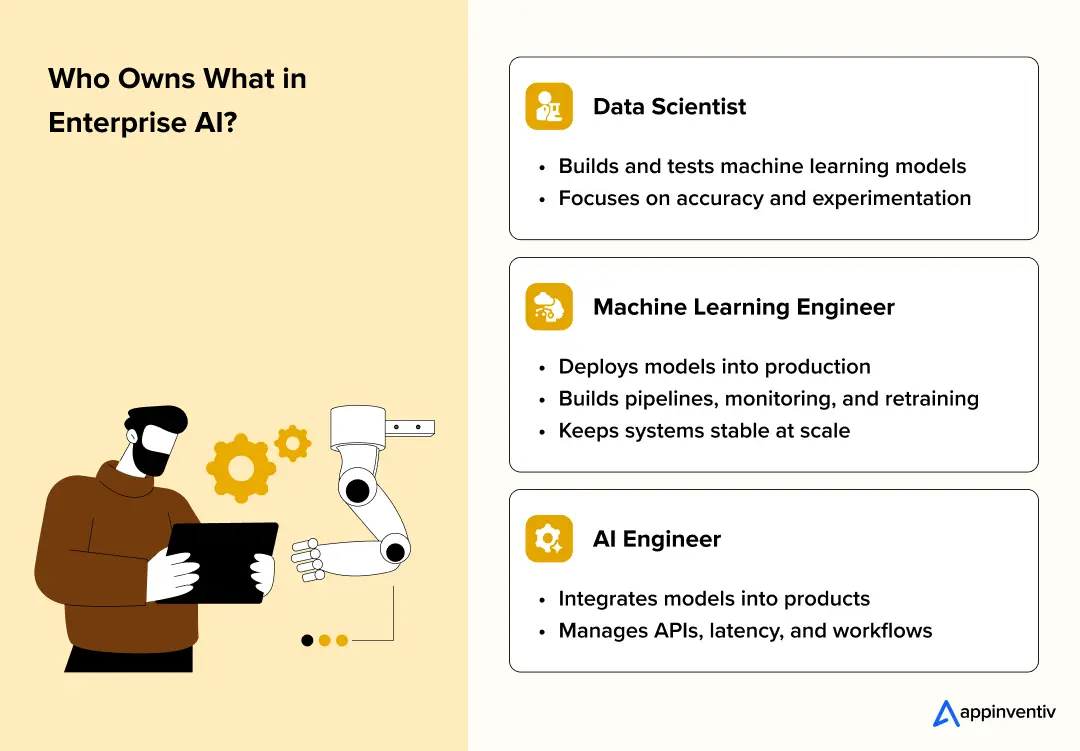

What Is the Difference Between Machine Learning Engineers, Data Scientists and AI Engineers?

Short answer: data scientists prove a model works. Machine learning engineers make sure it keeps working in production. AI engineers wire that intelligence into real products.

That difference sounds simple. In practice, it is where most AI initiatives either mature or stall.

When a company first experiments with AI, the center of gravity sits with data science. Teams clean datasets, test algorithms, and push accuracy from 78% to 84%. The work is exploratory. Code lives in notebooks, training runs happen on sampled data and failures are cheap.

But in production, the rules change.

Once that same model needs to serve predictions inside a live system, everything around it becomes more important than the model itself. Latency targets appear. Uptime requirements matter. Data pipelines cannot break silently. This is typically the point where organizations start to hire ML developers.

Here is how the roles break down in real-world enterprise environments.

| Role | Primary Focus | Typical Work | Impact on Production |
|---|---|---|---|
| Data Scientist | Model experimentation | Data analysis, feature engineering, model training and evaluation | Validates business use case |
| Machine Learning Engineer | Production ML systems | Training pipelines, deployment infrastructure, monitoring, scaling | Keeps models reliable at scale |
| AI Engineer | Product integration | APIs, microservices integration, inference optimization | Turns models into usable product features |

Data Scientists: Optimize Model Performance

Data scientists operate closest to the modeling layer. Their core job is to answer a question: Can this problem be solved with machine learning?

Their workflow often includes:

- building training datasets from raw logs or transactional systems

- creating features using domain logic

- experimenting with architectures in PyTorch or TensorFlow

- tuning hyperparameters

- evaluating metrics such as precision, recall, ROC-AUC

The environment is flexible. Training might run on a local GPU, a shared notebook server, or a managed environment like SageMaker. Data is often static snapshots pulled from warehouses.

This phase is essential. But it is still controlled.

A model that works on a clean dataset in a notebook is not automatically ready for distributed inference across millions of user sessions.

Machine Learning Engineers: Engineer the System Around the Model

Machine learning engineers operate at the intersection of software engineering, distributed systems, and ML.

When enterprises hire machine learning engineers, they are usually solving for one or more of these production realities:

1. Automated training pipelines: Instead of manually retraining models, ML engineers design workflows using tools like Airflow or Kubeflow. These pipelines handle data ingestion, preprocessing, training, validation, and artifact storage automatically.

2. Feature consistency: In production, feature mismatch between training and inference can quietly degrade performance. Engineers implement feature stores so both environments read from the same transformation logic.

3. Scalable inference architecture: Models are packaged into containers and deployed through orchestration systems like Kubernetes. Inference services may run behind load balancers, scale horizontally based on traffic, and expose REST or gRPC endpoints.

4. Observability and monitoring: Production ML systems track metrics beyond accuracy. Latency, throughput, prediction distribution shifts, and data drift all need monitoring. Engineers integrate logging, metrics dashboards, and alerting systems to detect anomalies early.

5. Model lifecycle management: Version control extends beyond code. Each model version must be traceable to training data, hyperparameters, and evaluation metrics. Tools like MLflow help manage this lifecycle.

This is the layer that lets ML engineers scale AI models and deliver measurable business impact. Without it, a promising model may degrade quietly or fail under load.
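The feature consistency point in the list above is often the least intuitive, so a small sketch may help. The idea is that training and serving call the same transformation function instead of maintaining two copies of the logic. The feature names, the raw record fields, and the toy model here are hypothetical. A feature store applies the same principle at larger scale.

```python
import math
from typing import Callable, Dict, List

RawRecord = Dict[str, float]
Features = Dict[str, float]


def build_features(raw: RawRecord) -> Features:
    """Single source of truth for feature logic, shared by training and serving."""
    return {
        "log_amount": math.log1p(raw["amount"]),
        "is_night": 1.0 if raw["hour"] < 6 or raw["hour"] >= 22 else 0.0,
    }


def training_rows(history: List[RawRecord]) -> List[Features]:
    """Offline path: transform historical records into training rows."""
    return [build_features(record) for record in history]


def serve(raw_request: RawRecord, model: Callable[[Features], float]) -> float:
    """Online path: the live request goes through the same function."""
    return model(build_features(raw_request))


def toy_model(features: Features) -> float:
    """Stand-in for a trained model (hypothetical weights)."""
    return 0.1 * features["log_amount"] + 0.5 * features["is_night"]


record = {"amount": 250.0, "hour": 23.0}
offline = training_rows([record])[0]
online_score = serve(record, toy_model)
print(offline, round(online_score, 3))
```

Because both paths import `build_features`, a change to the night-time rule cannot reach training without also reaching inference.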


AI Engineers: Connect Intelligence to Applications

AI engineers focus on embedding machine learning into software products.

They work on:

- integrating inference endpoints into backend microservices architectures

- optimizing request latency for real-time use cases

- managing authentication and security between services

- ensuring AI outputs align with application workflows

For example, integrating a fraud detection ML model into a payments system requires careful handling of response times and fallback logic. A recommendation engine must integrate seamlessly with catalog services and user profiles.

AI engineers ensure the product layer can safely and efficiently consume ML outputs.
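The fraud detection example above mentions response times and fallback logic. One way this can look in application code is a hard time budget around the model call, with a conservative rule used when the model is slow or fails. This is a sketch under assumptions: the 50 ms budget, the rule threshold, and all function names are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Tuple

_pool = ThreadPoolExecutor(max_workers=4)


def rule_based_decision(txn: Dict[str, float]) -> str:
    """Hypothetical conservative rule used when the model is unavailable."""
    return "review" if txn["amount"] > 1000 else "approve"


def decide(txn: Dict[str, float],
           model_decision: Callable[[Dict[str, float]], str],
           timeout_s: float = 0.05) -> Tuple[str, str]:
    """Return (decision, source); fall back if the model is slow or raises."""
    future = _pool.submit(model_decision, txn)
    try:
        return future.result(timeout=timeout_s), "model"
    except Exception:  # covers timeouts and errors raised by the model call
        future.cancel()  # only has an effect if the call has not started yet
        return rule_based_decision(txn), "fallback"


def slow_model(txn: Dict[str, float]) -> str:
    time.sleep(0.2)  # simulates a stalled inference service
    return "approve"


def fast_model(txn: Dict[str, float]) -> str:
    return "approve"


print(decide({"amount": 5000.0}, slow_model))
print(decide({"amount": 20.0}, fast_model, timeout_s=1.0))
```

Returning the source alongside the decision lets monitoring track how often the fallback path is used, which is itself a useful health signal.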

As systems grow more complex, enterprises often hire AI and ML engineers with clearly defined ownership boundaries to avoid blurred responsibilities between research, deployment, and product integration.

Where Enterprises Invest When Scaling AI

As AI initiatives grow, the bottleneck rarely sits inside the model architecture. It usually sits in the system design.

Data updates need automation. Retraining must be predictable. Inference must scale without degrading performance. Governance and compliance requirements must be met.

That is why companies building enterprise-grade AI systems eventually expand their enterprise ML engineering team. Data scientists answer whether the model works. Machine learning engineers ensure the entire system keeps working under real-world conditions.

What Skills Should You Look for When Hiring Machine Learning Engineers?

If your AI system is expected to run in production, you need engineers who understand failure modes, not just model metrics. Accuracy on a validation set is one thing. Stability under live traffic is another. That distinction should guide how you hire ML developers.

In enterprise environments, strong ML engineers usually combine four capabilities: applied ML depth, production-grade engineering discipline, lifecycle ownership, and cloud systems awareness. The difference shows up when something breaks at scale.

1. Applied Machine Learning Depth

An ML engineer does not need to invent new architectures, but they must understand how models behave when data shifts or inference patterns change.

In production systems, issues often appear as subtle degradation. Prediction confidence may drift. Class imbalance may increase. Feature distributions may no longer resemble the training dataset.

Engineers who handle this well can:

- design feature pipelines that eliminate training and inference mismatch

- retrain and fine-tune models, including LLMs, using frameworks such as PyTorch or TensorFlow

- analyze performance drops caused by skewed data or concept drift

- optimize inference through batching, quantization, or architecture adjustments

For example, in a real-time recommendation system, naive inference calls can overload GPU instances. An engineer who understands request batching and model serving frameworks can significantly reduce compute pressure without impacting accuracy.
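As a rough illustration of request batching, the sketch below groups inputs so the model is invoked once per batch instead of once per request. It shows only the grouping step. Real model serving frameworks typically also apply a short time window to collect concurrent requests, which is not modeled here. The batch size and the stand-in model are assumptions.

```python
from typing import Callable, List, Sequence


def predict_in_batches(inputs: Sequence[float],
                       predict_batch: Callable[[Sequence[float]], List[float]],
                       max_batch_size: int = 32) -> List[float]:
    """Run inference in chunks so the model is called once per batch."""
    outputs: List[float] = []
    for start in range(0, len(inputs), max_batch_size):
        batch = inputs[start:start + max_batch_size]
        outputs.extend(predict_batch(batch))
    return outputs


calls: List[int] = []  # batch sizes seen by the stand-in model


def fake_model(batch: Sequence[float]) -> List[float]:
    """Stand-in for a real model: records the batch size, doubles each input."""
    calls.append(len(batch))
    return [2 * x for x in batch]


results = predict_in_batches(list(range(100)), fake_model, max_batch_size=32)
print(f"{len(results)} predictions from {len(calls)} model calls")
```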

This level of judgment becomes critical when you hire ML engineers for enterprise AI systems where downtime or instability directly affects revenue.

2. Production Engineering Discipline

Once deployed, a model behaves like a backend service. It must respond consistently, scale predictably, and degrade safely if dependencies fail.

ML engineers therefore need a solid grounding in software engineering principles and the AI tech stack.

Typical responsibilities include:

- exposing models through well-structured APIs using frameworks such as FastAPI

- containerizing environments with Docker to ensure runtime consistency

- deploying services through Kubernetes with defined autoscaling rules

- designing inference architectures that align with SLA requirements

In a payments or fraud detection context, latency requirements may be measured in milliseconds. With predictive analytics in the supply chain, workloads may run in scheduled batch windows across distributed compute nodes. The architectural decisions differ, and the engineer must design accordingly.

The ability to reason about trade-offs between performance, cost, and reliability is what separates experimental systems from production platforms.

3. Lifecycle and MLOps Ownership

Many AI initiatives underperform not because the initial model was weak, but because its lifecycle was unmanaged.

Data evolves, user behavior shifts, and external conditions change. Without structured retraining and monitoring, even well-performing models degrade over time.

ML engineers typically build systems that include:

- automated training and retraining pipelines orchestrated through workflow tools

- version control for model artifacts, datasets, and configuration metadata

- monitoring dashboards that track prediction distributions and drift signals

- threshold-based retraining triggers when performance drops below acceptable levels

In mature enterprise environments, models are treated like continuously evolving assets rather than static deployments. Engineers must ensure traceability and reproducibility, especially in regulated sectors.
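A threshold-based retraining trigger, as listed above, can be sketched as a rolling accuracy check over recent labeled outcomes. The class name, window size, and 90% accuracy floor below are hypothetical. In a real pipeline the `True` result would start an orchestrated training job rather than print a message.

```python
from collections import deque


class RetrainTrigger:
    """Signals retraining when rolling accuracy falls below a floor."""

    def __init__(self, min_accuracy: float = 0.90, window: int = 200,
                 min_samples: int = 50) -> None:
        self.min_accuracy = min_accuracy
        self.min_samples = min_samples
        self.outcomes = deque(maxlen=window)  # keeps only the latest outcomes

    def record(self, prediction_was_correct: bool) -> bool:
        """Store one labeled outcome; return True if retraining is due."""
        self.outcomes.append(1 if prediction_was_correct else 0)
        if len(self.outcomes) < self.min_samples:
            return False  # not enough evidence yet
        accuracy = sum(self.outcomes) / len(self.outcomes)
        return accuracy < self.min_accuracy


trigger = RetrainTrigger(min_accuracy=0.90, window=100, min_samples=20)
healthy = [trigger.record(True) for _ in range(100)]    # model performing well
degraded = [trigger.record(False) for _ in range(11)]   # errors start arriving
if degraded[-1]:
    print("rolling accuracy below 90%: schedule retraining")
```

The minimum sample count matters as much as the threshold. Without it, a handful of early errors would trigger retraining on noise.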

This operational discipline is often the core reason organizations expand their ML engineering capability. This is also where companies begin hiring MLOps engineers or engage ML engineers for MLOps implementation to formalize automation, retraining governance, and monitoring standards.

4. Cloud and Distributed Systems Awareness

Enterprise AI rarely runs on isolated infrastructure. Training workloads may require distributed GPU clusters. Inference services may need horizontal scaling to handle fluctuating demand.

ML engineers should understand:

- managed ML environments such as AWS SageMaker, Azure Machine Learning, or Vertex AI

- cloud cost optimization strategies for GPU-intensive workloads

- autoscaling and resource allocation policies for inference services

- secure integration with enterprise data platforms

Poor infrastructure decisions can inflate cloud costs quickly. Engineers who understand workload profiling and capacity planning help maintain both performance and budget control.


What This Means for Enterprise Hiring

When enterprises set out to hire ML developers, focusing only on modeling experience is rarely sufficient. Production AI depends on system design, lifecycle control, and infrastructure discipline.

The strongest ML engineers think beyond training code. They design pipelines, enforce monitoring standards, and anticipate failure scenarios. In enterprise environments, that systems mindset is what keeps AI initiatives stable long after the initial deployment.

How Many Machine Learning Engineers Do You Need?

For organizations building an ML engineering team, headcount planning should align with system complexity, model volume, and infrastructure maturity rather than short-term experimentation needs.

Here is how team structure typically evolves.

Early AI Stage

At the pilot stage, teams are small.

Typical structure:

- 1 ML engineer

- 1 Data scientist

The data scientist focuses on experimentation and model validation. The ML engineer handles basic pipeline setup and deployment. Infrastructure is usually simple, retraining may be manual, and traffic volume is moderate.

At this stage, a separate MLOps role is rarely required. Lifecycle processes are still lightweight.

Scaling Stage

Once models start supporting real workflows or multiple business units, complexity increases.

Typical structure:

- 2 to 4 ML engineers

- 1 data engineer

- 1 MLOps engineer

ML engineers focus on model training, deployment, and performance optimization. Data engineers manage ingestion pipelines and feature preparation. This is usually the point where companies introduce a dedicated MLOps engineer.

A dedicated MLOps role becomes necessary when:

- retraining must be automated

- multiple models are deployed simultaneously

- monitoring and drift detection are required

- cloud cost and scaling policies need guardrails

Without MLOps ownership, scaling often leads to fragmented pipelines and unstable deployments.

Enterprise AI Teams

When AI becomes embedded across products or revenue-critical systems, team size expands significantly.

Typical structure: 10 or more specialists across ML engineering, MLOps, data engineering, and AI architecture

Enterprise AI teams operate multiple models, distributed infrastructure, and automated lifecycle systems. Governance, reproducibility, security, and performance optimization all require specialized ownership.

At this level, MLOps is not optional. It becomes a core function responsible for maintaining stability across training pipelines, deployment infrastructure, monitoring systems, and compliance requirements.

The transition point for dedicated MLOps usually appears when AI moves from experimentation to operational dependency. Once business outcomes rely on model outputs, lifecycle management requires clear ownership and structured engineering discipline.


Where Can Enterprises Hire Machine Learning Engineers?

The hiring model you choose will directly influence how safely and quickly AI reaches production. In enterprise settings, this is not just about talent. It is about delivery risk, compliance exposure, and system stability.

When organizations decide to hire ML developers, they are usually solving one of two problems: lack of production expertise or lack of scalable infrastructure.

Enterprises evaluating options often compare internal recruitment with external machine learning engineer hiring services to accelerate access to production-ready expertise.

There are three common routes.

1. In-House Hiring

Building internally gives long-term control. Engineers grow deep knowledge of internal data systems, security policies, and architecture constraints. Over time, that context becomes valuable.

The trade-off is time and cost. Production-ready ML engineers are rare. You are hiring for distributed systems, container orchestration, MLOps automation, monitoring, and cloud optimization, not just model training. Recruitment cycles are long, and onboarding into complex enterprise stacks takes months.

For companies building a permanent enterprise ML engineering team, this path works. It just demands sustained investment.

2. Remote ML Engineers

Many enterprises now hire remote machine learning engineers to access broader talent pools. This often speeds up hiring and reduces salary pressure.

The challenge is integration. Production AI touches DevOps, data engineering, backend systems, and governance teams. Without tight coordination, remote engineers can fix components but miss system-level alignment. In regulated industries, fragmented ownership can create audit risks.

3. Enterprise Machine Learning Development Partner

When AI affects core operations such as fraud detection, forecasting, or personalization, production risk becomes expensive.

This is where an enterprise machine learning development partner offers a different value. Instead of hiring isolated engineers, enterprises gain structured ML teams, MLOps specialists, cloud architects, and governance frameworks aligned from the start.

The advantage is maturity. This approach is often preferred by organizations that want to hire top machine learning engineers without navigating extended recruitment cycles or fragmented vendor coordination.

Architecture reviews happen early. Monitoring and retraining pipelines are designed upfront. Infrastructure decisions consider scale, security, and cost control together.

For enterprises under pressure to move from pilot to production without disrupting existing systems, this approach reduces uncertainty while accelerating deployment.

Choosing how to hire machine learning engineers is ultimately a risk decision. In enterprise AI, stability and execution certainty often matter more than simply filling roles.


What Does It Cost to Hire Machine Learning Engineers?

Straight answer: it depends on what you are trying to run. A proof of concept costs one thing. A production AI system tied to revenue, compliance, and uptime guarantees costs something very different.

When enterprises decide to hire ML developers, the real question is not salary. It is scope.

In-House Hiring

If you build internally, the cost is ongoing.

A mid to senior ML engineer with production experience is not inexpensive. Add recruitment time, onboarding, infrastructure provisioning, DevOps support, and retention overhead, and the annual investment rises quickly.

More importantly, hiring takes time. Enterprise AI roadmaps often move faster than recruitment cycles. A three-month hiring delay can push production deployment out by a quarter.

For organizations building a long-term enterprise ML engineering team, this investment makes sense. It just requires planning and patience.

Remote Hiring

When companies hire remote machine learning engineers, compensation can be optimized through global talent access. This often reduces direct salary pressure.

However, infrastructure, tooling, security controls, and cloud usage still remain enterprise-level costs. Remote hiring does not automatically reduce architectural complexity.

If system design is inefficient, cloud bills will reflect that regardless of geography.

Project-Based Production AI Investment

For enterprises scaling from prototype to full production, budgeting usually aligns with system complexity.

A production-ready AI system often includes:

- automated data ingestion pipelines

- model training and retraining workflows

- containerized deployment infrastructure

- monitoring and drift detection systems

- integration with existing enterprise platforms

In most enterprise scenarios, end-to-end AI development costs typically fall between $40,000 and $400,000, depending on scale.

Lower budgets are common when:

- a single model is being deployed

- integration requirements are limited

- traffic volume is moderate

Higher budgets appear when:

- multiple models must operate together

- real-time inference is required

- distributed cloud infrastructure is involved

- regulatory compliance adds governance layers

The variation comes from integration depth, infrastructure resilience, and lifecycle automation, not just model complexity.

The Hidden Cost: Rebuilding

One cost leaders often overlook is rework.

Many AI systems launch without structured retraining, monitoring, or autoscaling policies. Within months, performance drops or latency increases. Engineering teams return to redesign pipelines or restructure deployment environments.

In enterprise settings, rebuilding a poorly designed system often costs more than building it correctly the first time.


How Enterprises Should Think About Budget

When planning to hire ML engineers for enterprise AI, leadership should evaluate:

- expected traffic and scaling needs

- retraining frequency

- integration with legacy systems

- compliance obligations

- cloud cost governance

Production AI is not a one-time build. It is an evolving system. Budgeting for infrastructure discipline and lifecycle management from the beginning reduces downstream risk and protects long-term ROI.

Enterprises reviewing these variables frequently rely on a structured hiring guide or an internal hiring checklist for machine learning teams to align hiring decisions with long-term infrastructure strategy.


How Appinventiv Helps Enterprises Build Production AI Systems

Moving from a working model to a stable production system is where many enterprise AI programs slow down. The challenge is rarely about model accuracy. It is about integrating AI into existing systems without disrupting operations, compliance, or performance. At Appinventiv, we approach production AI as an engineering problem first. Data pipelines, deployment architecture, monitoring layers, and retraining workflows are designed before scale begins. This reduces instability later.

Our machine learning development services are structured around real production demands. With 300+ AI-powered solutions delivered, 200+ data scientists and AI engineers onboard, and 150+ custom AI models trained and deployed, we have supported enterprise AI across aviation, fintech, retail, and healthcare. That experience helps us anticipate issues around traffic spikes, model drift, cloud cost control, and governance before they surface.

In high-scale environments such as the Flynas Airline App, AI capabilities must perform under real user load, not controlled test data. That requires stable inference infrastructure, rollout control, observability, and lifecycle management. Our teams work across architecture, MLOps, and backend integration to ensure AI systems operate reliably inside production ecosystems.

If your organization is planning to scale AI from pilot to enterprise-wide deployment, our ML engineering specialists can assess your current setup and outline a practical path to production stability.

FAQs

Q. What skills should a machine learning engineer have for production AI?

A. A production-ready ML engineer understands automated training pipelines, containerized deployment, monitoring, and retraining workflows. They are comfortable managing drift, latency, and infrastructure costs. Real-world system exposure matters more than experimentation alone.
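
To make "managing drift" concrete, here is a minimal sketch of one common drift check, the Population Stability Index (PSI), which compares how a single feature is distributed in training data versus live traffic. It is an illustration only: the function name `population_stability_index`, the `bins` parameter, and the sample data are hypothetical, and the 0.2 alert level in the usage note is a rule-of-thumb assumption rather than a fixed standard.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a training-time sample and a live sample of one feature."""
    lo, hi = min(expected), max(expected)
    # Equal-width buckets over the training range; fall back to 1.0 if the range is empty.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))  # clamp live values outside the training range
            counts[idx] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: the live feature has shifted upward by 5 units.
training_sample = [i / 100 for i in range(1000)]
live_sample = [x + 5 for x in training_sample]
psi_same = population_stability_index(training_sample, training_sample)
psi_shifted = population_stability_index(training_sample, live_sample)
```

In a setup like this, a monitoring job might recompute the PSI per feature on a schedule and open a retraining review when the value crosses an agreed level such as 0.2. The right threshold and bucket count depend on the model and the data.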

Q. How do enterprises hire ML engineers to scale AI systems?

A. Enterprises begin by defining inference needs, compliance scope, and retraining frequency. Hiring decisions should reflect deployment complexity, not just model sophistication. Production experience under operational load is a key differentiator.

Q. What is the difference between ML engineers and data scientists?

A. Data scientists focus on model discovery, experimentation, and accuracy improvement. ML engineers focus on deployment, reliability, and system scalability. One proves the model works. The other ensures it keeps working.

Q. What roles are required to move AI from prototype to production?

A. Production AI typically requires data scientists, ML engineers, MLOps engineers, and data engineers. Each role owns a specific layer of the lifecycle. Clear separation prevents bottlenecks and governance gaps.

Q. How does Appinventiv help enterprises build ML engineering teams?

A. We approach AI as an operational system, not just a modeling exercise. Our teams structure pipelines, deployment infrastructure, monitoring standards, and retraining workflows from the outset. The focus is long-term production stability.

Q. What common interview questions should you ask when hiring an ML engineer for production AI?

A. Here’s how enterprise teams usually structure ML interviews:

- Model choices: Ask which models they used in real projects and why. Probe trade-offs and post-deployment outcomes.

- Data handling: Understand how they cleaned and stabilized messy, real-world data before training.

- Deployment: Have them explain how they moved models to production and handled failures or monitoring.

- Coding: Test system thinking and debugging, not obscure syntax.

- System design: Evaluate how they design APIs, manage scaling, and anticipate failure scenarios.

- Problem-solving: Present a production issue, such as latency spikes, and assess their investigation approach.

- Business impact: Ask what measurable results their work delivered.

- Communication: Ensure they can explain complex decisions clearly to non-technical stakeholders.

- Seniority check: For senior roles, assess end-to-end architecture thinking and lifecycle ownership.

A strong hiring process blends technical depth with practical judgment under real operational pressure.
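
For the problem-solving prompt above, a candidate might start by locating when tail latency actually degraded. The sketch below is one possible first step, not a prescribed method: it computes a nearest-rank 95th percentile per time window and flags windows that exceed a multiple of a baseline. The names `p95`, `spiking_windows`, `baseline_ms`, and `factor`, along with the sample numbers, are all hypothetical.

```python
import math
from typing import List, Sequence


def p95(latencies_ms: Sequence[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty list of latencies."""
    ordered = sorted(latencies_ms)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def spiking_windows(
    windows: Sequence[Sequence[float]], baseline_ms: float, factor: float = 2.0
) -> List[int]:
    """Indexes of windows whose p95 latency exceeds factor * baseline_ms."""
    return [
        i
        for i, window in enumerate(windows)
        if window and p95(window) > factor * baseline_ms  # skip empty windows
    ]


# Hypothetical per-minute latency samples in milliseconds.
windows = [
    [100.0] * 20,
    [100.0] * 18 + [900.0, 950.0],  # a few slow requests dominate the tail
    [110.0] * 20,
]
flagged = spiking_windows(windows, baseline_ms=120.0)
```

Here only the second window is flagged, even though most of its requests are as fast as the others. That is the point to listen for in an interview: averages hide tail behavior, so a strong candidate usually reasons in percentiles before looking at deployments, traffic mix, or resource limits around the flagged window.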
