- How Agentic Coding Systems Work

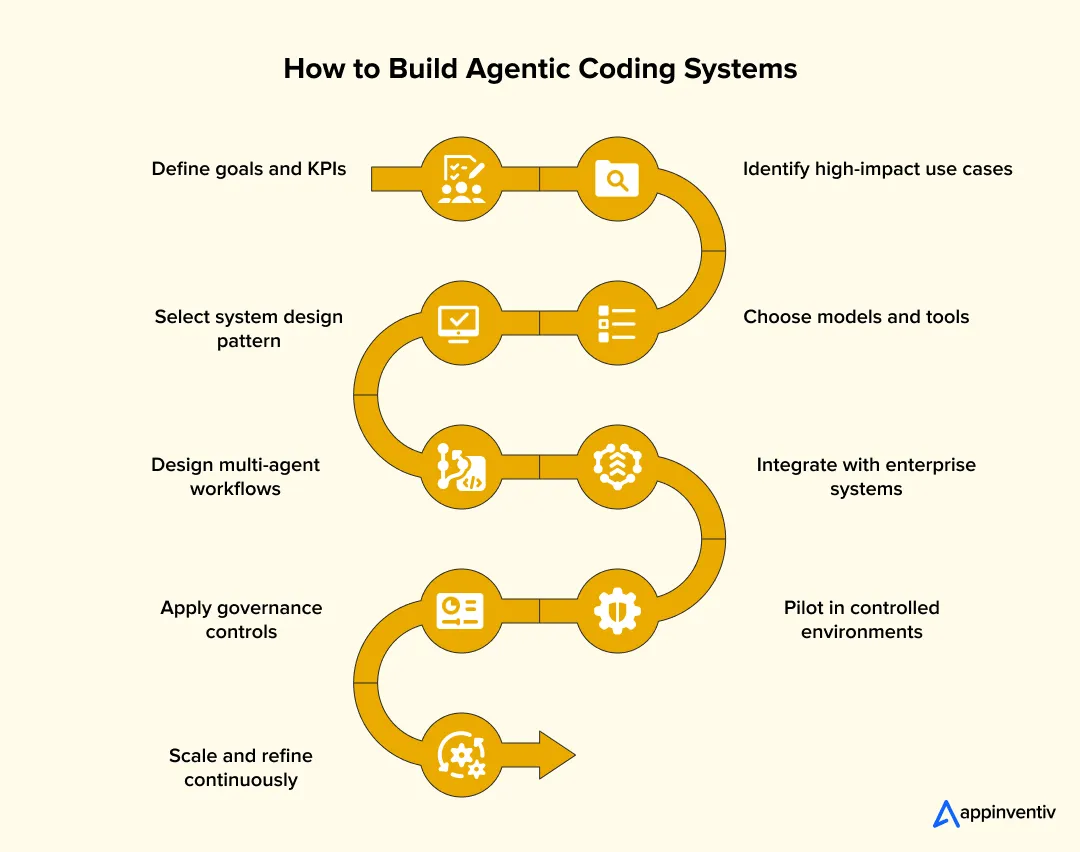

- Step-by-Step: How to Build Agentic Coding Systems

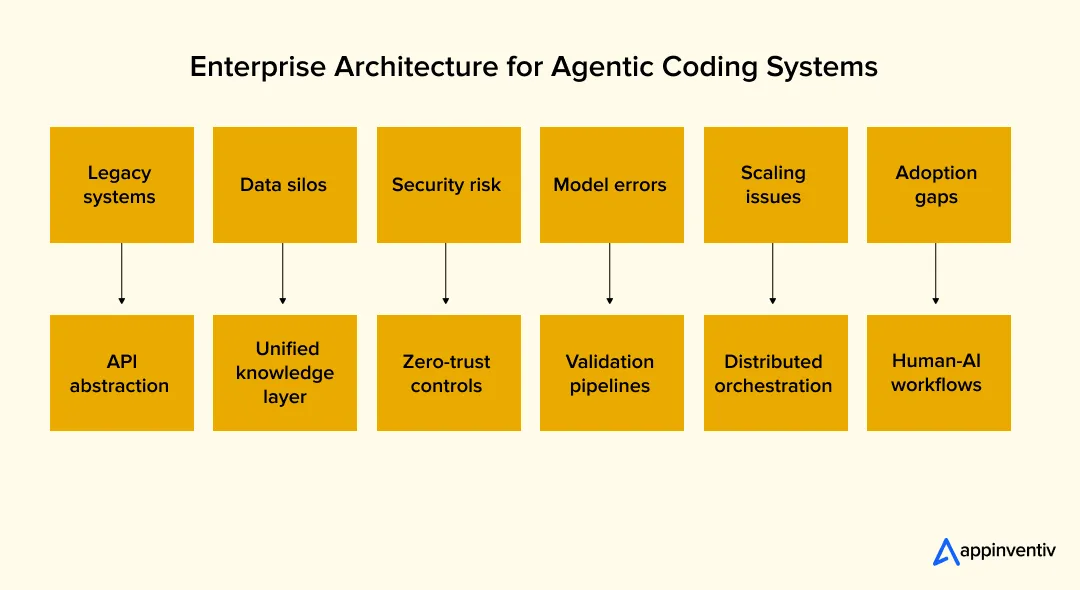

- Enterprise Architecture for Agentic Coding Systems

- Governance & Compliance Models for Agentic AI Systems

- Real-World Use Cases of Agentic Coding Systems in Enterprises

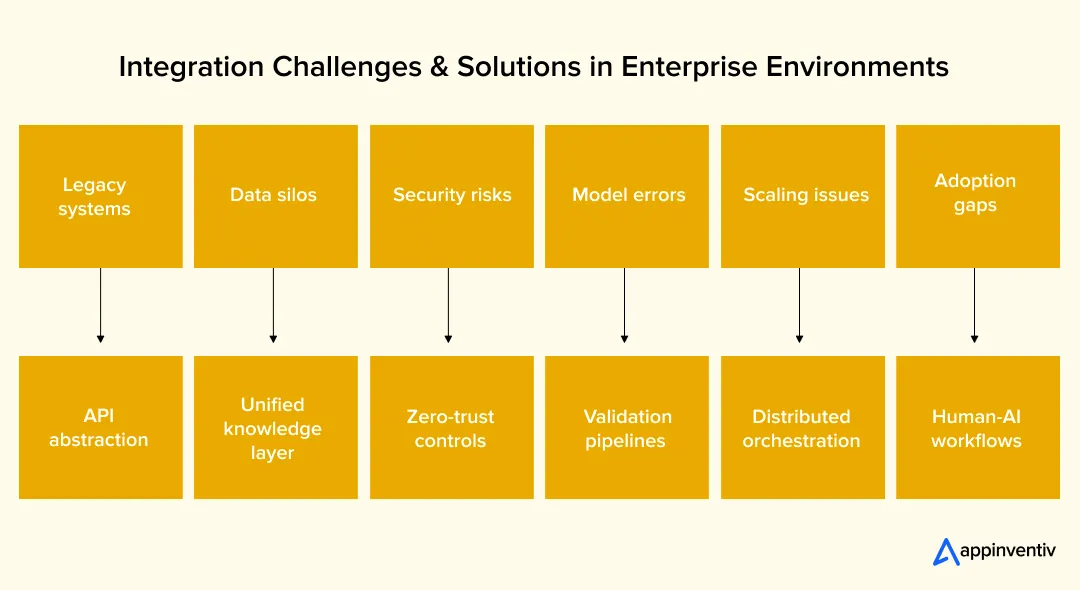

- Agentic AI Integration Challenges & Solutions in Enterprise Environments

- Cost of Building Agentic Coding Systems

- Future of AI-Powered Coding Systems (2026–2030 Outlook)

- Why Enterprises Choose Appinventiv for Agentic AI Development

- Frequently Asked Questions

Key takeaways:

- Most enterprises use AI for coding, but only a small share of workflows run autonomously end to end.

- Agentic systems reduce delivery time and manual effort by coordinating planning, coding, testing, and deployment in one flow.

- Strong architecture and orchestration layers decide whether multi-agent systems scale or fail in real production environments.

- Governance, security, and human oversight remain critical as autonomous systems interact directly with codebases and deployment pipelines.

- Enterprises that operationalize agentic systems early gain faster releases, lower costs, and better control over complex software ecosystems.

Enterprise software teams looking to build agentic coding systems are under steady pressure. A single platform can run hundreds of services and depend on dozens of internal tools. Yet developer time stays limited. Much of it still goes into fixing bugs, writing tests, and reviewing code written by others.

Tools like Copilot help with small tasks. They suggest code, complete functions, and speed up typing. Studies show that close to 60% of developer work now involves AI assistance, yet only 0–20% of tasks run fully autonomously. These tools still do not handle end-to-end work.

A developer still has to plan tasks, connect systems, test outcomes, and push changes. This gap is where the next shift is happening.

Autonomous coding agents move beyond simple assistance. These systems work toward a defined goal. They break that goal into steps, write code, run tests, and adjust based on results. Instead of one tool, they rely on a set of agents, each with a clear role in the workflow.

For large enterprises, this changes how software gets built. Teams can reduce cycle time, handle larger systems, and keep quality under control without adding more engineers. This is not a side experiment. It is becoming part of the core engineering stack.


How Agentic Coding Systems Work

Agentic coding systems follow a clear execution flow that moves a task from input to deployed code. In advanced setups, these systems can run coding tasks for 45+ minutes without interruption, handling multiple steps in a single cycle. Each step is handled by agents with defined roles.

- Task ingestion: Inputs come from Jira tickets or plain text. The system extracts intent and sets scope.

- Planning and decomposition: A planning agent splits the task into smaller steps and sets the order.

- Multi-agent orchestration: Tasks are assigned to agents as part of broader enterprise agent workflows. Data is passed in structured formats like JSON.

- Code execution and tool usage: Agents write code and trigger tools such as APIs or scripts.

- Feedback and correction: Tests and logs check output. Errors trigger retries.

- Deployment: Code moves through CI pipelines and is deployed after approval.
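
The flow above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not a production design: `plan`, `write_code`, and `run_tests` are hypothetical stand-ins for agent calls, and the "first attempt fails" rule exists only to exercise the retry path.

```python
from dataclasses import dataclass, field


@dataclass
class TaskResult:
    task: str
    steps: list[str]
    attempts: int
    status: str  # "awaiting_approval" or "failed"
    log: list[str] = field(default_factory=list)


def plan(task: str) -> list[str]:
    # Hypothetical planning agent: split the task into ordered steps.
    return [f"{task}: write code", f"{task}: add tests"]


def write_code(step: str, attempt: int) -> str:
    # Hypothetical developer agent: return a code artifact for the step.
    return f"# attempt {attempt} for {step}"


def run_tests(artifact: str) -> bool:
    # Hypothetical check: pretend the first attempt always fails.
    return "attempt 1" not in artifact


def run_task(task: str, max_retries: int = 3) -> TaskResult:
    steps = plan(task)
    log: list[str] = []
    attempts = 0
    for step in steps:
        for attempt in range(1, max_retries + 1):
            attempts += 1
            artifact = write_code(step, attempt)
            if run_tests(artifact):
                log.append(f"ok: {step} (attempt {attempt})")
                break
            log.append(f"retry: {step} (attempt {attempt})")
        else:
            # Retries exhausted for this step: stop and report failure.
            return TaskResult(task, steps, attempts, "failed", log)
    # Deployment is gated: the result waits for human approval.
    return TaskResult(task, steps, attempts, "awaiting_approval", log)
```

Calling `run_task("JIRA-1")` walks both steps, retries each once, and stops at the approval gate rather than deploying on its own.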

Step-by-Step: How to Build Agentic Coding Systems

Enterprises need a clear build path before they invest in agentic coding systems. The process involves system design, tooling choices, workflow engineering, and strict control layers. Each step shapes how well the system performs in production.

2.1 Define Business Objectives & Engineering KPIs

Start with measurable outcomes. This step links AI coding automation system development to real engineering impact and budget decisions.

Define targets such as release frequency, mean time to resolution, defect density, and engineering cost per feature. Map each KPI to a use case, for example reducing PR review time or automating regression testing.

Set baseline metrics from current DevOps sources such as Git logs, CI/CD reports, and issue trackers. This baseline helps measure gains after deployment.
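
As one way to capture a baseline, the sketch below computes mean time to merge from pull request timestamps. The `pull_requests` records are invented sample data, and the field names are assumptions about what a Git host export might contain.

```python
from datetime import datetime
from statistics import mean

# Hypothetical export of merged pull requests (opened and merged timestamps).
pull_requests = [
    {"opened": "2025-01-06T09:00", "merged": "2025-01-07T09:00"},
    {"opened": "2025-01-08T10:00", "merged": "2025-01-08T16:00"},
    {"opened": "2025-01-10T08:00", "merged": "2025-01-13T08:00"},
]


def hours_to_merge(pr: dict) -> float:
    opened = datetime.fromisoformat(pr["opened"])
    merged = datetime.fromisoformat(pr["merged"])
    return (merged - opened).total_seconds() / 3600


def baseline_hours(prs: list[dict]) -> float:
    # Mean time to merge, in hours, across all records.
    return mean(hours_to_merge(pr) for pr in prs)


def improvement(baseline: float, current: float) -> float:
    # Fractional reduction relative to the baseline (0.5 means 50% faster).
    return (baseline - current) / baseline
```

Recording the same number after the agents go live, then passing both values to `improvement`, gives a like-for-like comparison.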

2.2 Identify High-Impact Use Cases

Start with work that repeats across teams and follows a clear pattern. These areas are easier to automate and easier to measure.

- Feature development workflows: Agents can read tickets from tools like Jira, break them into steps, and draft code. They can open pull requests, add comments, and link changes to the original task.

- QA and testing pipelines: Agents can write unit tests, run regression checks through CI pipelines, and flag failures with logs. They can also run linters and basic static checks before code review.

- Legacy code updates: Agents can scan older code, trace dependencies, and suggest changes. In some cases, they can split large modules or help move services to newer frameworks.

Track results using simple metrics such as time to merge, number of bugs, and release frequency.

2.3 Choose the Right System Design Pattern

The system design pattern decides how agents work together and how the system behaves in real use. It affects speed, cost, and control.

- Single-agent setup: Works for small tasks like fixing bugs or writing short functions. It runs in a straight flow with fewer moving parts.

- Multi-agent setup: Better for larger tasks. One agent plans the work, another writes code, and another checks it. They pass data between each other in structured formats like JSON.

- Central control model: A main controller assigns tasks and tracks progress. This setup is easier to monitor using logs and job queues.

- Distributed model: Agents talk to each other directly through events or messages. This can run faster but needs clear rules to avoid overlap or errors.

- Context handling: Short context stays within the model input. A longer history can be stored outside, often in a vector store, and fetched using agentic RAG retrieval when needed.
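
To make the multi-agent and central control patterns concrete, here is a minimal sketch in which a controller passes one JSON message through three agents. The agent functions and message fields are hypothetical; real agents would call a model instead of returning canned values.

```python
import json


def planner(msg: dict) -> dict:
    # Hypothetical planner: turn a task into ordered steps.
    return {"role": "planner", "steps": [f"implement {msg['task']}", f"test {msg['task']}"]}


def coder(msg: dict) -> dict:
    # Hypothetical coder: produce one patch per step.
    return {"role": "coder", "patches": [f"patch for: {s}" for s in msg["steps"]]}


def reviewer(msg: dict) -> dict:
    # Hypothetical reviewer: approve if there is at least one patch.
    return {"role": "reviewer", "approved": len(msg["patches"]) > 0}


PIPELINE = [planner, coder, reviewer]


def run_controller(task: str):
    message = {"task": task}
    trace = []
    for agent in PIPELINE:
        # Serialize on every hop, as if agents ran in separate processes.
        wire = json.dumps(message)
        trace.append(wire)
        message = agent(json.loads(wire))
    return message, trace
```

Because every hand-off goes through the controller as JSON, the `trace` list doubles as a simple log of what each agent received.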

2.4 Select Models, Frameworks & Tooling Stack

This step shapes how the agents behave under real workloads. The goal is to pick components that support control, traceability, and steady output across tasks.

Focus on a few core layers:

- Model Layer

  - Choose between API-based or self-hosted models, a decision covered in depth in the API vs self-hosted LLM comparison

  - API models offer quick setup and strong baseline performance

  - Self-hosted models give more control over data, latency, and cost

- Agent Orchestration Layer

  - AI coding automation platforms handle task routing, execution flow, and state tracking

  - The orchestrator maintains memory across steps and retries failed tasks, which aligns with LLMOps practices for production reliability

- Tool-Use Layer

  - Function calling lets agents trigger actions such as running scripts or querying APIs

  - API orchestration connects agents with Git, CI pipelines, and internal services

- Memory Layer

  - Short-term context stays within prompt limits

  - Long-term context is stored in vector databases and retrieved when needed

- Communication Layer

  - Agents exchange structured messages, often in JSON format

  - Clear schemas reduce errors and keep workflows consistent

Each choice should match system load, data sensitivity, and control needs rather than tool popularity.
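
The tool-use and communication layers can be illustrated with a small dispatcher that validates a JSON tool call before running it. This is a sketch, not any vendor's function-calling API: the `{"tool": ..., "args": ...}` message shape, the `tool` decorator, and the `run_tests` tool are all assumptions made for the example.

```python
import json

TOOLS = {}


def tool(name, required):
    """Register a function as a callable tool with its required argument names."""
    def decorator(fn):
        TOOLS[name] = (fn, set(required))
        return fn
    return decorator


@tool("run_tests", required=["path"])
def run_tests(path):
    # Placeholder: a real tool would invoke the test runner here.
    return {"path": path, "passed": True}


def dispatch(raw_call: str) -> dict:
    """Validate a JSON tool call against the registry, then execute it."""
    try:
        call = json.loads(raw_call)
    except json.JSONDecodeError:
        return {"ok": False, "error": "invalid JSON"}
    if not isinstance(call, dict):
        return {"ok": False, "error": "call must be an object"}
    name, args = call.get("tool"), call.get("args", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    fn, required = TOOLS[name]
    if not isinstance(args, dict) or set(args) != required:
        return {"ok": False, "error": f"expected args: {sorted(required)}"}
    return {"ok": True, "result": fn(**args)}
```

Rejecting unknown tools and malformed arguments at this boundary is what keeps a bad model output from turning into an unintended action.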


2.5 Design Multi-Agent Workflows

Workflows define how tasks move from idea to deployment. In many enterprise environments, close to 50% of AI tool usage is already tied to software engineering tasks, which makes workflow design a critical layer. Clear role separation keeps the system stable and easier to debug.

A common setup includes:

- Planner Agent: Breaks a task into smaller steps and sets execution order

- Developer Agent: Writes code based on the plan and available context

- Reviewer Agent: Checks code quality, runs static checks, and suggests fixes

- Tester Agent: Generates tests, runs them, and reports failures

Execution patterns vary:

- Sequential Flow: Each agent completes its step before the next begins. This is easier to track and control.

- Parallel Flow: Multiple agents run at the same time, such as testing and documentation. This reduces total runtime but needs strong agent interoperability to avoid conflicts.

Well-defined workflows reduce rework and keep outputs consistent across tasks.

2.6 Integrate with Enterprise Systems

This step connects agents with the tools that run daily engineering work. Without this layer, agents cannot act on real tasks.

Key integrations include:

- Version Control Systems (Git)

  - Agents can create branches, commit code, and open pull requests

  - Access is controlled through service accounts and token-based auth

- CI/CD Pipelines

  - Trigger builds, run tests, and deploy changes

  - Agents read pipeline logs to detect failures and retry tasks

- Internal APIs and Services

  - Fetch business logic, configs, and dependencies

  - Execute actions such as data updates or service calls

  - Teams new to this layer can benefit from reviewing API development practices before wiring agents to internal services

- DevOps and Observability Tools

  - Capture logs, traces, and system metrics

  - Help track agent actions across workflows

Structuring AI API integration through API gateways and middleware layers helps manage access, rate limits, and request validation.
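
The gateway idea can be sketched as a single check that every agent request passes through. The `Gateway` class below is a toy written for this example (a route allowlist plus a sliding-window rate limit per agent); a real deployment would rely on an actual API gateway product rather than this code.

```python
import time


class Gateway:
    """Toy gateway: route allowlist plus a sliding-window rate limit per agent."""

    def __init__(self, allowed_routes, limit, window_seconds, clock=time.monotonic):
        self.allowed_routes = set(allowed_routes)
        self.limit = limit
        self.window = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.calls = {}     # agent_id -> timestamps of recent accepted calls

    def handle(self, agent_id, route):
        if route not in self.allowed_routes:
            return 403  # request validation failed
        now = self.clock()
        recent = [t for t in self.calls.get(agent_id, []) if now - t < self.window]
        if len(recent) >= self.limit:
            self.calls[agent_id] = recent
            return 429  # rate limit exceeded
        recent.append(now)
        self.calls[agent_id] = recent
        return 200
```

The return values reuse HTTP status codes (403, 429, 200) only as familiar labels for "rejected", "throttled", and "accepted".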

2.7 Implement Governance & Security Guardrails

Agents can write and execute code, so control layers must be in place from the start.

Core guardrails include:

- Access Control

  - Role-based permissions define what each agent can read or change

  - Secrets managed through vaults, not hardcoded in prompts

- Human-In-The-Loop Checkpoints

  - Require approval before merging code or deploying changes

  - Escalate high-risk actions to senior engineers

- Audit and Trace Logs

  - Record each action taken by agents

  - Track code changes, API calls, and decision steps

- Input and Output Validation

  - Sanitize inputs before execution

  - Run checks on generated code using an LLM-as-a-Judge approach to catch unsafe patterns

These controls reduce risk and keep agent activity aligned with enterprise policies.
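
Three of these guardrails (role-based permissions, an approval checkpoint, and an audit log) fit in one small function. The role names, the permission table, and the in-memory `AUDIT_LOG` are illustrative assumptions; a real system would load policy from configuration and write to durable storage.

```python
from datetime import datetime, timezone

# Hypothetical permission table: what each agent role may do.
ROLE_PERMISSIONS = {
    "reviewer_agent": {"read"},
    "developer_agent": {"read", "write"},
    "release_agent": {"read", "write", "deploy"},
}
NEEDS_APPROVAL = {"deploy"}  # high-risk actions require a named human approver
AUDIT_LOG = []


def authorize(agent_role, action, approved_by=None):
    """Return 'allowed', 'denied', or 'pending_approval', and log the decision."""
    allowed = action in ROLE_PERMISSIONS.get(agent_role, set())
    if allowed and action in NEEDS_APPROVAL and approved_by is None:
        decision = "pending_approval"
    else:
        decision = "allowed" if allowed else "denied"
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "role": agent_role,
        "action": action,
        "approved_by": approved_by,
        "decision": decision,
    })
    return decision
```

Every call is logged whether or not it is allowed, so denied and pending attempts stay visible during an audit.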

2.8 Pilot Deployment with Controlled Scope

Following an AI MVP approach, start small and keep the environment contained. This step tests real behavior without exposing core systems.

- Sandbox Environments

  - Run agents in isolated environments with limited data access

  - Use staging repos and non-critical services for early testing

  - Monitor outputs, logs, and failure cases closely

- Limited Production Rollout

  - Deploy agents on low-risk workflows such as internal tools or test pipelines

  - Restrict write access to avoid unintended changes

  - Track metrics like task success rate, error frequency, and execution time

A controlled rollout helps teams understand system behavior before wider adoption.
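
The pilot metrics named above can be computed from plain run records. The `runs` list is invented sample data, and its field names are assumptions about what a pilot might log.

```python
from collections import Counter
from statistics import mean

# Hypothetical run records captured during a pilot.
runs = [
    {"task": "fix-lint", "ok": True, "seconds": 40.0, "error": None},
    {"task": "add-test", "ok": False, "seconds": 95.0, "error": "timeout"},
    {"task": "bump-dep", "ok": True, "seconds": 30.0, "error": None},
    {"task": "add-test", "ok": False, "seconds": 80.0, "error": "timeout"},
]


def summarize(records):
    # Count failures by error type to see which problems repeat.
    errors = Counter(r["error"] for r in records if not r["ok"])
    return {
        "success_rate": sum(r["ok"] for r in records) / len(records),
        "mean_seconds": mean(r["seconds"] for r in records),
        "errors": dict(errors),
    }
```

Grouping failures by error type shows whether the pilot is hitting one repeated problem or many unrelated ones.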

2.9 Scale, Optimize & Continuously Learn

After validation, teams that develop autonomous coding agents shift their focus to stability, cost control, and better results over time.

- Feedback Loops

  - Capture outputs, errors, and user corrections

  - Feed this data back into prompts and workflows

- Model Retraining and Tuning

  - Fine-tune models on internal codebases where needed

  - Adjust prompts and context retrieval for better accuracy

- Cost and Performance Control

  - Cache repeated queries and reuse results

  - Batch requests to reduce API calls

  - Track token usage, latency, and system load

Regular updates keep the system reliable as workloads grow.
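
Caching repeated queries and tracking usage can be as simple as the sketch below. `call_model` is a hypothetical stand-in for a paid model call, and counting whitespace-separated words is only a rough proxy for real token counts.

```python
from functools import lru_cache

usage = {"model_calls": 0, "tokens": 0}


def call_model(prompt: str) -> str:
    # Hypothetical stand-in for a paid model call; words approximate tokens.
    usage["model_calls"] += 1
    usage["tokens"] += len(prompt.split())
    return f"answer to: {prompt}"


@lru_cache(maxsize=1024)
def cached_call(prompt: str) -> str:
    # Identical prompts are served from memory instead of calling the model again.
    return call_model(prompt)
```

An exact-match cache like this only helps when prompts repeat verbatim, which is more likely for templated checks than for free-form requests.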

Enterprise Architecture for Agentic Coding Systems

A layered architecture is a production-grade way to build agentic coding systems. Each layer handles a distinct concern, such as interaction, execution, state, or control. Clear separation keeps the system stable under load and easier to audit.

4.1 Reference Architecture Overview

This model breaks the system into layers that work together during execution.

- Interface Layer

  - Accepts inputs from Jira, IDE plugins, or API endpoints

  - Handles authentication, request parsing, and input validation

- Orchestration Layer

  - Manages task queues, execution order, and retries

  - Maintains workflow state using job schedulers or workflow engines

- Agent Layer

  - Contains specialized agents such as planner, developer, and reviewer

  - Each agent operates with defined prompts, tools, and constraints

- Tooling Layer

  - Connects agents to Git, CI/CD systems, and internal APIs

  - Executes actions like commits, builds, and deployments

- Data and Memory Layer

  - Stores embeddings, logs, and task history

  - Supports retrieval through vector search and indexed storage

- Governance Layer

  - Enforces access policies, audit logs, and approval workflows

  - Tracks every action for traceability

4.2 Core Components of Agentic Coding Architecture for Enterprises

These components define how the system processes tasks and maintains context.

- LLM Backbone

  - API-based models for quick setup or self-hosted models for control

  - Fine-tuned on internal repositories for domain-specific accuracy

- Agent Orchestration Engine

  - Handles task routing, execution flow, and state transitions

  - Supports retry logic and failure recovery

- Memory Systems

  - Short-term context handled within prompt limits

  - Long-term memory is stored in vector databases using embeddings

  - Retrieval pipelines fetch relevant context during execution

- Tool Integrations

  - IDE extensions, Git systems, CI/CD pipelines, and REST APIs

  - Allow agents to act beyond text generation

- Observability Systems

  - Centralized logs for each agent action

  - Traces to follow task execution across steps

  - Evaluation pipelines to measure output quality and error rates


4.3 Multi-Agent Coding Workflow Architecture

The multi-agent coding workflow architecture defines how agents collaborate across a task lifecycle.

- Hierarchical Agent Systems

  - A top-level planner assigns subtasks to specialized agents

  - Downstream agents execute tasks and return results

- Collaborative Agent Swarms

  - Multiple agents work on related tasks at the same time

  - Share context through a common memory store

  - This pattern is already reshaping how agentic AI in SaaS platforms handles concurrent workloads

- Execution Models

  - Sequential execution for controlled workflows

  - Parallel execution for faster throughput with coordination logic

Example: Enterprise feature delivery flow

- Planner agent reads a feature request and creates subtasks

- Developer agent writes code and pushes changes to a branch

- Reviewer agent runs static checks and suggests fixes

- Tester agent executes test suites and reports results

- Orchestrator validates outputs and triggers CI/CD pipelines

4.4 Enterprise AI Agent Orchestration Frameworks

Enterprise AI agent orchestration frameworks control how agents operate at scale.

Key requirements:

- Task Routing: Assign tasks based on agent capability and workload

- State Management: Track task progress, intermediate outputs, and dependencies

- Error Handling: Detect failures, retry tasks, and escalate issues

Trade-offs:

- Open-Source Frameworks

  - Flexible and customizable

  - Require more engineering effort for scaling and security

- Enterprise Platforms

  - Built-in monitoring, access control, and support

  - Higher cost but faster deployment and better stability

The choice depends on system scale, compliance needs, and internal engineering capacity.
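
The three requirements above (routing, state, error handling) can be shown in a few lines, independent of any particular framework. The `Agent` fields and the "escalated" status are assumptions chosen for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    skills: set
    active_tasks: int = 0


def route(task_skill, agents):
    """Task routing: pick the least-loaded agent that has the required skill."""
    capable = [a for a in agents if task_skill in a.skills]
    if not capable:
        raise LookupError(f"no agent can handle {task_skill!r}")
    return min(capable, key=lambda a: a.active_tasks)


def run_with_retries(task, handler, max_attempts=3):
    """Error handling: retry a failing task, then escalate instead of dropping it."""
    # State management: one record tracks attempts, errors, and final status.
    state = {"task": task, "attempts": 0, "status": "pending", "errors": []}
    while state["attempts"] < max_attempts:
        state["attempts"] += 1
        try:
            state["output"] = handler(task)
            state["status"] = "done"
            return state
        except Exception as exc:
            state["errors"].append(str(exc))
    state["status"] = "escalated"
    return state
```

Keeping the error history in the state record means whoever receives an escalated task can see what was already tried.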

Without strong integration and control layers, agent systems fail under load, complexity, and real data.

Governance & Compliance Models for Agentic AI Systems

Agentic systems do more than suggest code. They create commits, trigger pipelines, and interact with internal services. Even now, human oversight remains high, with 67% to 87% of tasks still requiring supervision, which shows why control layers are critical.

One incorrect action can move across environments within minutes. This raises risks around code integrity, data exposure, and unauthorized execution. Control cannot sit outside the system. It has to run inside every step where an agent reads, writes, or executes.

5.2 Enterprise Governance Framework (Layered Model)

- Policy and Access Control

  - RBAC defines agent roles such as read-only, write, or deploy

  - ABAC adds context like environment, repo, or task type

  - Secrets stored in managed stores such as HashiCorp Vault or AWS Secrets Manager, with encryption keys held in a service such as AWS KMS

- Auditability and Traceability

  - Code lineage tracks changes from prompt to commit hash

  - Decision logs capture inputs, outputs, and tool calls

  - Logs stored in immutable systems for audit trails

- Human-In-The-Loop Oversight

  - CI pipelines enforce approval gates before merge or deploy

  - High-risk actions trigger escalation rules

  - Manual review tied to pull request workflows

- Compliance Alignment

  - GDPR for data handling and retention

  - SOC 2 for access control and logging practices

  - ISO 27001 for security controls and risk management

  - Organizations scaling governance across teams often formalize this through an AI Center of Excellence to standardize controls and accountability

- Model Monitoring and Drift Control

  - Evaluation pipelines track accuracy and failure rates

  - Drift detection compares the current output with the baseline behavior

  - Retraining or prompt updates triggered by thresholds
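
Threshold-based drift control, the last item above, can be reduced to a comparison of failure rates. The 0.10 threshold and the pass/fail representation are assumptions for illustration; real evaluation pipelines usually track several quality signals.

```python
def failure_rate(outcomes):
    """Share of failed evaluations; outcomes is a list of booleans (True = pass)."""
    return sum(not ok for ok in outcomes) / len(outcomes)


def check_drift(baseline, current, threshold=0.10):
    """Flag drift when the failure rate rises more than `threshold` above baseline."""
    delta = failure_rate(current) - failure_rate(baseline)
    return {"delta": round(delta, 4), "drifted": delta > threshold}
```

A `drifted` result would then trigger whatever response the team has agreed on, such as a prompt review or a retraining job.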

Governance-by-Design Architecture

- Embedded Governance

  - Policy checks run at each agent action

  - Access validation and logging happen in real time

- Central and Federated Model

  - Central policies define global rules

  - Teams manage local controls within set limits

This structure, often shaped with AI governance consulting services, keeps control intact as agent activity scales across systems.
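
One way to read "teams manage local controls within set limits" is that a team policy can tighten the central policy but never loosen it. The sketch below encodes that rule; the three policy keys are hypothetical examples, not a standard schema.

```python
# Hypothetical central policy: the outer limits every team must stay within.
CENTRAL_POLICY = {
    "max_files_per_change": 50,
    "allowed_environments": {"dev", "staging"},
    "require_human_approval": True,
}


def effective_policy(team_policy: dict) -> dict:
    """Merge team settings into the central policy without loosening it."""
    central = CENTRAL_POLICY
    team_max = team_policy.get("max_files_per_change", central["max_files_per_change"])
    team_envs = set(team_policy.get("allowed_environments", central["allowed_environments"]))
    team_approval = team_policy.get("require_human_approval", False)
    return {
        # Teams may lower numeric limits, never raise them.
        "max_files_per_change": min(central["max_files_per_change"], team_max),
        # Teams may narrow the environment list, never widen it.
        "allowed_environments": central["allowed_environments"] & team_envs,
        # A central approval requirement cannot be switched off locally.
        "require_human_approval": central["require_human_approval"] or team_approval,
    }
```

With this rule, a team that asks for a higher file limit or an extra environment simply gets the central value back.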

Real-World Use Cases of Agentic Coding Systems in Enterprises

Enterprises are moving past single assistants and testing multi-agent coding workflows where planning, coding, testing, and validation happen as a chain of coordinated steps. The examples below reflect systems that show early agentic behavior with multiple roles and feedback loops.

Autonomous Coding Agents Development in Feature Pipelines

Cognition AI introduced Devin, an AI software engineer that plans tasks, writes code, debugs, and deploys projects end-to-end. It interacts with tools like terminals, editors, and browsers, which reflects a full agent loop rather than a single prompt-response flow.

Multi-Agent Coding Workflows In Production Environments

Microsoft and OpenAI have demonstrated multi-agent orchestration in research and enterprise tooling, where planner and executor agents collaborate across coding tasks using tool-calling and memory.

QA Automation With Agent Loops

Google has explored iterative agent-style systems where models generate code, run tests, analyze failures, and retry until tests pass. This creates a closed feedback loop similar to agentic execution.

DevOps and Workflow Automation With Agents

Amazon integrates automation across build and deployment pipelines. Recent work combines LLM-based agents with CI/CD systems to monitor runs, trigger retries, and manage rollbacks without manual steps.

Internal Agent-Based Developer Platforms

Meta has shared internal research on AI systems that assist across code navigation, editing, and validation, with a growing focus on chaining tasks across multiple steps instead of single completions.

These examples show a clear shift. Systems are moving from single-step assistance to multi-step, tool-using, self-correcting agent workflows, part of a broader shift in generative AI use cases across enterprise product development.

Agentic AI Integration Challenges & Solutions in Enterprise Environments

Enterprise systems bring constraints that do not exist in isolated demos. Agent development must account for legacy stacks, fragmented data, and strict controls. Each challenge needs a clear technical response.

7.1 Legacy System Integration

Older systems often lack clean APIs and follow tightly coupled designs. Direct access from agents can break workflows or expose unstable dependencies.

- Solution:

  - Use API abstraction layers to expose controlled endpoints

  - Add middleware for request validation, rate limiting, and transformation

  - Wrap legacy services with microservice adapters for safer interaction

7.2 Data Silos and Context Fragmentation

Data sits across repos, docs, and internal tools. Agents fail when context is incomplete or outdated.

- Solution:

  - Build a unified knowledge layer using embeddings

  - Store data in vector databases for semantic retrieval

  - Sync documentation, code, and logs into a shared retrieval system
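
The retrieval idea can be shown with a toy store that indexes documents, code, and logs together. Word counts stand in for embeddings here purely so the example runs without dependencies; a real system would call an embedding model and a vector database. `KnowledgeStore` is a name invented for this sketch.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy embedding: word counts. A real system would call an embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class KnowledgeStore:
    """Single index over several sources, searched by vector similarity."""

    def __init__(self):
        self.items = []  # (source, text, vector)

    def add(self, source: str, text: str) -> None:
        self.items.append((source, text, embed(text)))

    def search(self, query: str, k: int = 1):
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[2]), reverse=True)
        return [(source, text) for source, text, _ in ranked[:k]]
```

Because every source lands in the same index, an agent can ask one question and get the best match whether it lives in docs, code, or logs.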

7.3 Security and Compliance Risks

Agents can access code, secrets, and internal APIs. Unchecked actions can lead to data leaks or unauthorized changes.

- Solution:

  - Apply zero-trust architecture with strict identity checks

  - Encrypt data in transit with TLS and at rest with keys held in a key management system

  - Enforce governance policies through access controls and audit logs, consistent with DevOps automation strategies for regulated environments

7.4 Model Reliability and Hallucination Risks

Generated code can be incorrect or unsafe. Errors may pass through if not validated.

- Solution:

  - Use validation agents to review outputs before execution

  - Run static analysis, linting, and test pipelines

  - Add retry logic based on test failures and logs

  - Teams still defining their stack can use this step to align DevOps tooling choices with agent validation requirements
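
A basic static check on generated Python can be written with the standard `ast` module, as sketched below. The `BANNED_CALLS` list is a small illustrative sample, not a complete security policy, and the check only catches direct calls written in these exact forms.

```python
import ast

# Illustrative sample only; a real policy would be broader.
BANNED_CALLS = {"eval", "exec", "os.system"}


def call_name(node: ast.Call) -> str:
    """Return a dotted name for simple calls such as eval(...) or os.system(...)."""
    func = node.func
    if isinstance(func, ast.Name):
        return func.id
    if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
        return f"{func.value.id}.{func.attr}"
    return ""


def validate_generated_code(source: str) -> list[str]:
    """Return a list of problems; an empty list means these checks passed."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error on line {exc.lineno}"]
    return [
        f"banned call {call_name(node)} on line {node.lineno}"
        for node in ast.walk(tree)
        if isinstance(node, ast.Call) and call_name(node) in BANNED_CALLS
    ]
```

A check like this is a first filter ahead of linting and tests, since it parses the code without ever executing it.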

7.5 Scaling Multi-Agent Systems

As tasks grow, agent coordination increases system load and cost. Poor scaling leads to delays and higher compute usage.

- Solution:

  - Use distributed orchestration with task queues

  - Cache repeated results and batch requests

  - Track token usage, latency, and execution time for cost control
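
The task-queue pattern can be sketched in a single process with worker threads pulling from one shared queue. A distributed setup would swap the in-memory queue for a message broker; `run_pool` and its sentinel-based shutdown are choices made for this example, and tasks are assumed to be hashable and never `None`.

```python
import queue
import threading


def run_pool(tasks, handler, workers=3):
    """Process tasks with a fixed pool of worker threads fed by one queue."""
    pending = queue.Queue()
    results = {}
    lock = threading.Lock()

    def worker():
        while True:
            task = pending.get()
            if task is None:  # sentinel: no more work for this worker
                return
            try:
                output = handler(task)
            except Exception as exc:
                # Record the failure instead of killing the worker.
                output = f"error: {exc}"
            with lock:
                results[task] = output

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for thread in threads:
        thread.start()
    for task in tasks:
        pending.put(task)
    for _ in threads:
        pending.put(None)  # one sentinel per worker
    for thread in threads:
        thread.join()
    return results
```

The worker count caps concurrency, which is also a simple lever for keeping model and compute costs predictable.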

7.6 Organizational Adoption Barriers

Engineering teams may resist systems they do not trust or understand. Lack of clarity slows adoption.

- Solution:

  - Define clear human-AI workflows with role boundaries

  - Train teams on agent behavior and limitations

  - Start with assistive roles, then expand to autonomous execution

These challenges shape how agentic systems perform in real environments. Addressing them early reduces risk and improves long-term stability.

Legacy systems, fragmented data, and security gaps stop scale. Fix the foundation before expanding.

Cost of Building Agentic Coding Systems

The AI agent development cost varies with scope. A small pilot with one workflow is far cheaper than a system that connects agents to repos, pipelines, and internal services. This aligns with market direction, where over 80% of organizations plan to increase AI investment in the near term.

Most enterprise builds fall between $50K and $500K+, based on how deep the integration goes and how many controls are in place.

| Complexity Level | System Scope & Components | Estimated Cost |
|---|---|---|
| MVP / Pilot | Single or few agents, API-based model access, basic workflow, limited repo or CI connection | $50K – $120K |
| Mid-Scale System | Multiple agents with defined roles, an orchestration layer, a vector store for context, Git and CI pipeline hooks | $120K – $300K |
| Enterprise Platform | Distributed agents, hybrid or self-hosted models, full DevOps tie-in, RBAC, audit trails, approval gates | $300K – $500K+ |

The cost of building agentic coding systems rises with deeper system access, stricter controls, and higher usage. It is driven by model usage, infrastructure load, integration effort, and control layers. Hidden costs show up in monitoring, model tuning, and compliance work.

Future of AI-Powered Coding Systems (2026–2030 Outlook)

Over the past decade, enterprise software has shifted from manual workflows to automation-driven pipelines. In recent years, that shift has accelerated with the rise of AI.

Based on hands-on work across industries, including 300+ AI-powered solutions delivered and 150+ custom AI models deployed, a clear pattern is emerging.

AI software development automation is moving toward higher autonomy, tighter integration, and continuous adaptation across the software lifecycle. Enterprise adoption is rising fast: the share of applications using AI agents, less than 5% in 2024, is expected to reach around 40% by 2026.

Fully Autonomous Development Pipelines

Systems will handle tasks from requirement intake to deployment with minimal human input. Agents will plan work, generate code, run tests, and push updates through CI pipelines. Human input will focus on approvals and edge cases.

AI-Native Engineering Teams

Teams will operate with agents as active contributors. Developers will guide workflows, review outputs, and manage system behavior rather than write every line of code. For teams starting this transition, understanding modern AI app development foundations is a practical starting point.

Self-Healing Systems

Systems will detect failures in code, infrastructure, or pipelines and fix them without manual steps. This includes retry logic, automated debugging, and patch generation.

Convergence With DevOps and MLOps

Coding systems will integrate with deployment and model pipelines. Code generation, testing, deployment, and model updates will run as part of a single workflow with shared monitoring and control layers.

Why Enterprises Choose Appinventiv for Agentic AI Development

Building agentic coding systems is not just about models or tools. It requires AI development services with tight control over architecture, integration, and governance.

Enterprises looking to scale this capability often start by identifying the right talent, which is why knowing how to hire AI developers with system-level expertise matters. Many enterprise teams struggle here. Systems either move fast without control or become too rigid to scale.

Appinventiv focuses on AI-powered coding systems development that works in production. With 300+ AI-powered solutions delivered and 150+ custom AI models deployed, the approach is grounded in real deployment experience across industries.

What Sets Appinventiv Apart

- Enterprise AI delivery experience: Work across regulated environments and large-scale systems with complex integrations.

- Governance-first architecture: Built-in RBAC, audit logs, approval workflows, and compliance alignment from the start.

- Scalable agentic systems: Designed for high concurrency and large workflows with up to 2x scalability increase.

- Measured impact

  - 50% reduction in manual processes

  - 90%+ agent task accuracy

  - Faster execution across engineering and business workflows

- End-to-end ownership: Strategy → Architecture → Development → Deployment → Optimization

AI-Powered Business Consultant (MyExec)

A client needed a system that could act as a real-time business advisor without relying on large consulting teams.

- What was built

  - Multi-agent system using a retrieval-based architecture

  - Agents process documents, extract signals, and generate recommendations

  - Integrated with internal systems through secure APIs

- Impact

  - Faster decision cycles for business users

  - Reduced manual analysis effort

  - Continuous recommendations based on updated data

Enterprises that successfully build agentic coding systems focus on control, integration, and measurable outcomes. Let’s connect & build secure, scalable, production-ready agentic coding systems.

Frequently Asked Questions

Q. What are agentic coding systems?

A. Agentic coding systems are AI-driven setups where multiple agents handle different parts of software development. Instead of just suggesting code, they plan tasks, write code, test it, and push changes. Each agent has a role, such as planning or reviewing. These systems connect with tools like Git and CI pipelines, so they can act on real workflows rather than stay limited to prompts.

Q. How do agentic coding systems work?

A. They follow a step-by-step flow. A task comes in from a ticket or prompt. A planning agent breaks it into smaller steps. Other agents write code, run tests, and check results. If something fails, the system retries or fixes it. Once everything passes, the code moves through CI pipelines and gets deployed after approval.

Q. How to build multi-agent coding systems?

A. Start by defining clear roles for each agent, such as planner, developer, and tester. Set up an orchestration layer to manage task flow and state. Connect agents to tools like Git, APIs, and CI pipelines. Add a memory layer to store context. Then apply access controls and logging so every action can be tracked and reviewed.

Q. What does autonomous coding agents development involve?

A. Begin with a focused use case, such as test automation or feature development. Choose a model setup, either API-based or self-hosted. Build agents with clear tasks and connect them to enterprise systems. Add validation steps like testing and code checks. Start with a pilot, then expand once the system shows stable performance and clear gains.

Q. What are the key components of agentic coding architecture for enterprises?

A. The system includes a model layer for code generation, an orchestration layer for task flow, and an agent layer with defined roles. It also needs tool connections for Git and CI pipelines, a memory layer for storing context, and a governance layer for access control and audit logs. Each part works together to support end-to-end execution.

Q. What challenges arise when integrating agentic coding systems in enterprises?

A. Common issues include working with legacy systems that lack clean APIs, scattered data across tools, and strict security rules. Systems can also face errors in generated code or delays when scaling multiple agents. Teams may resist adoption if workflows are unclear. These challenges need structured integration, validation steps, and clear role definitions.

Q. Why are governance models important for agentic AI systems?

A. These systems can write and deploy code, so every action must be controlled. Governance models define who can access what, track changes, and enforce approval steps. They also help meet standards like GDPR or SOC 2. Without this layer, errors or unauthorized actions can move quickly across systems and create serious risks.
