- AI Video Agent Development Architecture: How the System Works Under the Hood

- Use Cases of AI Video Agent Development Across Industries

- Key Features of AI Video Agents Development

- Required Skills to Build AI Video Agents

- Tools for AI Video Agent Development

- How to Build AI Video Agents: A Step-by-Step Guide

- Cost to Build AI Video Agent Solutions

- Compliances to Keep in Mind for AI Video Agent Development

- Challenges and Solutions in AI Video Agent Development

- How Can Appinventiv Help You Build AI Video Agents?

- FAQs

Key takeaways:

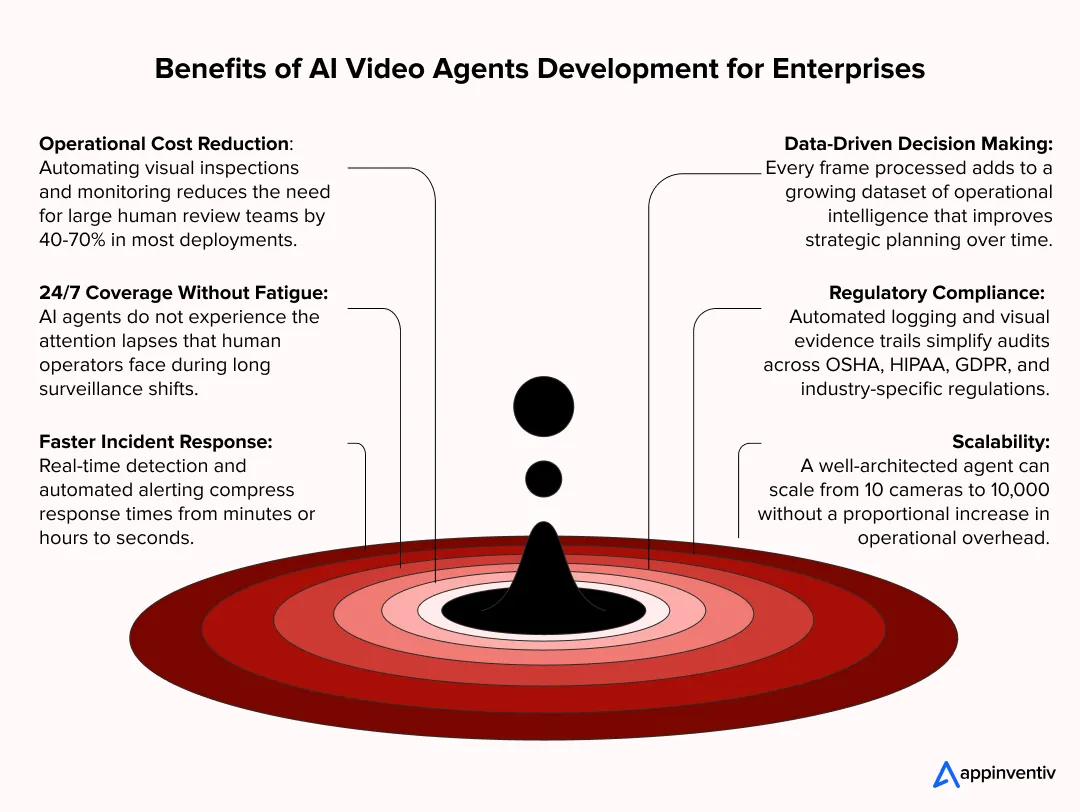

- AI video agents fuse computer vision, LLMs, and decision logic to autonomously interpret and act on live video — replacing passive cameras with real-time intelligence.

- The five-layer architecture — ingestion, perception, understanding, reasoning, action — must be engineered for sub-100ms latency at enterprise scale.

- Biggest ROI hits: manufacturing (90% fewer escaped defects), logistics (40% fewer incidents), and security (60% fewer false alarms).

- You need cross-functional depth across computer vision, multimodal AI, video engineering, edge computing, and MLOps — not one ML engineer working solo.

- Costs run $50K–$940K+, depending on scope, with most enterprises seeing payback within 6–12 months.

AI video agent development is the practice of engineering autonomous software agents that ingest, interpret, and act on video data without human intervention. These agents combine computer vision, large language models, and decision-making logic to turn raw video feeds into actionable intelligence in real time.

For video-intensive industries like manufacturing, healthcare, logistics, and security, the shift is fundamental. You’re no longer deploying cameras that record. You’re deploying agents that watch, reason, and respond — flagging defects on an assembly line, escalating a safety violation, or triggering a workflow the instant something deviates from the norm.

We’ve built these systems across dozens of enterprise environments. What follows is everything we’ve learned distilled into one guide: the architecture, use cases, mandatory features, required skills, development tools, step-by-step build process, cost breakdown, compliance requirements, and common challenges.

Let’s dive deeper!


AI Video Agent Development Architecture: How the System Works Under the Hood

Understanding the architecture of AI video agents is critical before committing resources. Unlike traditional video analytics tools that rely on hardcoded rules, modern AI video agents are built on a layered, modular architecture that fuses perception, reasoning, and action into one intelligent system.

Core Layers of AI Video Pipeline Architecture

The AI video pipeline architecture typically follows a five-layer model. Each layer handles a specific responsibility, ensuring scalability, maintainability, and real-time performance.

| Architecture Layer | Responsibility | Key Technologies |
|---|---|---|
| Ingestion Layer | Captures and preprocesses video from cameras, streams, and stored files | RTSP, GStreamer, FFmpeg, WebRTC |
| Perception Layer | Detects objects, faces, actions, and scenes using computer vision models | YOLO, SAM, DINO, OpenCV, MediaPipe |
| Understanding Layer | Contextualizes visual data using multimodal AI and vision-language models | GPT-4V, Gemini Pro Vision, LLaVA, CogVLM |
| Reasoning Layer | Applies business logic, triggers workflows, and makes autonomous decisions | LangChain, AutoGen, CrewAI, custom orchestrators |
| Action Layer | Executes responses: alerts, reports, API calls, robotic commands | REST APIs, Kafka, MQTT, webhook integrations |
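To make the layering concrete, here is a minimal Python sketch of one frame passing through the five layers in order. It is an illustration under stated assumptions, not a reference implementation: `FrameContext`, the stub layer functions, and the forklift-plus-person rule are all hypothetical, and the perception and understanding stages are hard-coded stand-ins for real models.

```python
from dataclasses import dataclass, field


@dataclass
class FrameContext:
    """Data that accumulates as one frame moves through the five layers."""
    frame_id: int
    pixels: bytes                      # stand-in for a decoded frame
    detections: list = field(default_factory=list)
    description: str = ""
    decision: str = ""
    actions: list = field(default_factory=list)


def ingest(ctx):
    # Ingestion: a real system would decode an RTSP or WebRTC stream here.
    return ctx


def perceive(ctx):
    # Perception: stub detector (assumption); a real one would run a vision model.
    ctx.detections = ["forklift", "person"] if ctx.pixels else []
    return ctx


def understand(ctx):
    # Understanding: a vision-language model would write this description.
    if ctx.detections:
        ctx.description = "Scene contains: " + ", ".join(ctx.detections)
    else:
        ctx.description = "Empty scene"
    return ctx


def reason(ctx):
    # Reasoning: hypothetical rule, escalate when a person and a forklift share a frame.
    both_present = {"forklift", "person"} <= set(ctx.detections)
    ctx.decision = "escalate" if both_present else "ignore"
    return ctx


def act(ctx):
    # Action: record the outbound alert instead of calling a real API.
    if ctx.decision == "escalate":
        ctx.actions.append(f"alert: frame {ctx.frame_id}: {ctx.description}")
    return ctx


LAYERS = [ingest, perceive, understand, reason, act]


def run_pipeline(ctx):
    """Pass the context through each layer in order."""
    for layer in LAYERS:
        ctx = layer(ctx)
    return ctx


result = run_pipeline(FrameContext(frame_id=1, pixels=b"\x00\x01"))
print(result.decision, result.actions)
```

Keeping each layer behind the same function signature is one way to swap a model or an integration without touching the rest of the pipeline.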

LLM-Powered Video Agents Architecture: Where Language Meets Vision

The real breakthrough in modern LLM-powered video agents architecture is the fusion of visual perception with natural language reasoning. Instead of simply detecting objects in a frame, these agents can:

- Describe what is happening in a scene in real time

- Answer questions about a scene

- Trigger context-aware actions

For example, a manufacturing floor agent does not just detect that a machine part is out of alignment. It interprets the visual anomaly, cross-references it with historical defect patterns stored in a RAG pipeline, and generates an incident report with recommended corrective actions, all without human input.

This is where multimodal AI video agents with vision models truly shine. By combining foundation models like GPT-4V or Gemini Pro Vision with domain-specific fine-tuning, you can create agents that understand both the visual and operational context of your enterprise. Building RAG-powered architectures can be instrumental in making these agents contextually aware and enterprise-ready.
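The cross-referencing step in the manufacturing example can be sketched in a few lines of Python. This is a toy illustration: a production RAG pipeline would normally use embeddings and a vector store, whereas this sketch swaps in simple word-overlap (Jaccard) similarity so it runs with the standard library alone. The defect records, IDs, and the `build_prompt` helper are hypothetical.

```python
def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(text.lower().split())


def jaccard(a, b):
    """Word-overlap similarity between two token sets, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical historical defect records; a real system would query a vector store.
HISTORY = [
    {"id": "D-101", "text": "conveyor belt misaligned near station four", "fix": "re-tension belt"},
    {"id": "D-102", "text": "robot arm gripper misaligned with part fixture", "fix": "recalibrate gripper"},
    {"id": "D-103", "text": "paint overspray on door panel", "fix": "clean nozzle"},
]


def retrieve(observation, k=1):
    """Return the k past incidents most similar to the new observation."""
    query = tokenize(observation)
    ranked = sorted(HISTORY, key=lambda r: jaccard(query, tokenize(r["text"])), reverse=True)
    return ranked[:k]


def build_prompt(observation):
    """Assemble the text an LLM would receive: the observation plus retrieved context."""
    matches = retrieve(observation)
    context = "\n".join(f"- {m['id']}: {m['text']} (past fix: {m['fix']})" for m in matches)
    return f"Observation: {observation}\nSimilar past incidents:\n{context}\nDraft an incident report."


print(build_prompt("gripper on robot arm looks misaligned"))
```

The observation text would come from the vision-language model, and the assembled prompt would go to the LLM that drafts the incident report.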

Use Cases of AI Video Agent Development Across Industries

The use cases of AI video agent development extend far beyond basic surveillance. Enterprises across dozens of industries are deploying these agents to solve operational challenges that were previously unsolvable at scale. Here are the most impactful use cases from the industry:

| Industry | Use Case | Business Impact |
|---|---|---|
| Manufacturing | Automated visual quality inspection on assembly lines | Up to 90% reduction in defect escape rate |
| Healthcare | Real-time surgical assist and remote patient monitoring via video | Faster triage, reduced clinician workload by 30%+ |
| Retail | In-store customer behavior analysis and shelf compliance checks | 15-25% uplift in planogram adherence |
| Logistics | Warehouse operations monitoring and forklift safety compliance | 40% reduction in workplace incidents |
| Security | Anomaly detection in live CCTV feeds with automated alerting | Real-time threat identification, reduced false alarms by 60% |
| Media & Content | Automated content moderation, tagging, and compliance screening | 10x faster review cycles vs. manual moderation |
| Agriculture | Drone-based crop health monitoring and pest detection | 20-30% improvement in early intervention rates |
| Construction | Worksite safety monitoring (PPE detection, zone violations) | Proactive compliance enforcement, reduced OSHA incidents |


The applications of computer vision in business range from cancer detection and road monitoring to crop harvesting and preventive maintenance.

This breadth is what enables AI video agents to evolve beyond monitoring into decision-making systems. When you layer agentic intelligence on top of computer vision, the possibilities multiply exponentially.

Key Features of AI Video Agents Development

Before writing a single line of code for AI video agent development, ask yourself: What exactly must this thing do in the wild, completely untouched by human hands?

If you want a system that actually works, these are the non-negotiable key features of AI video agents development that separate a neat prototype from a hardened, production-grade beast.

- Real-Time Video Stream Processing: Latency kills. If your agent isn’t chewing through live feeds and spitting out answers in under a second, it’s useless. Nailing this brutal speed is the absolute bedrock of any serious real-time AI video analysis system development.

- Multi-Camera Orchestration: Forget single-camera tech demos. Real enterprise environments run on pure chaos. Your system has to juggle dozens, if not thousands, of concurrent streams without dropping frames or buckling under the load.

- Object Detection, Tracking, and Classification: This is the core perception engine. We’re talking about wiring up heavy hitters like YOLOv8 or DINO to lock onto targets, track their movement, and ruthlessly categorize every pixel that matters.

- Deep Scene Understanding: Drawing a bounding box around a truck is easy. Grasping the context? That’s hard. The system has to look at the scene, figure out exactly why that truck’s position is a problem, and instantly dictate what happens next.

- Natural Language Query Interface: Your operators shouldn’t need a computer science degree to pull up an event. They need to type plain English, such as “Show me everyone who tailgated at Gate 3 since Tuesday,” and get the exact footage instantly.

- Automated Escalation Workflows: Merely seeing an anomaly isn’t enough; the agent has to act. When things go sideways, it must immediately fire off predefined alerts directly into your Slack, SMS, or incident management software without hesitation.

- Unbreakable Audit Trails: You need receipts. Every single decision the agent makes must be logged with timestamped visual evidence and a clear reasoning trace. When the compliance auditors inevitably show up, you hand them the indisputable facts.

- Edge-Cloud Hybrid Deployment: Sending raw 4K video up to the cloud to think is a fool’s errand. You run the brutal, latency-sensitive inference right there at the edge, and only pipe the refined insights up to the cloud for deep analytics.

- Continuous Learning and Drift Monitoring: Models rot. Lighting changes. A true production agent constantly monitors its own accuracy, aggressively flagging the exact moment its performance starts to drift and demanding a retrain before it makes a costly mistake.
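Of the features above, the audit trail is the easiest to show in code. The following Python sketch logs each decision with a timestamp, an evidence pointer, and a reasoning trace. The hash chaining that makes tampering detectable is our own illustrative addition, not something the feature list prescribes, and the camera IDs and clip paths are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body):
    """SHA-256 over a stable JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def make_audit_record(prev_hash, camera_id, decision, reasoning, evidence_uri, timestamp=None):
    """Build one log entry whose hash covers its content and the previous entry's hash."""
    body = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "camera_id": camera_id,
        "decision": decision,
        "reasoning": reasoning,
        "evidence_uri": evidence_uri,   # pointer to the stored clip or frame
        "prev_hash": prev_hash,
    }
    return {**body, "hash": _digest(body)}


def verify_chain(records, genesis="0" * 64):
    """Return True if every record is unmodified and links to the one before it."""
    prev = genesis
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev_hash"] != prev or rec["hash"] != _digest(body):
            return False
        prev = rec["hash"]
    return True


# Hypothetical entries for illustration.
first = make_audit_record("0" * 64, "gate-3", "escalate", "tailgating detected", "clips/0001.mp4")
second = make_audit_record(first["hash"], "gate-3", "ignore", "authorized badge", "clips/0002.mp4")
log = [first, second]
print(verify_chain(log))
```

Because each entry's hash includes the previous hash, editing or deleting an earlier record breaks verification for everything after it.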

Required Skills to Build AI Video Agents

To successfully build AI video agents that perform reliably in production, your team needs a cross-functional mix of deep technical skills. This is not a project you hand to a single ML engineer. Based on industry requirements for high-quality AI development services, here is the skill matrix to hire against:

| Skill Domain | Specific Competencies | Why It Matters |
|---|---|---|
| Computer Vision | Object detection (YOLO, DINO), segmentation (SAM), tracking (DeepSORT), pose estimation | Core perception capability for any video agent |
| Deep Learning | PyTorch, TensorFlow, model training, transfer learning, fine-tuning | Custom model development for domain-specific accuracy |
| LLM & Multimodal AI | GPT-4V, Gemini Vision, LLaVA, prompt engineering, RAG integration | Enables contextual reasoning and natural language interaction |
| Video Engineering | FFmpeg, GStreamer, RTSP, WebRTC, codec optimization | Efficient ingestion and preprocessing of video streams |
| MLOps & Infrastructure | Docker, Kubernetes, model serving (TorchServe, Triton), CI/CD for ML | Production deployment, scaling, and monitoring |
| Edge Computing | NVIDIA Jetson, TensorRT, ONNX optimization, edge deployment | Low-latency inference at the source |
| Data Engineering | Video annotation pipelines, data labeling, ETL for unstructured data | Training data quality directly determines model accuracy |
| Security & Compliance | Encryption, access control, audit logging, and regulatory frameworks | Non-negotiable for healthcare, finance, and government deployments |

Tools for AI Video Agent Development

The tooling landscape for AI video agent development has matured significantly. Below is the recommended stack:

Vision & Perception

- OpenCV: The workhorse for image/video preprocessing, feature extraction, and classical vision tasks.

- Ultralytics YOLOv8/v9: State-of-the-art real-time object detection and segmentation.

- Meta SAM 2: Segment Anything Model for zero-shot segmentation in video.

- Google MediaPipe: Lightweight on-device vision solutions for pose, face, and hand tracking.

LLM & Multimodal Reasoning

- OpenAI GPT-4V / GPT-4o: Industry-leading multimodal models for visual reasoning.

- Google Gemini Pro Vision: Strong alternative with native video understanding capabilities.

- LLaVA / CogVLM: Open-source vision-language models for on-premise deployments.

- LangChain / LlamaIndex: Orchestration frameworks for chaining retrieval, reasoning, and action.

Infrastructure & Deployment

- NVIDIA Triton Inference Server: Production-grade model serving with multi-model support.

- NVIDIA Jetson (Orin/AGX): Edge inference hardware for real-time processing.

- AWS SageMaker / Azure ML / GCP Vertex AI: Cloud-native MLOps platforms.

- Apache Kafka: Event streaming for high-throughput video pipeline data.

- Docker + Kubernetes: Containerization and orchestration for scalable deployments.

Annotation & Data Labeling

- CVAT: Open-source annotation tool for images and video.

- Label Studio: Flexible data labeling with multi-format support.

- Roboflow: End-to-end platform for dataset management, augmentation, and model training.

How to Build AI Video Agents: A Step-by-Step Guide

This is the section that separates strategists from doers. If you have been wondering how to build AI video agents that actually work in production, here is the process we often observe in the industry, refined across hundreds of AI deployments.

Step 1: Nail Down the Business Problem

Stop looking at the tech stack first. Find the bleeding operational wound. Are you trying to catch assembly line defects, police hardhat compliance, or flag security anomalies in a messy CCTV feed? Once you find it, set ruthless KPIs. False positives, sub-second latency thresholds, hard ROI targets. If you can’t measure it, don’t build it.
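One way to make those KPIs measurable is to write them down as code before any model exists. The Python sketch below checks precision, recall, and 95th-percentile latency against targets. The target values and pilot numbers are hypothetical placeholders, and the nearest-rank percentile is one simple convention among several.

```python
import math


def precision(true_pos, false_pos):
    """Share of raised alerts that were real events."""
    total = true_pos + false_pos
    return true_pos / total if total else 0.0


def recall(true_pos, false_neg):
    """Share of real events that the agent caught."""
    total = true_pos + false_neg
    return true_pos / total if total else 0.0


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def meets_kpis(true_pos, false_pos, false_neg, latencies_ms, targets):
    """Return True only if every KPI target is met."""
    return (
        precision(true_pos, false_pos) >= targets["min_precision"]
        and recall(true_pos, false_neg) >= targets["min_recall"]
        and percentile(latencies_ms, 95) <= targets["max_p95_latency_ms"]
    )


# Hypothetical targets and pilot measurements, for illustration only.
targets = {"min_precision": 0.90, "min_recall": 0.85, "max_p95_latency_ms": 1000}
latencies = [120, 180, 240, 310, 95, 400, 150, 220, 870, 130]

print(round(precision(92, 8), 3), round(recall(92, 12), 3), percentile(latencies, 95))
print(meets_kpis(92, 8, 12, latencies, targets))
```

Tracking a latency percentile rather than the average keeps a handful of slow frames from hiding behind many fast ones.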

Step 2: Attack the Data Strategy

Video data is brutally expensive. It costs money to hoard it and even more to label it. Build your data pipeline immediately. You must gather footage from the actual trenches—capturing the ugly edge cases, terrible lighting, and awkward camera angles. Do not cheap out on human annotation. Garbage labels guarantee a garbage model.

Step 3: Lock in the AI Video Pipeline Architecture

You need a foundation that won’t crack under pressure. Map out your perception models and deployment targets right now. If latency will kill the project, shove an NVIDIA Jetson box right at the edge and sync the leftovers to the cloud. Need heavy analytics? A massive cloud-native setup on AWS or GCP is your weapon of choice.

Step 4: Train the Vision Models

Here is where computer vision AI video agent development actually gets its hands dirty. Grab heavy-hitters like YOLO, DINO, or SAM and violently fine-tune them against your custom data. Then, iterate. Train, fail, find out why, re-annotate, and train again. It usually takes three to five grueling cycles before the model stops acting like an amateur.

Step 5: Wire up Multimodal Reasoning

Connecting the brain. You have to layer in multimodal AI video agents with vision models so the system actually understands what it is staring at. Hook your vision outputs straight into an orchestration beast like LangChain. Now it can reason about a visual anomaly, dig through your proprietary knowledge bases, and make a decision. This step transforms a dumb camera into an autonomous agent.

Step 6: Forge the Action Layer

Intelligence without action is just an expensive dashboard. Make it do something. Splice the agent directly into your ERP, alerting systems, and incident trackers. Keep the workflows highly configurable. When rules change on the floor, the operations team should be able to tweak escalations without begging IT for an update.
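A common way to keep escalations configurable is to hold the rules as data rather than code. The Python sketch below assumes a hypothetical JSON rule format and stub senders that only record what they would send; real senders would call Slack, an SMS gateway, or an incident tracker.

```python
import json

# Rules live in data (here a JSON string) so operations staff can edit them without a code change.
# The event names, thresholds, and channel names are hypothetical.
RULES_JSON = """
[
  {"event": "ppe_violation", "min_confidence": 0.80, "channels": ["slack", "sms"]},
  {"event": "zone_intrusion", "min_confidence": 0.60, "channels": ["incident_tracker"]}
]
"""


def load_rules(raw):
    """Index the rules by event type for quick lookup."""
    return {rule["event"]: rule for rule in json.loads(raw)}


def route(event, rules, senders):
    """Send the event to every channel its rule names; return the channels used."""
    rule = rules.get(event["type"])
    if rule is None or event["confidence"] < rule["min_confidence"]:
        return []
    for channel in rule["channels"]:
        senders[channel](event)
    return list(rule["channels"])


# Stub senders that record messages instead of calling external services.
outbox = []
senders = {
    name: (lambda ev, name=name: outbox.append((name, ev["type"])))
    for name in ("slack", "sms", "incident_tracker")
}

rules = load_rules(RULES_JSON)
print(route({"type": "ppe_violation", "confidence": 0.91}, rules, senders))
print(route({"type": "zone_intrusion", "confidence": 0.40}, rules, senders))
print(outbox)
```

Changing who gets notified, or at what confidence, then means editing the rule data, not redeploying the agent.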

Step 7: Break It on Purpose

Test it until it shatters. Throw every lighting change, occlusion, and adversarial curveball you can imagine at the system. Does it hit your KPIs? If it processes sensitive footage, harden the security until it’s a fortress. Do not deploy a liability.

Step 8: Deploy and Hunt for Drift

Launch it, but don’t walk away. Instrument the entire pipeline with aggressive monitoring—track the latency, watch for model drift, and obsess over performance dashboards. Funnel those field failures directly back into your retraining loop. Just like the hard lessons we learned about structuring prompt routing while building RAG chatbots, forcing a continuous feedback loop is the only way to keep a scaled system from collapsing.
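As a rough illustration of drift monitoring, the Python sketch below compares the mean detection confidence over a rolling window with a baseline recorded at launch. Confidence is only a proxy signal; a production setup would usually also score periodically labeled samples. The class name, window size, and threshold are assumptions for the example.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags drift when recent mean confidence falls well below a baseline."""

    def __init__(self, baseline_mean, window=50, max_drop=0.10):
        self.baseline_mean = baseline_mean
        self.max_drop = max_drop
        self.recent = deque(maxlen=window)   # oldest scores fall off automatically

    def observe(self, confidence):
        """Record the confidence of one new detection."""
        self.recent.append(confidence)

    def drifted(self):
        """Return True once a full window sits more than max_drop below baseline."""
        # Wait for a full window so a few odd frames do not trigger a retrain.
        if len(self.recent) < self.recent.maxlen:
            return False
        return self.baseline_mean - mean(self.recent) > self.max_drop


monitor = DriftMonitor(baseline_mean=0.90, window=5, max_drop=0.10)

for score in [0.91, 0.89, 0.90, 0.92, 0.88]:
    monitor.observe(score)
print(monitor.drifted())   # window close to the baseline

for score in [0.70, 0.72, 0.68, 0.71, 0.69]:
    monitor.observe(score)
print(monitor.drifted())   # window well below the baseline
```

When `drifted()` flips to true, the frames from that window are natural candidates for re-annotation in the retraining loop.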


Cost to Build AI Video Agent Solutions

The cost to build AI video agent solutions varies significantly based on scope, complexity, and deployment requirements. Below is a realistic breakdown based on our project experience.

| Cost Component | Basic Agent | Mid-Complexity Agent | Enterprise-Grade Agent |
|---|---|---|---|
| Discovery & Strategy | $5,000 – $15,000 | $15,000 – $30,000 | $30,000 – $60,000 |
| Data Collection & Annotation | $10,000 – $25,000 | $25,000 – $75,000 | $75,000 – $200,000 |
| CV Model Development | $15,000 – $40,000 | $40,000 – $100,000 | $100,000 – $250,000 |
| LLM/Multimodal Integration | $5,000 – $15,000 | $20,000 – $60,000 | $60,000 – $150,000 |
| Infrastructure & DevOps | $5,000 – $10,000 | $15,000 – $40,000 | $40,000 – $100,000 |
| Testing & QA | $5,000 – $10,000 | $10,000 – $30,000 | $30,000 – $80,000 |
| Deployment & Integration | $5,000 – $10,000 | $15,000 – $40,000 | $40,000 – $100,000 |
| TOTAL ESTIMATE | $50,000 – $125,000 | $140,000 – $375,000 | $375,000 – $940,000+ |
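The totals row is simply the column-wise sum of the component ranges. The short Python check below reproduces it from the figures in the table, which is handy if you want to drop or rescale a component for your own estimate. The `COSTS` structure and function name are ours, for illustration.

```python
# Component ranges in USD, copied from the cost table: one (low, high) pair per tier.
COSTS = {
    "Discovery & Strategy":         [(5_000, 15_000), (15_000, 30_000), (30_000, 60_000)],
    "Data Collection & Annotation": [(10_000, 25_000), (25_000, 75_000), (75_000, 200_000)],
    "CV Model Development":         [(15_000, 40_000), (40_000, 100_000), (100_000, 250_000)],
    "LLM/Multimodal Integration":   [(5_000, 15_000), (20_000, 60_000), (60_000, 150_000)],
    "Infrastructure & DevOps":      [(5_000, 10_000), (15_000, 40_000), (40_000, 100_000)],
    "Testing & QA":                 [(5_000, 10_000), (10_000, 30_000), (30_000, 80_000)],
    "Deployment & Integration":     [(5_000, 10_000), (15_000, 40_000), (40_000, 100_000)],
}
TIERS = ["Basic", "Mid-Complexity", "Enterprise-Grade"]


def tier_totals(costs):
    """Sum the low and high ends of every component, tier by tier."""
    totals = []
    for i in range(len(TIERS)):
        low = sum(ranges[i][0] for ranges in costs.values())
        high = sum(ranges[i][1] for ranges in costs.values())
        totals.append((low, high))
    return totals


for tier, (low, high) in zip(TIERS, tier_totals(COSTS)):
    print(f"{tier}: ${low:,} to ${high:,}")
```

The sums come out to $50,000 to $125,000, $140,000 to $375,000, and $375,000 to $940,000, matching the totals row.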

These costs are typically offset by operational gains. For comparison, broader AI agent development, including complex tasks such as generating or analyzing videos, is commonly estimated at $40,000 to $400,000+; the enterprise-grade tier in the table above exceeds that range. Even so, the ROI from reduced labor, faster incident response, and compliance automation often delivers payback within 6-12 months.

What Drives the AI Video Agent Development Cost

The AI video agent development cost is influenced by several variables that can shift the budget in either direction:

- Number of video streams and cameras: More streams require more compute and more complex orchestration.

- Real-time vs. batch processing requirements: Real-time AI video analysis system development demands GPU-accelerated edge hardware, which adds to the cost.

- Custom model training vs. pre-trained models: Custom models deliver higher accuracy but require significant data annotation and training compute.

- Compliance and security requirements: Healthcare (HIPAA), financial (SOC 2), and government (FedRAMP) deployments add compliance engineering costs.

- Integration complexity: Connecting to legacy ERP, SCADA, or custom enterprise systems increases development effort.

Custom AI Video Agent Development Cost vs. Enterprise Pricing

The custom AI video agent development cost differs from enterprise contract pricing. The estimates above cover the build itself. An enterprise contract typically also includes SLAs, dedicated support, model retraining schedules, and infrastructure management, adding 15-25% of the base development cost annually.
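The 15-25% figure is easy to turn into a budget line. The Python sketch below assumes the add-on is a flat percentage of the base build cost each year, with no inflation or scope changes; that simplification, the function names, and the $375,000 example base are ours.

```python
def annual_contract_cost(base_cost, low_rate=0.15, high_rate=0.25):
    """Return the (low, high) yearly add-on for an enterprise contract."""
    return base_cost * low_rate, base_cost * high_rate


def multi_year_total(base_cost, years, rate):
    """Base build cost plus a flat yearly add-on at the given rate (assumption: no inflation)."""
    return base_cost + base_cost * rate * years


low, high = annual_contract_cost(375_000)
print(f"Annual add-on on a $375,000 build: ${low:,.0f} to ${high:,.0f}")
print(f"Three-year total at 20%: ${multi_year_total(375_000, 3, 0.20):,.0f}")
```

On a $375,000 build, that works out to roughly $56,250 to $93,750 per year.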

Compliances to Keep in Mind for AI Video Agent Development

Deploying AI systems that process video data brings significant regulatory responsibility. Ignoring compliance is not just risky; it can shut down your project entirely. Here are the key frameworks you must address when you build AI video agents:

| Regulation | Scope | Impact on Video Agents |
|---|---|---|
| GDPR (EU) | Personal data protection, right to erasure, consent management | Facial recognition and biometric processing require explicit consent and data minimization |
| CCPA/CPRA (California) | Consumer privacy rights, data sale restrictions | Video data containing identifiable individuals triggers consumer rights obligations |
| HIPAA (US Healthcare) | Protected health information security | Video agents in clinical settings must encrypt data and maintain audit trails |
| EU AI Act | Risk-based AI regulation, high-risk system requirements | Remote biometric identification is classified as high-risk; real-time use in publicly accessible spaces for law enforcement is prohibited outside narrow exceptions |
| SOC 2 Type II | Security, availability, processing integrity | Required for SaaS-based video analytics platforms serving enterprise clients |
| BIPA (Illinois) | Biometric data collection and storage | Strict consent and retention requirements for facial recognition systems |

Enterprise AI consulting frameworks built for compliance emphasize accountability, risk management, and measurable ROI. Compliance is not an afterthought; it is a design constraint that must be baked into the architecture from day one.

Challenges and Solutions in AI Video Agent Development

No AI video agent development project is without hurdles. Here are the most common challenges we encounter and how we solve them:

| Challenge | Root Cause | Our Solution |
|---|---|---|
| High latency in real-time processing | Unoptimized models, cloud-only architecture | Edge-cloud hybrid with TensorRT optimization; sub-100ms inference |
| Model accuracy degradation over time | Data drift, environmental changes | Automated drift detection with scheduled retraining pipelines |
| Massive storage and bandwidth costs | Storing raw video 24/7 | Event-driven recording, intelligent frame sampling, video compression |
| Privacy and consent management | Facial recognition on public feeds | On-device anonymization (face blurring) before cloud transmission |
| Integration with legacy systems | Proprietary protocols, limited APIs | Middleware adapters and protocol translators for SCADA, ONVIF, etc. |
| Scaling across distributed sites | Inconsistent network conditions | Containerized edge agents with offline capability and sync-on-connect |
| High false positive rates | Insufficient training data diversity | Active learning pipelines that surface hard negatives for targeted retraining |
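To illustrate the "intelligent frame sampling" entry in the table, here is a minimal Python sketch that keeps a frame only when it differs enough from the last frame kept. It is one simple approach among many, shown with toy four-pixel grayscale frames and a hypothetical threshold; a real pipeline would operate on decoded video frames.

```python
def mean_abs_diff(frame_a, frame_b):
    """Average per-pixel difference between two equally sized grayscale frames."""
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)


def sample_frames(frames, threshold=10.0):
    """Keep the first frame, then only frames that differ enough from the last kept one."""
    kept = []
    last = None
    for index, frame in enumerate(frames):
        if last is None or mean_abs_diff(frame, last) > threshold:
            kept.append(index)
            last = frame
    return kept


# Toy 4-pixel grayscale frames: a static scene, small flicker, then a real change.
frames = [
    [10, 10, 10, 10],
    [10, 11, 10, 10],   # flicker
    [10, 10, 12, 10],   # flicker
    [90, 95, 80, 10],   # something entered the scene
    [91, 95, 80, 11],   # scene settles
]
print(sample_frames(frames))
```

With these toy frames, only indices 0 and 3 are kept, so three of the five frames never need to be stored or sent upstream.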

The goal here isn’t running endless sandbox experiments. The goal is engineering systems that survive the chaos of day-to-day operations.

How Can Appinventiv Help You Build AI Video Agents?

We do not approach AI video agent development as a technology showcase. We approach it as a business problem that requires precision engineering, domain expertise, and production discipline. Here is what sets us apart:

10+ Years of Enterprise AI Experience

With 3,000+ projects delivered across 35+ industries and 1,600+ skilled professionals, we bring a depth of experience that most AI agencies simply cannot match. We have built AI systems for Fortune 500 companies and high-growth startups alike.

Full-Stack AI Capabilities

From computer vision development and LLM development to RAG architectures and generative AI services, we cover the entire AI stack in-house. No fragmented vendor management, no gaps in expertise.

Production-First Mindset

We build for production, not demos. Every agent we develop includes comprehensive monitoring, drift detection, automated retraining pipelines, and enterprise-grade security. Our AI services and solutions are designed to work across foundation models, domain-specific LLMs, vision models, and multilingual transformers.

Proven Track Record

From building an AI platform with a multi-agent RAG architecture for MyExec to engineering real-time logistics intelligence systems for Americana, our portfolio demonstrates our commitment to solving complex AI challenges at scale. Consecutive Deloitte Tech Fast 50 Awards in 2023 and 2024 validate our engineering velocity and innovation.

Flexible Engagement Models

Whether you need end-to-end development, a dedicated team extension, or strategic AI consulting services to define your roadmap, we adapt to your operating model. Our engagement structures are designed for enterprise accountability with transparent milestones and deliverables.

FAQs

Q. How do you build AI video agents from scratch?

A. Start with the business problem; don’t just write code. Next, collect and painfully annotate your video data. Choose your computer vision models, wire up the LLM reasoning layer, and build out the integrations. Deploy and monitor relentlessly. Doing this solo eats up 6–12 months. Bringing in an experienced AI agent development company cuts that timeline to shreds.

Q. What are the most common use cases of AI video agents?

A. The big wins happen in manufacturing quality inspection and workplace safety compliance monitoring. Retail relies on them for customer behavior analytics, while hospitals use them for remote patient monitoring. Add in security anomaly detection and media content moderation. Bottom line: every single use case demands a bespoke AI video pipeline architecture.

Q. What technologies are required to build AI video agents?

A. You need heavy-hitting computer vision frameworks (OpenCV, YOLO, SAM) and deep learning libraries (PyTorch, TensorFlow). Marry those to multimodal LLMs (GPT-4V, Gemini Vision). Process the pixels with FFmpeg or GStreamer, and orchestrate via LangChain or AutoGen. Finally, host the chaos on AWS, GCP, or NVIDIA Jetson, backed by MLOps tooling like Docker and Kubernetes.

Q. How do LLMs and vision models work together in video agents?

A. Vision models are the eyes; LLMs are the brain. The perception layer detects and tracks objects. But the LLM reasoning layer actually understands the scene, answering natural language queries and triggering actions. This brutal efficiency is what makes multimodal AI video agents work—they see and think at the exact same time.

Q. Can AI video agents process real-time video streams?

A. Yes, but it takes serious engineering muscle. For real-time AI video analysis system development, we optimize models heavily using TensorRT or ONNX and push inference to the edge via NVIDIA Jetson Orin. With streams ingested over RTSP or WebRTC, that setup can bring inference latency under 100ms. The trick is gaining that speed without destroying accuracy.

Q. How much does custom AI video agent development cost?

A. The custom AI video agent development cost scales strictly with your ambition. A basic, single-camera setup starts around $50,000. An enterprise-grade, multi-site beast? Anywhere from $375,000 to $940,000+. Your video volume, custom training, and integration depth dictate the final bill. Don’t forget the long game: annual maintenance eats 15-25% of your initial build budget.

Q. What compliance should enterprises consider for AI video agents?

A. Video data is a legal minefield. Europe enforces GDPR and the EU AI Act. California has CCPA/CPRA, and Illinois has the brutal BIPA for biometrics. Add HIPAA for healthcare and SOC 2 for SaaS. To survive an audit, you must hardcode consent management, data minimization, and encryption from day one.
