- The Quick Difference Between Agentic Coding vs. Vibe Coding

- The Meaning of Both Concepts

- Why This Shift Happened (and Why It Happened Now)

- System Architecture: How the Two Approaches Structure a Codebase

- Orchestration: Vibe Coding vs. Agentic Coding

- How Does the Development of Agentic Coding and Vibe Coding Differ?

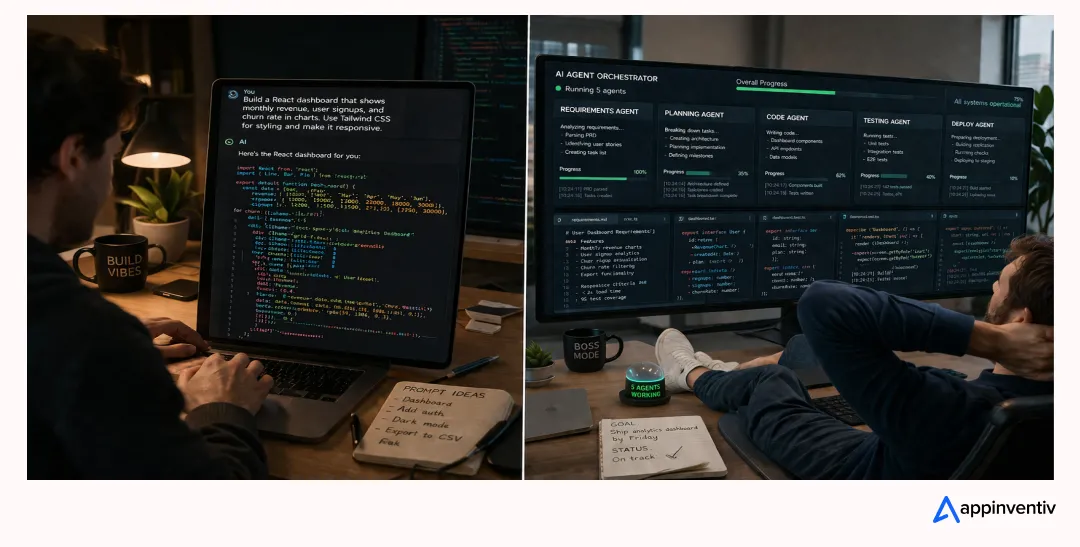

- Agentic Workflows vs. Prompt-Based Coding: The Day-to-Day Difference

- Use Cases of Agentic Coding vs. Vibe Coding

- Security, Quality, and Governance: The Part Leadership Cares About

- Skill Requirements for Vibe Coding vs. Agentic Coding

- How Can Appinventiv Help You Out?

- FAQs

Key takeaways:

- Prompt-driven workflows in Vibe Coding prioritize speed and iteration, whereas Agentic Coding begins with structured planning to ensure long-term reliability.

- Review and testing are often skipped in Vibe Coding, but Agentic Coding enforces rigorous validation through automated tests and PR-level scrutiny.

- A single conversational loop defines how Vibe Coding operates, while Agentic Coding coordinates multiple agents working in parallel with defined roles.

- Quick, disposable outputs are typical in Vibe Coding, whereas Agentic Coding focuses on scalable systems built to handle real-world complexity over time.

- High risk and weak accountability characterize Vibe Coding in production, while Agentic Coding embeds governance, auditability, and compliance into the workflow.

Somewhere between “ChatGPT, write me a to-do app” and “design, ship, and maintain a HIPAA-compliant clinical workflow platform,” software engineering has quietly split into two different disciplines. One of them is fun. The other one is what enterprises actually need.

The current shift is bigger than any framework war anybody has lived through before. It isn’t Angular vs. React. It isn’t REST vs. GraphQL. It is the quiet reshaping of who, or what, holds the pen when production code gets written.

The industry is now settling on two labels for this split: Agentic Coding and Vibe Coding. They sound similar. They are not. And if you are deciding how your teams should use Claude Code, Cursor, Copilot, or Devin on your next release, or evaluating AI agent development services for production use, conflating the two is the single most expensive mistake you can make this decade.

This guide breaks down the Agentic Coding vs. Vibe Coding debate with no hype and no doom: just the architecture, the tradeoffs, and the places where each one earns its keep.

The Quick Difference Between Agentic Coding vs. Vibe Coding

If your team is shipping anything that touches patient data, money, personally identifiable information, or a contract with uptime penalties, stop reading vibe-coding tutorials and skip ahead to the Agentic Coding sections.

This is the crux of the Vibe Coding vs. AI-Augmented Development conversation: one is improvisation, the other is engineering with AI as leverage.

| Dimension | Vibe Coding | Agentic Coding |
|---|---|---|
| Who drives | A human typing prompts in a conversational loop | A human architecting a plan, AI agents executing it |
| Code review | Often skipped ("go with the vibes") | Mandatory, treated like a human PR |
| Best fit | Throwaway prototypes, MVPs, internal scripts, learning | Production systems, regulated industries, long-lived codebases |
| Architecture work | Minimal upfront | Design doc or spec before any agent runs |
| Testing | Ad hoc, often manual | Automated test suites are the control surface |
| Accountability | Fuzzy: AI wrote it, humans accepted it | Humans own architecture, quality, and correctness |
| Scale ceiling | Breaks past a few thousand lines or multi-service work | Built to orchestrate multi-agent, multi-module systems |
| Risk profile | High for anything customer-facing or regulated | Controlled via guardrails, CI/CD, and human checkpoints |

The Meaning of Both Concepts

Before comparing the two, we need clean definitions. The conversation online has become a mess precisely because people use “Vibe Coding” to describe everything from a Saturday-night hackathon to a disciplined, test-driven agent workflow. Those are not the same activity, and pretending they are is how organizations end up with unmaintainable code in production.

What Is Vibe Coding?

There’s a new kind of coding I call “vibe coding”, where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It’s possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper…

— Andrej Karpathy (@karpathy) February 2, 2025

The term "vibe coding" was coined by Andrej Karpathy in that February 2025 tweet. The idea is simple: you describe what you want in natural language, the model writes the code, you run it, and if something breaks, you paste the error back and try again. You don’t read the diffs carefully. You don’t hold the AI to a spec. You go with the flow.

Simply put, Vibe Coding prioritizes momentum over inspection: you prompt, execute, and validate through output rather than reviewing the underlying logic. In this setup, the human acts less like an engineer and more like someone steering the prompts.
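To make that loop concrete, here is a minimal Python sketch of the vibe workflow. `ask_model` is a hypothetical stand-in for whatever chat model you use, not a real vendor API. The point to notice is that the only acceptance check is whether the generated code runs without raising.

```python
from typing import Callable


def vibe_loop(ask_model: Callable[[str], str], request: str, max_turns: int = 5) -> str:
    """Prompt, run, paste the error back, repeat. `ask_model` is hypothetical."""
    prompt = request
    for _ in range(max_turns):
        code = ask_model(prompt)
        try:
            # The only "validation": did the generated code run without raising?
            # (Never exec untrusted model output outside a sandbox; illustration only.)
            exec(code, {})
            return code  # Accepted. Nobody checked whether it is correct.
        except Exception as err:
            # Paste the error back into the conversation and try again.
            prompt = f"{request}\n\nThat failed with {err!r}. Please fix it."
    raise RuntimeError(f"still failing after {max_turns} turns")
```

A reply that computes the wrong total but runs cleanly passes this loop on the first try. That is the "plausible is not the same as correct" risk in code form.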

Vibe Coding is a perfectly respectable activity for the right context. A weekend hack. A personal automation. A disposable script that scrapes one page, one time. A classroom exercise where the point is to learn by watching the model code.

It stops being respectable the moment the output is expected to survive contact with real users, real data, or real auditors. Vibe Coding and traditional development carry very different security profiles, and security researchers keep surfacing new classes of vulnerability that arrive with the prompt-driven approach.

What Is Agentic Coding?

Agentic Coding is a distinct discipline, and the difference isn’t subtle. It marks a shift from improvisation to structured execution.

The “agentic” part refers to working with autonomous coding systems that can navigate codebases, execute tasks, and refine their own outputs. But they don’t operate independently in any meaningful sense — they operate within boundaries you define.

The “engineering” part is where the real distinction lies. This approach brings back everything that gets dropped in more casual workflows: intentional architecture, documentation, testing discipline, code review, and security thinking. None of that is optional here.

In simple terms, Agentic Coding means using AI as leverage, not as a replacement for responsibility. The tools can generate, iterate, and accelerate — but the direction, the standards, and the final calls stay with you.

You may not write every line, but you are accountable for every outcome. This is also why the conversation about AI agents in enterprise environments has shifted away from “can we let the model run free” toward “how do we keep humans in the loop where it matters.”

Why This Shift Happened (and Why It Happened Now)

For the first two years of the generative AI wave, coding assistants were basically autocomplete with opinions. Useful, but incremental. That changed in late 2025 when models crossed a threshold where they could plan, execute shell commands, run tests, and loop on failures without hand-holding. Claude Code, Cursor’s agent mode, OpenAI’s Codex, and GitHub Copilot’s agentic features all hit maturity within months of each other.

The numbers tell the story. Stack Overflow’s 2025 Developer Survey — the largest annual snapshot of developer sentiment — found that 84% of respondents are using or planning to use AI tools in their development process, up from 76% in 2024, and 51% of professional developers now use AI tools daily. Adoption is no longer the question.

Trust, on the other hand, is collapsing. The same survey recorded a sharp drop in confidence, with only 29% of 2025 respondents saying they trust the accuracy of AI tools, down eleven percentage points from 2024.

Developers are leaning on AI more and believing it less — a contradiction that only makes sense if you accept that people are using the tools for different tasks than they used to. The easy stuff is working. The hard stuff is still hard. And the “vibe” approach that was compatible with prototypes is hitting a wall when it touches anything that matters.

That wall is exactly where Agentic Coding becomes relevant.

Three forces pushed the shift:

- Models became capable enough to loop. Until late 2025, coding agents could draft but not iterate reliably. Once the loop closed (generate, test, fix, retest), a new kind of workflow became possible, and the conversation moved from single-shot prompting to treating AI agent orchestration and LLM coding as two distinct operating modes.

- Vibe Coding started breaking in production. Teams that shipped vibe-coded apps quickly discovered the technical debt, security holes, and maintenance nightmares hiding underneath.

- Enterprise buyers got serious. Gartner, Forrester, and the big consultancies started pressing C-suites on agentic AI roadmaps, and “let the model wing it” stopped being an acceptable strategy, especially for organizations already investing in AI consulting services to define governance, architecture, and risk boundaries upfront.
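The closed loop from the first point (generate, test, fix, retest) differs from the vibe loop in two ways. First, a test suite decides success, not "it ran." Second, the loop is bounded and hands off to a human when it runs out of attempts. The following is a minimal Python sketch. `generate_patch` and `run_tests` are hypothetical callables that stand in for a coding agent and your test runner.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class LoopResult:
    status: str  # "passed" or "escalated"
    patch: Optional[str]
    attempts: int
    failures: List[str] = field(default_factory=list)


def agentic_loop(
    generate_patch: Callable[[str, List[str]], str],
    run_tests: Callable[[str], List[str]],
    task: str,
    max_attempts: int = 3,
) -> LoopResult:
    """Generate, test, fix, retest; escalate to a human if tests never pass."""
    failures: List[str] = []
    for attempt in range(1, max_attempts + 1):
        # The agent sees which tests failed last time, not just a pasted stack trace.
        patch = generate_patch(task, failures)
        failures = run_tests(patch)  # An empty list means every test passed.
        if not failures:
            return LoopResult("passed", patch, attempt)
    # Bounded: after max_attempts the work goes to a human, not another retry.
    return LoopResult("escalated", None, max_attempts, failures)
```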

The market picked up on it fast. Gartner predicts that 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025. That growth curve does not happen with Vibe Coding. It happens with disciplined, auditable, agent-orchestrated builds — which is to say, Agentic Coding.

System Architecture: How the Two Approaches Structure a Codebase

This is the section where the differences stop being philosophical and start being concrete. The architecture of a vibe-coded project and the architecture of an agentically-engineered one look nothing alike, even if they end up solving the same problem.

Here is how the two approaches compare side by side. The subsections that follow go deeper into each one:

| Architectural Concern | Vibe Coding | Agentic Coding |
|---|---|---|
| Design artifact | Chat history | Versioned design doc/spec |
| Task decomposition | Implicit, one prompt at a time | Explicit plan, independently executable units |
| Execution model | Single conversational loop | Multi-agent, often parallel |
| Test strategy | Manual or absent | Automated tests are the control surface |
| Failure handling | Paste error, retry | Retries, rollbacks, circuit breakers |
| Observability | None by default | Structured logs, traces, and decision records |
| Context management | Model’s short-term memory | Explicit context files, retrieval, and memory stores |
| Human checkpoints | At the human’s whim | Defined in the workflow |
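The "circuit breakers" in the failure-handling row are a standard resilience pattern, not something specific to agents. Here is a minimal Python sketch, assuming the breaker wraps calls to an agent or tool. After `threshold` consecutive failures it stops calling for `cooldown_s` seconds, so a misbehaving agent gets escalated instead of retried in a tight loop. The class and parameter names are illustrative.

```python
import time
from typing import Any, Callable, Optional


class CircuitBreaker:
    """Stop calling a failing agent or tool after `threshold` consecutive failures."""

    def __init__(self, threshold: int = 3, cooldown_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.cooldown_s = cooldown_s
        self.clock = clock  # injectable so the behaviour can be tested
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown_s:
                # Open: fail fast and let the orchestrator escalate to a human.
                raise RuntimeError("circuit open: escalate instead of retrying")
            # Cooldown over ("half-open"): allow one trial call through.
            self.opened_at = None
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            raise
        self.failures = 0  # any success closes the circuit fully
        return result
```

Because the failure count is only reset on success, a failed trial call in the half-open state re-trips the breaker immediately.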

Vibe Coding Architecture Explained

A vibe-coded project usually starts with a chat window and no blueprint. The human prompts, the model produces a file or two, and the structure of the application accretes organically, one chat turn at a time. There is rarely a design document. Tests, if they exist, come after the fact. Dependencies are pulled in whenever the model decides it needs them.

In practice, this produces applications that share a few predictable traits:

- A flat or shallow directory structure, because the model has not been told to organize otherwise.

- Business logic is tangled with presentation logic because the separation of concerns was never specified.

- Inconsistent patterns across files, because each prompt starts with a fresh context.

- Secrets, API keys, and configuration are baked into source because the fastest path to a working demo is also the most insecure one.

That last point is not hypothetical. Our own security team has run audits on dozens of vibe-coded codebases brought in for rescue work, and the findings line up with what we published in our Vibe Coding security risks analysis.

AI assistants tend to work in large batches. They produce pull requests that are far larger in scope and touch dozens of interconnected services at once. A single hallucinated config file can propagate a live database credential across an entire microservice architecture before anyone notices.

For a throwaway script, this does not matter. For a healthcare app collecting patient intake data, it is a regulatory incident waiting to happen.
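One guardrail against that failure mode is a pre-merge check that scans the added lines of a diff for credential-shaped strings. The Python sketch below uses three illustrative patterns: an AWS access key ID, a URL with an embedded password, and a PEM private-key header. Dedicated secret scanners ship far larger rule sets, and `scan_diff` is a hypothetical name. On the code side, the corresponding fix is to load credentials from the environment or a secret manager rather than writing them into config files.

```python
import re
from typing import Dict, List, Tuple

# Illustrative rules only; dedicated secret scanners ship far larger rule sets.
SECRET_PATTERNS: Dict[str, re.Pattern] = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "url_with_password": re.compile(
        r"\b[a-z][a-z0-9+.-]*://[^:/\s]+:[^@/\s]+@", re.IGNORECASE
    ),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def scan_diff(diff: str) -> List[Tuple[int, str]]:
    """Return (line number in the diff, rule name) for added lines that look like secrets."""
    findings: List[Tuple[int, str]] = []
    for lineno, line in enumerate(diff.splitlines(), start=1):
        # Only inspect lines the change adds; skip "+++ b/file" headers.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings
```

A CI job would fail the pull request whenever `scan_diff` returns a non-empty list. This sends the change back to a human before the credential reaches any service.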

What Is an Agentic AI System Architecture?

Agentic Coding inverts the order of operations. Architecture comes first. The human — usually a senior engineer or architect — writes a design doc describing the system boundaries, the data flow, the security model, and the acceptance criteria for each component. Only then do the agents get work.

A typical multi-agent AI system architecture has four layers:

- The planning layer. This is where goals, constraints, and task decomposition live. Sometimes it is a human-written spec. Increasingly, it is a planning agent that breaks a high-level intent into smaller, independently executable tasks. Either way, planning is explicit and durable, not ephemeral chat history.

- The execution layer. One or more coding agents (Claude Code, Cursor, Codex, Windsurf) pick up scoped tasks from the plan and produce code, run tests, and report back. In multi-agent setups, different agents handle different modules in parallel — one refactoring a service while another writes tests for a second service, as long as the tasks are truly independent.

- The orchestration layer. This is the traffic controller. It decides which agent runs when, handles handoffs, manages context windows, retries failed tasks, and escalates to humans when confidence drops below a threshold. LLM orchestration frameworks like LangGraph, AutoGen, CrewAI, and other agentic AI frameworks and tools serve this role at the enterprise level.

- The oversight and governance layer. Logging, audit trails, role-based access, guardrails, and human-in-the-loop checkpoints. This is the layer enterprises cannot skip, and the layer Vibe Coding has no equivalent for.

That fourth layer, oversight, is why Agentic Coding has become the default for anything that carries a compliance burden. Healthcare, banking, insurance, and defense buyers will simply not accept a system they cannot audit.

For instance, when building insurance AI agents, it’s critical that compliance-ready architectures, decision logs, and human-in-the-loop controls are designed from day one, not bolted on after the fact.
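The planning layer's "independently executable tasks" can be made explicit as a dependency graph. Here is a minimal sketch using Python's standard-library `graphlib` (3.9+). Tasks whose prerequisites are all finished form a "wave," and tasks within a wave can go to separate agents in parallel. The task names are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set


def execution_waves(plan: Dict[str, Set[str]]) -> List[List[str]]:
    """Group tasks into waves; tasks in one wave do not depend on each other."""
    sorter = TopologicalSorter(plan)  # maps task -> set of prerequisite tasks
    sorter.prepare()  # raises graphlib.CycleError if the plan is circular
    waves: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every prerequisite is finished
        waves.append(ready)
        sorter.done(*ready)  # treat the whole wave as completed by its agents
    return waves


# Hypothetical plan: two services share a schema; tests depend on the services.
plan = {
    "design_schema": set(),
    "auth_service": {"design_schema"},
    "billing_service": {"design_schema"},
    "auth_tests": {"auth_service"},
    "billing_tests": {"billing_service"},
    "integration_tests": {"auth_service", "billing_service"},
}
for i, wave in enumerate(execution_waves(plan), start=1):
    print(f"wave {i}: {wave}")
```

This plan yields three waves: the schema first, then both services in parallel, then all three test tasks. A circular plan is rejected before any agent runs, which is one concrete benefit of planning being explicit rather than living in chat history.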

Orchestration: Vibe Coding vs. Agentic Coding

If architecture defines how a system is structured, orchestration defines how it actually runs. This is where Agentic Coding vs. Vibe Coding becomes operational, not philosophical.

| Aspect | Vibe Coding | Agentic Coding |
|---|---|---|
| Coordination Model | Single human–AI loop | Multi-agent coordination across tasks |
| Execution Flow | Sequential, prompt-by-prompt | Parallel and asynchronous workflows |
| Task Distribution | Managed manually by the developer | Routed across specialized agents automatically |
| State & Context Management | Limited to chat memory | Shared memory layers, structured context, and retrieval systems |
| Failure Handling | Retry by re-prompting | Built-in retries, fallbacks, escalation to humans |
| Human Role | Constant driver of every step | Supervisor, reviewer, and decision-maker |
| Scalability | Breaks across multiple files/services | Designed for multi-module, multi-agent systems |

In a typical Vibe Coding session, orchestration does not really exist. One human interacts with one model in a single conversational loop. The moment the task expands beyond a few files or requires coordination across systems, the workflow starts to break down. Context gets lost, dependencies are missed, and the system becomes fragile.

Agentic Coding introduces orchestration as a first-class layer. Instead of a single loop, you have a coordinated system where multiple agents handle scoped responsibilities. One agent may plan tasks, another writes code, another validates outputs, and another manages failures or escalations.

A well-orchestrated workflow typically includes:

- A planning layer that breaks down goals into executable tasks

- A routing mechanism that assigns tasks to the right agents

- A shared memory layer that maintains context across agents

- A supervision layer that monitors output quality and triggers human review when needed

This is the difference between using an AI tool and running an AI system.

In practice, this is also where most vibe-coded projects hit their ceiling. Without orchestration, scaling beyond simple use cases becomes unpredictable. With orchestration, teams can reliably build systems where multiple agents operate in parallel, with clear boundaries, shared context, and controlled handoffs.
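Once the agents themselves are abstracted away, the routing, shared memory, and supervision pieces of a well-orchestrated workflow fit in a few dozen lines. This Python sketch is hypothetical end to end:

- Agents are plain callables.
- `confidence` is an assumed self-reported score between 0 and 1.
- `review_queue` stands in for whatever ticketing or approval system a team actually uses.
- Planning is assumed to have happened upstream, so tasks arrive already scoped.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentOutput:
    text: str
    confidence: float  # assumed self-reported score in [0, 1]


# An agent takes an instruction plus the shared memory and returns its output.
Agent = Callable[[str, Dict[str, str]], AgentOutput]


@dataclass
class Orchestrator:
    routes: Dict[str, Agent]  # routing: task kind -> specialised agent
    review_threshold: float = 0.8  # supervision: below this, a human reviews
    memory: Dict[str, str] = field(default_factory=dict)  # shared context
    review_queue: List[str] = field(default_factory=list)

    def run(self, task_id: str, kind: str, instruction: str) -> AgentOutput:
        agent = self.routes.get(kind)
        if agent is None:
            raise KeyError(f"no agent registered for task kind {kind!r}")
        output = agent(instruction, self.memory)
        # Record the result so later agents can build on it.
        self.memory[task_id] = output.text
        if output.confidence < self.review_threshold:
            self.review_queue.append(task_id)  # flag for human review
        return output


# Usage with stub agents: the test writer reads the coder's result from memory.
orch = Orchestrator(routes={
    "code": lambda instr, mem: AgentOutput(f"impl of {instr}", 0.95),
    "test": lambda instr, mem: AgentOutput(f"tests for {mem['t1']}", 0.6),
})
orch.run("t1", "code", "login endpoint")
orch.run("t2", "test", "login endpoint")
```

This sketch still writes low-confidence output into shared memory. A stricter variant would hold it back until a human approves it, at the cost of blocking downstream agents.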

How Does the Development of Agentic Coding and Vibe Coding Differ?

Every engineering decision is a tradeoff, and the Vibe Coding vs. Agentic Coding conversation is no exception. Here is how the two approaches actually compare when you put them under enterprise-grade scrutiny.

To summarize:

| Tradeoff | Vibe Coding | Agentic Coding |
|---|---|---|
| Time to first prototype | Minutes to hours | Days |
| Time to production-ready system | Often never | Weeks to months, predictable |
| Upfront cost | Very low | Moderate |
| Total cost of ownership | High (rewrites, incidents) | Moderate and predictable |
| Quality ceiling | Low; plateaus fast | High; scales with discipline |
| Regulatory fit | Poor | Strong |
| Team size requirement | One person | Whole engineering orgs |
| Best use | Prototypes, throwaway tools | Production systems |

Speed

Vibe Coding wins on raw time-to-first-working-version. If your goal is to have a clickable prototype by the end of the day, nothing beats describing it to a chatbot and iterating. For throwaway artifacts and rapid prototyping, this is real leverage.

Agentic Coding is slower at the starting line because of the planning overhead, but it pulls ahead on anything that runs longer than a few days. The design doc that felt like friction on day one is the reason the system is still shippable on day thirty.

Cost

The cost conversation is trickier than it looks. Vibe-coded prototypes are dirt cheap to produce and expensive to maintain — sometimes so expensive they have to be rewritten from scratch. Agentic Coding carries a higher per-task cost (more planning, more review cycles, more tokens because agents loop) but compounds less technical debt.

This is something we cover in detail in our breakdown of AI agent development cost, where the numbers consistently show that the cheap-to-build path is rarely the cheap-to-own path once you account for rewrites, incidents, and compliance work.

Gartner’s own guidance on this is pointed. Their analysts warn that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs, unclear business value, or inadequate risk controls. The teams that avoid that fate are the ones treating Agentic Coding as a discipline, not a silver bullet.

Quality and Reliability

Here, the gap is widest. Agentic Coding treats automated tests, linting, CI/CD, and code review as the control surface that makes agent output trustworthy. Vibe Coding treats them as optional.

Simon Willison, one of the most influential voices in this space, put it in a way that resonates deeply with how we train our own engineers. He observed that many of the techniques associated with high-quality software engineering — automated tests, linting, clear documentation, CI and CD, cleanly factored code — turn out to help coding agents produce better results as well. Good engineering practice and good agent practice are the same thing. They just feel like friction until you see what happens without them.
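In practice, "tests as the control surface" often means a merge gate: an agent's change is accepted only if every configured check exits successfully. Here is a minimal Python sketch. The commands in the usage comment (`pytest -q`, `ruff check .`) assume those tools are installed, and the function names are hypothetical.

```python
import subprocess
from typing import Dict, Sequence


def run_checks(checks: Dict[str, Sequence[str]]) -> Dict[str, bool]:
    """Run each check command; a check passes only if it exits with status 0."""
    results: Dict[str, bool] = {}
    for name, cmd in checks.items():
        completed = subprocess.run(list(cmd), capture_output=True, text=True)
        results[name] = completed.returncode == 0
    return results


def is_mergeable(results: Dict[str, bool]) -> bool:
    # An empty check list is a misconfiguration, not a pass.
    return bool(results) and all(results.values())


# Example configuration (assumes pytest and ruff are installed):
# results = run_checks({"tests": ["pytest", "-q"], "lint": ["ruff", "check", "."]})
# if not is_mergeable(results): reject the agent's change and report the failures
```

Treating "no checks configured" as a failure is a deliberate choice. Otherwise, a pipeline that silently lost its test step would wave every agent change through.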

Risk and Compliance

For anything regulated, Vibe Coding is a nonstarter. You cannot explain a vibe to an auditor. You cannot produce a decision trail for something that was never recorded. HIPAA, SOC 2, PCI-DSS, GDPR, and the EU AI Act — all of them assume the presence of documentation, access controls, and reproducibility. Agentic Coding produces those by design. Vibe Coding produces them by accident, if at all.

Agentic Workflows vs. Prompt-Based Coding: The Day-to-Day Difference

The lived experience of a developer working in each paradigm is strikingly different, and if you are hiring or reorganizing a team around AI, this is the part that will actually shape your culture.

What a Prompt-Based (Vibe) Day Looks Like

You open your editor. You open the chat panel. You type what you want. You read the output, sometimes. You run it. It works, or it doesn’t. If it doesn’t, you describe the error and try again. Your day is a long conversational loop. Your deliverable is whatever emerges by 5 p.m.

Your primary skill is prompt craft. Your primary risk is that the code looks plausible enough to ship, and plausible is not the same as correct.

What an Agentic Coding Day Looks Like

You open your plan — a markdown file, a Linear ticket, a design doc, something durable. You review yesterday’s agent output and approve, reject, or request changes, treating each diff like a junior engineer’s PR.

You scope the next task for the agent, specifying inputs, outputs, test criteria, and constraints. You kick off the agent and move to something else: reviewing architecture, pairing with a teammate, checking another agent’s output on a different module. Your day is structured around review and direction, not typing. It is closer to running AI agent pipelines than to prompt chaining in a chat window, with each pipeline carrying its own state, tests, and rollback behavior.

Your primary skill is system design and judgment. Your primary risk is approving something you did not read carefully enough.
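A scoped task of the kind described here (inputs, outputs, test criteria, constraints) can live in the repository as a small, versioned structure rather than a chat message. The following is a hypothetical Python sketch. The field names, the brief format, and the example file paths are illustrative, not any tool's required schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentTask:
    """A scoped unit of work for a coding agent (illustrative schema)."""
    title: str
    inputs: List[str]
    outputs: List[str]
    test_criteria: List[str]
    constraints: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # No acceptance criteria, no task: tests are how the output gets judged.
        if not self.test_criteria:
            raise ValueError(f"task {self.title!r} has no test criteria")

    def to_brief(self) -> str:
        """Render the task as a markdown brief an agent can be pointed at."""
        lines = [f"# {self.title}"]
        for heading, items in [
            ("Inputs", self.inputs),
            ("Outputs", self.outputs),
            ("Done when", self.test_criteria),
            ("Constraints", self.constraints),
        ]:
            lines.append(f"## {heading}")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


# Hypothetical task; paths and numbers are placeholders.
task = AgentTask(
    title="Add rate limiting to the public API",
    inputs=["api/routes.py", "design doc section 4.2"],
    outputs=["middleware module", "updated route registrations"],
    test_criteria=["returns HTTP 429 after 100 requests per minute per key",
                   "existing API tests still pass"],
    constraints=["no new third-party dependencies"],
)
print(task.to_brief())
```

Refusing to construct a task without test criteria encodes the review-first workflow directly. The reviewer always knows what "done" was supposed to mean when the diff comes back.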

Agentic Engineers vs. Vibe Coders: Two Different Roles

These are, increasingly, two different jobs. One is a creative generalist whose superpower is rapid ideation with an AI partner. The other is a disciplined orchestrator whose superpower is turning a fuzzy business goal into a durable, testable, production-grade system built mostly by agents.

Your team probably needs both — but for different tiers of work.

Use Cases of Agentic Coding vs. Vibe Coding

After a decade of shipping software into regulated industries, our operating rule is simple: match the approach to the production threshold of the output. The practical lens here is agent-based systems vs. traditional AI development — the former earns its cost on long-lived systems, the latter still has a place in quick experiments.

If the code will live for a week and serve one person, vibe away. If it will live for years and serve thousands, engineer it properly.

For founders still in the discovery stage, it is also worth browsing through real-world AI agent business ideas before deciding which paradigm fits your roadmap — the right approach often becomes obvious once you know what you are actually building toward.

| Vibe Coding Use Cases | Agentic Coding Use Cases |
|---|---|
| Building a throwaway prototype to validate an idea in a day | Shipping systems that handle customer or patient data |
| Writing personal automation scripts with no external dependencies | Developing software in regulated industries (healthcare, finance, insurance, government) |
| Learning a new framework with AI acting as a tutor | Building codebases that will be maintained long-term (beyond a quarter) |
| Creating quick UI mockups for stakeholder validation | Enabling multiple engineers or agents to work in parallel |
| Experimenting where failure has near-zero consequences | Delivering systems requiring uptime, reproducibility, and auditability |
| Rapid internal tools meant for short-term or single-user use | Preventing risks like credential leaks or hallucinated configurations in production |

Use Them Together When

The honest answer for most enterprises is hybrid. Our engineering teams often use vibe-style prototyping to validate a feature concept in an afternoon, then rebuild the promising ones under a proper Agentic Coding workflow before anything ships. The prototype is disposable. The production version is disciplined. Treating them as the same activity is where teams get into trouble.

Security, Quality, and Governance: The Part Leadership Cares About

Every conversation we have with a CTO eventually arrives at the same three questions. How do we keep quality from drifting? How do we keep agents from doing something stupid at 3 a.m.? How do we prove to an auditor that we did?

The answers are the same answers good engineering teams have always had — they just need to be re-applied to a world where the typist is not always human. This is exactly where autonomous AI systems design patterns — guardrails, circuit breakers, human-in-the-loop checkpoints, decision logging — earn their place in the architecture.

| Area | Vibe Coding | Agentic Coding |
|---|---|---|
| Quality Control | Informal, depends on manual checks or quick iteration | Enforced through automated test suites that block faulty code |
| Testing | Optional or minimal | Mandatory tests act as the primary control mechanism |
| Code Review | Often skipped or lightweight | Strict, PR-level rigor for every agent-generated change |
| Guardrails | Rarely defined upfront | Designed in advance (RBAC, permissions, rate limits, sandboxing) |
| Error Prevention | Relies on developer intuition and quick fixes | Systematically prevents drift and unsafe actions |
| Auditability | Limited or nonexistent | Full audit logs tracking every action and decision |
| Security Oversight | Not a dedicated concern | Explicit ownership of agent risk and behavior |
| Compliance Readiness | Not suitable for regulated environments | Built for compliance (healthcare, finance, etc.) |
| Governance Approach | Reactive, if at all | Proactive, embedded into system architecture |
| Risk Management | High tolerance for failure | Designed to minimize risk and prevent critical failures |
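Guardrails like those in the table are usually enforced in code before an agent's tool call executes. The Python sketch below sorts shell commands an agent proposes into three verdicts: allow, needs-human, and deny. The allow and deny lists are illustrative placeholders for a policy your security team would own, and all names are hypothetical. The sketch assumes approved commands are executed as an argument list without a shell. It refuses anything containing shell metacharacters rather than trying to parse them.

```python
import shlex
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human"
    DENY = "deny"


# Illustrative policy only; a real one belongs to your security team.
ALLOWED = {"pytest", "ruff", "git"}
DENIED = {"rm", "curl", "ssh", "sudo"}
GIT_NEEDS_HUMAN = {"push", "reset", "rebase"}
SHELL_METACHARACTERS = set(";&|`$<>")


def check_command(command: str) -> Verdict:
    """Decide whether an agent may run a proposed shell command unattended."""
    # Refuse chaining, substitution, and redirection outright instead of parsing them.
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return Verdict.DENY
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return Verdict.DENY
    if not argv or argv[0] in DENIED:
        return Verdict.DENY
    if argv[0] not in ALLOWED:
        return Verdict.NEEDS_HUMAN  # unknown tools pause for a human checkpoint
    if argv[0] == "git" and len(argv) > 1 and argv[1] in GIT_NEEDS_HUMAN:
        return Verdict.NEEDS_HUMAN  # publishing or history-rewriting git operations
    return Verdict.ALLOW
```

Defaulting unknown tools to `NEEDS_HUMAN` rather than `ALLOW` is the design choice that matters. A new command an agent invents at 3 a.m. waits for a person instead of running.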

This is the piece Gartner keeps warning about. Their 2025 analysis noted that 75% of technology leaders listed governance as their primary concern when deploying agentic AI, and 35% identified cybersecurity as the primary adoption obstacle.

Those numbers do not go down by ignoring the problem. They go down when governance is built into the architecture from day one of an AI agent development engagement.

For Vibe Coding projects, that’s almost impossible — and it is also why choosing the right AI agent development company often matters more than choosing the framework itself, since governance maturity is rarely something you can bolt on after the fact.
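Auditability, as the governance table frames it, has two parts. You must be able to show an auditor every agent action and decision after the fact, and show that the record was not edited. One common technique for the second part is a hash-chained log, in which each entry includes the hash of the previous one. It is swapped in here for illustration and is not taken from any specific product. Here is a minimal Python sketch with hypothetical names. It is in-memory only; a real deployment would write to append-only storage.

```python
import hashlib
import json
import time
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only decision log; editing or deleting an entry breaks verification."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(body: Dict[str, Any]) -> str:
        # Canonical JSON (sorted keys) so the same record always hashes the same way.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, actor: str, action: str, decision: str) -> Dict[str, Any]:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"ts": time.time(), "actor": actor, "action": action,
                "decision": decision, "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            # Each entry must point at its predecessor and match its own hash.
            if body["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

The chain makes tampering detectable, not impossible. Someone who can rewrite the whole log can recompute every hash, which is why the head hash is typically also stored somewhere the agents cannot write.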

Skill Requirements for Vibe Coding vs. Agentic Coding

Technology shifts rarely stay confined to tools—they reshape how teams think, collaborate, and deliver. The shift from Vibe Coding to Agentic Coding is fundamentally a shift in skill expectations, not just workflows, because engineers now need to reason about autonomous AI workflow architecture rather than just individual prompts.

| Skill Area | Vibe Coding | Agentic Coding |
|---|---|---|
| Code Generation | Writing prompts and quickly iterating on generated code | Orchestrating multiple agents to generate, refine, and integrate code |
| Code Review | Basic self-review or quick validation | Deep review capability becomes critical; senior engineers spend significant time evaluating agent output |
| Testing Discipline | Optional or lightweight testing | Strong testing mindset required; tests define system reliability and prevent drift |
| System Architecture | Often evolves during development | Must be defined upfront to enable parallel agent workflows and clear task boundaries |
| Documentation Skills | Minimal or informal documentation | Writing precise specs, design docs, and constraints to guide agent behavior |
| Problem Solving | Trial-and-error with fast iterations | Structured thinking to break problems into agent-executable tasks |
| Collaboration | Primarily individual or small-team work | Hybrid collaboration with humans + agents, understanding agent strengths and limits |
| Junior Developer Skills | Learning by building and experimenting | Early focus on architecture, testing, and review instead of repetitive coding |
| Tooling & Workflow Understanding | Using AI tools as assistants | Managing agent ecosystems (CI-like workflows, pipelines, permissions) |
| Scalability Thinking | Not a priority | Essential: designing systems that multiple agents and engineers can extend safely |
| Adaptability | Flexible, informal workflows | Requires structured processes and evolving team roles |

How Can Appinventiv Help You Out?

We have spent the last decade building production software for healthcare networks, banks, insurers, logistics operators, and global enterprises — and the shift toward enterprise agentic AI development is the biggest change we have navigated with our clients in that time.

Our teams have delivered more than 3,000 projects across 35+ industries, shipped 100+ autonomous AI solutions, and built deep compliance expertise across HIPAA, HITECH, SOC 2, PCI-DSS, GDPR, ISO 42001, and the EU AI Act.

Our work has also earned a number of industry awards.

Here is where we typically come in:

- Strategy and use-case mapping. We help you identify which of your workflows are genuinely ripe for agentic automation and which should stay human-owned — before you spend money on the wrong pilot.

- Agentic AI system architecture. We design the planning, execution, orchestration, and governance layers that make agent-driven workflows safe, scalable, and auditable in regulated environments.

- LLM orchestration and multi-agent builds. We implement custom agent pipelines using frameworks like LangGraph and CrewAI, protocols like Model Context Protocol, and enterprise platforms, all integrated with your existing data, identity, and compliance stack.

- Guardrails, observability, and decision logging. We build the governance layer auditors expect, and the observability layer your SRE team needs.

- Human-in-the-loop workflow design. We keep humans in the decisions that matter and remove them from the ones that do not, so your teams scale without losing control.

- Migration from vibe-coded prototypes to production. If you have a working prototype that was built fast and loose, we help you rebuild it on an Agentic Coding foundation without losing the product velocity that made it work in the first place.

Whether you are running your first agentic pilot or scaling from ten agents to a thousand, we can help you get there without the 40% failure rate Gartner keeps warning about. If you would like to talk through your roadmap, our team is ready when you are.

FAQs

Q. What is Agentic Coding in AI?

A. Agentic Coding is a software development discipline where AI coding agents autonomously plan, write, test, and iterate on code, while a human engineer owns the architecture, quality bar, and final judgment. It sits between Vibe Coding (minimal human review) and traditional AI-assisted coding (deep human involvement at the line level).

Q. What is Vibe Coding?

A. Vibe Coding is a conversational, prompt-driven approach to building software where you describe what you want in natural language and the AI generates the code, often without detailed review. It works well for prototypes, learning, and throwaway scripts, but breaks down when code quality, security, or long-term maintenance matter.

Q. How is agentic AI different from prompt engineering?

A. Prompt engineering is the craft of getting a single model to produce a good output from a single prompt. Agentic AI is the broader discipline of designing systems where one or more models plan, act, use tools, and iterate across multiple steps — usually under human supervision and within guardrails. Prompt engineering is a subskill inside agentic AI.

Q. When should you use agentic AI systems?

A. Use agentic AI when the task involves multiple steps, needs to interact with external tools or data, has to produce auditable outputs, or will run without constant human attention. Examples: customer service triage, claims processing, discharge coordination, document extraction, and compliance monitoring.

Q. What are the limitations of Vibe Coding?

A. Vibe Coding tends to produce code that is hard to maintain, hard to audit, hard to secure, and hard to extend. It skips the practices — tests, reviews, specs, documentation — that make software survive past the first demo. For production work in regulated industries, it is essentially unusable on its own.

Q. Is agentic AI better for enterprise applications?

A. Yes, for almost every enterprise application. Enterprises need governance, auditability, compliance, reliability, and accountability — all of which Agentic Coding provides by design and Vibe Coding does not. The one exception is rapid prototyping, where vibe-style workflows are still valuable before the work transitions into a proper build.
