- What Retrieval-Augmented Generation Enables in Enterprise AI?

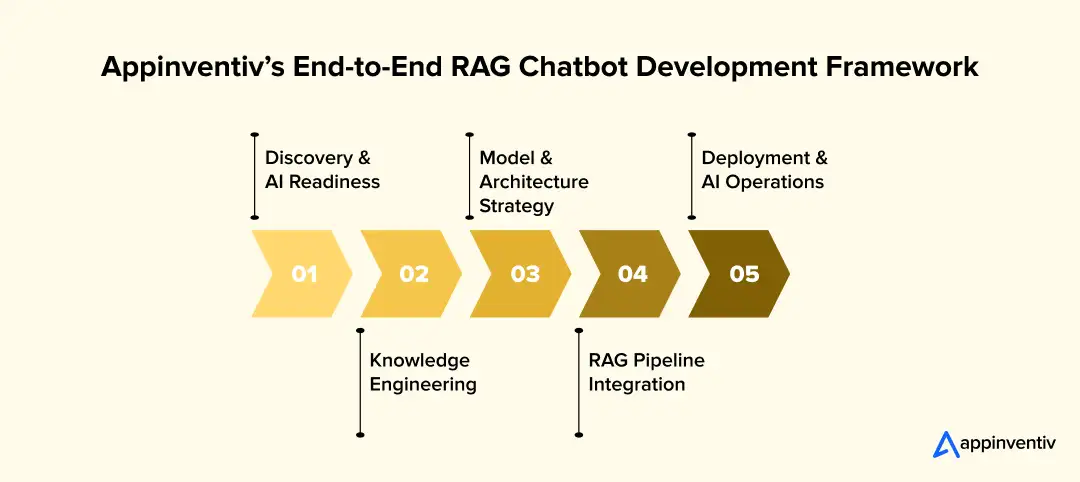

- Build an AI-Powered RAG Chatbot: Appinventiv's End-to-End Development Framework

- Technology Stack Appinventiv Typically Uses

- RAG Chatbot vs Traditional Chatbot in Enterprise Deployments

- Multimodal RAG Chatbots: The Emerging Enterprise Standard

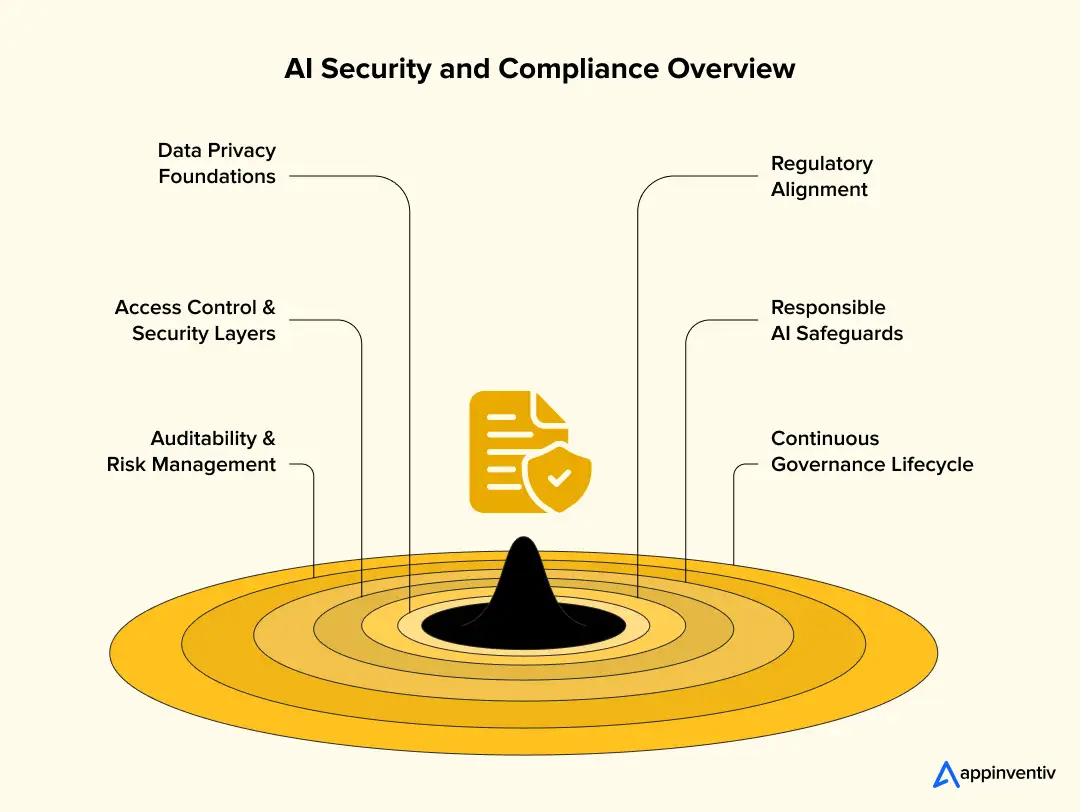

- AI Governance, Security and Compliance in Enterprise RAG Systems

- AI Evaluation and Trust Validation in Enterprise RAG Systems

- Appinventiv’s Key Tips for Performance Optimization

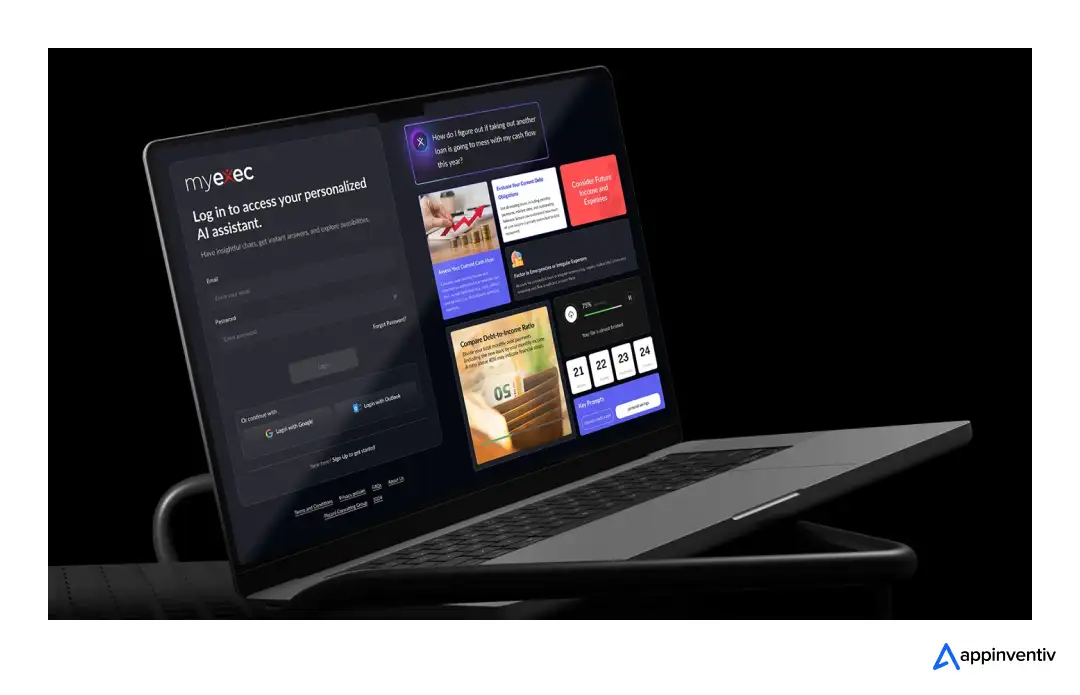

- RAG Chatbot Examples: Appinventiv's MyExec AI Implementation Deep Dive

- Cross-Industry Use Cases of RAG Chatbots

- Enterprise Investment Reality: Cost of Building a RAG Chatbot

- Enterprise RAG Implementation Readiness Checklist

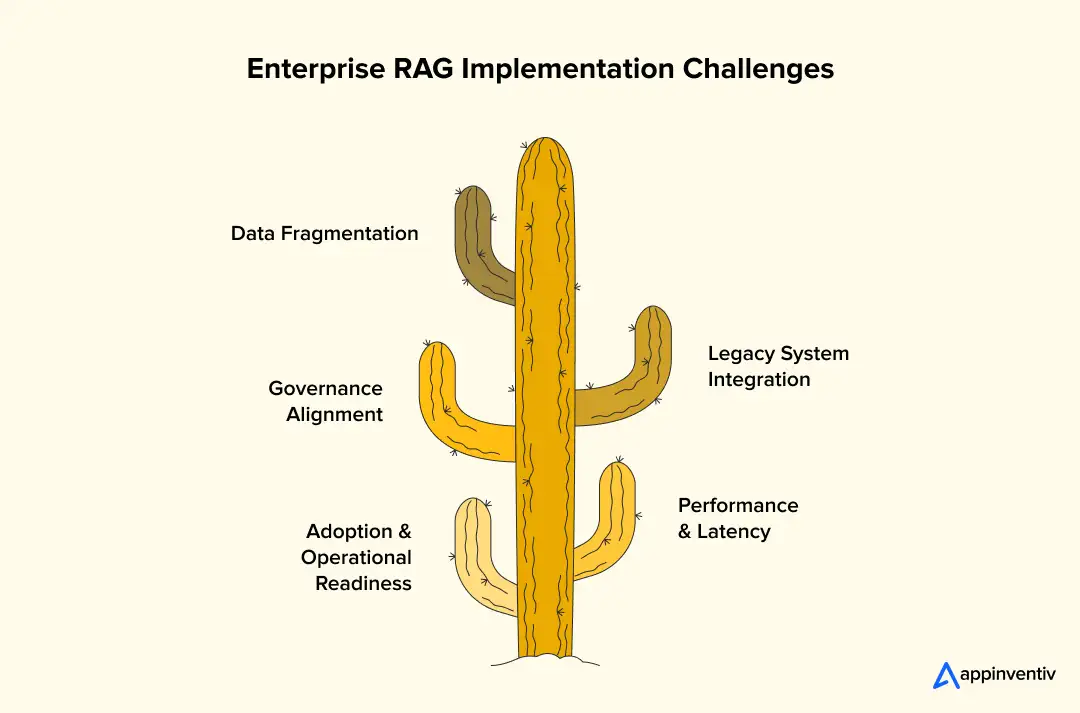

- Challenges in Building RAG Chatbots and How Appinventiv Addresses Them

- Future of RAG Chatbots: Evolution of Enterprise RAG Systems

- How Appinventiv Helps Build an AI Chatbot With RAG Integration?

- Frequently Asked Questions

Key takeaways:

- RAG chatbots improve enterprise AI accuracy by grounding responses in verified internal business knowledge.

- Governance, security, and explainability become critical as AI shifts from pilots to enterprise infrastructure.

- Investment typically ranges from $50K to $500K, depending on data integration, compliance, and deployment complexity.

- Strong data engineering and architecture discipline matter more than standalone model capability for enterprise success.

- Multimodal, agentic RAG systems are emerging as operational copilots across enterprise knowledge workflows.

Most enterprise teams start with a chatbot pilot that looks promising. Responses sound intelligent, leadership sees quick productivity gains, and adoption begins smoothly. Then real usage starts. Someone checks an internal policy before a client meeting, the chatbot answers confidently, but the source is unclear. That is usually when expectations change.

Generic LLM chatbots are powerful, but unlike an AI chatbot with RAG, they are not built for enterprise accountability by default. They generate responses from broad training data, not necessarily from your verified internal knowledge. Once AI starts supporting customer interactions, compliance workflows, or internal decisions, accuracy and traceability become non-negotiable.

Hallucination risk is only part of the concern. Governance quickly follows. Your teams need clarity on where answers come from, how sensitive data is protected, and whether responses align with approved documentation. AI stops being an experiment and starts becoming infrastructure.

This is where Retrieval-Augmented Generation, or RAG, becomes relevant. By grounding responses in enterprise data, a RAG-powered chatbot improves reliability and explainability. At Appinventiv, this shift often marks the point where organizations move from AI exploration to building a RAG chatbot ready for real operational impact.


What Retrieval-Augmented Generation Enables in Enterprise AI?

Most teams notice the limits of a basic chatbot once answers start needing verification. Someone checks a pricing clause or compliance policy, the response sounds right, yet your team still opens the original document to confirm.

That gap is where Retrieval-Augmented Generation becomes useful. It also reflects a broader trend: Gen AI has accelerated product time-to-market by about 5 percent and improved product management productivity by roughly 40 percent, making accuracy and trusted data access even more critical.

Here are the benefits of RAG chatbots inside enterprise environments:

Secure proprietary knowledge integration

- Internal documents, databases, and knowledge bases can be indexed safely

- Access controls stay aligned with existing enterprise security policies

- Sensitive data does not need full model retraining

Contextual and explainable AI responses

- Retrieval happens before generation, improving factual grounding

- Answers can reference source documents when needed

- Easier auditability during compliance or internal reviews

Enterprise decision intelligence support

- Faster access to distributed enterprise knowledge

- Consolidated insights without switching multiple systems

- More consistent decision support across teams

Cross-industry applicability

- Healthcare clinical documentation access

- Banking risk and compliance insights

- Retail operational knowledge retrieval

- Aviation, SaaS, and enterprise support automation

Operational productivity impact

- Reduced manual searching across repositories

- Faster internal response cycles

- More consistent information flow across departments

A RAG-based chatbot does not just improve responses. It reshapes how enterprise knowledge becomes usable at scale.


With the benefits of RAG chatbots in mind, let’s move on to see how to develop a RAG chatbot.

Build an AI-Powered RAG Chatbot: Appinventiv’s End-to-End Development Framework

When enterprises approach us to build a RAG chatbot, it is rarely about experimenting with AI. It usually follows a trigger. A pilot lost credibility. Internal teams started double-checking answers. Or leadership realized governance gaps too late.

That shift is happening alongside broader adoption trends, with around 82% of enterprise leaders now using Gen AI weekly, making reliability, governance, and trusted knowledge access far more critical than early AI experimentation.

Enterprise Discovery and AI Readiness Alignment

This stage is less about AI models and more about business clarity. Getting this wrong slows everything later.

Here is what we typically address first when evaluating enterprise AI readiness:

- Use case precision before technology

With MyExec AI, the initial request was an intelligent advisor. Discovery revealed multiple needs: strategic insights, document summarization, and operational Q&A. We separated these workflows early to avoid forcing one model into conflicting roles.

- Data maturity reality check

Documents often arrive fragmented, inconsistently tagged, or duplicated. We built preprocessing pipelines to standardize the structure before indexing. That alone improved retrieval quality significantly.

- Compliance mapping upfront

Especially for enterprise advisory assistants, auditability and access control had to be embedded early. Waiting until deployment would have meant redesign.

- Stakeholder alignment

IT, compliance, and business teams rarely start aligned. Structured workshops prevented delays later.

- ROI framing grounded in operations

Instead of speculative AI gains, we focused on measurable efficiency improvements such as faster knowledge access and reduced manual search time.

This discovery layer often determines whether AI adoption accelerates or quietly stalls.

Knowledge Engineering and Data Foundation

Strong RAG systems are built on disciplined data preparation. Models alone do not solve knowledge problems.

During MyExec AI development, data complexity surfaced quickly. Business documents varied in structure, depth, and sensitivity. Addressing that required focused engineering.

Key elements included:

- Controlled ingestion pipelines

LangChain orchestration ensured documents entered the system with consistent preprocessing while respecting enterprise access policies.

- Semantic chunking, not arbitrary splitting

Instead of fixed-size chunks, we segmented content based on meaning, section boundaries, and contextual continuity. Retrieval accuracy improved noticeably.

- Metadata enrichment for contextual filtering

Tags such as department, document type, and update frequency helped refine search relevance.

- Vector storage tuned for enterprise scale

MongoDB supported persistent memory while vector indexing balanced retrieval speed with governance needs.

- Embedding lifecycle governance

Refresh cycles ensured new documents flowed into the system without constantly retraining the model.

This foundation work often goes unnoticed, yet it directly shapes answer reliability.
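The chunking and metadata steps above can be sketched roughly as follows. This is a minimal illustration, assuming documents arrive as plain text with blank lines between paragraphs; the `Chunk` class, the `chunk_by_section` helper, the `max_chars` limit, and the metadata keys are hypothetical names chosen for the example, not the MyExec AI implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_by_section(document: str, metadata: dict, max_chars: int = 500) -> list[Chunk]:
    """Split on blank-line paragraph boundaries, then pack whole paragraphs
    into chunks so that no chunk cuts a paragraph in half."""
    paragraphs = [p.strip() for p in document.split("\n\n") if p.strip()]
    chunks: list[Chunk] = []
    current: list[str] = []
    for paragraph in paragraphs:
        candidate = "\n\n".join(current + [paragraph])
        # Start a new chunk when adding this paragraph would exceed the limit.
        if current and len(candidate) > max_chars:
            chunks.append(Chunk("\n\n".join(current), dict(metadata)))
            current = [paragraph]
        else:
            current.append(paragraph)
    if current:
        chunks.append(Chunk("\n\n".join(current), dict(metadata)))
    # Record each chunk's position so retrieved text can be traced back.
    for index, chunk in enumerate(chunks):
        chunk.metadata["chunk_index"] = index
    return chunks


# Illustrative document and tags (assumed values).
policy = (
    "Refund policy\n\nRefunds are issued within 14 days.\n\n"
    "Travel policy\n\nFlights require manager approval."
)
chunks = chunk_by_section(
    policy, {"department": "finance", "doc_type": "policy"}, max_chars=60
)
for chunk in chunks:
    print(chunk.metadata, repr(chunk.text))
```

A single paragraph longer than `max_chars` still becomes its own chunk in this sketch; a production pipeline would likely split such paragraphs further, for example at sentence boundaries.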


Model Architecture and LLM Strategy

Enterprises often assume model choice drives success. Experience shows that architectural discipline matters more.

For MyExec AI, GPT-4o served as the LLM behind the RAG chatbot and provided strong reasoning capability. Still, raw model power was not enough. Structured orchestration ensured the model behaved consistently under real usage.

Critical decisions included:

- Prompt orchestration frameworks

Structured prompts injected retrieved context cleanly, reducing irrelevant outputs.

- Selective fine-tuning strategy

RAG and fine-tuning are not the same. Retrieval handled evolving enterprise data. Fine-tuning was reserved for stable, domain-specific workflows.

- Multi-model routing for efficiency

Lightweight models handled classification tasks while heavier models focused on reasoning queries.

- Context optimization practices

Filtering retrieval results before generation reduced token consumption and improved clarity.

These RAG-based chatbot architectural choices supported stability, cost control, and performance simultaneously.
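Two of these decisions, multi-model routing and context filtering before generation, can be sketched in a few lines. The tier names, the keyword heuristic, the relevance threshold, and the character budget below are all assumptions made for illustration; a real router would more likely use a small classifier model and a token-based budget.

```python
def route_model(query: str) -> str:
    """Pick a model tier from simple surface features of the query.
    The tier names and marker list are placeholders, not real model IDs."""
    reasoning_markers = ("why", "compare", "recommend", "analyze", "trade-off")
    needs_reasoning = len(query.split()) > 20 or any(
        marker in query.lower() for marker in reasoning_markers
    )
    return "large-reasoning-model" if needs_reasoning else "small-fast-model"


def build_prompt(query: str, passages: list[tuple[float, str]],
                 min_score: float = 0.5, max_context_chars: int = 400) -> str:
    """Keep passages above a relevance threshold, best first, and skip any
    passage that would push the context past the character budget."""
    kept: list[str] = []
    used = 0
    for score, text in sorted(passages, key=lambda p: p[0], reverse=True):
        if score < min_score or used + len(text) > max_context_chars:
            continue
        kept.append(text)
        used += len(text)
    context = "\n".join(f"[{i + 1}] {text}" for i, text in enumerate(kept))
    return (
        "Answer using only the numbered context. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


# Illustrative (score, passage) pairs as a retriever might return them.
passages = [
    (0.91, "Refunds are issued within 14 days."),
    (0.32, "Office hours are 9 to 5."),
    (0.77, "Refunds require a receipt."),
]
prompt = build_prompt("What is the refund window?", passages)
simple_tier = route_model("What is the refund window?")
complex_tier = route_model("Compare churn across regions and recommend next steps")
print(simple_tier, complex_tier)
print(prompt)
```

Numbering the passages in the prompt gives the model a way to cite which passage supports an answer, which supports the traceability goals described elsewhere in this article.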


RAG Pipeline Engineering and Integration

This phase transforms architecture into a usable enterprise system. Integration depth defines perceived value.

For MyExec AI, several engineering hurdles emerged early. Query ambiguity, mixed document formats, and system connectivity required layered solutions.

Core implementation strategies included:

- Query rewriting using agent reasoning

ReAct-style logic reformulated unclear queries before retrieval.

- Hybrid search implementation

Combining vector similarity with keyword search improved precision for domain-specific terminology.

- Re-ranking pipelines for accuracy

Secondary ranking layers re-scored and filtered retrieved content before generation.

- Multi-agent orchestration

Separate agents handled retrieval, summarization, and analysis tasks.

- Enterprise system connectivity

Secure APIs linked CRM data, internal dashboards, and document repositories without compromising governance.

This integration layer is often where AI shifts from experimental interface to operational asset.
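To make the hybrid search idea concrete, here is a minimal sketch that fuses a vector ranking and a keyword ranking with reciprocal rank fusion (RRF). RRF is one common fusion technique and is used here as an assumption; the article does not say which method MyExec AI uses. The two-dimensional vectors are toy stand-ins for real embeddings, and all function names are hypothetical.

```python
import math
from collections import Counter


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def keyword_score(query: str, text: str) -> int:
    """Count occurrences of the query's distinct terms in the text."""
    terms = Counter(text.lower().split())
    return sum(terms[t] for t in set(query.lower().split()))


def hybrid_search(query: str, query_vec: list[float], docs: list[dict], k: int = 60) -> list[str]:
    """Fuse a vector ranking and a keyword ranking with reciprocal rank fusion."""
    by_vector = sorted(docs, key=lambda d: cosine(query_vec, d["vec"]), reverse=True)
    by_keyword = sorted(docs, key=lambda d: keyword_score(query, d["text"]), reverse=True)
    fused: dict[str, float] = {}
    for ranking in (by_vector, by_keyword):
        for rank, doc in enumerate(ranking, start=1):
            # Each ranking contributes 1 / (k + rank) to a document's score.
            fused[doc["id"]] = fused.get(doc["id"], 0.0) + 1.0 / (k + rank)
    return sorted(fused, key=fused.get, reverse=True)


# Toy corpus: vector similarity alone would rank "b" first,
# but exact-term matches lift "c" to the top after fusion.
docs = [
    {"id": "a", "text": "PCI DSS audit checklist", "vec": [0.2, 0.8]},
    {"id": "b", "text": "quarterly sales summary", "vec": [0.9, 0.1]},
    {"id": "c", "text": "PCI DSS encryption requirements", "vec": [0.7, 0.3]},
]
ranked = hybrid_search("PCI DSS encryption", [1.0, 0.0], docs)
print(ranked)
```

A re-ranking layer would then take the top few fused results and re-score them with a heavier model before they reach the prompt.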

Deployment, Scaling and AI Operations

A production-ready RAG chatbot introduces pressures that pilots never reveal. Stability, cost, and observability become primary concerns. During MyExec AI deployment, operational readiness guided most decisions.

Key operational practices in implementing RAG chatbots included:

- Containerized deployment architecture

Docker containers running on AWS ECS provided predictable scaling and environment consistency.

- Observability beyond uptime metrics

Retrieval accuracy, latency patterns, and response consistency were continuously monitored.

- Continuous indexing pipelines

Knowledge updates entered the system regularly without retraining disruptions.

- Token cost governance

Prompt optimization and usage monitoring prevented unnecessary infrastructure expenses.

- Scalability safeguards

Load balancing, caching layers, and fallback strategies ensured stability during peak use.

These AI operational layers often determine whether enterprise AI earns long-term trust.
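As one illustration of the caching layer mentioned above, the sketch below stores answers for repeated queries with a time-to-live, so cached responses expire after knowledge is refreshed. The `TTLCache` class, its normalization rule, and the injectable clock are assumptions for the example; production systems typically use a shared cache service instead of an in-process dictionary.

```python
import time


class TTLCache:
    """Cache answers for repeated queries so they skip retrieval and generation."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(query: str) -> str:
        # Normalize case and whitespace so trivially different queries share an entry.
        return " ".join(query.lower().split())

    def get(self, query: str):
        key = self._key(query)
        entry = self._store.get(key)
        if entry is None:
            return None
        stored_at, answer = entry
        if self.clock() - stored_at > self.ttl:
            # Expired: drop it so stale knowledge is not served.
            del self._store[key]
            return None
        return answer

    def put(self, query: str, answer: str) -> None:
        self._store[self._key(query)] = (self.clock(), answer)


# Usage with a fake clock to show expiry.
now = [0.0]
cache = TTLCache(ttl_seconds=60, clock=lambda: now[0])
cache.put("What is the  refund window?", "14 days")
fresh = cache.get("what is the refund window?")
now[0] = 61.0
expired = cache.get("what is the refund window?")
print(fresh, expired)
```

In a permission-aware deployment the cache key would also need to include the caller's roles, so that one user's cached answer is never served to someone with narrower access.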

Across this RAG chatbot deployment framework, one principle consistently holds true. Enterprise RAG chatbot success rarely comes from model capability alone. It comes from disciplined data engineering, thoughtful architecture, governance awareness, and operational readiness working together.

That integrated perspective is what allows AI systems to move from promising pilots to dependable enterprise infrastructure.

Technology Stack Appinventiv Typically Uses

When enterprises ask about the tech stack, the real concern is rarely the tool itself. It is whether the stack will stay stable once real data, real users, and real compliance requirements enter the picture. Our approach focuses on how each layer behaves in production, not just what is trending.

Here is the RAG chatbot architecture for enterprises we typically structure in actual deployments.

Core Components

This layer supports retrieval accuracy, response stability, and operational scale.

- LLM RAG chatbot platforms, used with layered task routing

Instead of pushing every query to a large reasoning model, we usually route tasks. Lightweight models handle classification, intent detection, and summarization. More capable models step in only for complex reasoning. This keeps latency predictable and avoids unnecessary token costs.

- Vector databases, tuned for enterprise retrieval behavior

Vector storage is configured around retrieval precision rather than raw speed alone. We combine similarity search with contextual filtering so results reflect business relevance, not just mathematical closeness. Persistent memory layers help maintain conversational continuity without repeated indexing.

- Retrieval orchestration frameworks, structured as controlled pipelines

Tools like LangChain or LlamaIndex are not used as plug-and-play connectors. We design ingestion pipelines, chunking logic, and metadata flows carefully so retrieval reflects document structure, permissions, and update frequency.

- Cloud infrastructure, optimized for controlled scaling

Containerized deployments on cloud platforms allow predictable scaling. Instead of reactive scaling, we plan capacity based on usage modeling so response latency stays stable even under peak loads.

- API orchestration layers, built for secure enterprise integration

These APIs connect internal dashboards, CRM systems, document repositories, and analytics platforms. Security policies are enforced at the API layer, so sensitive knowledge remains compartmentalized.

- Monitoring and observability, focused on AI-specific signals

We track retrieval relevance, latency drift, hallucination indicators, and query patterns. This helps detect issues before they affect users.

Additional Layers

These often determine whether an AI deployment survives beyond the pilot stage.

- Security frameworks embedded in ingestion and retrieval

Role-based access controls, encrypted document pipelines, and audit trails ensure enterprise data governance remains intact.

- Data governance tooling tied to the document lifecycle

Access permissions, update timestamps, and document ownership are tracked so that outdated or restricted data does not influence responses.

- DevOps and MLOps integration for continuous stability

Automated indexing refresh, deployment pipelines, and performance monitoring keep the system aligned with evolving enterprise knowledge without requiring disruptive rebuilds.

Data Preparation, Embeddings and Vector Database Integration

This layer often determines how accurate and reliable a RAG chatbot application becomes. Strong retrieval starts with disciplined data preparation and a well-structured vector storage strategy.

Key considerations typically include:

- Loading enterprise data through structured ingestion pipelines (APIs, file endpoints, document loaders)

- Semantic text splitting and metadata tagging before embedding generation

- Using embedding models aligned with the domain context

- Storing vectors in scalable databases like Pinecone, Neo4j AuraDB, or ChromaDB

- Designing indexing and query pipelines for fast, context-aware retrieval
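One way to keep an index current without re-embedding everything is to compare content hashes between runs. The sketch below is an assumption about how such an indexing pipeline could decide what to refresh; `plan_reindex` is a hypothetical helper, and the actual embedding and vector database calls are left out.

```python
import hashlib


def plan_reindex(documents: dict[str, str], indexed_hashes: dict[str, str]):
    """Compare content hashes to decide which documents need (re-)embedding
    and which indexed entries should be deleted."""
    current = {
        doc_id: hashlib.sha256(text.encode("utf-8")).hexdigest()
        for doc_id, text in documents.items()
    }
    # New or changed documents: hash missing from the index or different.
    to_embed = sorted(d for d, h in current.items() if indexed_hashes.get(d) != h)
    # Documents that no longer exist at the source.
    to_delete = sorted(d for d in indexed_hashes if d not in current)
    return to_embed, to_delete, current


# First run: nothing is indexed yet, so every document is embedded.
docs_v1 = {"policy": "Refunds within 14 days.", "faq": "Office opens at 9."}
first_embed, first_delete, hashes = plan_reindex(docs_v1, {})

# Second run: "policy" changed, "faq" was removed, "handbook" is new.
docs_v2 = {"policy": "Refunds within 30 days.", "handbook": "Welcome."}
second_embed, second_delete, hashes = plan_reindex(docs_v2, hashes)
print(second_embed, second_delete)
```

Only the documents in `to_embed` incur embedding cost on each refresh cycle, which matters once the corpus grows to many thousands of files.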

RAG Chatbot vs Traditional Chatbot in Enterprise Deployments

This comparison usually comes up when a pilot starts moving toward production. Early demos work fine. Then integration discussions begin, compliance teams get involved, and suddenly the limitations of a traditional chatbot become clearer. The difference is not just technical. It directly affects reliability, governance, and long-term usability.

Here is how the two approaches typically compare in enterprise environments:

| Aspect | Traditional Chatbot | RAG Chatbot |
|---|---|---|
| Knowledge Source | Mostly static training data, periodic retraining needed | Retrieves current enterprise data dynamically |
| Reliability | Responses may drift from the latest internal knowledge | Answers stay grounded in verified sources |
| Hallucination Risk | Higher, especially with domain-specific queries | Reduced through retrieval before generation |
| Explainability | Limited traceability to source data | Responses can link back to documents or systems |
| Governance Readiness | Harder to audit and control data flow | Easier alignment with compliance workflows |
| System Integration | Often, a standalone conversational layer | Designed to connect with enterprise data ecosystems |
| Scalability Over Time | Retraining becomes resource-intensive | Knowledge updates are handled through indexing |
| Operational Sustainability | Maintenance increases as knowledge grows | More adaptable to evolving enterprise data |

For most enterprises, choosing between a RAG chatbot and a traditional chatbot is less about chasing new AI trends and more about operational stability. Once chatbots start influencing customer interactions, internal decisions, or compliance workflows, grounded and explainable AI becomes a practical requirement rather than an optional upgrade.

Multimodal RAG Chatbots: The Emerging Enterprise Standard

Most enterprise AI conversations eventually move beyond text. A support team wants the chatbot to read a product manual PDF. A healthcare team needs image reports interpreted alongside notes. Someone from operations asks if voice transcripts can feed the same system. That shift toward multiple data types is already happening across industries.

Modern enterprise assistants increasingly work with mixed inputs:

Beyond Text-Based Knowledge

This is usually the first practical step organizations take when expanding AI capabilities.

- Documents like contracts, manuals, and reports remain central

- Images such as diagnostic scans, product photos, or diagrams add context

- Voice transcripts help capture meetings, calls, or field updates

- Structured data from dashboards and databases rounds out the picture

When these sources connect properly, responses feel more complete. Teams spend less time switching tools or manually cross-checking information.

Enterprise Applications are Already Emerging

You can already see this approach showing up across multiple enterprise environments.

- Healthcare teams combining clinical notes with imaging summaries

- Financial services teams reviewing reports alongside transaction data

- Retail operations linking customer feedback, visuals, and inventory data

- Aviation and logistics teams pulling insights from maintenance records and operational dashboards

Infrastructure Considerations You Cannot Ignore

This is where most enterprise deployments require careful planning.

- Storage and indexing requirements increase rapidly

- Retrieval pipelines need careful tuning for different data formats

- Security policies must extend across all data types, not just text

Looking ahead, multimodal RAG chatbots will likely behave less like chat interfaces and more like operational copilots. Systems that understand multiple forms of enterprise knowledge tend to integrate more naturally into daily workflows, making adoption smoother and outcomes more reliable.


AI Governance, Security and Compliance in Enterprise RAG Systems

Enterprise RAG chatbot deployments move quickly from experimentation to scrutiny. Security teams, legal stakeholders, and compliance officers usually step in once internal data enters the system. From our experience delivering AI solutions across regulated industries, governance works best when built into the architecture early rather than layered on later.

Regulatory Alignment and Data Residency

Global enterprises rarely operate under a single regulatory framework, so alignment must be proactive.

- Architectures mapped to GDPR, HIPAA, PCI DSS, SOC 2, and similar standards

- Regional data residency controls for cross-border deployments

- Vendor risk assessments when external AI models are involved

Enterprise Data Privacy Architecture

Privacy controls influence how data is ingested, indexed, and retrieved.

- Encryption applied during ingestion, storage, and retrieval flows

- Segmented knowledge indexing to isolate sensitive datasets

- Retrieval filtering aligned with enterprise data classification policies

Role-Based Access and Zero-Trust Retrieval

Access control must extend beyond application layers into retrieval logic.

- Role-mapped knowledge access enforced at the query level

- Identity-aware API gateways controlling system interaction

- Continuous logging of AI interactions for traceability
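Enforcing access at the query level means unauthorized content is removed before ranking or generation ever sees it. The sketch below illustrates that ordering under simple assumptions: each indexed chunk carries an `allowed_roles` set, a naive term match stands in for the real retriever, and the `IndexedChunk`, `authorized_chunks`, and `retrieve` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedChunk:
    chunk_id: str
    text: str
    allowed_roles: frozenset


def authorized_chunks(chunks: list[IndexedChunk], user_roles: set[str]) -> list[IndexedChunk]:
    """Drop every chunk the caller may not read before any ranking or
    generation step sees it (deny by default when roles do not overlap)."""
    return [c for c in chunks if c.allowed_roles & user_roles]


def retrieve(query: str, chunks: list[IndexedChunk], user_roles: set[str],
             audit_log: list[dict]) -> list[str]:
    visible = authorized_chunks(chunks, user_roles)
    # Naive term match stands in for the real retriever.
    terms = set(query.lower().split())
    hits = [c.chunk_id for c in visible if terms & set(c.text.lower().split())]
    # Log who asked what and which chunks were returned, for traceability.
    audit_log.append({"query": query, "roles": sorted(user_roles), "returned": hits})
    return hits


index = [
    IndexedChunk("hr-1", "salary bands by grade", frozenset({"hr"})),
    IndexedChunk("pub-1", "salary review calendar", frozenset({"hr", "employee"})),
]
log: list[dict] = []
employee_hits = retrieve("salary bands", index, {"employee"}, log)
hr_hits = retrieve("salary bands", index, {"hr"}, log)
print(employee_hits, hr_hits)
```

Filtering before retrieval, not after generation, means restricted text never enters the prompt, so it cannot leak through a paraphrased answer.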

Responsible AI Governance

AI behavior governance is as critical as infrastructure security.

- Explainability layers supporting source traceability

- Guardrails reduce hallucination and unsupported outputs

- Prompt validation workflows during rollout phases

Auditability and Risk Mitigation

Enterprises expect AI systems to withstand audits and operational reviews.

- Documented data lineage for all indexed content

- Continuous vulnerability scanning and dependency monitoring

- Incident response readiness built into operational workflows

Continuous Governance Lifecycle

Compliance does not end at deployment. It evolves with usage.

- Ongoing regulatory monitoring and policy updates

- Periodic security and performance reviews

- Automated enforcement of governance standards across pipelines

This structured governance approach helps ensure enterprise RAG systems remain secure, compliant, and operationally reliable as they scale.


AI Evaluation and Trust Validation in Enterprise RAG Systems

Once a RAG chatbot goes live, the real question shifts quickly. Not “Does it work?” but “Can we trust it consistently?” Enterprises usually need measurable validation before relying on AI for internal decisions, customer interactions, or compliance workflows. That is where structured evaluation becomes critical.

Here is how we typically approach trust validation in enterprise deployments.

Response Accuracy Validation

This is usually the first trust checkpoint.

- Periodic response sampling against verified enterprise knowledge

- Traceability checks to ensure answers map back to source documents

- Continuous prompt refinement based on validation feedback

Hallucination Risk Monitoring

Reducing hallucinations is not a one-time fix.

- Confidence scoring for retrieved context

- Review loops for edge-case queries

- Guardrail adjustments based on usage patterns

Enterprise Feedback Loops

Adoption improves when users see continuous improvement.

- Structured user feedback capture

- Internal expert validation during early rollout

- Iterative knowledge indexing updates

Operational Trust Signals

Enterprises rely on predictable behavior over time.

- Consistency tracking across repeated queries

- Performance benchmarking against baseline expectations

- Governance alignment checks during system updates

These RAG chatbot validation techniques help ensure enterprise RAG chatbots remain reliable, explainable, and trusted as operational usage grows.
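Two of the checks above, traceability and consistency, reduce to simple ratios over logged interactions. The sketch below assumes each logged answer records which source IDs it cited and which were retrieved, and that repeated runs of the same query are collected per query; the record layout and function names are illustrative assumptions.

```python
def traceability_rate(answers: list[dict]) -> float:
    """Share of answers that cite at least one source and whose every
    cited source was actually among the retrieved documents."""
    if not answers:
        return 0.0
    grounded = sum(
        1 for a in answers
        if a["cited"] and set(a["cited"]) <= set(a["retrieved"])
    )
    return grounded / len(answers)


def consistency_rate(runs: dict[str, list[str]]) -> float:
    """Share of queries that returned the same normalized answer on every run."""
    if not runs:
        return 0.0
    stable = sum(
        1 for outputs in runs.values()
        if len({o.strip().lower() for o in outputs}) == 1
    )
    return stable / len(runs)


# Illustrative sample of logged answers (assumed record format).
sample = [
    {"cited": ["doc-1"], "retrieved": ["doc-1", "doc-2"]},  # grounded
    {"cited": ["doc-9"], "retrieved": ["doc-3"]},           # cites an unseen source
    {"cited": [], "retrieved": ["doc-4"]},                  # no citation at all
    {"cited": ["doc-5"], "retrieved": ["doc-5"]},           # grounded
]
repeated = {
    "refund window?": ["14 days", "14 days ", "14 Days"],
    "travel approval?": ["Manager", "Director"],
}
trace = traceability_rate(sample)
consistency = consistency_rate(repeated)
print(trace, consistency)
```

Exact-match consistency is a strict proxy; free-form answers usually need a semantic comparison, but tracking these two ratios over time gives a baseline to benchmark against.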

Appinventiv’s Key Tips for Performance Optimization

Performance usually becomes a focus after the first real deployment. The chatbot works, but response time, cost, or consistency starts getting attention. From our experience across enterprise AI platforms, small architectural decisions early often prevent bigger operational issues later.

Here is how we typically optimize performance in enterprise RAG deployments.

| Optimization Area | How Appinventiv Handles It | Business Impact |
|---|---|---|
| Retrieval Latency | Hybrid search, caching, filtered retrieval | Faster responses |
| Context Control | Structured prompts, filtered context | Better accuracy, lower tokens |
| Embeddings | Semantic chunking, periodic refresh | Consistent knowledge quality |
| Infrastructure Scaling | Containerized deployment, load planning | Stable performance at scale |
| Token Costs | Model routing, prompt tuning | Controlled AI spend |
| Monitoring | Continuous performance tracking | Early issue detection |

These steps help keep enterprise AI systems responsive, cost-aware, and reliable as usage grows.

RAG Chatbot Examples: Appinventiv’s MyExec AI Implementation Deep Dive

To understand how enterprise RAG systems move beyond theory, the MyExec AI assistant offers a practical example of custom RAG chatbot development. The RAG-powered chatbot was built as a conversational business consultant designed to analyze complex business documents and deliver strategic recommendations through a chat interface.

At the core was a multi-agent RAG chatbot architecture, meaning different AI agents handled intent routing, document retrieval, analysis, and response generation. This structure allowed the system to reason through business queries rather than simply answering conversational prompts.

Key RAG chatbot implementation elements included:

- Document intelligence pipeline: A RAG framework retrieved factual snippets from uploaded reports, ensuring recommendations stayed grounded and reducing hallucination risk.

- Agent orchestration using the ReAct framework: Specialized agents planned actions, retrieved data, and generated insights collaboratively.

- Memory-driven architecture: MongoDB stored the session context, so conversations retained continuity across interactions.

- Enterprise-grade deployment: Docker containers on AWS ECS enabled scalable, secure rollout with performance monitoring via LangSmith.

The outcome was a scalable AI consultant capable of turning dense business data into actionable insights while maintaining accuracy, explainability, and operational stability for enterprise-style deployments.

Cross-Industry Use Cases of RAG Chatbots

RAG chatbot systems rarely exist in isolation. They sit inside complex enterprise ecosystems where compliance, security, scale, and performance expectations are already established. Our experience building enterprise RAG chatbot solutions across industries has shaped how we approach these deployments, particularly when reliability and governance are non-negotiable.

Healthcare Platforms: Compliance Awareness

Healthcare AI consistently highlights how critical data governance is. Clinical documentation, patient data handling, and regulatory expectations require strict access controls, traceability, and secure data pipelines. These learnings translate directly into how we design knowledge ingestion and retrieval safeguards for enterprise RAG assistants.

Banking and Financial AI Solutions: Security Rigor

Financial platforms demand precision, auditability, and strong encryption standards. AI systems supporting financial insights or fraud analysis taught us how to structure secure retrieval workflows, implement strict permission layers, and maintain response explainability. These practices reduce risk when enterprise chatbots interact with sensitive business information.

Aviation and Enterprise SaaS Applications: Scaling Expertise

Large-scale operational platforms bring performance pressures. Real-time data access, distributed infrastructure, and high user concurrency helped shape our approach to containerized deployment, load balancing, and resilient AI architecture for RAG systems.

Consumer AI Platforms: Performance Optimization

High-traffic consumer apps emphasize latency control, cost efficiency, and consistent response quality. Techniques like prompt optimization, caching strategies, and efficient model routing often carry over directly into enterprise AI deployments.

Together, these cross-industry experiences strengthen our ability to design RAG chatbot systems that remain secure, scalable, and operationally reliable across varied enterprise environments.

Enterprise Investment Reality: Cost of Building a RAG Chatbot

Cost usually becomes a real discussion once AI moves from pilot to production. Enterprise RAG chatbots are not just conversational tools. They connect internal knowledge, security layers, and operational workflows. That is why investment typically ranges between $50K and $500K, depending on scope.

Here is how that investment typically breaks down:

| Cost Driver | Typical Cost Range | What Drives It |
|---|---|---|
| Data preparation | $10K–$80K | Cleaning documents, structuring data, and semantic tagging |
| Integration depth | $15K–$120K | CRM, ERP, knowledge bases, API connectivity |
| Compliance & security | $10K–$70K | Access control, encryption, and audit readiness |
| Model customization | $5K–$60K | Prompt design, routing logic, and domain adaptation |
| Infrastructure setup | $10K–$100K | Cloud deployment, storage, scaling, and readiness |
| Governance tooling | $5K–$70K | Monitoring, reporting, lifecycle management |

How enterprises usually justify the investment:

- Faster access to internal knowledge

- Reduced manual research effort

- More consistent customer and employee support

- Stronger governance over AI usage

Most organizations treat RAG-based chatbot development as an infrastructure investment that supports long-term AI adoption rather than a short-term feature build.


Enterprise RAG Implementation Readiness Checklist

Before moving forward with a RAG chatbot implementation, a quick readiness check can prevent delays, rework, and governance surprises.

Challenges in Building RAG Chatbots and How Appinventiv Addresses Them

RAG chatbot deployments usually look straightforward at the pilot stage. Then real enterprise conditions surface. Data lives in silos, legacy systems resist integration, compliance reviews slow timelines, and operational teams need clarity before adoption. These challenges, like AI adoption challenges in general, are normal. The difference comes from how early they are addressed.

Fragmented Enterprise Data Ecosystems

Enterprise knowledge rarely sits in one clean repository. Documents are scattered across shared drives, CRMs, internal portals, and departmental systems. Without structured consolidation, retrieval becomes inconsistent and response confidence drops.

How we address it:

- Unified ingestion pipelines across enterprise systems

- Metadata normalization for consistent retrieval context

- Controlled indexing without disrupting existing workflows

Legacy Integration Complexity

Many enterprise platforms were built long before AI or RAG integration became relevant. Limited APIs, outdated architecture, and security constraints often slow implementation more than expected.

How we address it:

- Middleware layers bridging legacy infrastructure securely

- API abstraction reduces long-term dependency risks

- Phased integration to maintain operational continuity

Governance Approval Cycles

AI deployments often trigger extended security, legal, and compliance reviews. Enterprises need clear traceability, data control, and risk visibility before moving into production.

How we address it:

- Governance alignment early in architecture design

- Built-in audit trails and explainability features

- Documentation supporting regulatory approval workflows

Retrieval Latency Challenges

As enterprise knowledge bases grow, retrieval performance can fluctuate. Slow or inconsistent responses quickly affect user confidence and system adoption.

How we address it:

- Hybrid search optimization and caching strategies

- Efficient indexing architecture

- Continuous latency monitoring and tuning
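
The caching and latency monitoring ideas above can be sketched in a few lines of Python. This is a simplified example under stated assumptions: `retrieve` stands in for whatever search backend is in use, and the `CachedRetriever` class, its cache size, and its key normalization are illustrative rather than a recommended configuration.

```python
import time
from collections import OrderedDict
from typing import Callable


class CachedRetriever:
    """Wraps a retrieval function with an LRU cache and latency tracking."""

    def __init__(self, retrieve: Callable[[str], list], max_entries: int = 1024):
        self._retrieve = retrieve          # hypothetical backend search call
        self._cache = OrderedDict()        # normalized query -> cached results
        self._max_entries = max_entries
        self.latencies_ms = []             # one entry per query, for monitoring

    def query(self, text: str) -> list:
        key = " ".join(text.lower().split())  # normalize case and whitespace
        start = time.perf_counter()
        if key in self._cache:
            self._cache.move_to_end(key)      # mark as most recently used
            results = self._cache[key]
        else:
            results = self._retrieve(text)
            self._cache[key] = results
            if len(self._cache) > self._max_entries:
                self._cache.popitem(last=False)  # evict least recently used
        self.latencies_ms.append((time.perf_counter() - start) * 1000)
        return results
```

In practice the cache would also need invalidation whenever the index is refreshed, and the recorded latencies would feed a monitoring dashboard instead of an in-memory list.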

Adoption and Operational Hurdles

Technical deployment alone does not guarantee usage. Teams often need clarity on reliability, workflow fit, and data trust before fully adopting AI assistants. Without that alignment, even capable systems remain underutilized.

How we address it:

- Phased rollout with validation checkpoints

- User enablement aligned with daily workflows

- Continuous feedback loops to refine system behavior

Addressing these challenges in building RAG chatbots early helps systems move from experimental pilots to dependable enterprise infrastructure.


Future of RAG Chatbots: Evolution of Enterprise RAG Systems

RAG AI chatbot development is already moving beyond simple Q&A chatbots. Conversations with clients increasingly focus on assistants that can reason, integrate, and act within workflows. From what we see across ongoing projects, the shift is toward AI systems that behave more like operational copilots than standalone tools.

- Agentic enterprise assistants are gaining traction.

Instead of single-response chatbots, enterprises are exploring multi-agent systems where specialized AI components handle retrieval, reasoning, and task execution. We are already designing architectures that support this modular evolution.

- Multimodal enterprise knowledge ecosystems are expanding.

Text alone is rarely enough now. Documents, dashboards, images, transcripts, and structured data increasingly feed the same AI layer. Our builds are structured to accommodate mixed knowledge formats from the start.

- Autonomous workflow assistance is emerging.

AI is moving from answering questions to supporting operational actions such as report generation, data summarization, and internal decision support.

- Continuous enterprise AI learning is becoming standard.

Index refresh cycles, monitoring pipelines, and governance frameworks ensure systems stay aligned with evolving business knowledge.

At Appinventiv, we design RAG systems with this trajectory in mind so enterprises are ready for what comes next, not just current AI capabilities.

How Appinventiv Helps Build an AI Chatbot With RAG Integration?

By now, you have seen how enterprise RAG chatbots move from pilots to operational systems. The difference usually comes down to engineering discipline, governance awareness, and experience working across complex enterprise environments. As a RAG development company, we bring this approach to every AI product we build at Appinventiv.

Here is a snapshot of our AI delivery footprint:

AI engineering scale and capability

- 300+ AI-powered solutions delivered across industries

- 200+ data scientists and AI engineers working on enterprise AI initiatives

- 150+ custom AI models trained and deployed in production environments

- 75+ enterprise AI integrations completed across complex ecosystems

- 50+ bespoke LLMs fine-tuned for domain-specific enterprise use cases

- Experience spanning 35 industries globally

Industry recognition and ecosystem strength

- Featured in the Deloitte Fast 50 India for two consecutive years

- Recognized among APAC’s high-growth companies by Statista and Financial Times

- Multiple strategic AI partnerships supporting enterprise deployments

Typical enterprise impact from AI deployments

- Up to 75 percent faster decision-making cycles

- Prediction accuracy reaching approximately 98 percent in mature models

- Time to market acceleration up to 10x for AI-enabled initiatives

- Operational cost reductions averaging around 40 percent

This experience shapes how we build RAG chatbot systems through our AI application development services. The focus stays on reliability, governance alignment, scalability, and measurable business value rather than experimentation alone.

Frequently Asked Questions

Q. What is a RAG chatbot?

A. A RAG chatbot combines a language model with a retrieval system that pulls information from your organization’s actual data before generating answers. This means responses stay grounded in internal documents, databases, or knowledge bases. For enterprises, it usually improves accuracy, traceability, and trust compared to chatbots relying only on pre-trained model knowledge.

Q. How to build a RAG chatbot?

A. Building a RAG chatbot usually starts with organizing enterprise data. Documents are cleaned, indexed, and embedded so they can be retrieved quickly. After that, a language model is connected through a retrieval pipeline, followed by security controls, evaluation loops, and deployment planning to ensure reliability under real usage conditions.
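
To show where retrieval meets the language model, here is a small Python sketch of the step that turns retrieved passages into a grounded prompt. The function name, the passage fields (`source`, `text`), and the prompt wording are assumptions for illustration. The actual model call is left out because it depends on the provider in use.

```python
def build_grounded_prompt(question: str, passages: list[dict]) -> str:
    """Assemble a prompt that asks the model to answer only from retrieved passages."""
    if not passages:
        # With no evidence, instruct the model to decline rather than guess.
        return (
            "No supporting documents were retrieved. "
            "Reply that the answer is not available in the knowledge base.\n\n"
            f"Question: {question}"
        )
    # Number each passage so the model can cite it as [n].
    sources = "\n".join(
        f"[{i}] ({p['source']}) {p['text']}" for i, p in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered sources below. "
        "Cite sources as [n]. If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\n"
        f"Question: {question}"
    )
```

Numbering the sources is what later allows the chatbot's answer to point back to specific documents, which supports the traceability described throughout this guide.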

Q. What is a RAG-based chatbot?

A. A RAG-based chatbot is essentially an AI assistant that retrieves relevant business information before generating responses. Instead of guessing from training memory, it references enterprise knowledge sources. This approach is commonly used where accuracy, compliance alignment, and explainability matter, especially in regulated industries or knowledge-heavy enterprise environments.

Q. How does Appinventiv build a RAG chatbot for production-grade environments?

A. At Appinventiv, production builds typically start with a data readiness assessment and governance alignment. Retrieval pipelines are designed around secure enterprise knowledge integration, not just model performance. Deployment includes monitoring, scaling strategy, and evaluation workflows so the chatbot remains reliable as usage grows and enterprise data evolves over time.

Q. What is the ROI of building a RAG-based chatbot?

A. ROI usually comes from faster knowledge access, reduced manual research, and improved decision support. Enterprises often see efficiency gains in customer support, internal operations, and analytics workflows. Over time, grounded AI responses help reduce operational costs while improving consistency, which tends to justify the infrastructure investment.

Q. What architecture is required for RAG chatbot deployment?

A. A typical enterprise RAG chatbot architecture includes a knowledge ingestion layer, vector retrieval system, language model integration, governance controls, and monitoring infrastructure. Cloud or hybrid deployment is common. The key requirement is ensuring data access control, retrieval accuracy, and performance stability as enterprise usage scales.
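
One way to picture access control at the retrieval layer is a filter that runs before any passage reaches the language model. The sketch below is a simplified Python example. The `allowed_groups` field and the deny-by-default behavior are assumptions made for illustration, not a description of a particular product's permission model.

```python
def filter_by_access(chunks: list[dict], user_groups: set[str]) -> list[dict]:
    """Keep only chunks whose allowed groups overlap with the user's groups.

    Chunks without an 'allowed_groups' entry are dropped (deny by default).
    """
    return [c for c in chunks if user_groups & set(c.get("allowed_groups", []))]
```

Applying the filter before prompt assembly means restricted content never enters the model's context, which is simpler to audit than trying to suppress it in the generated answer.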

Q. How is data prepared and embedded for enterprise RAG chatbot systems?

A. Data preparation usually starts by loading documents through endpoints like the /v2/files API, then reading files, splitting text using a text splitter, and enriching metadata such as node_label or text_node_properties. An embedding model or embedding function then generates vectors, which are stored in a vector store like ChromaDB for efficient retrieval.
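
The splitting and metadata steps can be sketched without any framework. The Python example below uses a plain character-based splitter with overlap. It is a stand-in for the text splitters that RAG libraries provide, and the function names, chunk sizes, and chunk ID format are assumptions for illustration. The embedding step is omitted because it depends on the chosen model.

```python
def split_text(text: str, chunk_size: int = 500, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character chunks that overlap by `overlap` characters."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("chunk_size must be positive and overlap must be in [0, chunk_size)")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last chunk already reaches the end of the text
    return chunks


def prepare_chunks(doc_id: str, text: str, metadata: dict) -> list[dict]:
    """Attach document metadata and a chunk index to every chunk."""
    return [
        {"id": f"{doc_id}#{i}", "text": chunk, "metadata": {**metadata, "chunk_index": i}}
        for i, chunk in enumerate(split_text(text))
    ]
```

The overlap keeps sentences that straddle a chunk boundary retrievable from either side. Production splitters usually break on sentence or section boundaries instead of raw character counts.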

Q. How are vector databases integrated into enterprise RAG chatbot systems?

A. Vector database integration involves storing embeddings in platforms like Pinecone, Neo4j AuraDB, or ChromaDB to enable fast semantic retrieval. Tools such as Pinecone Index, Neo4jVector, or LangChain Neo4j Cypher chain help manage indexing, query execution, and retrieval workflows while ensuring scalable, context-aware chatbot responses.
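
To illustrate what a vector database does at query time, the Python sketch below implements a toy in-memory index with cosine similarity and a metadata filter. It is not the API of Pinecone, Neo4j, or ChromaDB. The class and method names are hypothetical, and a real deployment would rely on the vendor's client library and approximate nearest-neighbor search instead of a brute-force scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class InMemoryVectorIndex:
    """Toy stand-in for a vector database: stores vectors, searches by brute force."""

    def __init__(self):
        self._items = []  # list of (item_id, vector, metadata) tuples

    def upsert(self, item_id: str, vector: list[float], metadata: dict) -> None:
        # Replace any existing entry with the same ID, then append the new one.
        self._items = [it for it in self._items if it[0] != item_id]
        self._items.append((item_id, vector, metadata))

    def query(self, vector: list[float], top_k: int = 3, where: dict = None) -> list[tuple]:
        """Return up to top_k (item_id, score) pairs; `where` requires exact metadata matches."""
        where = where or {}
        scored = [
            (cosine_similarity(vector, vec), item_id)
            for item_id, vec, meta in self._items
            if all(meta.get(k) == v for k, v in where.items())
        ]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [(item_id, score) for score, item_id in scored[:top_k]]
```

The `where` filter mirrors the metadata filtering most vector databases offer, which is how department or access restrictions are typically applied during retrieval.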
