- What Is Claude Cowork and Why Does It Matter?

- Cost to Build an AI Coworker Like Claude: The Quick Answer

- The Architecture Influencing the Claude Cowork Platform Development Cost

- The Hidden Costs Most Teams Miss

- Open Source Alternatives to Claude Cowork: Build vs. Buy vs. Fork

- How to Build an AI Coworker Platform: A Phased Approach

- What Technologies Are Required to Build an AI Coworker System?

- Why Enterprises Are Investing in AI Coworker Platforms

- How Appinventiv Can Help Build Your AI Coworker Platform

- FAQs

Key takeaways:

- AI coworker platform development ranges from $80,000 to $1,500,000+, based on complexity, from MVP to full enterprise systems.

- Agentic orchestration is the biggest cost driver, powering task planning, memory, and multi-step execution.

- Computer-use capabilities and integrations significantly increase costs, especially for enterprise-grade automation.

- Ongoing LLM inference and maintenance can exceed initial build costs if not optimized early.

- A phased build approach reduces risk, controls budget, and accelerates ROI validation.

When Anthropic launched Claude Cowork in January 2026, it didn’t just release another chatbot feature. It fundamentally changed what enterprises expect from AI. Within weeks, software stocks slid, Microsoft responded with its own Copilot Cowork integration, and open source teams scrambled to ship alternatives.

Now, every CTO and VP of Engineering we speak with is asking us the same question: how much does it cost to build an AI coworker platform like Claude?

We’ve been building AI systems for over a decade at Appinventiv, well before “agentic” became a buzzword. We’ve shipped 300+ AI-powered solutions and trained 150+ custom models.

That experience matters when estimating AI agent workflow platform development cost, because most teams underestimate orchestration complexity early on.

Our track record gives us something most “cost guide” articles don’t have: real invoice data, real project timelines, and real architectural decisions that determine whether your AI coworker platform costs $150K or $1.5M.

This blog breaks all of that down. No vague ranges. No hand-waving. Just the numbers, the decisions behind them, and the roadmap you need to build an AI coworker like Claude without blowing your budget.


What Is Claude Cowork and Why Does It Matter?

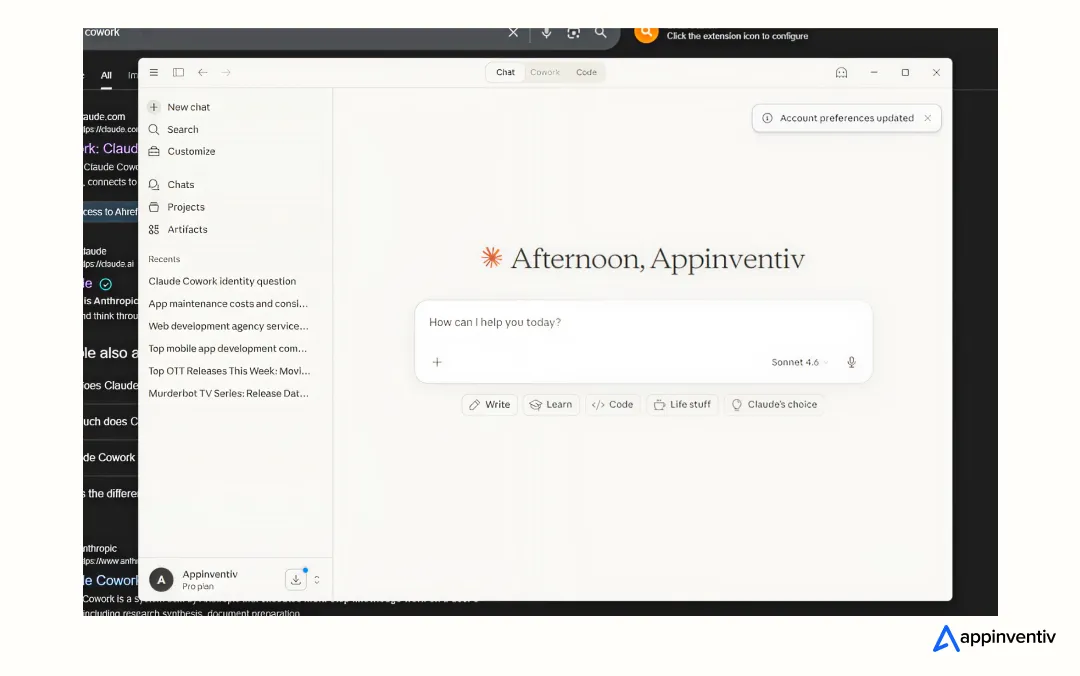

Before we talk about costs, let’s be specific about what you’re building. Claude Cowork is not a chatbot with a nicer UI.


For enterprises, the conversation quickly turns to enterprise AI agent platform development cost, especially once scalability and compliance enter the picture.

It is a desktop-native agentic AI system that can:

- Plan and execute multi-step tasks autonomously (research, document assembly, file organization)

- Access local files and applications on your computer (read, create, edit, organize)

- Connect to enterprise tools like Google Drive, Gmail, DocuSign, and FactSet via connectors and plugins

- Use your computer directly, including opening apps, navigating browsers, and filling spreadsheets

- Accept tasks remotely through Dispatch, so you can message from your phone, and the agent works on your desktop

In short, Claude Cowork is an AI agent workflow platform that acts like a remote employee who happens to live inside your computer. Anthropic’s Head of Product for Enterprise described it as the shift toward “vibe working,” where professionals describe outcomes, and the AI handles execution.

When you set out to build an AI coworker platform, you are replicating this entire capability stack. That includes the LLM backbone, the agentic orchestration layer, the computer-use interface, enterprise integrations, security controls, and the end-user experience that makes non-technical workers comfortable delegating real tasks.

Cost to Build an AI Coworker Like Claude: The Quick Answer

For teams that want the bottom line before the breakdown, here it is:

| Platform Complexity | Estimated Cost Range | Timeline |
|---|---|---|
| MVP / Proof of Concept | $80,000 – $150,000 | 3 – 4 months |
| Mid-Complexity (single-domain, limited integrations) | $150,000 – $350,000 | 5 – 8 months |
| Full Enterprise Platform (multi-agent, multi-integration) | $350,000 – $800,000+ | 8 – 14 months |
| Claude Cowork-Grade (computer use, autonomous planning, plugin ecosystem) | $800,000 – $1,500,000+ | 12 – 18+ months |

These numbers come from our actual project data across AI agent development engagements. They include discovery, architecture, development, testing, deployment, and the first three months of post-launch support. They do not include ongoing LLM inference costs, which we cover separately below.

Within each tier, the cost to develop a Claude Cowork-style platform varies heavily with the autonomy level and the number of integrations.

Now, let’s break down where every dollar goes.

The Architecture Influencing the Claude Cowork Platform Development Cost

You cannot budget accurately without understanding the agentic workflow system architecture you are building. A Claude Cowork-like platform has six core layers, and each layer carries its own cost profile.

1. LLM Foundation Layer

This is the reasoning engine. Claude Cowork runs on Anthropic’s Opus 4.6, which features a 1-million-token context window and a 14.5-hour task completion horizon. Your platform needs a comparable language model backbone.

Your options:

| Approach | Pros | Cons | Cost Impact |
|---|---|---|---|
| Commercial API (OpenAI, Anthropic, Google) | Fast to deploy, high quality | Ongoing token costs, vendor lock-in | Low build cost, high run cost |
| Open-source model (LLaMA 3, Mistral, Qwen) | Full control, no per-token fees | Requires GPU infra, fine-tuning | High build cost, lower run cost |
| Hybrid (open-source for common tasks, commercial for complex reasoning) | Balanced cost and quality | Routing complexity | Medium build, medium run |

Estimated development cost: $20,000 – $80,000, depending on whether you are wrapping an API or hosting and fine-tuning your own models.

We typically advise our generative AI development clients to start with commercial APIs during the MVP stage and shift toward hybrid or open-source once usage patterns are clear. That approach saves 30-50% of the initial generative AI build budget.

2. Agentic Orchestration Layer

This is the “brain” of the system. It takes a user’s high-level request, breaks it into sub-tasks, assigns those tasks to the right tools or models, monitors progress, handles errors, and reassembles the output. Claude Cowork does this well enough that you can “queue up tasks and let Claude work through them in parallel.”

Building this layer from scratch is the single most expensive component of the entire project. It involves:

- Task decomposition and planning logic (turns “prepare a quarterly report from these five data sources” into a sequence of file-reads, data extraction, analysis, and document generation)

- Tool selection and routing (decides when to use a file-system tool vs. a browser vs. an API connector)

- Memory and context management (tracks what has been done, what remains, and what the user cares about)

- Error recovery and retry logic (handles failed API calls, ambiguous instructions, and partial completions)

- Multi-agent coordination, if you want parallel execution (one agent researches while another drafts)

Estimated development cost: $60,000 – $200,000
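To make the responsibilities listed above concrete, here is a minimal sketch of an orchestration loop with planning, tool routing, progress tracking, and retries. It is an illustration under stated assumptions, not how Claude Cowork or any framework implements it: `plan`, `run`, `Step`, `RunState`, and the tool names are all hypothetical, and a real planner would call an LLM instead of returning a fixed list.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str             # name of the tool that should handle this step
    payload: str          # input passed to the tool
    max_retries: int = 2  # extra attempts allowed after the first failure


@dataclass
class RunState:
    done: list = field(default_factory=list)    # (tool, output) pairs completed so far
    failed: list = field(default_factory=list)  # steps that exhausted their retries


def plan(request: str) -> list[Step]:
    """Hypothetical planner: a real system would ask an LLM to decompose the request."""
    return [
        Step("read_files", request),
        Step("extract_data", request),
        Step("draft_report", request),
    ]


def run(request: str, tools: dict[str, Callable[[str], str]]) -> RunState:
    state = RunState()
    for step in plan(request):
        handler = tools[step.tool]  # tool selection and routing
        for attempt in range(step.max_retries + 1):
            try:
                state.done.append((step.tool, handler(step.payload)))
                break
            except RuntimeError:
                # Error recovery: retry, and record the step once retries run out.
                if attempt == step.max_retries:
                    state.failed.append(step)
    return state


# Usage: one tool fails once with a transient error, then succeeds on retry.
calls = {"n": 0}


def flaky_extract(payload: str) -> str:
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("transient failure")
    return "rows extracted"


demo_tools = {
    "read_files": lambda p: "5 files read",
    "extract_data": flaky_extract,
    "draft_report": lambda p: "draft ready",
}
result = run("prepare a quarterly report from these five data sources", demo_tools)
print([tool for tool, _ in result.done], result.failed)
```

Even this toy version shows where the engineering effort goes: the loop itself is short, but production systems need persistent state, smarter replanning after failures, and parallel execution across agents.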

Frameworks like LangGraph, CrewAI, and Haystack can reduce backend engineering costs by 20-40% here, but significant custom work is still needed for production-grade reliability.

We go deeper into orchestration approaches in our enterprise AI copilot development guide.

3. Computer Use and Desktop Interface Layer

What makes Claude Cowork different from a typical AI assistant is that it actually operates your computer. It generates mouse movements, keyboard actions, and screen navigation. It can open applications, navigate websites, fill forms, and manage files just like a person would.

Building this capability means developing:

- A screen capture and interpretation system (understands what’s on the screen)

- An action execution engine (generates mouse clicks, keystrokes, and navigation sequences)

- Application-specific adapters for common tools (Excel, browser, file system)

- Safety controls (permissions, confirmation prompts, sandboxing)
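The safety controls in the list above can be sketched as a small policy gate that every proposed action passes through before the execution engine touches the mouse or keyboard. This is an assumption-laden illustration: the action kinds, the `app:detail` target format, and the `decide` function are hypothetical and not taken from any vendor's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "click", "type", "delete_file"
    target: str  # hypothetical "app:detail" format, e.g. "excel:Sheet1!A1"


# Hypothetical policy: which action kinds run freely and which need a human "yes".
AUTO_ALLOWED = {"click", "type", "scroll"}
NEEDS_CONFIRMATION = {"delete_file", "send_email", "submit_form"}


def decide(action: Action, allowed_apps: set[str]) -> str:
    """Return 'allow', 'confirm', or 'deny' for a proposed action."""
    app = action.target.split(":", 1)[0]
    if app not in allowed_apps:
        return "deny"      # the user never granted access to this app
    if action.kind in NEEDS_CONFIRMATION:
        return "confirm"   # pause and show a confirmation prompt
    if action.kind in AUTO_ALLOWED:
        return "allow"
    return "deny"          # unknown action kinds are rejected by default


granted = {"excel", "browser"}
print(decide(Action("click", "excel:Sheet1!A1"), granted))
print(decide(Action("submit_form", "browser:checkout"), granted))
print(decide(Action("click", "mail:inbox"), granted))
```

The deny-by-default branch is the design choice that matters: an agent that invents a new kind of action should be stopped, not waved through.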

Anthropic reported that Claude’s computer use capabilities improved from under 15% to 72.5% on OSWorld, which is a benchmark for AI agents navigating real computer environments. Reaching that level of reliability is non-trivial.

Estimated development cost: $80,000 – $250,000

This is the layer most teams underestimate. If your platform needs to replicate Claude Cowork’s computer use capabilities, budget generously here. If you are building a more constrained system that works through APIs and connectors only (without direct screen interaction), you can reduce this to $20,000 – $60,000.

4. Enterprise Integration Layer

Claude Cowork connects to Google Drive, Gmail, DocuSign, FactSet, and supports customizable plugins. Any Claude Cowork alternative you build will need a comparable integration ecosystem.

Each integration involves:

- API authentication and token management

- Data mapping and schema normalization

- Error handling and rate limiting

- Security and compliance controls (especially for regulated industries)
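Error handling and rate limiting, from the list above, usually come down to retrying throttled calls with exponential backoff. The sketch below shows one common shape for that wrapper. `RateLimited` and `call_with_backoff` are hypothetical names, and the delay values are placeholders rather than recommendations for any particular API.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical error a connector raises when the upstream API throttles a call."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, waiting base_delay * 2**attempt seconds after each throttled attempt."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the orchestrator
            sleep(base_delay * 2 ** attempt)
    raise ValueError("max_attempts must be at least 1")


# Usage: the fake connector is throttled twice, then succeeds.
# Passing delays.append as `sleep` records the waits instead of actually sleeping.
attempts = {"n": 0}
delays: list[float] = []


def flaky_call() -> str:
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RateLimited()
    return "ok"


outcome = call_with_backoff(flaky_call, sleep=delays.append)
print(outcome, delays)
```

Production connectors typically add random jitter to the delay and honor any retry hint the upstream API returns; both are left out here to keep the sketch short.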

Cost per integration:

| Integration Type | Cost Range |
|---|---|
| Standard SaaS connector (Google, Slack, Jira) | $5,000 – $15,000 each |
| Complex enterprise system (SAP, Salesforce, legacy ERP) | $20,000 – $50,000 each |
| Custom plugin framework (so clients can build their own) | $40,000 – $80,000 |
| MCP (Model Context Protocol) support | $15,000 – $30,000 |

Estimated total for integration layer: $60,000 – $250,000

This number depends entirely on how many integrations you ship at launch. We recommend starting with 3-5 high-value connectors and expanding based on user demand. Popular AI integration examples can help you prioritize which connectors move the needle.

5. Security, Compliance, and Governance Layer

When your AI coworker has access to a user’s file system, email, and enterprise applications, security is not optional. It is the layer that determines whether enterprises will adopt your platform at all.

Required components include:

- Role-based access control (RBAC)

- End-to-end encryption for data in transit and at rest

- Audit logging for every agent action

- Configurable permission boundaries (folder-level, app-level)

- Compliance with GDPR, HIPAA, SOC 2, and the EU AI Act (depending on target market)

- Data residency controls for multinational deployments
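Three of the components above (RBAC, folder-level permission boundaries, and audit logging) fit naturally into a single authorization check. The following is a minimal sketch under assumed names: `POLICY`, `authorize`, and the folder paths are hypothetical, and a production system would persist the audit trail to tamper-evident storage rather than an in-memory list.

```python
from datetime import datetime, timezone
from pathlib import PurePosixPath

audit_log: list[dict] = []  # in-memory stand-in for a durable audit store

# Hypothetical role policy: permitted actions and the folders each role may touch.
POLICY = {
    "analyst": {"actions": {"read"}, "folders": ["/work/reports"]},
    "admin": {"actions": {"read", "write"}, "folders": ["/work"]},
}


def is_within(path: str, allowed_roots: list[str]) -> bool:
    """True if path is one of the allowed roots or sits underneath one."""
    p = PurePosixPath(path)
    if ".." in p.parts:
        return False  # reject traversal attempts instead of trying to resolve them
    return any(
        p == PurePosixPath(root) or PurePosixPath(root) in p.parents
        for root in allowed_roots
    )


def authorize(user: str, role: str, action: str, path: str) -> bool:
    rule = POLICY.get(role, {})
    allowed = action in rule.get("actions", set()) and is_within(
        path, rule.get("folders", [])
    )
    # Every decision is logged, including denials.
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "path": path,
        "allowed": allowed,
    })
    return allowed


print(authorize("ana", "analyst", "read", "/work/reports/q1.docx"))
print(authorize("ana", "analyst", "write", "/work/reports/q1.docx"))
print(authorize("ana", "analyst", "read", "/work/reports/../hr/pay.csv"))
print(len(audit_log))
```

Logging denials as well as approvals is deliberate: auditors generally want to see what the agent tried to do, not only what it was allowed to do.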

Estimated development cost: $40,000 – $120,000

If you are targeting US enterprise customers, HIPAA and SOC 2 compliance alone can add $30,000-$60,000 in audit and certification costs on top of the development work. These are not optional for large deals.

6. Frontend and User Experience Layer

Claude Cowork runs as a desktop application (macOS and Windows) with a chat-like interface where users assign tasks, review progress, and provide feedback. The Dispatch feature lets users send tasks from their phones.

Building the frontend involves:

- Desktop application (Electron, Tauri, or native)

- Task management UI (queue, progress tracking, approval workflows)

- Mobile companion app or web interface

- Real-time status updates and notifications

- Settings for folder access, connector management, and preferences

Estimated development cost: $40,000 – $120,000

This layer is often where open-source Claude Cowork alternatives cut corners. Tools like Kuse and OpenWork ship functional but minimal interfaces. If you are building for enterprise buyers, investing in a polished, intuitive UX is what separates a tool people test from a tool people adopt.
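As a quick sanity check, the six per-layer estimates above can be rolled up into a single range. The snippet below only adds the figures quoted in this section; the dictionary keys and the `total` helper are illustrative names, and the result excludes audit and certification fees and ongoing inference costs.

```python
# (low, high) development estimates in USD, copied from the six layers above.
LAYERS = {
    "LLM foundation": (20_000, 80_000),
    "Agentic orchestration": (60_000, 200_000),
    "Computer use": (80_000, 250_000),
    "Enterprise integration": (60_000, 250_000),
    "Security and compliance": (40_000, 120_000),
    "Frontend and UX": (40_000, 120_000),
}


def total(layers: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the low ends and the high ends separately."""
    low = sum(lo for lo, _ in layers.values())
    high = sum(hi for _, hi in layers.values())
    return low, high


full_build = total(LAYERS)
# Constrained variant: APIs and connectors only, no direct screen interaction.
api_only = total({**LAYERS, "Computer use": (20_000, 60_000)})

print(f"Full computer-use build: ${full_build[0]:,} to ${full_build[1]:,}")
print(f"API-only build:          ${api_only[0]:,} to ${api_only[1]:,}")
```

Summed this way, the layers come to $300,000 to $1,020,000 with full computer use, and $240,000 to $830,000 for the API-only variant, which shows how much of the budget hinges on that single architectural decision.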

The Hidden Costs Most Teams Miss

The architectural layers above cover the build. But the total AI platform development cost includes several line items that consistently surprise first-time buyers.

Ongoing LLM Inference Costs

This is the biggest recurring expense and the one most frequently underestimated.

| Usage Tier | Monthly Token Cost (Commercial API) |
|---|---|
| Light (1,000 tasks/month, simple queries) | $500 – $2,000 |
| Moderate (10,000 tasks/month, mixed complexity) | $5,000 – $15,000 |
| Heavy (50,000+ tasks/month, complex multi-step) | $25,000 – $80,000+ |

Smart model routing (using a cheaper model for simple tasks and routing complex tasks to a premium model) can cut per-conversation costs by up to 80%.
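To see how routing produces savings of that order, consider a rough sketch in which tasks with few steps go to a cheap model and everything else goes to a premium one. Every number here is an assumption chosen for illustration: the per-task prices, the step threshold, and the 80/20 workload mix are hypothetical, not measured values or vendor pricing.

```python
# Hypothetical per-task costs in cents; real prices depend on model and token counts.
COST_CENTS = {"cheap": 2, "premium": 40}


def route(task_steps: int, threshold: int = 3) -> str:
    """Send short tasks to the cheap model and longer ones to the premium model."""
    return "cheap" if task_steps <= threshold else "premium"


def monthly_cost_cents(task_step_counts: list[int], routed: bool) -> int:
    """Total monthly cost with routing on, or with everything sent to premium."""
    return sum(
        COST_CENTS[route(steps) if routed else "premium"]
        for steps in task_step_counts
    )


# Assumed workload: 10,000 tasks, 80% simple (1 step), 20% complex (6 steps).
workload = [1] * 8_000 + [6] * 2_000

baseline = monthly_cost_cents(workload, routed=False)
with_routing = monthly_cost_cents(workload, routed=True)
savings = 1 - with_routing / baseline

print(f"All premium:  ${baseline / 100:,.2f}")
print(f"With routing: ${with_routing / 100:,.2f}")
print(f"Savings:      {savings:.0%}")
```

Under these assumptions the bill drops from $4,000 to $960 a month, a 76% reduction. The real figure depends on how much of the workload is genuinely simple and on how often the router misclassifies a hard task and has to escalate.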

Data Preparation and Readiness

Your AI coworker is only as good as the data it can access. Clean, well-structured data pipelines are foundational. Budget 20-30% of your total timeline for data readiness work, especially if your enterprise clients have messy document repositories or poorly documented internal APIs.

Estimated cost: $15,000 – $60,000

Maintenance and Retraining

Annual maintenance typically runs 15-25% of the initial build cost. This covers prompt updates, model upgrades, integration upkeep, security patches, and performance optimization.
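Combining that maintenance percentage with the inference tiers above gives a rough annual run cost. The worked example below assumes a $350,000 build running at the "Moderate" usage tier. `annual_run_cost` is an illustrative helper, and the scenario is a hypothetical chosen to show the arithmetic, not a quote.

```python
def annual_run_cost(build_cost: int, monthly_inference: int, maintenance_pct: int) -> int:
    """Yearly inference spend plus maintenance as a percentage of the build cost."""
    return 12 * monthly_inference + build_cost * maintenance_pct // 100


build = 350_000  # assumed build cost in USD

# Moderate tier: $5,000 to $15,000 per month; maintenance: 15% to 25% per year.
low = annual_run_cost(build, 5_000, 15)
high = annual_run_cost(build, 15_000, 25)

print(f"Annual run cost: ${low:,} to ${high:,}")
print(f"Years until run costs exceed the build: {build / high:.1f} to {build / low:.1f}")
```

In this scenario the platform costs $112,500 to $267,500 per year to operate, so cumulative run costs overtake the original build somewhere between roughly 1.3 and 3.1 years in.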

Team and Talent Costs

A realistic development team for a mid-complexity AI coworker platform includes:

| Role | Monthly Cost (US-based) | Monthly Cost (Offshore) |
|---|---|---|
| AI/ML Engineer | $30,000 – $40,000 | $10,000 – $16,000 |
| Backend Engineer | $28,000 – $36,000 | $8,000 – $14,000 |
| Frontend Engineer | $14,000 – $18,000 | $5,000 – $8,000 |
| DevOps/Infra Engineer | $15,000 – $20,000 | $5,000 – $9,000 |
| QA Engineer | $10,000 – $14,000 | $4,000 – $6,000 |
| Project Manager | $12,000 – $16,000 | $5,000 – $8,000 |

Choosing the right staffing model (US-based, offshore, or a blend of the two) can reduce overall AI development cost by 30-50%.

Open Source Alternatives to Claude Cowork: Build vs. Buy vs. Fork

Before committing to a full custom build, it is worth evaluating what already exists. Several open-source projects now offer Claude Cowork-like capabilities:

| Alternative | Key Strength | Limitation |
|---|---|---|
| OpenWork | Automation-first, supports WhatsApp/Slack/Telegram connectors | Early-stage, limited enterprise features |
| Kuse | Rust-native, blazing performance, cross-platform, BYOK | Still maturing, community-driven support only |
| DeepSeek-Cowork | Low-cost (uses DeepSeek API), mobile integration via Happy app | Hybrid architecture adds complexity |
| Openclaw | Lives in existing messaging channels (WhatsApp, Discord, Slack) | Not desktop-native, less suitable for file-heavy work |
| Eigent | Self-hosted multi-agent workflows | Requires significant configuration |

- When open-source makes sense: You want to validate a use case quickly, your team has strong DevOps capability, and you are comfortable with community-supported tooling.

- When custom build makes sense: You need deep integration with proprietary systems, operate in a regulated industry, require full IP ownership, or your workflows cannot be templated. For most enterprise-grade deployments, custom development is the right call.

Unpack this decision further in our AI agent development cost analysis.

How to Build an AI Coworker Platform: A Phased Approach

At Appinventiv, we never recommend building everything at once. The phased approach reduces risk, validates assumptions early, and controls burn rate.

Phase 1: Discovery and Architecture (4 – 6 weeks | $15,000 – $30,000)

- Define target workflows and user personas

- Map integration requirements

- Select LLM strategy (commercial, open-source, hybrid)

- Design the agentic workflow system architecture

- Produce a detailed technical specification and cost model

Phase 2: MVP Build (3 – 5 months | $80,000 – $200,000)

- Core orchestration engine with 2-3 workflows

- 2-3 enterprise connectors

- Basic desktop or web interface

- Permission and safety controls

- Internal testing and iteration

Phase 3: Enterprise Hardening (3 – 5 months | $100,000 – $250,000)

- Computer use capabilities (if required)

- Advanced multi-agent coordination

- Compliance and audit controls

- Plugin/connector framework for extensibility

- Performance optimization and load testing

Phase 4: Scale and Ecosystem (Ongoing | $50,000 – $150,000/quarter)

- Additional integrations and plugins

- Mobile companion app

- Advanced analytics and reporting

- Customer onboarding tooling

- Continuous model optimization

This phased model is how we approach every enterprise AI assistant development engagement. It lets you ship something usable fast and expand based on what users actually need.

What Technologies Are Required to Build an AI Coworker System?

The technology stack for a production-grade AI coworker platform spans several layers:

| Layer / Category | Tools & Technologies |
|---|---|
| LLM and AI Layer | Claude API (Anthropic), GPT-4o (OpenAI), LLaMA 3 (Meta), Mistral, Gemini; LangGraph, LangChain, CrewAI, Haystack |
| Backend | Python (FastAPI, Django), Node.js, Go, Redis (caching), PostgreSQL (storage) |
| Desktop Application | Tauri (Rust), Electron (JavaScript), Swift (macOS), C# (Windows) |
| Infrastructure | AWS, GCP, Azure, Docker, Kubernetes, Terraform |
| Security | OAuth 2.0, SSO, AES-256 encryption, SOC 2, HIPAA compliance |
| Observability | ELK Stack, CloudWatch and custom dashboards for monitoring |

The stack you choose directly impacts both build cost and long-term operational cost.

Why Enterprises Are Investing in AI Coworker Platforms

The business case for building an AI coworker platform goes beyond efficiency gains. Here’s what we’re seeing across the industry:

- Productivity at scale. Anthropic’s internal data shows that Cowork users complete tedious tasks that would otherwise get skipped, leading to better decisions. Tasks that take a human analyst 4-6 hours (compiling research, organizing documents, drafting reports) can be completed by an AI coworker in minutes.

- Talent leverage. A well-built AI coworker lets one employee produce the output of three, without burnout. For organizations struggling to hire for specialized roles (legal research, financial analysis, compliance review), this is a force multiplier.

- Competitive positioning. Anthropic’s enterprise customers account for roughly 80% of its business. That tells you where the market is headed. Companies that build or adopt AI coworker platforms now will set the operational baseline that competitors have to match.

- Revenue from the platform itself. If you are building a commercial AI coworker platform (not just an internal tool), the subscription and usage-based revenue models are well-proven. Claude Cowork is bundled with Pro ($20/month) and Max ($100-200/month) plans. Microsoft is integrating equivalent capabilities into its 365 Copilot suite.


How Appinventiv Can Help Build Your AI Coworker Platform

We’ve been developing AI systems and offering AI consulting services since well before the current wave. That experience means we don’t just write code; we make architectural decisions that save you hundreds of thousands of dollars over the lifetime of your platform.

Here’s what working with us looks like:

- Strategy-first engagement. We start with your business case, not your feature list. We identify which workflows deliver measurable ROI first and build from there.

- Full-stack AI team. 1600+ engineers across AI/ML, backend, frontend, DevOps, QA, and project management. No subcontracting, no gaps.

- Proven delivery. 3000+ projects shipped, 80+ Gen AI applications launched, and recognition from the Economic Times as a leader in AI Product Engineering and Digital Transformation. Back-to-back Deloitte Tech Fast 50 wins in 2023 and 2024.

- Flexible engagement models. Dedicated team, time-and-material, or fixed-price, depending on your stage and certainty.

- Post-launch support. We don’t disappear after deployment. Our teams handle ongoing optimization, retraining, and scaling.

Whether you’re building an internal AI coworker for your operations team or a commercial platform to compete with Claude Cowork, we bring the architecture expertise and delivery muscle to make it happen.


FAQs

Q. How much does it cost to build an AI coworker platform like Claude?

A. Expect to spend at least $80,000 on a bare-bones MVP. Chasing the full enterprise dream with autonomous agents and deep integrations? We tell our clients to budget north of $1.5 million. The heavy lifting (orchestration engines and legacy system connectors) eats the most capital. Don’t forget the running costs, either: LLM inference adds another $50,000 to $200,000+ annually.

Q. What technologies are required to build an AI coworker system?

A. You need a robust stack, not just a simple API wrapper. The core is an LLM backbone wired together with an orchestration framework like LangGraph or CrewAI. On top of that sit a Tauri or Electron interface and secure REST/OAuth connectors for your legacy tech, all running on AWS, GCP, or Azure infrastructure.

Q. How long does it take to develop an AI coworker platform?

A. Forget overnight miracles. A functional MVP takes 3 to 5 months to hit the floor. A mid-tier setup with serious security reaches the 6 to 10-month mark. If you want a full Claude-level system? You’re staring down a 12 to 18-month marathon. Our playbook is simple: ship in phases. Get real tools in your team’s hands early, then scale up.

Q. What are the benefits of building AI coworker platforms for enterprises?

A. Brutal efficiency. We see these tools cutting routine grunt work, like document wrangling and data hunting, by up to 80%. That lets your operation scale output without blindly inflating headcount. More importantly, the critical but tedious tasks that humans consistently skip finally get done.

Q. Can Appinventiv help build AI coworker platforms for enterprise workflows?

A. Absolutely. We don’t just talk theory; we engineer the systems doing the heavy lifting. We have shipped over 300 AI solutions, trained 150+ custom models, and delivered 75+ enterprise integrations across finance, healthcare, and retail. Our teams have managed the entire lifecycle, from the initial blueprint straight through to deployment. Reach out, and we’ll map a realistic roadmap together.
