- The Fraud Landscape in Australia: Emerging Threat Patterns

- Why Traditional Fraud Detection Systems Are Failing

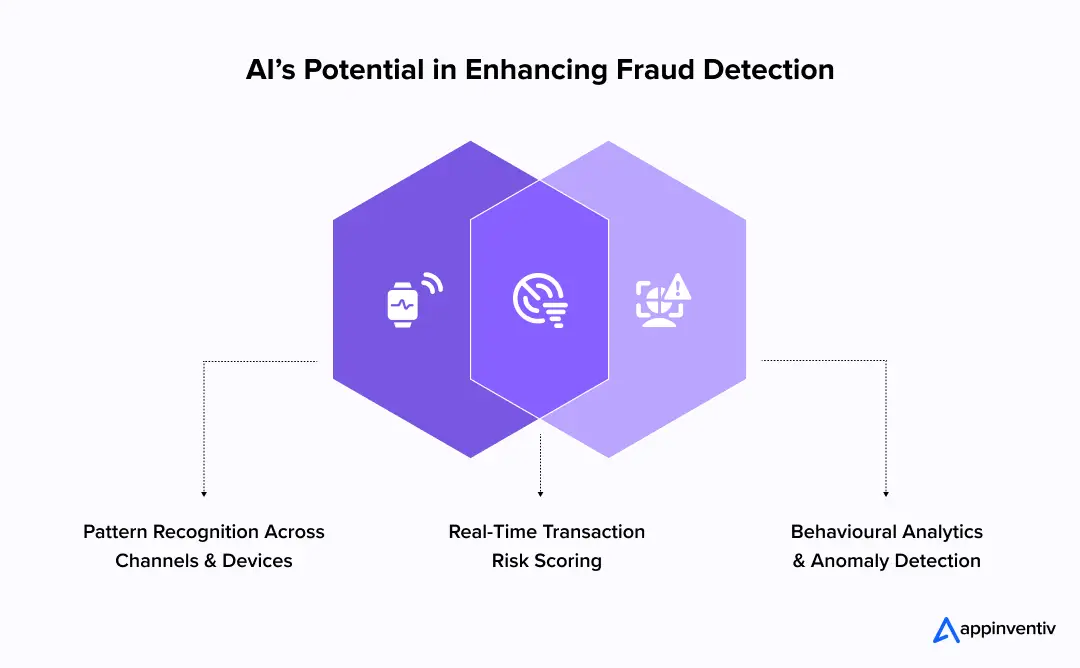

- How AI Enhances Fraud Detection Capabilities

- Core AI Techniques Used in Fraud Detection

- AI Use Cases for Financial Fraud Detection in Different Australian Industries

- Compliance & Regulatory Considerations for AI Integration in Fraud Prevention

- AI Fraud Detection Architecture: What Enterprises Need to Build

- How to Deploy AI Fraud Detection Successfully

- Challenges in Implementing AI in Fraud Systems & Strategies to Address Them

- What is The Cost of Implementing AI Fraud Detection?

- Business Benefits of Using AI for Fraud Detection

- Questions Leaders Should Ask Before Implementing AI Fraud Detection

- Future Trends of AI Fraud Detection in an Era of Real-Time Finance

- How Appinventiv Helps Organisations Deploy AI Fraud Detection

- FAQs

Key takeaways:

- AI Fraud Detection in Australia is moving from static rule engines to real-time behavioural risk intelligence embedded directly into payment and identity flows.

- AI for financial fraud detection helps reduce false positives, accelerate response time, and protect revenue without increasing customer friction.

- Australian institutions must align AI deployments with APRA CPS 234, ASIC resilience standards, and the Privacy Act 1988.

- The cost to implement AI for financial fraud detection ranges between AUD 70,000 and AUD 700,000 or more.

Fraud in Australia is no longer a fringe risk. It is systemic, fast-moving, and increasingly sophisticated. From real-time payment scams to identity manipulation across digital channels, Aussie organisations are facing a sharp rise in financial crime exposure.

According to the Australian Competition and Consumer Commission, scam losses reported through Scamwatch continue to reach alarming levels each year. Full-year data released around late 2025 indicate that total reported scam losses reached about AUD $334.9 million, a roughly 5% rise on the prior year’s comparable figure. Investment, phishing, and romance scams were among the highest contributors to loss totals.

At the same time, obligations under the Privacy Act 1988 and sector-specific regulations are tightening, placing greater accountability on organisations to detect, prevent, and report fraud proactively.

Traditional fraud detection relies on static, rule-based systems that flag transactions against pre-defined thresholds. With rapid adoption of the New Payments Platform (NPP), open banking through the Consumer Data Right (CDR), and the move toward T+0 settlement cycles, these systems are no longer sufficient. This is where AI fraud detection in Australia is moving from experimentation to necessity.

Artificial intelligence in the Australian FinTech industry introduces adaptive learning models that analyse behavioural signals, transaction anomalies, device fingerprints, and network relationships in real time. Instead of reacting only to known patterns, AI systems continuously learn from evolving threats.

However, deploying AI fraud detection is not simply about integrating a machine learning model. It requires architectural clarity, cross-functional alignment, and a structured roadmap. This blog examines how AI fraud detection is being applied across Australian industries, the compliance considerations, and a practical implementation roadmap.


The Fraud Landscape in Australia: Emerging Threat Patterns

The Australian market presents a unique target for global fraud syndicates due to its high wealth concentration and advanced digital infrastructure. We are observing several distinct shifts in how threats manifest:

- Payment and Card Fraud Evolution: While traditional skimming is down, “card-not-present” fraud and sophisticated interception of digital wallets are rising.

- Account Takeover (ATO) & Credential Theft: Large-scale data breaches have flooded the dark web with Australian PII (Personally Identifiable Information), leading to automated “credential stuffing” attacks against banking and retail portals.

- Synthetic Identity & Mule Accounts: Fraudsters are increasingly combining real and fabricated data to create “Frankenstein” identities that bypass basic KYC checks. These are often used to manage mule networks that facilitate money laundering.

- Insider Fraud and Internal Risk: Insider threats remain understated. Privileged access misuse and collusion schemes are harder to detect through static monitoring.

- Scam Ecosystems: Australia has seen a significant spike in “authorised push payment” (APP) fraud, where victims are manipulated into sending money voluntarily. Detecting these requires AI that understands conversational context and behavioural anomalies rather than just technical red flags.

Why Traditional Fraud Detection Systems Are Failing

Traditional systems rely on “if-then” logic. For example, “if a transaction is over $5,000 and occurs overseas, flag it.” Modern criminals know these rules as well as we do and have built scripts to stay just below the radar. Furthermore, legacy rule engines were designed for slower transaction environments. They depend on predefined thresholds and known risk markers. They fail in three predictable ways:

- They generate excessive false positives.

- They react to known patterns rather than emerging ones.

- They struggle with cross-channel data correlation.
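To make the weakness of "if-then" logic concrete, here is a minimal sketch of the example rule quoted above. The `Transaction` type, the field names, and the split-payment scenario are illustrative assumptions, not a description of any real rule engine.

```python
from dataclasses import dataclass


@dataclass
class Transaction:
    amount_aud: float
    is_overseas: bool


# Threshold taken from the example rule in the text; real engines hold many such rules.
THRESHOLD_AUD = 5_000


def static_rule_flags(tx: Transaction) -> bool:
    """Flag only when the amount is over the threshold AND the transaction is overseas."""
    return tx.amount_aud > THRESHOLD_AUD and tx.is_overseas


# One large overseas transfer trips the rule ...
single = [Transaction(9_800, True)]
# ... but the same value split into two payments stays under the threshold.
split = [Transaction(4_900, True), Transaction(4_900, True)]

print([static_rule_flags(t) for t in single])  # [True]
print([static_rule_flags(t) for t in split])   # [False, False]
```

The rule evaluates each transaction in isolation, so it has no way to notice that two payments just under the threshold add up to the same exposure.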

Traditional vs AI-Powered Fraud Detection in Australia

| Feature | Traditional Rule-Based Systems | AI-Powered Fraud Detection |
|---|---|---|
| Detection Logic | Static, manually updated rules | Dynamic, self-learning algorithms |
| Data Processing | Limited to transactional variables | High-dimensional (behavioural, device, network) |
| Response Time | Often reactive or post-settlement | Real-time inference and blocking |
| Accuracy | High false positives; high friction | Precision scoring; low customer friction |
| Scalability | Becomes slower as rules increase | Scales horizontally with data volume |

The role of AI-powered fraud detection is not to replace governance but to enhance precision.

How AI Enhances Fraud Detection Capabilities

Leveraging AI for fraud detection in Australia allows firms to move from “searching for a needle” to “understanding the haystack.” By utilising machine learning, businesses can establish a baseline of “normal” behaviour for every single user. The main capabilities this enables are:

- Behavioural Analytics and Anomaly Detection: AI in fraud detection in Australia examines behavioural baselines rather than static rules. It analyses device usage, transaction velocity, geolocation shifts, and network associations in real time.

- Real-Time Transaction Risk Scoring: Instead of post-event analysis, AI assigns dynamic risk scores before transaction settlement. This reduces exposure windows in real-time payment ecosystems.

- Cross-Channel Pattern Recognition: Fraud rarely occurs within a single system. AI aggregates signals across web, mobile, API, and contact centre interactions. Pattern recognition extends beyond isolated events.
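The idea of a per-user behavioural baseline can be sketched with a simple z-score over a customer's own transaction history. This is a deliberately simplified stand-in for the models described above: production systems combine many signals (device, velocity, geolocation), and the function name and sample amounts here are assumptions for illustration.

```python
from statistics import mean, stdev


def anomaly_score(history: list[float], new_amount: float) -> float:
    """Return how many standard deviations new_amount sits from the user's own mean."""
    if len(history) < 2:
        return 0.0  # not enough history to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Every past amount was identical: any change is maximally unusual.
        return 0.0 if new_amount == mu else float("inf")
    return abs(new_amount - mu) / sigma


# Hypothetical history of one customer's card payments in AUD.
history = [40.0, 55.0, 60.0, 45.0, 50.0]

typical = anomaly_score(history, 52.0)   # close to the baseline, small score
unusual = anomaly_score(history, 900.0)  # far outside the baseline, large score
print(round(typical, 2), round(unusual, 2))
```

Because the baseline is computed per user, the same AUD 900 payment could be routine for one customer and highly anomalous for another, which is the distinction static thresholds cannot make.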

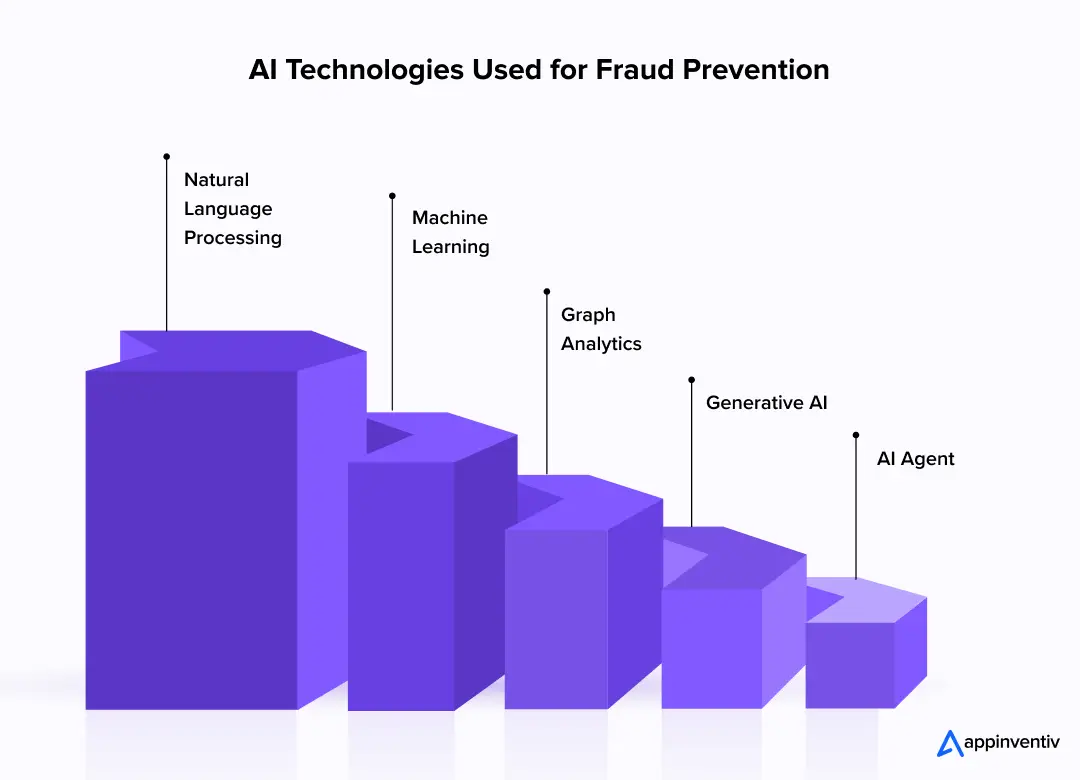

Core AI Techniques Used in Fraud Detection

AI in financial fraud detection relies on layered modelling approaches. No single algorithm can manage the scale, speed, and regulatory scrutiny associated with AI fraud detection. Each AI technique plays a distinct role within the broader architecture. Let’s see how:

- Machine Learning Risk Models: Supervised learning using historical data helps identify known fraud types, while unsupervised learning detects “never-before-seen” anomalies.

- Graph Analytics: This is critical for uncovering mule networks. By mapping the relationships between accounts, IP addresses, and physical locations, we can see the “clusters” of illicit activity.

- Natural Language Processing (NLP): NLP is vital for identifying phishing attempts and social engineering scripts in SMS and email communications.

- Generative AI for Fraud Detection: Generative AI can create synthetic “fraudulent” data to train models where real-world examples are scarce, helping prepare the system for new attack vectors.

- AI Agents for Fraud Detection: These autonomous AI agents in Australia can handle the initial triaging of alerts, gathering evidence and closing low-risk cases without human intervention, which drastically reduces the burden on SOC teams.
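As an illustration of the graph analytics technique, the sketch below groups accounts that are connected through shared attributes such as an IP address or device, using a union-find structure to compute connected components. The account IDs, attribute labels, and the `find_clusters` helper are hypothetical; real mule detection would weigh edges and add many more signals.

```python
from collections import defaultdict


def find_clusters(links: list[tuple[str, str]]) -> list[set[str]]:
    """Group accounts into connected components via the attributes they share."""
    parent: dict[str, str] = {}

    def find(node: str) -> str:
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving
            node = parent[node]
        return node

    def union(a: str, b: str) -> None:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_a] = root_b

    # Each link joins an account node to an attribute node.
    for account, attribute in links:
        union("acct:" + account, "attr:" + attribute)

    groups: dict[str, set[str]] = defaultdict(set)
    for account, _ in links:
        groups[find("acct:" + account)].add(account)

    # A single isolated account is not a cluster.
    return [members for members in groups.values() if len(members) > 1]


# Hypothetical observations: (account, shared attribute).
links = [
    ("A1", "ip:203.0.113.7"),
    ("A2", "ip:203.0.113.7"),
    ("A2", "device:d-42"),
    ("A3", "device:d-42"),
    ("A9", "ip:198.51.100.9"),
]

print(find_clusters(links))  # one cluster containing A1, A2 and A3
```

A1 and A3 never share an attribute directly, yet they land in the same cluster through A2. That transitive linkage is what per-transaction rules miss.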

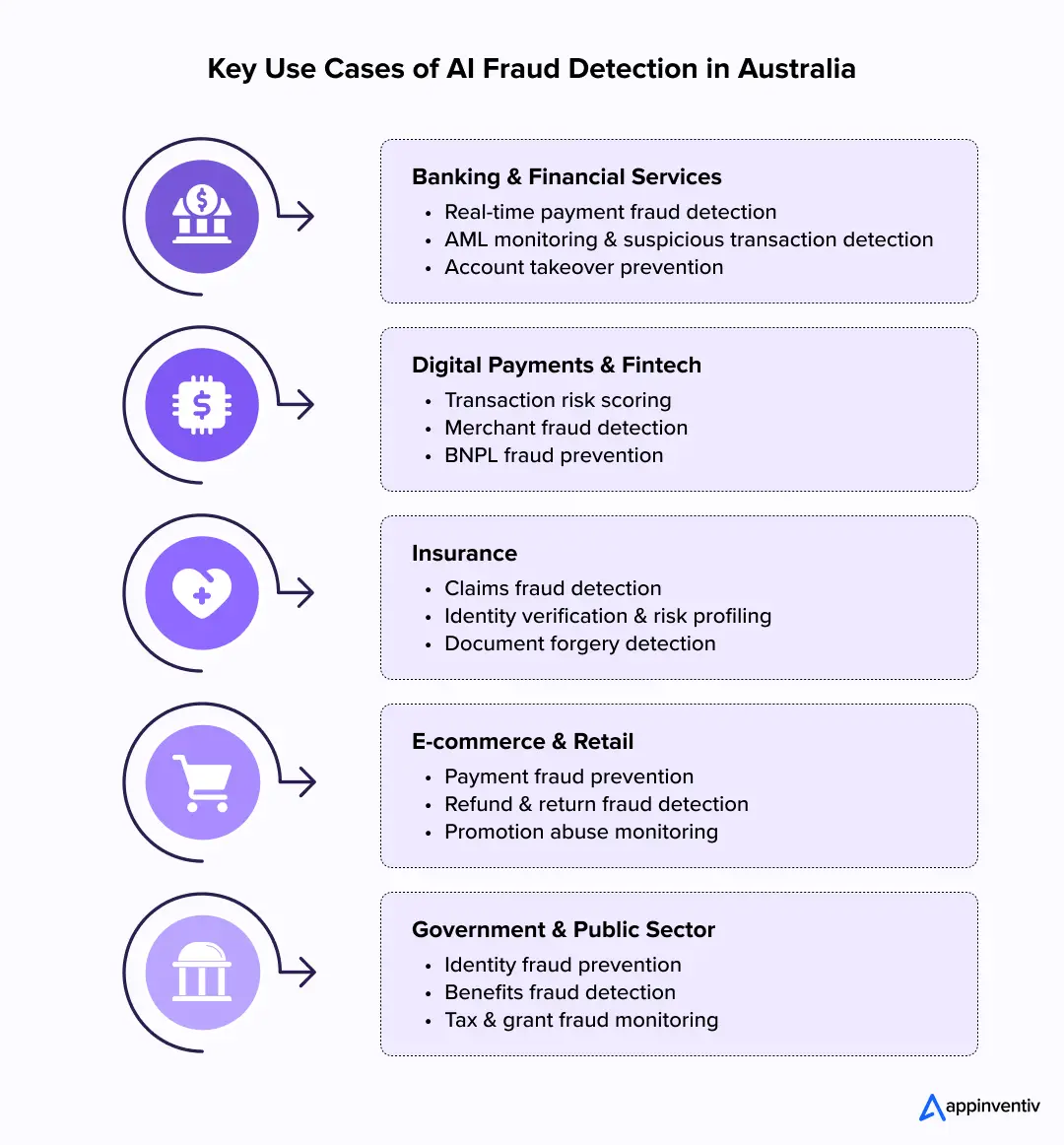

AI Use Cases for Financial Fraud Detection in Different Australian Industries

AI fraud detection in Australia is being deployed across regulated and high-velocity transaction environments. The application varies by industry, but the underlying principle remains the same: behavioural intelligence embedded into operational workflows. Some of the most prominent use cases of AI for fraud detection in different industries are:

Banking & Financial Services

Banks operate within real-time payment infrastructure and strict prudential regulation. AI in financial fraud detection is most mature in this sector.

Primary applications include:

- Real-time outbound payment fraud detection before settlement

- Behavioural monitoring for account takeover

- Suspicious transaction detection aligned with AML obligations

AI for fraud detection in Australia allows banks to reduce false positives while maintaining strong regulatory defensibility. Instead of broad transaction blocks, institutions can apply risk-weighted interventions.

Digital Payments & FinTech

Fintech platforms operate across API-driven ecosystems where transaction velocity and third-party integrations increase exposure. Static rule engines struggle to scale in these environments.

Common applications include:

- Real-time transaction risk scoring within payment gateways

- Merchant fraud monitoring across aggregated platforms

- BNPL misuse and synthetic identity detection

Fraud detection using AI enables fintechs to protect margin and liquidity without disrupting customer experience. Precision becomes a commercial advantage.

Insurance

Insurance fraud often materialises at claim stage rather than at payment initiation. Detection requires pattern analysis across documentation, identity signals, and behavioural inconsistencies.

Key use cases include:

- Claims anomaly detection based on historical loss patterns

- Document forgery and image inconsistency analysis

- Identity verification and risk profiling during claim processing

AI fraud detection software allows insurers to preserve payout speed while tightening fraud controls in high-value claim categories.

E-Commerce & Retail

Retail platforms operate at high transaction volumes where small fraud leakage compounds quickly. Excessive friction, however, directly impacts conversion rates.

AI in Australian eCommerce for fraud detection is typically applied in:

- Checkout transaction risk scoring

- Refund and return abuse monitoring

- Promotion and voucher misuse detection

AI in fraud detection for eCommerce helps retailers shift from blanket blocking strategies to contextual intervention models that protect both revenue and customer loyalty.

Government & Public Sector

Government agencies manage large-scale benefit disbursement and digital service platforms. Fraud exposure affects public funds and institutional credibility.

Primary applications include:

- Identity fraud detection in digital service portals

- Detection of duplicate or coordinated claims across cross-agency datasets

- Tax and grant fraud pattern identification

Fraud detection AI in public sector environments must prioritise explainability and audit readiness. Detection accuracy alone is insufficient without governance transparency.

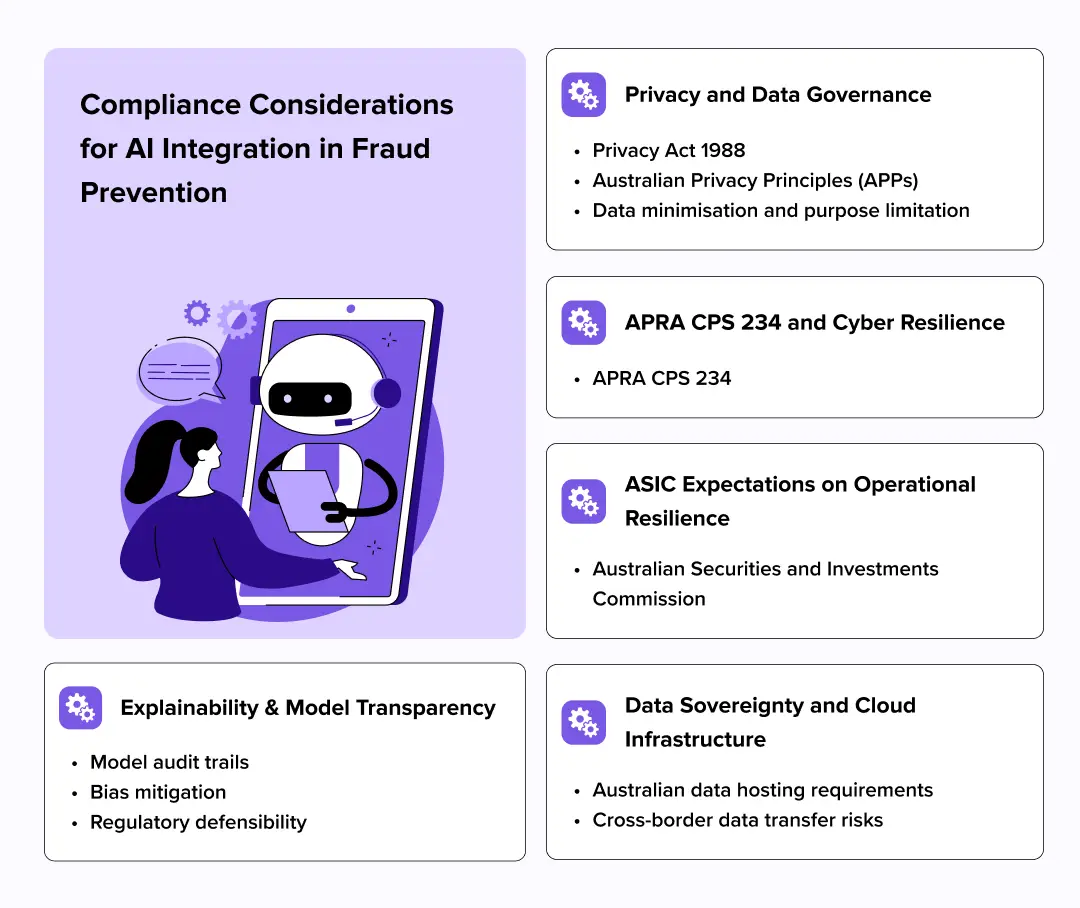

Compliance & Regulatory Considerations for AI Integration in Fraud Prevention

Compliance should not be an afterthought; it must be baked into the AI architecture. In the Australian enterprise landscape, the regulatory burden is significant.

Privacy and Data Governance: The Privacy Act 1988 and the Australian Privacy Principles (APPs) dictate how data is used to train models. Enterprises must ensure “data minimisation” – only using the data strictly necessary for fraud detection and maintaining transparency about how AI-driven decisions affect individuals.

APRA CPS 234 and Cyber Resilience: For financial institutions, APRA CPS 234 mandates that third-party vendors (like a custom software developer) must meet the same security standards as the bank itself. This includes robust vulnerability management and the ability to demonstrate that the AI fraud detection software in Australia is protected against “model poisoning” or adversarial attacks.

Explainability and ASIC Expectations: ASIC is increasingly focused on “algorithmic accountability.” If a customer is denied a service or their account is frozen by an AI, the firm must be able to explain the “why.” We solve this by implementing XAI (Explainable AI) frameworks that provide a human-readable audit trail of the logic used by the model.

Data Sovereignty: Many Australian boards now mandate that sensitive transactional data must reside on Australian soil. This impacts the choice of cloud provider and the architecture of the AI training pipeline.

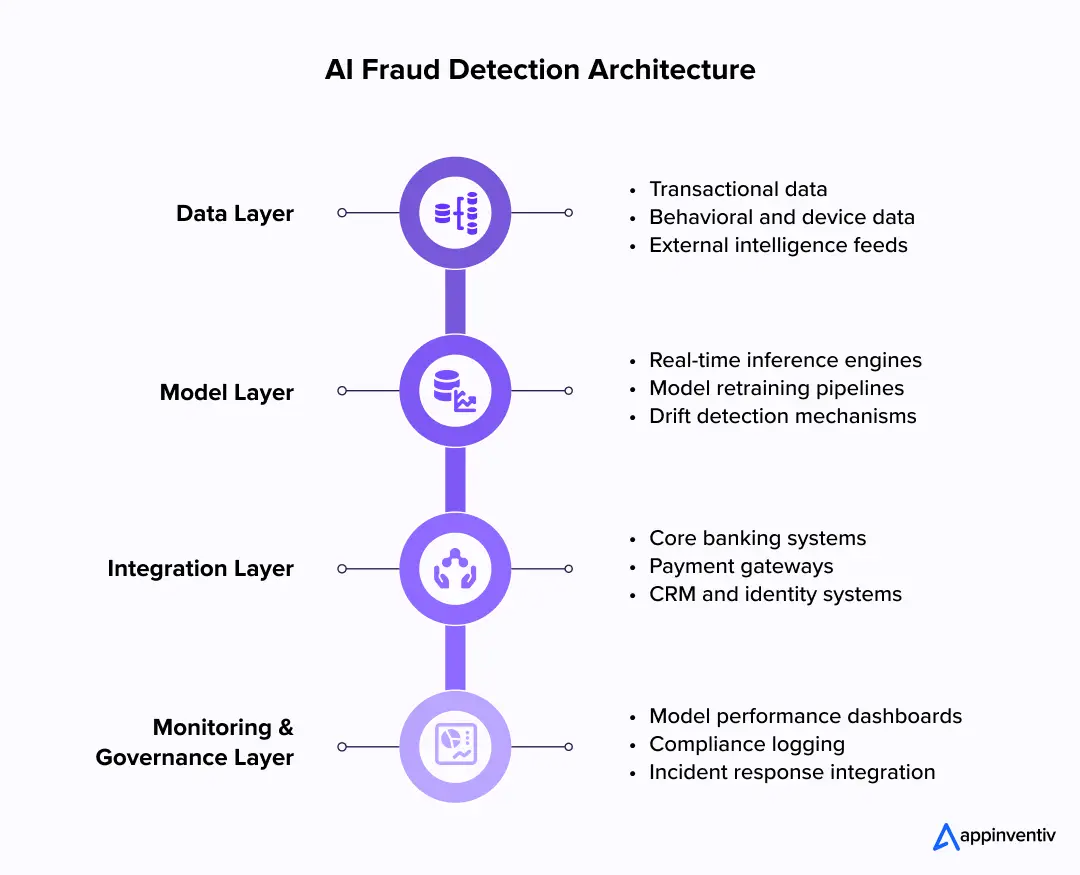

AI Fraud Detection Architecture: What Enterprises Need to Build

AI fraud detection in Australia requires layered architecture that integrates data ingestion, modelling, orchestration, and governance. Algorithm selection alone does not determine long-term success.

Effective architecture ensures scalability, resilience, and compliance alignment.

Data Layer

The data layer aggregates transactional and contextual inputs required for behavioural modelling. Model reliability is directly influenced by data quality and normalisation discipline.

This layer typically includes:

- Real-time transactional feeds from payment and core systems

- Behavioural and device-level telemetry

- External intelligence and sanctions data

Robust feature engineering and cleansing pipelines significantly improve the performance of AI-based fraud detection.

Model Layer

The model layer converts structured data into risk intelligence. It must operate within strict latency constraints in real-time environments.

Core components include:

- Real-time inference engines for transaction scoring

- Model retraining pipelines with version control

- Drift detection mechanisms to monitor performance degradation

Building an AI fraud detection strategy requires formal lifecycle governance covering validation, deployment, and periodic review.
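One way to implement the drift detection mentioned above is the Population Stability Index (PSI), which compares the distribution of model scores at training time with the distribution seen in production. The sketch below is one common formulation, not the only option. It assumes scores lie in [0, 1], and the sample data and the 0.25 alert level are assumptions based on a widely used rule of thumb.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of scores in [0, 1]."""

    def proportions(scores: list[float]) -> list[float]:
        counts = [0] * bins
        for score in scores:
            index = min(int(score * bins), bins - 1)  # a score of 1.0 goes in the last bin
            counts[index] += 1
        epsilon = 1e-6  # avoids log(0) for empty bins
        return [max(count / len(scores), epsilon) for count in counts]

    expected_share = proportions(expected)
    actual_share = proportions(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(expected_share, actual_share)
    )


# Hypothetical score samples: mostly low-risk at training time,
# then a visible shift toward high-risk scores in production.
baseline_scores = [0.05] * 80 + [0.95] * 20
recent_scores = [0.05] * 50 + [0.95] * 50

drift = psi(baseline_scores, recent_scores)
needs_review = drift > 0.25  # assumed alert level; tune to your own risk appetite
print(round(drift, 3), needs_review)
```

A PSI near zero means the score distribution is stable. A sustained high value is a reasonable trigger for the retraining pipeline rather than proof that the model is wrong.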

Integration Layer

Integration determines whether AI becomes embedded infrastructure or isolated analytics. Deep connectivity across enterprise systems is essential.

AI fraud detection software in Australia must integrate with:

- Core banking and ledger platforms

- Payment gateways and switching infrastructure

- CRM and identity management systems

Latency alignment with settlement cycles is critical. AI in fraud detection in Australia cannot rely solely on batch processing.

Monitoring and Governance Layer

Long-term sustainability depends on continuous oversight and performance visibility. Monitoring frameworks provide both operational and regulatory assurance.

This layer generally includes:

- Performance dashboards tracking precision, recall, and false positives

- Compliance logging capturing model decisions and overrides

- Incident response integration linking alerts to investigation workflows

The role of AI powered fraud detection extends beyond prevention. It supports governance reporting and executive risk visibility.
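The dashboard metrics listed above can be derived from a simple confusion matrix. The following sketch shows the arithmetic. The label convention (1 = fraud, 0 = legitimate) and the sample data are assumptions for illustration.

```python
def confusion_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Compute precision, recall and false positive rate for binary fraud labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # fraud correctly flagged
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # legitimate but flagged
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # fraud that was missed
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # legitimate and passed
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


# Hypothetical review sample: 3 confirmed fraud cases among 10 transactions.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

metrics = confusion_metrics(y_true, y_pred)
print({name: round(value, 3) for name, value in metrics.items()})
```

Precision tracks how much analyst time is spent on genuine fraud, recall tracks how much fraud is caught, and the false positive rate is the closest proxy for customer friction.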

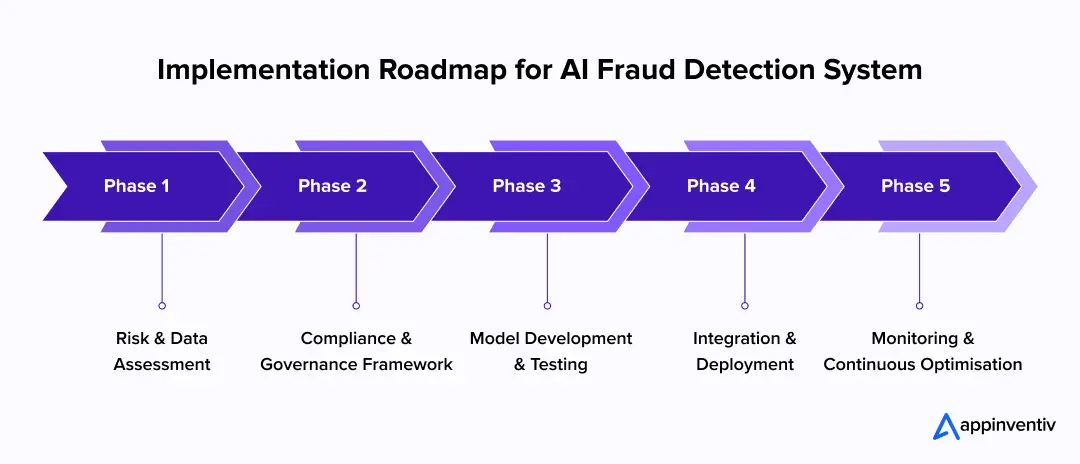

How to Deploy AI Fraud Detection Successfully

AI fraud detection should be approached as a structured transformation initiative rather than a model deployment exercise. Institutions that treat it as a technology add-on often struggle with integration, governance gaps, and performance drift.

A phased execution model reduces risk and improves long-term sustainability.

Risk & Data Assessment

Before model selection, leadership must understand where fraud exposure actually sits within the enterprise. Not all transaction flows carry equal risk.

This phase typically includes:

- Mapping fraud exposure points across payment and onboarding journeys

- Assessing historical fraud loss data and typologies

- Evaluating data completeness, quality, and accessibility

AI in fraud detection in Australia is only as strong as the underlying data foundation. Early assessment prevents downstream model instability.

Compliance & Governance Framework

Compliance considerations in AI based fraud detection must be embedded before production rollout. Governance cannot be retrofitted without operational disruption.

Core activities include:

- Defining regulatory alignment requirements across APRA, ASIC, and privacy obligations

- Establishing AI oversight committees or model risk governance structures

- Documenting explainability, bias testing, and validation standards

Building an AI fraud detection strategy without formal governance increases audit exposure.

Model Development & Testing

Model development should align with clearly defined fraud typologies and risk tolerance thresholds. Over-engineering without defined business objectives often leads to inflated false positives.

Key activities include:

- Training supervised models on historical labelled fraud data

- Validating precision, recall, and false positive impact

- Performing fairness and bias assessments

Fraud detection using AI requires iterative testing in controlled environments before full-scale deployment.

Integration & Deployment

AI for fraud detection in Australia must integrate seamlessly with transaction orchestration systems. Latency tolerance is minimal in real-time finance environments.

This stage typically involves:

- Embedding real-time inference engines within payment flows

- Integrating with CRM and case management platforms

- Establishing rollback and failover mechanisms

Deployment discipline ensures fraud detection enhances, rather than disrupts, customer experience.

Monitoring & Continuous Optimisation

Fraud typologies evolve. Static AI models degrade over time. Continuous oversight is therefore essential.

Ongoing controls should include:

- Monitoring model drift and performance degradation

- Periodic retraining using updated fraud datasets

- Reviewing false positive trends and customer friction metrics

AI powered fraud detection is a living system. Its value depends on disciplined lifecycle management.


Challenges in Implementing AI in Fraud Systems & Strategies to Address Them

AI fraud detection introduces architectural, governance, and operational complexity. Most implementation failures are not caused by weak algorithms but by structural gaps in data, integration, and oversight. The following issues surface consistently in enterprise deployments.

Data Quality and Siloed Systems

Challenge:

Many enterprises operate across fragmented core systems, legacy databases, and disconnected digital channels. Inconsistent data formats, missing fields, and limited cross-channel visibility weaken AI fraud detection models and increase false positives.

Solution:

Establish a unified fraud data layer before model deployment. Consolidate transactional, behavioural, and device signals into a governed data pipeline with clear lineage tracking and feature engineering standards.

Overfitting and Model Bias Risks

Challenge:

Models trained heavily on historical fraud patterns may perform well in testing but degrade in production. Overfitting reduces adaptability, while insufficient bias testing creates regulatory and reputational exposure.

Solution:

Implement structured validation protocols, including cross-validation, fairness testing, and periodic independent model reviews. AI in financial fraud detection must include drift monitoring and retraining triggers to remain adaptive.

Integration Complexity with Legacy Systems

Challenge:

Many Australian enterprises still operate legacy systems designed for batch processing. Real-time scoring engines can create latency or sequencing conflicts when inserted into existing transaction flows.

Solution:

Adopt API-led orchestration and introduce middleware where required to decouple scoring from core settlement systems. AI for fraud detection in Australia must respect transaction timing constraints rather than disrupt them.


Regulatory Compliance Gaps

Challenge:

High detection accuracy does not guarantee regulatory defensibility. Without documentation, audit trails, and defined accountability, automated decisions may become difficult to justify under review.

Solution:

Embed compliance into fraud detection at the design stage. Maintain structured documentation of model assumptions, logging mechanisms, and escalation pathways for disputed decisions.

Managing False Positives vs Customer Friction

Challenge:

Aggressive fraud thresholds may reduce loss but increase customer disruption. Repeated transaction declines or manual reviews erode trust and operational efficiency.

Solution:

Use contextual risk scoring instead of rigid approval or rejection logic. AI powered Fraud Detection should support graduated responses such as step-up authentication or temporary monitoring rather than immediate blocking.
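A graduated response policy of this kind can be sketched as a mapping from a risk score to an action. The cut-off values and action names below are hypothetical. In practice they would be set from loss data and friction metrics, and reviewed under the governance framework.

```python
def decide(risk_score: float, challenge_at: float = 0.6, block_at: float = 0.9) -> str:
    """Map a risk score in [0, 1] to a graduated action instead of approve/reject."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= block_at:
        return "block"       # highest risk: stop the payment
    if risk_score >= challenge_at:
        return "challenge"   # medium risk: step-up authentication
    return "accept"          # low risk: no added friction


for score in (0.12, 0.71, 0.95):
    print(score, decide(score))
# 0.12 accept
# 0.71 challenge
# 0.95 block
```

Keeping the thresholds as parameters makes it possible to tighten or relax the policy per channel or customer segment without retraining the underlying model.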

What is The Cost of Implementing AI Fraud Detection?

The cost of AI fraud detection implementation varies based on integration depth, compliance requirements, and system complexity. On average, the cost to implement an AI fraud detection system ranges between AUD 70,000 and AUD 700,000 or more.

Key cost drivers include:

- Data engineering and pipeline consolidation

- Model development, validation, and bias testing

- Real-time API integration and system orchestration

- Governance tooling and compliance documentation

- Ongoing monitoring and retraining infrastructure

AI fraud detection software development in Australia becomes more expensive when legacy systems require substantial refactoring.

Cost & Timeline Breakdown by Project Complexity

| Project Complexity | Estimated Cost (AUD) | Typical Timeline | Features for AI Detection System |
|---|---|---|---|
| Basic Projects | 70,000 – 150,000 | 3 – 5 months | Basic fraud detection, rule-based alerts, limited integration with a single payment channel |
| Moderate Complexity | 150,000 – 300,000 | 5 – 8 months | Limited integration points, focused payment fraud use case, moderate compliance requirements |
| Highly Advanced | 300,000 – 500,000 | 8 – 10 months | Multi-channel fraud detection, real-time scoring, integration with core systems |
| Enterprise-Scale Transformation | 500,000 – 700,000 | 10 – 12+ months | Cross-platform deployment, full governance framework, APRA-aligned controls |

Cost clarity improves when organisations define measurable fraud reduction targets and governance expectations early in the planning phase.

AI fraud detection in Australia should be treated as a risk infrastructure investment rather than a discretionary innovation spend.

Business Benefits of Using AI for Fraud Detection

AI in financial fraud detection delivers measurable financial and operational impact when deployed correctly. Industry studies suggest that the Commonwealth Bank of Australia (CBA), which serves over ten million customers, has made a strategic investment in AI security features. The reported results are significant: a 50% reduction in scam losses and a 30% drop in fraud reports.

Strategic benefits include:

- Reduced direct fraud losses

- Lower manual investigation costs

- Faster fraud response cycles

- Stronger compliance posture

- Improved customer trust and retention

The benefits of using AI for fraud detection extend beyond immediate loss prevention. They strengthen operational resilience and board-level risk visibility.

Questions Leaders Should Ask Before Implementing AI Fraud Detection

Executive oversight is critical in AI initiatives that influence customer access and liquidity flows. Leadership teams must ask disciplined questions before approving large-scale deployment.

Key questions include:

- Do we have the data quality required for AI fraud detection to deliver reliable outcomes?

- How will models remain explainable under regulatory scrutiny?

- Can our infrastructure support real-time inference within payment settlement windows?

- What governance framework oversees AI decision-making?

- How will we manage disputes arising from automated decisions?

These questions determine long-term defensibility more than model selection criteria.

Future Trends of AI Fraud Detection in an Era of Real-Time Finance

Australia’s financial ecosystem continues to move toward instant settlement and embedded finance models. Fraud networks are simultaneously adopting automation and generative tools. The key future trends in AI fraud detection are:

- Generative AI for fraud detection in Australia will increasingly be used for adversarial testing and scenario modelling.

- AI agents for fraud detection will automate investigation workflows and prioritise high-risk alerts.

- The role of AI powered fraud detection will expand from transaction screening to holistic risk orchestration across digital ecosystems.

- Institutions that embed compliance, resilience, and integration discipline into AI design will maintain a competitive and regulatory advantage.

In short, the future of fraud detection lies in collaborative intelligence. We expect to see more “federated learning,” where institutions share fraud patterns without sharing underlying sensitive customer data. This allows the entire Australian ecosystem to get smarter simultaneously.
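The aggregation step at the heart of federated learning can be sketched as a weighted average of model parameters, often called federated averaging. Each institution trains locally and shares only its weights and sample count, never raw customer records. The participants, weights, and counts below are invented for illustration, and a real deployment would add secure aggregation and privacy safeguards on top.

```python
def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Average model weights across participants, weighted by local sample count."""
    if not updates:
        raise ValueError("at least one update is required")
    total_samples = sum(count for _, count in updates)
    dimensions = len(updates[0][0])
    return [
        sum(weights[i] * count for weights, count in updates) / total_samples
        for i in range(dimensions)
    ]


# Hypothetical local model weights and training-set sizes from two institutions.
bank_a = ([0.2, 0.8], 100)
bank_b = ([0.6, 0.4], 300)

global_weights = federated_average([bank_a, bank_b])
print([round(w, 3) for w in global_weights])
```

The institution with more training data pulls the shared model further toward its own weights, while neither party sees the other's transactions.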

For the enterprise leader, the mandate is clear: the speed of your defence must match the speed of the attack.

How Appinventiv Helps Organisations Deploy AI Fraud Detection

AI fraud detection in Australia is only as effective as the engineering foundation it sits upon. We, as an experienced artificial intelligence development company in Australia, don’t just deploy algorithms; we architect resilient, long-term digital assets that solve the “operational tension” between security and user experience.

With over 10 years of experience in APAC delivery and 5+ agile delivery centers across Australia, we understand the nuances of the local market – from the high-speed requirements of the NPP to the strict data residency expectations of Australian boards.

Our presence on the QLD Government ICTSS Panels and our CMMI Level 3 certified processes ensure that every line of code we write is audit-ready and built for the high-stakes environment of Australian enterprise.

The Appinventiv advantage in Australia includes, but is not limited to:

- Localised Strategic Advisory: Our Australian staff brings deep business expertise to the table, helping CIOs and CTOs align their AI initiatives with ACSC Essential Eight guidance and APRA CPS 234 expectations.

- Proven Scale: We have successfully deployed 3000+ digital assets and 300+ AI powered solutions in Australia across 35+ industries, maintaining a 78% client retention rate by prioritising stability over hype.

- Measurable Business Impact: Our implementations typically drive a 35% efficiency gain in enterprise programs by reducing manual oversight and exception backlogs.

- Security First, Always: We maintain a 99.50% security compliance SLA (ISO, SOC2), ensuring your fraud detection infrastructure is as secure as the transactions it protects.

Whether you are a FinTech scaling across Sydney and Brisbane or a legacy insurer modernising a claims engine in Melbourne and Perth, we provide the technical foresight and FinTech software development services in Australia needed to transform fraud from a persistent business risk into a managed operational function.

FAQs

Q. What is AI fraud detection?

A. AI fraud detection is a technology-driven approach that uses machine learning, behavioural analytics, and pattern recognition to identify and block fraudulent activity in real time. Unlike traditional systems, it learns from new data to stay ahead of evolving threats.

Q. How does AI in fraud detection work?

A. The system ingests data from multiple sources – transactions, device IDs, IP addresses, and user behaviour. It compares these data points against historical patterns and a baseline of “normal” behaviour to assign a risk score to every event in milliseconds.

Key functional steps include:

- Data Aggregation: Consolidating structured transaction logs with unstructured behavioral metadata.

- Feature Engineering: Identifying specific variables (e.g., typing cadence, IP velocity) that correlate with high-risk events.

- Real-time Inference: Running active sessions through pre-trained models to generate an immediate “Accept, Challenge, or Block” decision.
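As an example of the feature engineering step, the sketch below computes a simple velocity feature: how many events one entity (an IP address, card, or account) produced within a trailing time window. The class name, window length, and timestamps are assumptions, and the code assumes events arrive in non-decreasing time order.

```python
from collections import deque


class VelocityCounter:
    """Count events seen within a trailing time window for one entity."""

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self.timestamps: deque[float] = deque()

    def add(self, timestamp: float) -> int:
        """Record an event and return the number of events inside the window."""
        self.timestamps.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Drop events that have aged out of the window.
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        return len(self.timestamps)


# Hypothetical login attempts from one IP address, in seconds since first sighting.
counter = VelocityCounter(window_seconds=60)
velocities = [counter.add(t) for t in (0, 10, 20, 100)]
print(velocities)  # [1, 2, 3, 1]
```

The resulting count would be fed to the model as one input among many. A sudden jump in velocity is a typical signal of credential stuffing or scripted payment attempts.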

Q. How can AI help catch fraud in banking?

A. In banking, AI monitors for AML red flags, suspicious payment velocity, and account takeover attempts. It can identify complex “layering” techniques used in money laundering that are often invisible to traditional rule-based filters. Specific applications include:

- KYC Verification: Using computer vision to spot document forgeries or deepfake artifacts during digital onboarding.

- Mule Detection: Identifying clusters of accounts that show coordinated, circular fund movements.

- Social Engineering Defense: Utilising NLP to detect “coaching” patterns in authorized push payment (APP) scams.

Q. How does AI prevent identity theft?

A. AI prevents identity theft by using behavioural biometrics and device fingerprinting. Even if a fraudster has a user’s legitimate credentials, the AI can detect that the way they navigate an app or the specific device profile they are using is inconsistent with the true owner’s established history.

Q. How does Appinventiv ensure compliance with Australian standards?

A. We align our delivery with the Privacy Act 1988, APRA CPS 234, and the ACSC Essential Eight. Our development centers follow ISO 27001 and ISO 9001 standards, ensuring all AI models are transparent, audit-ready, and compliant with Australian data sovereignty laws.
