- How Agentic RAG Works Inside Enterprise Environments

- Agentic RAG vs Traditional RAG vs Agentic AI: What Enterprises Should Know

- Benefits of Agentic RAG Implementation

- Enterprise-Grade Agentic RAG Architecture: What Production Systems Actually Require

- Real World Use Cases of Agentic RAG for Enterprises

- Implementation Challenges of Agentic RAG in Enterprises

- Implementation Frameworks and Development Strategy for Agentic RAG

- Platforms Supporting Enterprise Agentic RAG Deployments

- ROI Modeling for Agentic RAG Adoption

- What Does the Future of Agentic RAG in Enterprise Look Like?

- How Appinventiv Supports Enterprise Agentic RAG Implementation

- Frequently Asked Questions

- Agentic RAG improves decision accuracy while maintaining compliance, governance visibility, and enterprise data traceability.

- Enterprises deploying AI agents report strong ROI as operational efficiency and knowledge accessibility steadily improve.

- Hybrid retrieval plus agent reasoning enables scalable AI workflows across complex enterprise systems and datasets.

- Governance, observability, and security architecture determine whether enterprise AI moves beyond pilot to production.

- Agentic RAG adoption is accelerating as enterprises prioritize reliable AI for regulated, knowledge-intensive business operations.

Most teams hit a point where their early RAG deployment stops scaling the way they expected. The system answers questions well enough, yet once usage grows, cracks start to show. Someone usually notices it first during a pilot expansion. A compliance analyst asks a multi-part question, and the response comes back partially grounded, partially guessed. That is when the conversation shifts from “it works” to “can we trust it in production?”

If your organization is already running retrieval-augmented generation systems, you have probably seen some version of this. Static retrieval flows struggle with complex reasoning. Context windows get tight. Governance questions start piling up. And when multiple enterprise systems need to talk to each other, simple RAG pipelines begin to feel restrictive compared to agentic RAG approaches.

This is where agentic RAG implementation starts entering enterprise discussions. Not as a replacement for RAG, but as a way to make it operationally dependable. AI agents can plan retrieval steps, call tools, validate outputs, and keep context over longer workflows. The difference shows up most clearly when workflows become layered, regulated, or time-sensitive.

Many US enterprises are now shifting from proof-of-concept AI toward accountable AI systems. Reliability, auditability, and measurable ROI are driving enterprise agentic RAG decisions more than novelty. Agentic RAG fits into that transition because it focuses on execution, not just response generation.

The sections ahead break down how enterprises are implementing it, what architectural choices matter, where ROI typically comes from, and the challenges your team should anticipate before scaling.


How Agentic RAG Works Inside Enterprise Environments

Once agentic RAG implementation in enterprise workflows is in place, reliability becomes the real test. The question is no longer whether it answers, but whether it can reason, retrieve, and act consistently when systems, data, and decisions intersect.

Google Cloud’s ROI report notes that 52% of enterprises using GenAI already run AI agents in production, and 88% report positive ROI, which explains why reliability and consistency are getting more attention.

Operational Characteristics in Production

A quick look at how agentic AI in the enterprise with RAG behaves once deployed.

Key operational shifts:

- Multi-step reasoning loops: retrieve, evaluate, retrieve again

- Adaptive retrieval: agents choose data sources dynamically

- Tool orchestration: APIs, enterprise apps, automation triggers

- Persistent memory: context carries across sessions and workflows

| Capability | Traditional RAG Behavior | Agentic RAG Behavior |
|---|---|---|
| Retrieval flow | Single pass retrieval | Iterative, adaptive retrieval |
| System interaction | Limited integrations | Active tool orchestration |
| Context continuity | Session-bound | Persistent memory support |
| Workflow automation | Minimal | Cross-system execution |
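The "retrieve, evaluate, retrieve again" loop can be sketched in a few lines. This is a minimal illustration, not a production design: the corpus, the word-overlap scoring, and the function names (`retrieve`, `agentic_retrieve`) are all hypothetical, and a real agent would use an LLM to judge coverage and reformulate queries.

```python
import re

# Toy corpus standing in for an enterprise index (hypothetical content).
CORPUS = {
    "policy-7": "Refunds over 500 USD require manager approval.",
    "policy-9": "Manager approval requests are filed through the finance portal.",
    "faq-2": "Standard refunds are processed within five business days.",
}

def tokens(text: str) -> set[str]:
    """Lowercased alphanumeric words in a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, exclude: set[str], k: int = 1) -> list[str]:
    """Return up to k unseen doc IDs ranked by word overlap with the query."""
    q = tokens(query)
    scored = [
        (len(q & tokens(text)), doc_id)
        for doc_id, text in CORPUS.items()
        if doc_id not in exclude
    ]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:k]]

def agentic_retrieve(required_terms: list[str], max_rounds: int = 3):
    """Retrieve, check which required (lowercase) terms are still uncovered, retrieve again."""
    evidence: list[str] = []
    missing = set(required_terms)
    rounds = 0
    while missing and rounds < max_rounds:
        rounds += 1
        # Reformulate the query around whatever is still missing.
        hits = retrieve(" ".join(sorted(missing)), exclude=set(evidence))
        if not hits:
            break  # nothing new to find; stop rather than loop
        evidence.extend(hits)
        covered = set().union(*(tokens(CORPUS[d]) for d in evidence))
        missing = {t for t in required_terms if t not in covered}
    return evidence, missing, rounds

evidence, missing, rounds = agentic_retrieve(["refunds", "approval", "portal"])
```

A single-pass pipeline would stop after the first hit. Here the second round exists only because the evaluation step found an uncovered term, which is the behavioral difference the table describes.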

Enterprise Workflow Integration

Where agentic AI RAG fits in the existing enterprise infrastructure.

Typical integrations:

- Knowledge bases, CRM platforms, ERP systems, document repositories

- Event-driven triggers like document uploads or compliance alerts

- Automated cross-system decision flows that reduce manual handoffs

In practice, this often means AI becomes part of operational pipelines instead of sitting as a separate chatbot interface.

Validation and Reliability Layers

Enterprises keep outputs trustworthy using these common safeguards:

- Critic agents reviewing responses before delivery

- Evidence grounded in retrievable data sources

- Observability dashboards tracking accuracy and drift

These layers help maintain accountability while scaling AI use. That balance, automation with oversight, is usually what makes agentic AI with RAG viable in enterprise environments.
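As a rough sketch of the critic idea, the check below flags answer sentences that are not mostly covered by any retrieved passage. Lexical overlap is a deliberately crude stand-in chosen to keep the example self-contained; production critics typically rely on an LLM or an entailment model. The function name and the `min_overlap` threshold are assumptions for illustration.

```python
import re

def _tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def critic_review(answer: str, evidence: list[str], min_overlap: float = 0.6) -> dict:
    """Flag sentences whose words are not mostly covered by a single evidence passage."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    unsupported = []
    for sentence in sentences:
        words = _tokens(sentence)
        if not words:
            continue
        # Best coverage of this sentence by any one passage.
        best = max(
            (len(words & _tokens(passage)) / len(words) for passage in evidence),
            default=0.0,
        )
        if best < min_overlap:
            unsupported.append(sentence)
    return {"approved": not unsupported, "unsupported": unsupported}

evidence = ["Refunds over 500 USD require manager approval."]
draft = "Refunds over 500 USD require manager approval. Refunds are always issued in cash."
review = critic_review(draft, evidence)
# review["approved"] is False; the second sentence is returned as unsupported.
```

In a pipeline, an unapproved draft would be sent back for another retrieval round or routed to a human reviewer instead of being delivered.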


Agentic RAG vs Traditional RAG vs Agentic AI: What Enterprises Should Know

Most enterprise teams eventually compare these approaches side by side, not out of curiosity, but because production AI brings practical questions around reliability, governance, and scalability.

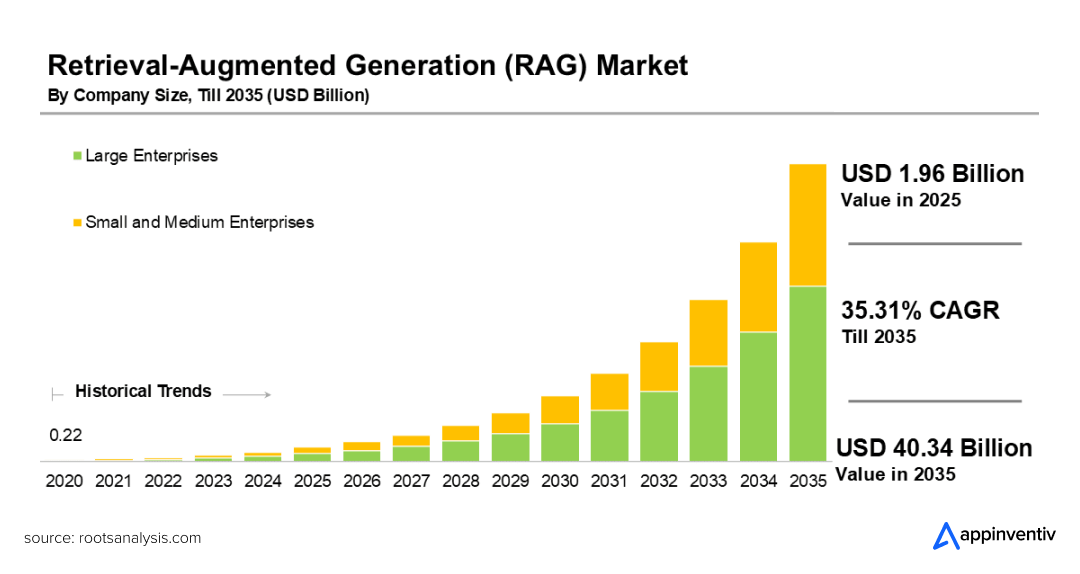

The RAG market is projected to reach $40.34 billion by 2035, with large enterprises leading adoption, so the conversation often shifts from “what works” to “what holds up under real operational pressure.”

Agentic RAG vs Traditional RAG: Where Traditional Systems Start Struggling

Traditional RAG performs well during pilots. Still, once data volume, workflows, and users increase, limitations begin to surface, and this is where agentic AI with RAG handles complexity better.

Common pressure points:

- Context limits: large knowledge bases exceed the retrieval scope

- Static retrieval logic struggles with layered queries

- Minimal orchestration across enterprise systems

- Workflow rigidity when decisions require multiple steps

Many teams notice this during cross-department adoption. Follow-up queries grow more complex, yet retrieval pipelines stay static.

RAG vs Agentic AI: Where Pure Agentic Systems Need Grounding

Agentic AI improves automation and reasoning. Without strong retrieval, accuracy and traceability can weaken, especially in regulated environments.

Typical concerns:

- Higher hallucination risk without grounded sources

- Inconsistent domain accuracy

- Compliance validation becomes harder

- Extra engineering needed for auditability

This approach can still work for internal automation. Even so, enterprise knowledge workflows usually require verifiable information.

Why Enterprises Are Choosing Agentic RAG Over RAG

This is where agentic AI RAG starts standing out. It blends structured retrieval with autonomous reasoning, which many enterprises see as a practical balance.

Operational advantages:

- Retrieval-backed reasoning improves reliability

- Better continuity across complex workflows

- Compliance-friendly outputs with evidence grounding

- Easier scalability across enterprise knowledge systems

When organizations evaluate agentic RAG vs RAG or agentic RAG vs traditional RAG, the decision often comes down to trust, traceability, and operational flexibility rather than model capability alone.

Strategic Comparison Snapshot

| Capability | Traditional RAG | Standalone Agentic AI | Agentic RAG |
|---|---|---|---|
| Knowledge grounding | Retrieval-based | Model-dependent | Retrieval plus agent reasoning |
| Context continuity | Limited | Moderate | Strong with memory layers |
| System orchestration | Minimal | Strong | Strong with grounded retrieval |
| Hallucination control | Moderate | Lower control | Higher control |
| Enterprise scalability | Moderate | Variable | High |
| Compliance readiness | Moderate | Lower without extra layers | Stronger with validation |

For enterprises comparing agentic RAG vs RAG or assessing agentic RAG vs traditional RAG, the direction is becoming clearer. Traditional RAG supports structured retrieval well. Pure agentic AI improves autonomy. Enterprise agentic RAG solutions combine both, which is why they are increasingly viewed as production-ready approaches for AI deployments.


Benefits of Agentic RAG Implementation

At some point, leadership stops asking how the AI works and starts asking what it improves. That shift usually happens after a pilot runs for a few months. Someone notices analysts finishing research faster, support teams escalating fewer issues, or compliance reviews taking less time. Those signals are where the real value discussion begins.

Decision Quality That Holds Up Under Pressure

Accuracy matters most when decisions carry risk. Agentic AI with RAG helps by grounding outputs in retrievable enterprise data while allowing multi-step reasoning.

Typical measurable improvements include:

- Higher response precision in knowledge-heavy workflows

- Fewer manual verification cycles

- Faster resolution of complex queries

This often translates into shorter decision timelines and fewer escalations.

Productivity Gains Without Workflow Disruption

Automation is not just replacing tasks. It often removes repetitive knowledge retrieval so teams can focus on judgment-driven work.

Common productivity indicators:

- Reduced research time for analysts

- Faster documentation synthesis

- Quicker response cycles in customer or internal support

Many enterprises measure agentic RAG impact as reduced decision latency across operational teams.

Cost Efficiency That Shows Up Operationally

Enterprise agentic RAG solutions do carry infrastructure and AI costs. Still, operational savings frequently offset them once deployments mature.

Areas where savings appear:

- Lower manual processing overhead

- Reduced duplicated research efforts

- Fewer compliance rework cycles

This typically leads to lower operational overhead over time.

Knowledge Accessibility Across Teams

Enterprise knowledge often sits scattered across systems. Agentic RAG improves discoverability without forcing major workflow changes.

Results organizations often track:

- Faster onboarding for new employees

- Improved cross-team collaboration

- More consistent knowledge access

Risk Mitigation and Compliance Stability

This benefit rarely shows immediately. It becomes visible during audits or regulatory reviews.

Key improvements include:

- Better traceability of AI-generated outputs

- Stronger documentation consistency

- Improved compliance response readiness

Together, these results explain the growing interest in agentic RAG. Its business impact tends to show up not as a single dramatic metric, but as steady improvements across decision speed, operational efficiency, and governance readiness.

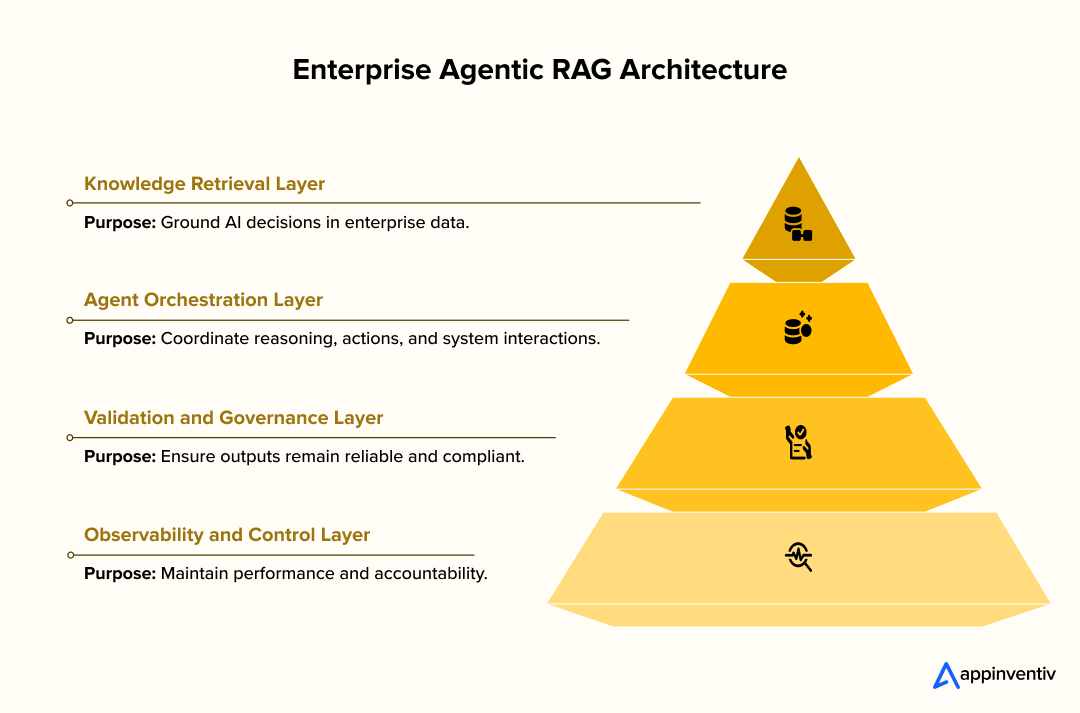

Enterprise-Grade Agentic RAG Architecture: What Production Systems Actually Require

Architecture decisions start becoming real once the pilot ends. Early prototypes often run on a single vector store and a basic orchestration script. That works for demos. It rarely holds up once enterprise data volume, compliance constraints, and latency expectations enter the picture. A production-grade agentic RAG setup usually evolves into a layered architecture with clear governance and observability baked in from the start.

Core Reference Architecture Components

Most enterprise-grade RAG architecture deployments end up organizing around these components because they directly influence scalability, reliability, and operational control.

Retrieval infrastructure

- Hybrid retrieval combining vector search, keyword search, and structured database queries

- Chunking strategies aligned with document semantics, not arbitrary token limits

- Metadata filtering for access control and contextual precision

- Index refresh pipelines to keep embeddings synchronized with live data
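One common way to combine vector and keyword results is reciprocal rank fusion (RRF), shown here together with a simple role-based metadata filter. RRF is one option among several and is not named in this article. The document IDs, the `allowed_roles` field, and both function names are hypothetical.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked ID lists; each list contributes 1 / (k + rank) per document."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

def filter_by_access(doc_ids: list[str], metadata: dict[str, dict], user_roles: set[str]) -> list[str]:
    """Keep only documents whose allowed roles intersect the caller's roles."""
    return [d for d in doc_ids if metadata[d]["allowed_roles"] & user_roles]

vector_hits = ["doc-a", "doc-b", "doc-c"]    # e.g. from a vector index
keyword_hits = ["doc-b", "doc-d", "doc-a"]   # e.g. from a keyword index
metadata = {
    "doc-a": {"allowed_roles": {"finance"}},
    "doc-b": {"allowed_roles": {"finance", "support"}},
    "doc-c": {"allowed_roles": {"support"}},
    "doc-d": {"allowed_roles": {"legal"}},
}

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
visible = filter_by_access(fused, metadata, user_roles={"support"})
```

The filter runs after fusion here only to keep the sketch short. In practice, access filters are usually pushed into the index query itself so restricted content is never retrieved in the first place.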

Agent orchestration layer

- Planner-executor agents coordinating multi-step retrieval and reasoning

- Function calling support for structured API interactions

- Memory layers supporting short-term conversational context and longer operational state

- Workflow routing logic based on query intent

Tool ecosystem

- API integrations with CRM, ERP, document repositories, and analytics systems

- Workflow automation triggers tied to enterprise events

- External knowledge connectors when regulated data boundaries are allowed

Validation and guardrails

- Critic agents verifying factual grounding before response delivery

- Hallucination detection heuristics and confidence scoring

- Policy enforcement layers for compliance-sensitive outputs

Observability stack

- Prompt and retrieval logging for auditability

- Response quality evaluation dashboards

- Latency and cost monitoring tied to usage patterns

Security, Compliance, and Governance Foundations

Security and governance decisions in enterprise-grade RAG architecture often decide whether an AI system stays a pilot experiment or becomes a production asset.

Key architectural safeguards include:

- Role-based access controls integrated with enterprise identity providers

- Encryption in transit and at rest for embeddings and retrieved data

- Prompt, response, and agent audit logging

- Data lineage tracking from ingestion to response generation, visible across workflows

- Compliance alignment with the frameworks relevant to your sector, such as SOC 2, GDPR, or HIPAA

Traceability becomes especially important during audits. Teams need visibility into which data influenced specific outputs.
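One way to make that visibility concrete is to write a structured audit record per interaction that links the output to the exact source chunks behind it. The sketch below is illustrative only: the field names are hypothetical, and storing hashes instead of raw prompt and response text is an assumed design choice for sensitive data, not a requirement.

```python
import hashlib
import json
from datetime import datetime, timezone

def sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def build_audit_record(user_id: str, prompt: str, response: str,
                       sources: list[dict], agent_steps: list[str]) -> str:
    """Serialize one interaction as a JSON line tying the output to its sources."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        # Hashes let auditors match stored text without logging it in the clear.
        "prompt_sha256": sha256(prompt),
        "response_sha256": sha256(response),
        "sources": sources,          # which document versions and chunks were used
        "agent_steps": agent_steps,  # ordered agent actions for this request
    }
    return json.dumps(record, sort_keys=True)

line = build_audit_record(
    user_id="u-17",
    prompt="What approval do large refunds need?",
    response="Refunds over 500 USD require manager approval.",
    sources=[{"doc_id": "policy-7", "version": 3, "chunk": 2}],
    agent_steps=["retrieve", "critic_review", "respond"],
)
```

Appending one such line per request to write-once storage gives auditors a way to answer "which data influenced this output" without replaying the workflow.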


Implementation Pipeline Overview

Implementation of the agentic RAG pipeline usually happens step by step, starting with data readiness and gradually moving toward full production orchestration.

Data ingestion strategy

- Source prioritization based on business impact

- Document normalization, deduplication, and metadata tagging

- Continuous ingestion pipelines for dynamic data environments
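The normalization, deduplication, and tagging steps above might look like the following in their simplest form. This is a sketch under assumptions: the input shape (`text`, `source`, optional `department`) and the `ingest` function are hypothetical, and real pipelines usually add near-duplicate detection, which exact hashing does not cover.

```python
import hashlib
import re

def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivially different copies hash the same."""
    return re.sub(r"\s+", " ", text).strip().lower()

def ingest(raw_docs: list[dict]) -> list[dict]:
    """Drop exact duplicates (after normalization) and attach metadata tags."""
    seen: set[str] = set()
    cleaned = []
    for doc in raw_docs:
        digest = hashlib.sha256(normalize(doc["text"]).encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # same content already ingested from another source
        seen.add(digest)
        cleaned.append({
            "text": doc["text"].strip(),
            "content_hash": digest,
            "metadata": {
                "source": doc["source"],
                "department": doc.get("department", "unknown"),
            },
        })
    return cleaned

docs = [
    {"text": "Refund  Policy v1", "source": "wiki"},
    {"text": "refund policy v1 ", "source": "sharepoint", "department": "finance"},
    {"text": "Travel policy", "source": "wiki"},
]
cleaned = ingest(docs)  # the second entry is dropped as a duplicate of the first
```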

Embedding lifecycle management

- Model selection aligned with domain vocabulary

- Periodic re-embedding when models or data change

- Versioning to support rollback and evaluation
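A lightweight way to decide what needs re-embedding is to store a content hash and the embedding model version next to each vector. The sketch below assumes a hypothetical `index_state` mapping kept alongside the vector store; the version labels are placeholders.

```python
import hashlib

def content_hash(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()

def needs_reembedding(documents: dict[str, str],
                      index_state: dict[str, dict],
                      model_version: str) -> list[str]:
    """Return IDs that are new, whose text changed, or whose vector came from another model version."""
    stale = []
    for doc_id, text in documents.items():
        entry = index_state.get(doc_id)
        if (
            entry is None
            or entry["model_version"] != model_version
            or entry["content_hash"] != content_hash(text)
        ):
            stale.append(doc_id)
    return stale

documents = {"a": "alpha", "b": "beta (revised)", "c": "gamma"}
index_state = {
    "a": {"content_hash": content_hash("alpha"), "model_version": "v2"},
    "b": {"content_hash": content_hash("beta"), "model_version": "v2"},
}

stale_now = needs_reembedding(documents, index_state, model_version="v2")
stale_after_upgrade = needs_reembedding(documents, index_state, model_version="v3")
```

Keeping the version in the index state is also what makes rollback possible: vectors from the previous model can be retained until evaluation confirms the new ones perform at least as well.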

Agent orchestration design

- Task decomposition strategies

- Tool selection policies

- Context retention boundaries to manage latency and cost

Production deployment

- Containerized inference infrastructure

- Scalable vector database clusters

- Continuous monitoring and evaluation loops

This structured approach helps ensure the agentic RAG framework supports reliability, scalability, and governance rather than just functionality.

Real World Use Cases of Agentic RAG for Enterprises

Where this actually shows up in production. Not theory, not prototypes, but enterprise workflows where retrieval plus agent reasoning is already shaping decisions.

Financial Services

Financial institutions are using retrieval-grounded AI to interpret regulations, analyze risk data, and support faster compliance decisions.

Example: Legal and regulatory research

Platforms like ROSS Intelligence use retrieval-augmented AI to search large legal corpora, summarize case law, and support legal strategy development. Lawyers use it to surface precedents faster and build arguments backed by verifiable sources.

Typical enterprise applications:

- Regulatory intelligence copilots

- Risk analysis assistants

- Compliance documentation automation

This is often where agentic RAG use cases gain early traction because auditability matters.


Healthcare and Life Sciences

Healthcare organizations are exploring agentic RAG use cases for clinical research, documentation, and decision support where accuracy and traceability are critical.

Example: Clinical knowledge retrieval

Clinical RAG frameworks are being evaluated using curated medical resources to answer clinical questions with stronger factual grounding compared to standard LLM outputs.

Healthcare deployments increasingly focus on:

- Clinical documentation support

- Evidence synthesis for care decisions

- Research knowledge retrieval

Documentation accuracy matters here. Even small errors can trigger billing or audit complications.


Retail and Commerce

Retail and commerce teams are combining customer intelligence with operational data to improve forecasting, personalization, and inventory planning.

Example: Amazon AI retail systems

AI-driven forecasting and recommendation systems help retailers optimize pricing, product placement, and demand estimation so customers see relevant products at the right time.

Common applications of agentic RAG in enterprises include:

- Demand intelligence agents

- Personalization copilots powered by agentic AI in customer service

- Inventory knowledge assistants

These deployments usually integrate CRM, supply chain, and analytics data sources.


Manufacturing and Industrial Operations

Manufacturers are pairing operational data with AI reasoning to monitor equipment health, optimize production workflows, and reduce downtime risk.

Example: Siemens predictive AI initiatives

Industrial AI systems analyze operational data to improve production planning and maintenance decisions, helping reduce downtime and improve efficiency.

Other frequent applications:

- Predictive maintenance copilots

- Technical documentation assistants

- Production optimization intelligence

This is where scaling agentic RAG with AI agents becomes operationally visible.

Enterprise Knowledge Automation

Many use cases of agentic RAG for enterprises involve deploying it internally to improve knowledge access, research workflows, and everyday operational decision-making.

Example: Enterprise documentation AI

Healthcare RAG deployments show how retrieval-backed AI can reduce documentation review time while improving contextual accuracy.

Broader enterprise uses include:

- Internal research copilots

- Policy intelligence assistants

- Knowledge base automation

Organizations usually adopt this first internally before customer-facing deployments.

Across industries, the most consistent pattern is this: agentic RAG becomes valuable where decisions depend on large, evolving knowledge sources. That is why agentic RAG use cases and applications continue expanding across regulated, data-intensive sectors.


Appinventiv partnered with MyExec to build an AI business consultant using a multi-agent RAG architecture. The platform analyzes business documents, generates contextual recommendations, and supports data-driven decisions for SMBs. The engagement covered end-to-end RAG AI development, orchestration design, and scalable deployment aligned with real business workflows.

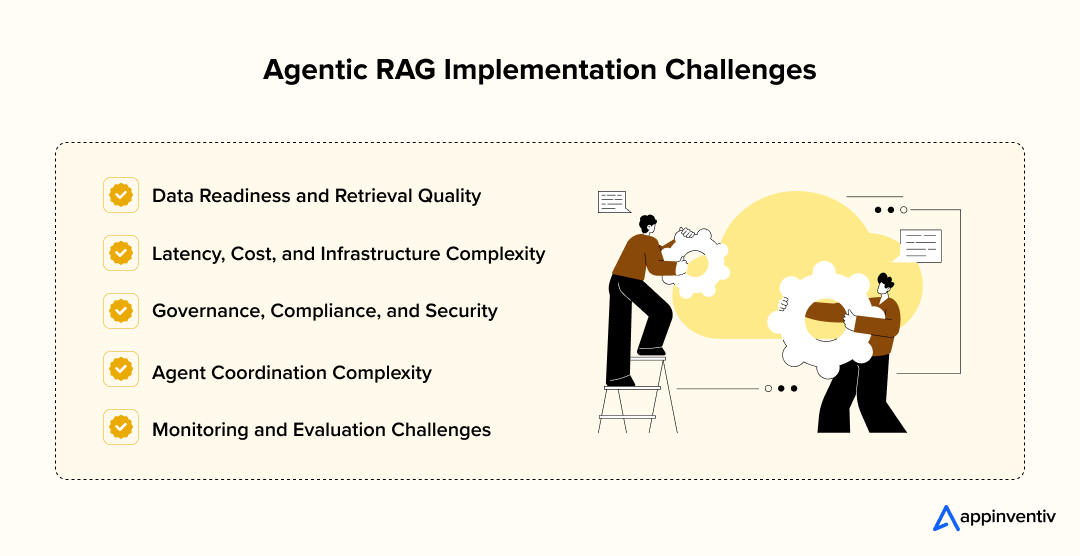

Implementation Challenges of Agentic RAG in Enterprises

Production deployments rarely fail because of the model alone. The bigger hurdles usually involve data readiness, infrastructure realities, governance, and operational oversight. Addressing these early keeps implementations stable as usage grows.

Data Readiness and Retrieval Quality

Reliable outputs depend heavily on how well enterprise data is structured, tagged, and maintained. Retrieval quality often reflects upstream data discipline more than model capability.

Mitigation strategies:

- Standardize metadata and document structure

- Use hybrid retrieval combining vector and keyword search

- Maintain regular embedding refresh cycles

- Establish clear data ingestion pipelines


Latency, Cost, and Infrastructure Complexity

Scaling RAG with AI agents introduces additional retrieval and reasoning steps. Without thoughtful infrastructure design, response latency and operational costs can increase.

Mitigation strategies:

- Implement retrieval caching and query routing

- Optimize indexing and storage architecture

- Use model tiering for cost control

- Monitor performance continuously
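Two of these mitigations, retrieval caching and model tiering, are easy to sketch. The example uses Python's standard `functools.lru_cache`; the retriever body, the tier names (`small`, `large`), and the 30-word routing threshold are placeholders chosen for illustration.

```python
from functools import lru_cache

CALLS = {"retriever": 0}  # counts real retrieval calls, for illustration only

def normalize(query: str) -> str:
    """Collapse case and whitespace so equivalent queries share a cache entry."""
    return " ".join(query.lower().split())

@lru_cache(maxsize=1024)
def cached_retrieve(normalized_query: str) -> tuple[str, ...]:
    """Stand-in for an expensive index lookup; results are cached per query."""
    CALLS["retriever"] += 1
    return (f"passage for: {normalized_query}",)

def pick_model_tier(query: str, needs_tools: bool) -> str:
    """Route short, tool-free questions to a cheaper tier (hypothetical policy)."""
    if needs_tools or len(query.split()) > 30:
        return "large"
    return "small"

first = cached_retrieve(normalize("Refund  Policy"))
second = cached_retrieve(normalize("refund policy"))  # served from cache
tier = pick_model_tier("What is the refund policy?", needs_tools=False)
```

Cached results need an invalidation strategy tied to index refreshes, otherwise the cache will serve passages from documents that have since changed.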

Governance, Compliance, and Security

Enterprise AI deployments must remain explainable, traceable, and secure. Governance requirements often shape architectural decisions as much as technical ones.

Mitigation strategies:

- Role-based access controls tied to identity systems

- Encryption for embeddings and data flows

- Audit logging for prompts and outputs

- Clear data retention policies

Agent Coordination Complexity

When multiple agents collaborate, orchestration discipline becomes essential to avoid conflicting actions or context drift.

Mitigation strategies:

- Define clear agent responsibilities

- Use structured orchestration frameworks

- Implement memory management controls

- Apply guardrails to enforce workflow discipline


Monitoring and Evaluation Challenges

Maintaining consistent performance requires ongoing visibility into retrieval accuracy, model behavior, and system drift.

Mitigation strategies:

- Continuous evaluation datasets

- Observability dashboards for performance tracking

- Retrieval quality metrics

- Human validation for sensitive workflows
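Retrieval quality metrics can start simple. The sketch below computes recall@k and mean reciprocal rank (MRR) over a small labeled evaluation set; the data is invented for illustration, and the `evaluate` helper is a hypothetical name.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0

def evaluate(eval_set: list[tuple[list[str], set[str]]], k: int = 3) -> dict:
    """Average both metrics over (retrieved, relevant) pairs."""
    n = len(eval_set)
    return {
        "recall_at_k": sum(recall_at_k(r, rel, k) for r, rel in eval_set) / n,
        "mrr": sum(reciprocal_rank(r, rel) for r, rel in eval_set) / n,
    }

eval_set = [
    (["d1", "d4", "d2"], {"d2", "d3"}),  # one of two relevant docs found, at rank 3
    (["d7", "d5"], {"d7"}),              # the only relevant doc found at rank 1
]
metrics = evaluate(eval_set, k=3)
# recall_at_k is 0.75 and mrr is about 0.667 for this set
```

Tracking these numbers on a fixed evaluation set after every index refresh or model change is a cheap way to catch drift before users do.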

Proactively managing these challenges of agentic RAG in enterprises helps maintain reliability as deployments scale.

Implementation Frameworks and Development Strategy for Agentic RAG

Most enterprises figure out agentic RAG implementation after an initial pilot. The model works, the retrieval works, yet scaling it across teams needs a clearer build strategy. How you structure agents, development phases, and platform choices often determines whether the system stays experimental or becomes operational.

Agent Framework Patterns

The framework you choose for agentic RAG development shapes how reasoning flows, how retrieval happens, and how reliably the system executes tasks.

Common architectural patterns:

- ReAct pattern: Interleaves reasoning with retrieval steps. Useful when queries require iterative exploration.

- Plan-and-execute agents: Separate planning from execution. Works better for structured enterprise workflows.

- Multi-agent orchestration: Dedicated agents handle retrieval, validation, and actions. Supports scalability and modular governance.

| Framework Pattern | Best Fit Scenario | Operational Advantage |
|---|---|---|
| ReAct | Research-heavy workflows | Iterative reasoning accuracy |
| Plan-and-execute | Structured business processes | Controlled task execution |
| Multi-agent orchestration | Complex enterprise systems | Scalability and modularity |
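To make the plan-and-execute pattern more concrete, here is a stripped-down sketch in which planning and execution are separate functions. Everything in it is hypothetical: the two tools, the hard-coded planner (a real system would ask an LLM to produce the step list), and the `$prev` placeholder convention.

```python
from typing import Callable

# Hypothetical tools; real ones would wrap retrieval indexes or enterprise APIs.
def search_policies(arg: str) -> str:
    return f"policy text about {arg}"

def summarize(arg: str) -> str:
    return f"summary({arg})"

TOOLS: dict[str, Callable[[str], str]] = {
    "search_policies": search_policies,
    "summarize": summarize,
}

def plan(task: str) -> list[tuple[str, str]]:
    """Stand-in planner: returns a fixed two-step plan for any task."""
    return [("search_policies", task), ("summarize", "$prev")]

def execute(steps: list[tuple[str, str]]) -> list[str]:
    """Run steps in order, substituting '$prev' with the previous step's output."""
    outputs: list[str] = []
    for tool_name, arg in steps:
        if tool_name not in TOOLS:
            raise ValueError(f"unknown tool: {tool_name}")
        if arg == "$prev":
            if not outputs:
                raise ValueError("'$prev' used before any step has run")
            arg = outputs[-1]
        outputs.append(TOOLS[tool_name](arg))
    return outputs

results = execute(plan("travel refunds"))
```

Because the full plan exists before anything runs, it can be logged, validated against a tool allow-list, or reviewed by a human, which is part of why this pattern suits structured business processes.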

How to Build an Agentic RAG System?

Building an agentic RAG system successfully usually follows a phased rollout. Jumping straight to production rarely works.

Typical progression

- Discovery phase: Identify priority workflows, sensitive data boundaries, and success metrics.

- Pilot stage: Validate retrieval precision, orchestration logic, and governance alignment.

- Production deployment: Expand integrations, monitoring, and operational automation.

This lifecycle keeps adoption controlled while allowing continuous improvement.

Buy vs Build vs Partner Strategy

Enterprises rarely face a simple build-or-buy decision anymore. Partner-led implementation has become a third viable path.

| Strategy | When It Fits Best | Key Advantages | Considerations |
|---|---|---|---|
| Build | Strong internal AI capability | Full control, customization, governance | Higher time and resource investment |
| Buy | Need rapid deployment | Faster rollout, lower engineering overhead | Less flexibility, vendor dependency |
| Partner | Balanced expertise needs | Domain expertise plus faster scaling | Requires alignment on governance and ownership |

Many enterprises combine these approaches. Managed AI infrastructure may be bought, orchestration layers built internally, and specialized implementation partners brought in for scaling. That blended strategy often works best when planning how to build an agentic RAG system at enterprise scale.

Appinventiv’s Note From the Implementation Floor

During one enterprise deployment, the biggest hurdle was not retrieval accuracy, but context drift across multi-step workflows. Agent responses were technically correct, yet lost business context over time. Introducing a critic agent plus structured memory checkpoints stabilized outputs, reduced verification overhead, and improved stakeholder trust in production AI decisions.

Structured frameworks help enterprises transition from experiments toward reliable, scalable AI deployment.

Platforms Supporting Enterprise Agentic RAG Deployments

Once architecture decisions for enterprise agentic RAG solutions become clearer, platform selection usually follows. Most enterprises assemble a layered stack rather than relying on a single vendor. Governance maturity, integration depth, and scalability typically matter more than model performance alone.

Top Platforms Supporting Agentic RAG Architecture

Agentic RAG deployments typically rely on multiple technology layers working together. Each platform category plays a distinct role in ensuring retrieval accuracy, orchestration stability, and enterprise-grade scalability.

| Platform Layer | Role in Agentic RAG | Typical Enterprise Options |
|---|---|---|
| LLM providers | Reasoning, generation, tool calling | OpenAI, Anthropic, Cohere, Google |
| Vector databases | Semantic retrieval and indexing | Pinecone, Weaviate, Milvus, Redis |
| Agent orchestration platforms | Workflow coordination and tool integration | LangChain, LlamaIndex, Semantic Kernel |
| Cloud AI ecosystems | Infrastructure, security, scaling | AWS, Azure AI, Google Cloud Vertex AI |

How Enterprises Typically Choose

Platform selection usually reflects operational priorities rather than technical preference alone. Integration fit, compliance readiness, and long-term scalability often influence decisions more than individual platform features.

Platform evaluation often comes down to:

- Data residency and compliance requirements

- Compatibility with existing enterprise systems

- Latency, cost, and scaling expectations

- Observability and governance controls

Some organizations prioritize flexibility with open frameworks. Others lean toward managed ecosystems for faster deployment and operational support.

These combinations increasingly represent the top platforms supporting agentic RAG architecture in production environments. The most stable enterprise setups usually combine reliable LLM providers, scalable retrieval infrastructure, orchestration frameworks, and cloud-native governance controls rather than depending on a single platform.

ROI Modeling for Agentic RAG Adoption

This is usually where the tone around enterprise agentic RAG implementation changes. Early demos spark interest, but budget discussions follow quickly. Someone from finance asks what the rollout actually costs, how fast teams benefit, and whether the gains hold up after the initial excitement fades. That is where a grounded ROI model becomes useful.

Cost Components

Most enterprise agentic RAG projects land somewhere between $50K and $500K. The variation often has less to do with the model and more to do with integration depth, data readiness, and governance requirements.

| Cost Area | What It Covers | Typical Cost Range |
|---|---|---|
| Infrastructure | LLM usage, vector storage, cloud compute | $15K to $150K |
| Engineering | Data cleanup, orchestration setup, integrations | $20K to $250K |
| Operations | Monitoring, optimization, governance controls | $10K to $100K |

Costs often increase when legacy systems are involved or when compliance reviews require additional safeguards.


ROI Calculation Framework

Most teams start with simple comparisons rather than complex financial modeling.

Example scenario:

A support operations group spends about $40K annually on internal research and documentation reviews. After deploying an agentic RAG assistant, research time drops roughly 25 to 30 percent. That usually translates to about $10K to $12K yearly savings.

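If implementation costs around $100K, this one group's savings alone would take roughly eight to ten years to recover it. A payback of two to three years is realistic only when several teams see comparable gains, and it still depends on adoption and usage consistency.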

Common evaluation steps:

- Capture baseline operational cost and workflow duration

- Measure efficiency gains after deployment

- Include infrastructure and maintenance costs

- Consider risk reduction or compliance improvements where applicable
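A simple payback calculation makes these steps tangible and shows how sensitive the timeline is to how many teams benefit. The sketch plugs in the scenario's figures ($40K baseline, 25 to 30 percent gain, $100K implementation). The four-team case is an illustrative assumption, not a figure from this article, and run costs are set to zero only to keep the arithmetic visible.

```python
def annual_savings(baseline_cost: float, efficiency_gain: float) -> float:
    """Yearly savings from cutting a baseline cost by a fractional gain."""
    return baseline_cost * efficiency_gain

def payback_years(implementation_cost: float,
                  yearly_savings: float,
                  yearly_run_cost: float = 0.0) -> float:
    """Years to recover the implementation cost from net yearly savings."""
    net = yearly_savings - yearly_run_cost
    if net <= 0:
        return float("inf")  # the project never pays back
    return implementation_cost / net

low = annual_savings(40_000, 0.25)   # one group, 25 percent gain
high = annual_savings(40_000, 0.30)  # one group, 30 percent gain

one_group_slow = payback_years(100_000, low)
one_group_fast = payback_years(100_000, high)

# Assumption for illustration: four comparable groups, each at the 25 percent gain.
four_groups = payback_years(100_000, 4 * low)

print(round(one_group_slow, 1), round(one_group_fast, 1), round(four_groups, 1))
```

One group saving $10K to $12K a year needs roughly eight to ten years to recover $100K, while savings on the order of $40K a year bring payback to about two and a half years. Adding a non-zero `yearly_run_cost` for infrastructure and maintenance lengthens every figure.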

Traditional RAG vs Agentic RAG ROI Snapshot

| Metric | Traditional RAG | Agentic RAG |
|---|---|---|
| Accuracy | Moderate | Higher with validation |
| Hallucination Risk | Higher | Reduced via grounding |
| Automation Scope | Limited | Multi-step workflows |
| Governance Readiness | Added later | Built into architecture |
| Long-Term Efficiency | Variable | Improves with scale |

Enterprises typically prioritize reliability and governance stability over short-term deployment speed.

Typical ROI Impact Areas

Returns tend to accumulate gradually rather than appearing overnight.

- Faster internal research cycles

- Reduced manual documentation effort

- More consistent compliance responses

- Less duplicated knowledge work across teams

Enterprises that treat agentic RAG as operational infrastructure, not just a quick automation project, usually see steadier long-term value.


What Does the Future of Agentic RAG in Enterprise Look Like?

Many enterprises are still early in adoption. Pilots are expanding, but the focus is shifting from experimentation to reliable workflows that support daily decisions.

Autonomous Enterprise Agents

Teams are testing AI-powered agentic RAG enterprise solutions that track workflows, surface insights, and sometimes trigger next steps. It usually starts with internal research or reporting tasks before expanding.

Multimodal Retrieval

Agentic RAG increasingly handles more than text. Enterprise data includes images, dashboards, transcripts, and structured records, and these are becoming part of retrieval pipelines, especially in healthcare, retail, and industrial settings.

Governance Becoming Central

Security, auditability, and compliance are now part of initial planning rather than post-deployment checks. That shift is shaping adoption speed.

Agent Ecosystems Emerging

Organizations are moving toward agentic RAG setups in which multiple specialized agents work together instead of one large assistant. This gradual shift reflects where the future of agentic RAG in enterprise environments appears to be heading.


Future-ready AI investments require architecture clarity, governance alignment, and ROI focus.

How Appinventiv Supports Enterprise Agentic RAG Implementation

Enterprises exploring enterprise agentic RAG solutions usually need more than model access. Architecture, integrations, governance, and production readiness all matter. As a RAG development company, Appinventiv works with enterprises to design systems that move beyond pilots into dependable operational AI.

Support typically spans the full lifecycle:

- Architectural design aligned with enterprise data ecosystems

- Integration with CRMs, ERPs, knowledge bases, and APIs

- Compliance-aware development with governance controls built in

- Production deployment, monitoring, and scaling support

Experience snapshot:

| Capability | Numbers |
|---|---|
| Autonomous AI agents deployed | 100+ |
| AI engineers and data scientists | 200+ |
| Custom AI models deployed | 150+ |
| Industries served | 35+ |

Business impact observed:

- Manual process reduction by around 50%

- Agent task accuracy exceeding 90%

- Scalability improvements up to 2x

Organizations looking to operationalize AI responsibly often combine internal teams with external expertise. Appinventiv provides structured AI application development services to help enterprises deploy and scale agentic RAG systems with greater confidence.

Frequently Asked Questions

Q. How does agentic RAG differ from traditional RAG?

A. Traditional RAG usually retrieves information once, then generates a response. Agentic RAG goes further. It can plan retrieval steps, re-check data, call tools, and refine answers. That makes it better suited for complex enterprise workflows where accuracy, context continuity, and explainability matter more than quick responses.
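The difference can be sketched with a toy example. The code below contrasts a single retrieval pass with a loop that checks what the evidence still lacks and queries again. The corpus, the keyword-overlap retriever, and the `required_terms` coverage check are all assumptions made for illustration; a real system would use a vector store and an LLM-based grader.

```python
import re
from typing import List, Set

# Toy corpus standing in for an enterprise knowledge base (hypothetical data).
CORPUS = [
    "Refund requests above 500 USD need manager approval.",
    "Data retention policy: customer records are kept for seven years.",
    "Manager approval is recorded in the finance ticketing tool.",
]


def tokenize(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, k: int = 1) -> List[str]:
    """Return the top-k documents ranked by shared words with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(doc)), doc) for doc in CORPUS]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [doc for _, doc in ranked[:k]]


def traditional_rag(query: str) -> List[str]:
    """Single pass: retrieve once and hand the result to generation."""
    return retrieve(query)


def agentic_rag(query: str, required_terms: List[str], max_steps: int = 3) -> List[str]:
    """Retrieve, check coverage, then re-query for whatever is still missing."""
    evidence: List[str] = []
    pending = query
    for _ in range(max_steps):
        for doc in retrieve(pending):
            if doc not in evidence:
                evidence.append(doc)
        covered = tokenize(" ".join(evidence))
        missing = [t for t in required_terms if t.lower() not in covered]
        if not missing:
            break
        pending = " ".join(missing)  # refine the query toward the gaps
    return evidence


if __name__ == "__main__":
    question = "refund approval and retention rules"
    print(traditional_rag(question))
    print(agentic_rag(question, ["refund", "retention"]))
```

With this toy data, the single pass returns only the refund document, while the loop notices that retention is not covered and retrieves the second document on its next step.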

Q. How does Appinventiv implement enterprise-grade agentic RAG systems?

A. Implementation typically starts with data assessment and architecture planning. Appinventiv focuses on integrating enterprise systems, building retrieval pipelines, adding governance controls, and supporting production deployment. The goal is reliability and scalability, not just functionality, so systems can operate consistently within enterprise workflows and compliance expectations.

Q. How does answer generation and post-processing work in an agentic RAG system?

A. Answer generation usually begins with chunk retrieval and context building. Question rewriting helps the system interpret follow-up questions within a conversation, and a reranking step orders the retrieved chunks by relevance. A grading decision then determines whether the retrieved context is relevant enough to use. During agent execution, a state structure maintains contextual continuity across these steps. Finally, an aggregation prompt helps the large language model assemble an accurate, grounded response.
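A minimal sketch of how these pieces might fit together is shown below. The `AgentState` fields, the filler-word rewrite, and the overlap-based reranking and grading are hypothetical stand-ins chosen so the example runs without any model; in practice each step would typically call an LLM or a dedicated reranker, and the step order may differ.

```python
import re
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class AgentState:
    """Carries context between steps so later steps see earlier results."""
    question: str
    rewritten: str = ""
    chunks: List[str] = field(default_factory=list)
    graded: List[str] = field(default_factory=list)
    prompt: str = ""


def tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def rewrite_question(state: AgentState) -> AgentState:
    # Stand-in rewrite: drop filler words. A real system would ask an LLM.
    filler = {"please", "can", "you", "tell", "me", "the", "what", "is", "our"}
    words = re.findall(r"[a-z0-9]+", state.question.lower())
    state.rewritten = " ".join(w for w in words if w not in filler)
    return state


def rerank(state: AgentState, candidates: List[str]) -> AgentState:
    # Order candidate chunks by word overlap with the rewritten question.
    q = tokens(state.rewritten)
    state.chunks = sorted(candidates, key=lambda c: len(q & tokens(c)), reverse=True)
    return state


def grade(state: AgentState, min_overlap: int = 1) -> AgentState:
    # Grading decision: keep only chunks that meet the overlap threshold.
    q = tokens(state.rewritten)
    state.graded = [c for c in state.chunks if len(q & tokens(c)) >= min_overlap]
    return state


def build_aggregation_prompt(state: AgentState) -> AgentState:
    # Assemble the prompt that would be sent to the LLM for the final answer.
    sources = "\n".join(f"[{i + 1}] {c}" for i, c in enumerate(state.graded))
    state.prompt = (
        "Answer using only the numbered sources.\n"
        f"Question: {state.question}\nSources:\n{sources}"
    )
    return state


if __name__ == "__main__":
    retrieved = [
        "Office plants are watered on Fridays.",
        "The retention period for invoices is seven years.",
        "Invoices are archived in the finance system.",
    ]
    state = AgentState(question="Please tell me the retention period for invoices")
    state = build_aggregation_prompt(grade(rerank(rewrite_question(state), retrieved)))
    print(state.prompt)
```

Passing one state object through every step is what lets the final prompt cite only the chunks that survived grading.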

Q. What system setup is required before implementing an agentic RAG application?

A. Setting up an agentic RAG application typically involves configuring the environment, installing required dependencies, and managing packages listed in requirements.txt. Teams often organize data in a docs/ folder within a project repository. Tools like LangChain help connect core components, including LLM provider configuration, embedding models, a chat interface, and testing workflows through a notebook environment.

Q. How does document preparation and indexing work in an agentic RAG system?

A. Document preparation typically starts with a chunking strategy, such as parent/child splitting or LangChain's RecursiveCharacterTextSplitter and MarkdownHeaderTextSplitter. Tools such as LlamaIndex support hierarchical document indexing, which can improve retrieval accuracy. The quality of the embedding model determines how reliable the vector embeddings stored in the vector database are. Platforms like Amazon Bedrock Knowledge Bases and Amazon OpenSearch Serverless support indexing with semantic or hybrid search.
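The parent/child idea can be sketched without any framework: match the query against small child chunks, then return the larger parent section so the model gets fuller context. The paragraph-based parents, the six-word child windows, and the word-overlap scoring below are assumptions for illustration, not how LangChain or LlamaIndex implement it.

```python
import re
from typing import List, Set, Tuple


def tokenize(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def split_parent_child(
    text: str, child_words: int = 6
) -> Tuple[List[str], List[Tuple[int, str]]]:
    """Split on blank lines into parents, then into fixed-size word windows."""
    parents = [p.strip() for p in text.split("\n\n") if p.strip()]
    children: List[Tuple[int, str]] = []
    for parent_id, parent in enumerate(parents):
        words = parent.split()
        for start in range(0, len(words), child_words):
            children.append((parent_id, " ".join(words[start:start + child_words])))
    return parents, children


def retrieve_parent(
    query: str, parents: List[str], children: List[Tuple[int, str]]
) -> str:
    """Score the small child chunks, but return the full parent text."""
    q = tokenize(query)
    best_id, best_score = -1, 0
    for parent_id, chunk in children:
        score = len(q & tokenize(chunk))
        if score > best_score:
            best_id, best_score = parent_id, score
    return parents[best_id] if best_id >= 0 else ""


if __name__ == "__main__":
    document = (
        "Refund policy. Refunds above 500 USD need manager approval within five days.\n\n"
        "Retention policy. Customer records are kept for seven years after contract end."
    )
    parents, children = split_parent_child(document)
    print(retrieve_parent("seven years", parents, children))
```

Small children make matching precise, while returning the parent avoids handing the model a fragment that starts mid-sentence.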

Q. Is agentic RAG necessary for production-grade enterprise AI?

A. Not always, but it becomes useful when workflows involve complex decisions, multiple data sources, or compliance requirements. Basic RAG may handle simpler tasks. As enterprise usage grows, agentic capabilities often help maintain accuracy, traceability, and operational stability across larger deployments.

Q. What types of data can agentic RAG systems access?

A. Most deployments handle structured databases, documents, knowledge bases, APIs, and internal enterprise applications. Some systems also retrieve images, transcripts, or analytics dashboards. Access usually depends on governance policies, integration scope, and security controls defined during implementation.

Q. What makes agentic RAG different from a chatbot?

A. A chatbot typically responds conversationally using predefined logic or model outputs. Agentic RAG can retrieve enterprise data, reason through tasks, validate responses, and interact with business systems. That makes it more of a workflow assistant than a simple conversational interface.

Q. How does agentic RAG enhance user experience?

A. Users usually notice faster information access, fewer repetitive searches, and more context-aware responses. Instead of jumping between tools, they can get grounded insights in one place. Over time, this tends to streamline research, reporting, and decision-support workflows.

Q. What industries benefit most from agentic RAG?

A. Industries with large knowledge bases and regulatory requirements see the most value. Financial services, healthcare, retail, manufacturing, and enterprise SaaS are common examples. These sectors often rely on accurate, traceable information to support operational decisions and compliance obligations.
