- Internal Readiness: What Enterprises Must Clarify Before Hiring an AI Development Partner

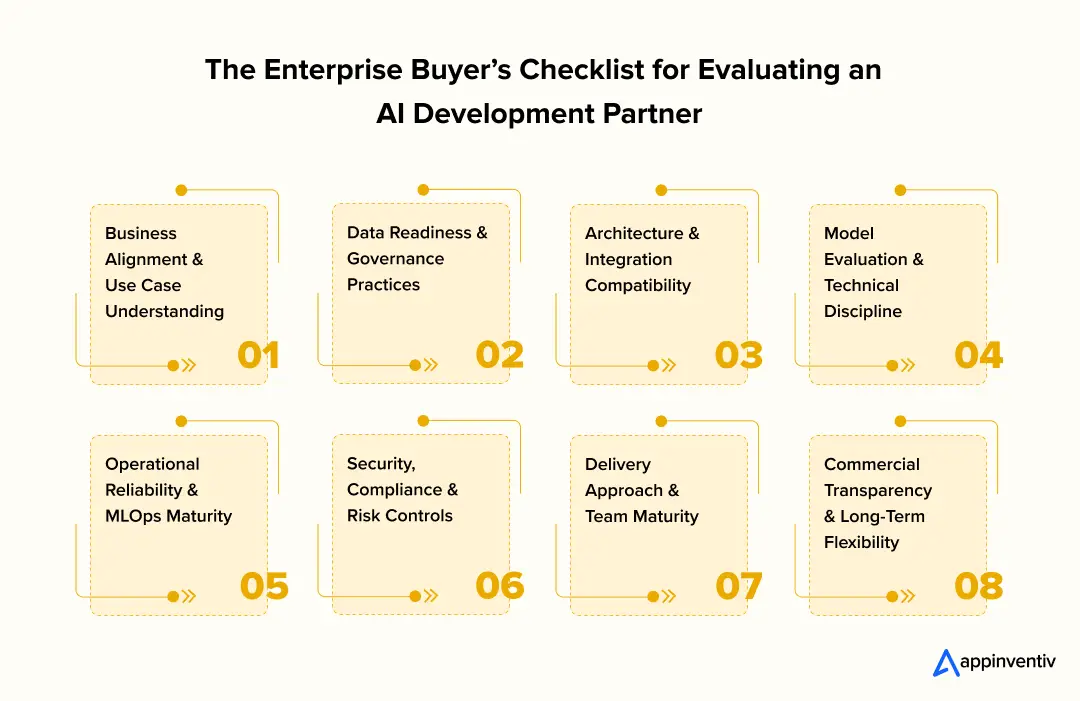

- The Enterprise Buyer’s Checklist for Evaluating an Enterprise AI Partner

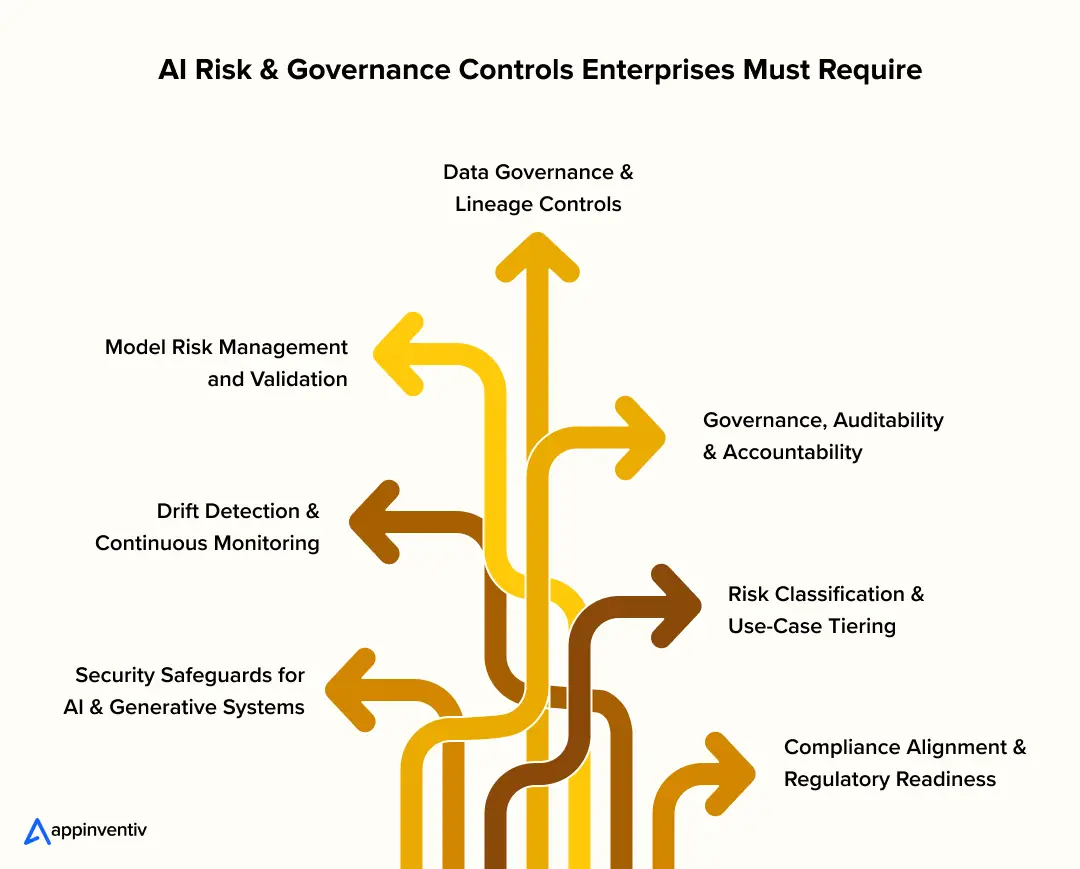

- AI Risk & Governance Controls Enterprises Must Require

- Choosing the Right AI Partner with Confidence

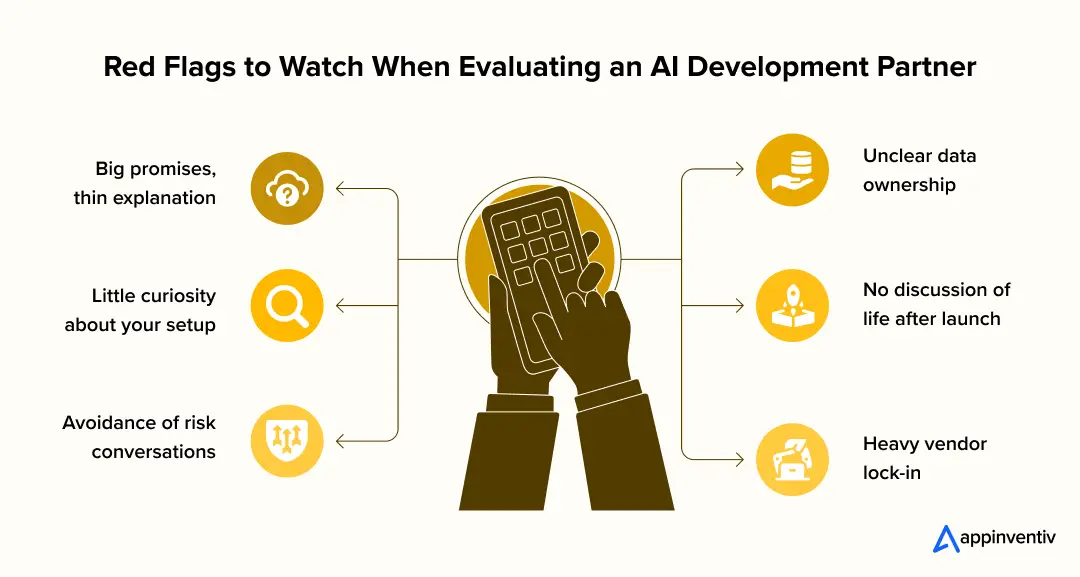

- Red Flags to Watch When Evaluating an AI Development Partner

- Moving Forward with the Right AI Development Partner

- How Appinventiv Supports Enterprises as an AI Consulting Company

- FAQs

Key takeaways:

- Choosing an AI development partner is less about technical demos and more about real-world fit, governance, and operational reliability.

- Internal alignment on outcomes, data ownership, and integration realities is essential before engaging any enterprise AI partner.

- Strong partners demonstrate delivery maturity through governance controls, security discipline, and lifecycle management, not just model accuracy.

- AI risk management, compliance readiness, and monitoring capabilities are critical to ensuring long-term reliability and regulatory alignment.

- A structured evaluation process helps enterprises avoid vendor lock-in and select an AI partner built for sustained operational value.

Choosing an AI partner often starts with excitement. The demo works, the outputs look impressive, and the potential feels immediate. Then the practical questions begin. Systems don’t integrate as easily as expected. Data ownership becomes a discussion. Security and compliance reviews slow progress. What seemed straightforward starts to feel layered.

That reality is pushing organizations to approach enterprise AI vendor selection more deliberately. Rather than relying solely on presentations, many teams now use an AI development partner checklist to understand how a provider delivers, integrates, and supports solutions once they move into production. The question shifts from “Can they build it?” to “Will it work in our environment?”

The stakes are rising quickly. Gartner projects global AI spending will reach $2.5 trillion by 2026, reflecting the depth of enterprises’ AI investments. As adoption accelerates, the cost of choosing the wrong partner grows just as fast.

Understanding how to choose an AI development company means looking beyond portfolios. A strong AI & ML development partner must work comfortably within existing systems, security controls, and regulatory expectations.

This guide helps you choose an AI consulting partner with clarity. It outlines what to confirm before hiring an AI development partner so you can select one built for real operational value, not just promising demos.

Internal Readiness: What Enterprises Must Clarify Before Hiring an AI Development Partner

Many AI projects struggle before a partner is even chosen. The issue usually isn’t the technology. It’s internal confusion. Teams expect different outcomes. Data ownership isn’t clear. Success gets defined differently across departments.

Taking time to align internally makes conversations with an enterprise AI development partner far more productive. Without clarity, vendors fill in gaps, and projects lose direction early.

This early alignment also helps organizations evaluate whether potential providers’ AI development services truly match operational needs rather than offering generic solutions.

Start with outcomes. AI should solve a real operational problem, not exist as an experiment. Agree on what success looks like: faster decisions, fewer manual steps, better risk visibility, or measurable cost savings. Without this alignment, even a strong AI & ML development partner cannot deliver meaningful results.

Data readiness is another reality check. Many organizations assume their data is ready until integration begins, only to discover gaps. Knowing where data lives, who controls access, and what compliance rules apply prevents delays later.

Technical constraints also matter. Legacy systems, security layers, and identity frameworks shape what’s possible. A clear picture also helps evaluate the partner’s AI solution delivery model and whether their approach supports long-term scalability.

Before speaking with vendors, align on:

- Success definition: the operational impact you expect

- Data ownership & access: where data lives and who controls it

- System integrations: platforms, APIs, and identity controls

- Security & compliance boundaries: privacy obligations and risk tolerance

- Decision ownership: who approves and who is accountable

This alignment doesn’t take long, but it makes every conversation that follows more focused and productive.

Enterprise AI Vendor Evaluation Matrix

| Evaluation Area | What Strong AI Partners Demonstrate |
|---|---|
| Business Alignment | Clear understanding of workflows and KPIs |
| Data Governance | Defined data ownership and compliance controls |
| Architecture Fit | Integration with existing enterprise systems |
| Model Reliability | Transparent evaluation and testing processes |
| MLOps | Monitoring, retraining, and lifecycle management |
| Security | AI-specific safeguards and compliance alignment |
| Delivery Maturity | Experienced leadership and structured delivery |
| Commercial Flexibility | Clear pricing, portability, and ownership |
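
One way to make the matrix above comparable across vendors is to rate each area and combine the ratings into a weighted score. The sketch below is illustrative only: the weights, the 1 to 5 rating scale, and the two example vendors are assumptions, not figures from this guide.

```python
# Hypothetical weights for the eight evaluation areas in the matrix above.
# They are illustrative only and must sum to 1.0; adjust to your priorities.
WEIGHTS = {
    "Business Alignment": 0.15,
    "Data Governance": 0.15,
    "Architecture Fit": 0.15,
    "Model Reliability": 0.10,
    "MLOps": 0.10,
    "Security": 0.15,
    "Delivery Maturity": 0.10,
    "Commercial Flexibility": 0.10,
}
assert abs(sum(WEIGHTS.values()) - 1.0) < 1e-9


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings per evaluation area into one weighted score."""
    if set(ratings) != set(WEIGHTS):
        raise ValueError("Rate every evaluation area exactly once")
    if any(not 1 <= r <= 5 for r in ratings.values()):
        raise ValueError("Ratings must be between 1 and 5")
    return round(sum(WEIGHTS[area] * ratings[area] for area in WEIGHTS), 2)


# Two hypothetical vendors: A is strong overall but weak on security,
# B is average overall but strong on security.
vendor_a = {area: 4 for area in WEIGHTS} | {"Security": 2}
vendor_b = {area: 3 for area in WEIGHTS} | {"Security": 5}

for name, ratings in (("Vendor A", vendor_a), ("Vendor B", vendor_b)):
    print(name, weighted_score(ratings))
```

A single number should not replace judgment. A very low rating in one area, such as security, may be disqualifying regardless of the total, so it can help to set a minimum rating per area alongside the weighted score.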

The Enterprise Buyer’s Checklist for Evaluating an Enterprise AI Partner

Once your internal priorities are clear, the real challenge begins: figuring out whether a partner can deliver in your environment. This is where decisions often go off track. A polished demo can be convincing. Slide decks can sound confident. But none of that shows how a team performs when systems need to connect, compliance reviews begin, and real users start relying on the solution.

A dependable enterprise AI development partner should be evaluated on more than technical skill. How they manage delivery, handle risk, integrate with existing systems, and support long-term operations will shape whether AI becomes an everyday source of value or another stalled initiative.

Think of this checklist as a way to separate promise from proof.

1. Business Alignment and Use Case Understanding

Strong partners spend more time understanding workflows than talking about tools. They look for friction points, decision delays, and areas where manual effort slows progress.

Look for:

- A clear grasp of how work actually happens day to day

- Experience in environments similar to yours

- Outcomes defined in business terms, not technical metrics

2. Data Readiness and Governance Practices

AI projects rarely fail because of algorithms. They fail because data is incomplete, inconsistent, or poorly governed.

Look for:

- Practical thinking around data access and quality

- Awareness of privacy and regulatory obligations

- Clear ownership and auditability practices

3. Architecture and Integration Compatibility

Even the best models fall short if they cannot fit into existing systems.

Look for:

- Experience integrating with enterprise platforms and APIs

- Understanding of identity, access, and security controls

- Architecture that fits your infrastructure, not one that forces change

This stage also lets you evaluate the partner’s technical expertise and confirm that the team can integrate securely within complex enterprise environments.

4. Model Evaluation and Technical Discipline

Accuracy alone doesn’t tell the whole story. What matters is how the system performs in real conditions.

Look for:

- Transparent testing and evaluation methods

- Attention to error patterns and edge cases

- Explainability where decisions need to be understood

A disciplined approach here reflects strong technical expertise and signals readiness for high-impact use cases.

5. Operational Reliability and MLOps Maturity

AI systems need ongoing care. Without monitoring and lifecycle management, performance can degrade quickly.

Look for:

- Monitoring and drift detection practices

- Clear retraining and maintenance processes

- Readiness to respond if performance drops

These practices indicate overall AI delivery maturity and determine whether systems remain reliable over time.

6. Security, Compliance, and Risk Controls

Enterprise environments carry obligations that extend beyond standard security.

Look for:

- Alignment with relevant compliance requirements

- Safeguards against data leakage or misuse

- Audit trails and governance mechanisms

7. Delivery Approach and Team Maturity

How a team works often matters as much as what they build.

Look for:

- Experienced leadership guiding delivery

- Clear communication rhythm and checkpoints

- Documentation and knowledge transfer practices

This is often where AI delivery maturity becomes visible in real workflows and decision checkpoints.

8. Commercial Transparency and Long-Term Flexibility

Contracts should support flexibility, not create dependency.

Look for:

- Clear pricing and scope change processes

- Defined ownership of models and assets

- Portability and exit provisions

Clear ownership and portability provisions help keep the engagement sustainable when working with an enterprise AI outsourcing partner.

Looking at partners through this lens makes enterprise AI vendor selection more grounded and less reactive. It also helps ensure that when you move forward with hiring an AI development partner, you are choosing a team equipped to support real operations, not just impressive demonstrations.

AI Risk & Governance Controls Enterprises Must Require

AI introduces a different kind of exposure than conventional software. System behavior can shift as data changes. Outputs are not always predictable. In generative environments especially, responses may surface that no one explicitly defined. When controls are weak, the impact rarely stays technical. It shows up in compliance gaps, flawed decisions, and questions around accountability.

For that reason, AI risk management cannot live only in policy documents. In mature environments, it is built into how data is handled, how models are tested, how systems are monitored, and how responsibility is assigned when something goes wrong. Mature governance frameworks are a strong indicator of AI delivery maturity and long-term operational reliability.

Below are the controls enterprises should reasonably expect.

1. Data Governance & Lineage Controls

When results look wrong, teams need answers quickly. That requires knowing where data originated, who accessed it, and how it moved through the system.

Controls to require:

- Clear lineage from source to output, supported by reviewable logs

- Access rules based on role and necessity, not convenience

- Data classification aligned with regulatory and business sensitivity

- Retention and deletion enforced by system policy

- Masking or anonymization for fields that should never be exposed

Why it matters: Without traceability, investigations slow down and compliance reviews become difficult.
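
To make role-based access and field masking concrete, here is a minimal sketch. The field classifications, role names, and the `mask_record` helper are all hypothetical. It uses a keyed hash (HMAC) so masked values stay consistent for joins, which is pseudonymization rather than full anonymization. Key storage and rotation are out of scope here.

```python
import hashlib
import hmac

# Hypothetical classification of fields and the levels each role may read.
FIELD_CLASS = {
    "order_total": "internal",
    "email": "confidential",
    "national_id": "restricted",
}
ROLE_CLEARANCE = {
    "analyst": {"internal"},
    "support": {"internal", "confidential"},
    "auditor": {"internal", "confidential", "restricted"},
}


def mask_record(record: dict, role: str, key: bytes) -> dict:
    """Return a copy of record with fields the role may not read replaced by tokens."""
    allowed = ROLE_CLEARANCE.get(role, set())  # unknown roles see nothing
    masked = {}
    for field, value in record.items():
        # Unclassified fields default to the most restrictive level.
        level = FIELD_CLASS.get(field, "restricted")
        if level in allowed:
            masked[field] = value
        else:
            digest = hmac.new(key, str(value).encode(), hashlib.sha256).hexdigest()
            masked[field] = f"masked:{digest[:12]}"
    return masked


row = {"order_total": 120, "email": "user@example.com", "national_id": "123-45-678"}
print(mask_record(row, "analyst", key=b"demo-key-only"))
```

Defaulting unknown fields and unknown roles to the most restrictive outcome reflects the "necessity, not convenience" principle in the list above: access has to be granted explicitly.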

2. Model Risk Management & Validation

Accuracy alone does not guarantee reliability. Models must behave predictably under real operating conditions.

Controls to require:

- Validation using out-of-sample and unseen datasets

- Bias checks reflecting real business and regulatory exposure

- Error analysis focused on impact, not just percentages

- Explainability where decisions affect customers, finances, or safety

- Performance thresholds tied to business risk tolerance

Why it matters: Issues often appear in edge cases, and those are usually the ones with the highest consequences.
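
The difference between error percentages and error impact can be shown with a small sketch. The labels, predictions, and per-error costs below are made up for illustration; in practice the costs would come from your own business risk analysis.

```python
def error_impact(y_true, y_pred, fp_cost, fn_cost):
    """Summarize binary-classification errors by count and by business cost."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return {
        "accuracy": 1 - (fp + fn) / len(y_true),
        "false_positives": fp,
        "false_negatives": fn,
        "total_cost": fp * fp_cost + fn * fn_cost,
    }


# Hypothetical fraud-style example: a missed case (false negative) is assumed
# to cost far more than a false alarm (false positive).
y_true = [1, 1, 0, 0]
model_a = [1, 0, 0, 0]  # misses one real case
model_b = [1, 1, 1, 0]  # raises one false alarm

for name, preds in (("model_a", model_a), ("model_b", model_b)):
    print(name, error_impact(y_true, preds, fp_cost=10, fn_cost=500))
```

Both hypothetical models have the same accuracy, yet their total cost differs sharply under these assumed costs, which is why a partner’s validation report should break errors down by type and consequence.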

3. Drift Detection & Ongoing Monitoring

Models rarely fail suddenly. Performance erodes gradually as real-world conditions change.

Controls to require:

- Monitoring for data drift and behavioral shifts in production

- Alerts tied to business risk, not only technical thresholds

- Controlled retraining workflows with review checkpoints

- Version control with rollback capability

Why it matters: By the time drift becomes obvious, decision quality may already be compromised.
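
As one illustration of data drift monitoring, the sketch below computes the Population Stability Index (PSI) between a baseline sample and a production sample of a single numeric feature. PSI is only one of several drift measures, and the 0.1 and 0.25 cut-offs are a commonly quoted rule of thumb, treated here as assumptions to be calibrated per use case.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a baseline sample (expected) and a production sample (actual)."""
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("Baseline sample has no spread; cannot build bins")
    width = (hi - lo) / bins

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range fall into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(psi: float) -> str:
    """Map PSI to an action using assumed rule-of-thumb thresholds."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "investigate"
    return "review for retraining"


baseline = [i / 100 for i in range(100)]          # roughly uniform on [0, 1)
production = [0.5 + i / 200 for i in range(100)]  # shifted toward higher values
print(drift_status(population_stability_index(baseline, production)))
```

In line with the controls above, a status like "review for retraining" would open a reviewed retraining workflow rather than trigger an automatic model swap.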

4. Security Safeguards for AI & Generative Systems

AI systems introduce new attack surfaces, especially when external inputs and retrieval pipelines are involved.

Controls to require:

- Protections against prompt manipulation and misuse

- Output filtering aligned with internal policies

- Secure retrieval pipelines with validation at the source

- Guardrails to prevent sensitive data exposure

- API controls, rate limiting, and anomaly detection

Why it matters: Without safeguards, systems can be influenced in ways that are difficult to detect and explain.
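
Rate limiting, one of the API controls listed above, is often implemented with a token bucket. The class below is a minimal single-process sketch with assumed parameter names. A production deployment would more likely rely on an API gateway or a shared store than on in-memory state.

```python
import time


class TokenBucket:
    """Allow short bursts up to `capacity`, refilling at `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, capacity: int, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.clock = clock             # injectable so the limiter can be tested
        self.last = clock()

    def allow(self) -> bool:
        """Consume one token if available; return False to reject the call."""
        now = self.clock()
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical policy: each API key may send 5 requests per second, burst of 10.
bucket = TokenBucket(rate_per_sec=5, capacity=10)
accepted = sum(bucket.allow() for _ in range(25))
print(f"{accepted} of 25 back-to-back requests accepted")
```

Rejected calls are also a useful signal for the anomaly detection mentioned in the same bullet, since a sudden spike in rejections for one key can indicate misuse.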

5. Governance, Auditability & Accountability

AI systems should be governed with the same discipline as any system that influences operational outcomes.

Controls to require:

- Decision and activity logging for traceability

- Human oversight for high-impact actions

- Policy enforcement through workflows rather than guidelines

- Usage tracking and audit trails

- Clear ownership and escalation paths

Why it matters: Auditability builds internal trust and stands up better under regulatory review.
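
Decision logging is easier to trust when entries are tamper-evident. The sketch below chains each log entry to the previous one with a SHA-256 hash so that later edits can be detected. The entry fields and function names are assumptions, and timestamps and durable storage are left out to keep the example short.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], actor: str, action: str, detail: dict) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    body = {
        "actor": actor,
        "action": action,
        "detail": detail,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, "model:v3", "loan_decision", {"case": "A-17", "result": "refer"})
append_entry(audit_log, "reviewer:jlee", "human_override", {"case": "A-17", "result": "approve"})
print(verify(audit_log))
```

The second entry in the example also shows the human oversight control from the list above: the override is recorded as its own event rather than overwriting the model’s original decision.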

6. Risk Classification & Use-Case Tiering

Not every AI use case requires the same level of oversight. Governance should scale with impact.

A practical tiering approach:

- Tier 1: Informational outputs

- Tier 2: Decision support

- Tier 3: Automated actions with financial, legal, or safety impact

Each tier should define review frequency, monitoring intensity, and required human intervention.
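
The tiering approach can be captured as a small policy table that other systems read. In the sketch below, the three tiers follow the list above, while the review intervals, monitoring descriptions, and classification rules are placeholders that each organization would set for itself.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    INFORMATIONAL = 1      # Tier 1: informational outputs
    DECISION_SUPPORT = 2   # Tier 2: decision support
    AUTOMATED_ACTION = 3   # Tier 3: automated actions with high impact


@dataclass(frozen=True)
class TierPolicy:
    review_every_days: int
    monitoring: str
    human_approval_required: bool


# Placeholder values; each organization defines its own.
POLICIES = {
    RiskTier.INFORMATIONAL: TierPolicy(180, "periodic sampling", False),
    RiskTier.DECISION_SUPPORT: TierPolicy(90, "daily metrics review", False),
    RiskTier.AUTOMATED_ACTION: TierPolicy(30, "real-time alerting", True),
}


def classify_use_case(
    automates_action: bool, high_impact: bool, informs_decisions: bool
) -> RiskTier:
    """Assumed rules: high-impact automation is Tier 3, and anything that
    feeds decisions or automates low-impact steps is Tier 2."""
    if automates_action and high_impact:
        return RiskTier.AUTOMATED_ACTION
    if automates_action or informs_decisions:
        return RiskTier.DECISION_SUPPORT
    return RiskTier.INFORMATIONAL


tier = classify_use_case(automates_action=True, high_impact=True, informs_decisions=False)
print(tier.name, POLICIES[tier])
```

Keeping the policy in one reviewed table, rather than scattered across project documents, makes it easier to show auditors how oversight scales with impact.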

7. Compliance Alignment & Regulatory Readiness

Regulatory expectations continue to evolve. Systems should be designed with that reality in mind.

Controls to require:

- Alignment with applicable privacy and industry regulations

- Documentation that supports audits without rework

- Explainability where decisions are regulated

- Controls for data residency and cross-border processing

Choosing the Right AI Partner with Confidence

Even with a solid checklist, the way you run the evaluation can shape the outcome. When there’s no clear process, teams often lean toward the slickest presentation rather than the partner who actually fits their environment. A bit of structure keeps enterprise AI vendor selection grounded in real operational fit instead of first impressions or presentation quality.

At this point, the aim isn’t to be dazzled. It’s to feel confident in the choice. You’re looking for an AI project delivery partner who can work within your systems, respect constraints, and stay dependable after the solution goes live.

Keeping the evaluation consistent makes it easier to compare options and supports a more realistic AI capability assessment.

A practical way to approach the decision:

- Start with a focused shortlist: Look for teams that understand your use case and have worked in similar environments. Prioritizing teams with proven delivery records helps identify a dependable AI project delivery partner.

- Have working conversations, not sales demos: Talk through integration realities, data handling, and governance expectations.

- Try a small pilot or discovery phase: Real-world testing reveals far more than a polished presentation. Pilot validation is one of the most reliable ways to confirm whether an AI solution delivery model works in real conditions.

- Confirm security and compliance alignment: Make sure their practices align with your policies and with the AI regulations and compliance standards that apply to you.

- Speak with current clients: Ask how the team responds when priorities shift or challenges arise. Their answers help verify whether the team performs consistently as an AI project delivery partner.

- Clarify ownership early: Define responsibilities, escalation paths, and ongoing support before signing. Clear ownership structures are especially important when engaging an AI outsourcing partner for enterprises.

A structured evaluation reduces uncertainty when hiring an AI developer. More importantly, it improves the chances of choosing an enterprise AI services provider that remains reliable long after implementation is complete.

Red Flags to Watch When Evaluating an AI Development Partner

Not every risk shows up in a proposal. Some of the real signals appear in conversation. Ask about data handling, AI integrations, or what happens after launch. Listen carefully to how the team responds. The tone often tells you more than the slides.

During enterprise AI vendor selection, pay attention to what feels unclear. A strong partner will talk about trade-offs and constraints without hesitation. If every answer sounds perfect but light on detail, that’s usually a cue to pause.

A few things worth watching:

- Big promises, thin explanation: High accuracy claims with no real detail on testing conditions or production behavior.

- Unclear data ownership: Vague answers around access controls, privacy safeguards, or compliance responsibility.

- Little curiosity about your setup: No real questions about legacy systems, identity controls, or workflow dependencies.

- No discussion of life after launch: Uncertainty around monitoring, retraining, maintenance, or model drift.

- Avoidance of risk conversations: Limited clarity on governance or who is accountable when things go wrong.

- Heavy vendor lock-in: Architecture choices that make switching providers difficult later.

Catching these signs early doesn’t just lower risk. It helps you choose an enterprise AI partner who is practical, transparent, and ready for real operating conditions. Gaps in transparency often signal weaknesses in technical expertise and delivery discipline.

Moving Forward with the Right AI Development Partner

Making the final call can feel like the finish line. In practice, it’s where the working relationship actually starts. You’ve compared options, tested what feels workable, and chosen a direction. Now the focus shifts to making sure both teams understand how things will run once the work begins. At this stage, confirming expectations helps ensure your selected AI project delivery partner can support long-term operations.

This is a good time to slow down and clear up assumptions. Small misunderstandings around scope or ownership can turn into friction later. Dependable enterprise AI developers won’t rush through this step. They know alignment now saves time and frustration down the road.

Before moving ahead, it helps to confirm a few practical details:

- Agree on what success looks like: Make sure both teams share the same picture of progress and results.

- Clarify responsibilities early: Decide who handles delivery, oversight, and ongoing support.

- Reconfirm security and compliance expectations: Ensure practices align with internal policies and applicable AI compliance and security standards.

- Set clear communication paths: Decide how updates will be shared and how issues should be raised.

- Plan what happens after launch: Outline monitoring, maintenance, and long-term ownership.

Spending a little extra time here can prevent confusion later and helps the partnership begin on steady ground.

How Appinventiv Supports Enterprises as an AI Consulting Company

Most leadership teams already see where AI could help. The harder part is making it work within existing processes, older systems, and compliance boundaries. As an AI consulting company, Appinventiv works with enterprise teams to turn that intent into practical plans that fit how work actually happens. The focus stays on outcomes teams can measure and solutions people can use without disrupting daily operations.

One example is the MyExec AI Business Consultant, which helps leaders interpret business data through a conversational interface. Instead of working through multiple reports, they can surface insights quickly and act with clearer context. In another engagement, JobGet, an AI-driven matching and automation platform, helped users discover relevant job opportunities faster, improving engagement while simplifying the experience.

As an enterprise AI development partner, Appinventiv brings strategic guidance and hands-on delivery to ensure solutions integrate smoothly, remain secure, and continue delivering value after launch. If you’re assessing feasibility, risk, or long-term impact, connect with Appinventiv’s AI consulting experts to explore how AI can support steadier, better-informed operations.

FAQs

Q. How should enterprises evaluate AI development partners?

A. Try to look past the demo. A practical AI development partner checklist helps you understand whether the team truly gets your business priorities, treats data responsibly, and can integrate with the systems you already rely on. This makes enterprise AI vendor selection feel less like a gamble and more like an informed decision.

Q. What should CIOs look for in an AI development company?

A. When deciding how to choose an AI development company, CIOs should focus on reliability and fit. Does the architecture align with your environment? Are compliance needs understood? Can the solution be maintained and scaled? A dependable enterprise AI development partner shows real integration experience and steady operational discipline.

Q. How can organizations reduce risk when outsourcing AI development?

A. Risk usually drops when expectations are clear early. Choose an enterprise AI outsourcing partner that follows solid governance practices, aligns with AI compliance and security standards, and defines ownership from the start. Running a small pilot and reviewing data-handling practices can help confirm AI project governance and risk controls before the full rollout.

Q. What questions should enterprises ask AI vendors before signing?

A. Before hiring an AI development partner, ask how the solution will fit into your current systems, how data privacy will be protected, how performance will be monitored over time, and who owns the models and pipelines. Questions about lifecycle management and portability help protect long-term flexibility.

Q. How does Appinventiv help enterprises deliver AI at scale?

A. As an AI consulting company and AI implementation partner for enterprises, Appinventiv works alongside teams from early strategy through deployment and ongoing support. Their focus on governance, integration, and operational reliability helps ensure AI solutions scale securely and deliver measurable value.

Q. How can enterprises assess an AI partner’s reputation and experience before signing?

A. Look beyond marketing claims and review evidence of real delivery. Examine case studies that reflect enterprise-scale deployments, measurable KPIs, and industry relevance. Ask for client references and examples of continuous monitoring, data governance practices, and independent validation. Signals such as security scans, license compliance checks, and regression testing frameworks also indicate delivery maturity and operational discipline.
